Do readers actually care if your blog is AI-generated?

Introduction

Imagine you just finished reading a 1,500-word breakdown of technical market shifts. You feel smarter. But then you notice a tiny note at the bottom: ‘Generated with AI assistance.’ Does that change how you feel about the advice you just took to heart? For most of us, the answer is a messy, complicated ‘maybe.’ It isn’t that we hate the tech. We just value the sweat and blood behind a solid argument.

This is the exact spot where every modern creator is stuck right now. We’re trying to scale up without losing the personality that makes people actually want to read our stuff. Let’s be real: the demand for fresh content is moving faster than most of us can type. Using a high-performance AI blog writer isn’t about being lazy. It’s about staying in the game. The internet never sleeps, after all.

The friction between speed and soul

If you’re building a brand, you’re probably looking at the AI blog writer ROI and worrying about scaring off your audience. That’s fair. If readers feel like they’re just being farmed for clicks, they’re gone. But people are smarter than we think. They care about accuracy and whether you’re wasting their time.

Using GenWrite to do the grunt work isn’t a trick. It’s about using content automation to get the facts out while you handle the big-picture strategy. Research suggests readers are actually pretty forgiving of AI—if the quality is there. They want value. They only get annoyed when the writing feels hollow or repeats itself for no reason.

Transparency as a trust builder

This is why picking a dedicated AI SEO blog writer is a big deal. Generic tools give you generic results. A specialized AI SEO content generator focuses on keyword-driven blog writing that actually answers a reader’s question. It’s not just filling a page with words.

We’ve hit a point where ‘is this AI?’ isn’t even the right question to ask. The real question is: ‘Does this solve my problem?’ We’re going to look at reader perception of AI and the AI blog ethics that matter for publishers today. We’ll also see how automated on-page SEO writing fits into a real workflow. More importantly, we’ll look at how to keep your SEO optimization for blogs from sounding like a robot wrote it.

AI content transparency is a chance to show you care about quality. By using smart content structure and internal linking, you can build something that feels human, even if a machine helped with the foundation.

Why does trust drop even when the writing is good?

A drop of about 0.15 on a seven-point scale happens the second a reader spots an AI label. It doesn’t matter if the writing is sharp or the data is perfect. This transparency penalty suggests that being honest about using an AI writing tool triggers a trust gap. It’s a strange situation. We’re doing the right thing by being open, yet readers often penalize us for it. I’ve seen this in tests where five thousand people across different fields rated identical articles. When one version was tagged as AI-assisted, people trusted it less than the exact same text credited to a human. This isn’t a failure in grammar. It’s a gut reaction to the feeling that it isn’t real. Readers aren’t necessarily judging the quality; they’re judging the origin.

### The authenticity gap in digital content

Most readers want to feel a connection to the person behind the screen. When they see a disclaimer, that connection breaks. They start looking for patterns they would’ve ignored otherwise. Even when using a sophisticated AI SEO article writer, just mentioning machine involvement shifts the reader’s mindset. They stop asking what they can learn and start asking if the piece is real. This isn’t true for every niche. Technical or data-heavy sectors are often more forgiving. But for most blogs, the hurdle is psychological. Research into how readers perceive AI bylines shows that even when they don’t get the tech, they’re still skeptical. It’s a reflex that quality alone can’t always fix.

### Overcoming the transparency penalty

So, how do we handle this without being deceptive? It comes down to how you integrate content automation into your workflow. At GenWrite, we focus on material that actually helps people rather than just filling space. If the content is helpful enough, the penalty usually fades as the reader gets what they need. Using a high-end SEO content optimization tool makes sure the technical quality is high, but you still need a human touch. I recommend checking your drafts with an AI content detector to see where the machine patterns are too obvious. It’s not about hiding; it’s about refining the voice so it sounds like a person who actually cares about the topic.

### Balancing automation and trust

Successful keyword research is only half the battle. If your audience feels like they’re being fed a generic script, they’ll leave. We’ve found that search intent alignment is the best way to fix this trust issue. When the content answers their specific question perfectly, the source matters a lot less. Transparency is tricky. You want to be ethical, but you also want to keep the authority you’ve worked hard to build. It’s a balance between using the best tools and keeping the human element front and center. If you provide enough utility, that 0.15 penalty won’t matter much for your long-term growth.

The blind taste test paradox

Think about two glasses of wine sitting on a table. One is a fancy vintage, the other is a modern synthetic blend. In blind tests, people often pick the synthetic one because it’s smoother. But the second the labels come out, the vintage suddenly “tastes” better. It’s a mental trick.

We see this exact thing happening with AI. In a massive study of 86,000 people, readers didn’t just struggle to tell the difference; they actually liked the AI passages more. It wasn’t just short news snippets, either. Fantasy authors have seen LLM-penned flash fiction outrank stories from award-winning humans. While complexity changes things, the pattern is there.

This creates a weird tension. If the automated blog content value is high enough to win blind tests, why does the vibe shift when we’re honest about the source? It’s human favoritism. We value the grind of a human creator even if the result is a bit clunky. We’re often judging the effort rather than how useful the information is.

At GenWrite, I see this friction every day. Users want the speed of an AI blog generator, but they’re terrified of the uncanny valley response from their audience. The reality is that readers usually don’t care about the process until they’re told to care. When content solves a problem or keeps them entertained, they stay on the page. I’ve found that the best way to bridge this gap is by humanizing your writing through sharp editing and personal stories. You don’t have to hide the tool, but you shouldn’t let the machine be the only voice. Most people won’t even think to check for AI content detectors if the quality is high and the facts are right.

The stakes are high. If you lean too hard into the machine, you lose the soul that keeps people coming back. But if you ignore these tools entirely, you’ll get outpaced by competitors using SEO optimization to dominate the search results. It’s a balance of keeping that human touch while reaping the benefits of scale.

Search intent vs human writing: what wins?

If the blind taste test proves quality is often indistinguishable, the battle moves from the page to the purpose. Why are you reading? Most digital interactions start with a specific itch: a question that needs an answer or a problem that needs a fix. In these moments, search intent is the undisputed king. A reader looking for “how to reset a router” doesn’t want a poetic memoir about the flickering lights in a dark hallway; they want the five steps to get back online.

This is where the debate over AI blogs vs human blogs often misses the mark. AI is built for utility. It excels at parsing data to provide the most direct path to an answer. When I use GenWrite to handle SEO optimization, the goal is to satisfy the search engine’s requirements and the user’s immediate need for information. If the content answers the “what” and the “how” efficiently, the user experience stays high, regardless of the author’s biological status.

But utility has a ceiling. It solves the immediate problem but rarely builds a relationship. Think about a search for “how to cook carbonara.” An AI-generated list of ingredients and steps is perfectly functional. It gets dinner on the table. However, a reader might return to a specific chef’s site because of the way they describe the sound of the guanciale sizzling or their specific trick for avoiding scrambled eggs. That human connection is the differentiator. It transforms a one-time visitor into a loyal subscriber.

The reality is that “winning” depends entirely on your business goals. For high-volume traffic generation where the goal is to capture broad queries, content automation is almost unbeatable. It allows brands to cover more ground and maintain a consistent presence without the massive overhead of a full writing staff. But for high-stakes thought leadership or opinion pieces, the human element remains the anchor of authority. Results vary based on the niche, but the trend toward efficiency is clear.

So, does search intent beat human writing? In the short term, yes. If you don’t answer the user’s question, they’ll bounce in seconds, no matter how much “soul” you’ve injected into the prose. But if you want them to remember your name after they’ve closed the tab, you need more than just a correct answer. The most effective strategy involves using AI to handle the heavy lifting of informational SEO while reserving human oversight for the nuance and the personal perspectives that machines can’t yet replicate.

The balance between efficiency and empathy doesn’t have to be a conflict. It’s a spectrum. Most readers are happy to consume AI-assisted content if it saves them time and provides accurate data. They only start to care about the “who” when the “what” becomes personal. If your content strategy ignores search intent in favor of pure “humanity,” you’ll likely end up writing for an audience of one. Conversely, if you ignore the human connection, you’re just a commodity that can be replaced by the next faster algorithm.

The ‘uncanny valley’ of unedited AI slop

Meeting search intent is the bare minimum. If a user finds the answer they need but feels like they’re reading a refrigerator manual, they won’t return. This is where most automated content fails. It falls into an uncanny valley where the grammar is perfect, but the logic is too linear and the tone is weirdly sterile.

We call this AI slop. It’s the content equivalent of processed food: filling but lacking nutrition. Think of the ‘Infinite Facts’ pages that post heartwarming stories about 100-year-old twins that don’t exist. These models often hallucinate sentimental details to fill the silence, which is a fast track to losing your reputation. Readers smell the lack of a real pulse.

People are becoming hyper-aware of this. The McDonald’s Netherlands Christmas ad was pulled because the AI tone felt soulless. It tried to simulate holiday cheer but felt like a corporate simulation. When your blog does the same, audience engagement will crater. People don’t want a lecture; they want a conversation.

AI often defaults to a specific, irritating rhythm. It tries to sound profound by ending paragraphs with dramatic summaries that say nothing. It relies on predictable patterns and fake punchlines that act as red flags. If your blog reads like a mediocre high school essay, you’ve already lost. The evidence here is mixed on whether readers always spot it, but the bounce rates suggest they feel the lack of depth.

To fix this, move beyond the default. Using an AI blog generator like GenWrite helps handle the technical side of keyword mapping and structure. But the final layer must be sharp. I’ve seen brands try to automate everything without a second look. It’s a mistake. The ethics of using these tools involve more than just disclosure. It involves a commitment to not boring your audience to death with generic summaries.

This is why the ‘Wikipedia effect’ is a death sentence for blogs. It provides information without a point of view. It lacks the specific, lived-in details that make an article feel authoritative. If you want to scale, you need a tool that handles AI blog ethics and SEO while you focus on adding reality. Otherwise, you’re just adding to the noise of the internet.

Don’t let your content become a flat, gray wall of text. Use tools to build the foundation, then make it punchy. Cut the fluff. Stop trying to sound like a visionary and just say what’s true. That’s how you stay out of the uncanny valley and keep readers coming back for more than just facts.

Does byline transparency actually help or hurt?

If you’ve managed to sidestep the robotic “slop” that often plagues modern blogs, you’re still left with a nagging question: do you tell your audience how the sausage is made? It’s a messy trade-off. You want to be honest because honesty usually builds trust, but the second you slap an AI disclosure on a post, you might be inviting a bias you didn’t earn. AI content transparency isn’t just a moral choice; it’s a strategic one that determines whether your readers stay or bounce.

The reality is that byline transparency often acts as a Rorschach test. Some readers see a disclosure and appreciate the ethics. Others see it and immediately tune out, assuming the content lacks depth or accuracy. It’s not just about the tech, either. Consider the backlash some newsrooms faced when they swapped human names for marketing-heavy bylines like an “Express Desk.” Readers didn’t see that as efficiency; they saw it as a mask for cost-cutting.

The generic byline trap

When you use a vague byline, you’re often signaling that no one is willing to stand behind the work. This is where things get tricky for businesses using an AI blog generator to scale their output. If you hide behind a generic tag, you aren’t fooling anyone. In fact, readers are becoming hyper-aware of these patterns. They often interpret a lack of a human name as a sign that a machine did all the work, even if an editor spent hours refining the draft. This directly impacts byline credibility in the eyes of a savvy audience.

But does this skepticism actually hurt your bottom line? Not always. If your goal is purely informational, like explaining how to reset a router, the reader’s need for an answer usually outweighs their curiosity about the author. They want the solution, not a pen pal. But for thought leadership, that lack of a human face creates a massive barrier to entry.

Balancing disclosure with authority

You have to decide where the value lies. If you’re using GenWrite to handle the heavy lifting of keyword research and initial drafting, you’re still the one directing the strategy. Is it more honest to credit the tool or the person who prompted it? There’s no industry consensus yet, and that’s the problem. Some believe that disclosing involvement is the only way to maintain long-term trust in AI writing. They argue that if you’re caught “faking” human authorship, the reputational damage is permanent.

Yet, others point out that we don’t disclose the use of spellcheckers or grammar tools. Why is a more advanced assistant different? The middle ground is often the most effective. Instead of a binary choice, many successful sites are moving toward “AI-assisted” disclosures. This acknowledges the technology while maintaining the human’s role as the authority. It tells the reader that a human is still steering the ship, which mitigates that “uncanny valley” fear.

Why byline credibility matters for SEO

Search engines don’t necessarily penalize AI content, but they do prioritize expertise and trust. A generic byline makes it much harder to prove those qualities. If your site is just a sea of anonymous posts, you aren’t building a brand; you’re just filling space. So, does transparency help or hurt? It helps your long-term integrity, but it might hurt your click-through rates in the short term if your audience is tech-skeptical. You’ve got to know your crowd. Are they looking for a quick fix or a deep connection? Your byline strategy should follow that answer.

When is it morally risky to use AI?

Transparency only solves the problem of honesty; it doesn’t solve the problem of appropriateness. Even with a clear disclosure, using synthetic text to address a reader’s deepest fears or financial vulnerabilities feels wrong to most people. This reaction isn’t just a preference for human prose. It’s a visceral response often described as moral disgust. When we’re dealing with life-altering decisions, we don’t just want accuracy. We want a pulse. We want to know that if the advice fails, there’s a person who feels the weight of that failure.

The medical empathy gap

In the healthcare space, the stakes are literal. A patient researching a terminal diagnosis or a parent looking for mental health support isn’t just looking for a list of symptoms. They’re looking for a connection. AI can aggregate data points with terrifying speed, but it lacks the human empathy that readers instinctively demand in these moments. If a reader finds out a comforting article about grief was generated by an LLM, the words don’t just lose value; they become offensive. It feels like a simulation of care, which is often worse than no care at all.

Financial risk and the social contract

Finance follows a similar logic. We’ve seen how AI hallucinations can lead to disastrous financial advice or biased underwriting. But beyond the technical errors, there’s a breach of the social contract. When a bank or a fintech blog uses AI to give investment advice, they’re offloading the moral burden of that advice onto a machine. This creates a massive gap in accountability. Most readers believe that if you’re going to tell someone how to handle their life savings, you better have the skin in the game to back it up.

When automation meets ethics

This is where the distinction between efficiency and ethics becomes sharp. Tools like GenWrite are excellent for SEO optimization and scaling a brand’s digital footprint in competitive markets. They handle the mechanics of content creation so humans can focus on strategy. But using a blogging agent for a generic marketing post is fundamentally different from using it to write a suicide prevention guide. The AI blog ethics of the future will be defined by where we draw this line.

The cost of missing accountability

And that’s the reality: AI doesn’t have a reputation to lose. It doesn’t have a conscience. When we use it in high-stakes domains, we’re essentially asking the reader to trust a void. This doesn’t always lead to a drop in traffic, but it almost always leads to a drop in brand authority. If you aren’t willing to put a human name behind a high-stakes claim, you shouldn’t be making the claim at all. The impact of AI writing can be a net positive for the web, but only if we respect the boundaries of human experience.

How readers identify AI without being told

Imagine you’re reading a guide on fixing a plumbing issue. You expect the author to mention that one specific nut that always gets stuck or the time they flooded their basement because they forgot the main shut-off valve. Instead, the text flows with a sterile, frictionless precision. It claims that fixing a leak is less about wrenches and more about the ‘sanctity of the home.’ That’s the first red flag. It’s a rhetorical trick I see constantly in raw LLM outputs: a pivot from a concrete task to an abstract, slightly hollow philosophy that no human writer would actually voice while holding a wrench. Honestly, most readers don’t need a sermon; they just need to know how to stop the water. This doesn’t always hold, as some high-end models are getting better at mimicry, but the lack of specific context usually gives them away.

Savvy users have developed a sixth sense for these AI content markers. It isn’t just about whether the grammar is correct; in fact, the grammar is often too perfect. Human writing is messy. We use sentence fragments for emphasis. We end sentences with prepositions. We have voices that include specific quirks or recurring jokes. When a blog post feels like it was written by a committee of librarians who have never experienced a bad day, reader perception of AI starts to tingle. The absence of error becomes its own kind of mistake.

The contrastive framing trap

One of the most predictable patterns is the use of contrastive framing. You’ll see sentences that attempt to elevate a mundane topic by claiming it is ‘not merely’ a technical task but a ‘journey’ or a ‘philosophy.’ While true in a metaphorical sense, this specific structure is a default setting for many models trying to sound insightful. It’s a shortcut to gravitas that usually signals a lack of original thought. If every third paragraph uses this ‘higher purpose’ pivot, the reader starts to tune out. They are looking for the answer to their search query, not a forced epiphany.
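As a rough illustration of that tell, an editor could scan a draft for the "not merely X but Y" construction before review. The sketch below is a crude heuristic under stated assumptions: the regular expression, the function name `contrastive_share`, and the idea of measuring the share of paragraphs containing the pattern are all illustrative choices, not an established detection method, and a match says nothing certain about who wrote the text.

```python
import re

# Hypothetical heuristic: matches "not merely/just/only/simply ... but"
# (including contracted forms like "isn't just ... but") within one sentence.
CONTRASTIVE = re.compile(
    r"(?:\bnot|n['’]t)\s+(?:merely|just|only|simply)\b[^.!?]*?\bbut\b",
    re.IGNORECASE,
)


def contrastive_share(text: str) -> float:
    """Return the fraction of paragraphs that contain the contrastive pattern."""
    paragraphs = [p for p in text.split("\n\n") if p.strip()]
    if not paragraphs:
        return 0.0
    hits = sum(1 for p in paragraphs if CONTRASTIVE.search(p))
    return hits / len(paragraphs)


draft = (
    "SEO is not merely a technical task but a journey.\n\n"
    "Open the settings panel and choose the Network tab.\n\n"
    "Click Save and restart the router."
)
share = contrastive_share(draft)  # one of three paragraphs matches
```

A share near one in three would line up with the "every third paragraph" warning above; where to set the threshold is a judgment call for the editor.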

The absence of scar tissue

What’s usually missing from automated text is what I call ‘scar tissue’: the specific, often painful lessons that come from actually doing the work. An AI blog generator can synthesize millions of data points to tell you that search engine optimization is vital, but it won’t tell you about the time a broken tracking pixel cost a client five figures in a single weekend. That specific, gritty detail is what builds blog audience engagement.

When we use tools like GenWrite, the goal is to handle the heavy lifting of SEO optimization and keyword research, but the smartest users know that the final layer of humanity comes from injecting those specific stories. If a post lacks a single mention of a specific tool’s bug, a specific person’s name, or a specific date something went wrong, it feels like it exists in a vacuum. It’s the difference between a textbook and a conversation.

The inspirational pivot

Watch for the moment the writing shifts from technical details to a broad, sweeping conclusion that sounds like a motivational poster. This shift often happens at the end of sections. Instead of ending with a practical next step, the text might drift into talking about the ‘power of connection’ or ‘the future of the industry.’ It’s a way for the model to exit a topic it doesn’t truly understand. Most readers don’t need to be inspired by a blog post about spreadsheet macros; they just need to know how to fix the error in cell B12. When the tone shifts from ‘helper’ to ‘philosopher’ without warning, the automation is exposed.

Building a better ‘human-in-the-loop’ workflow

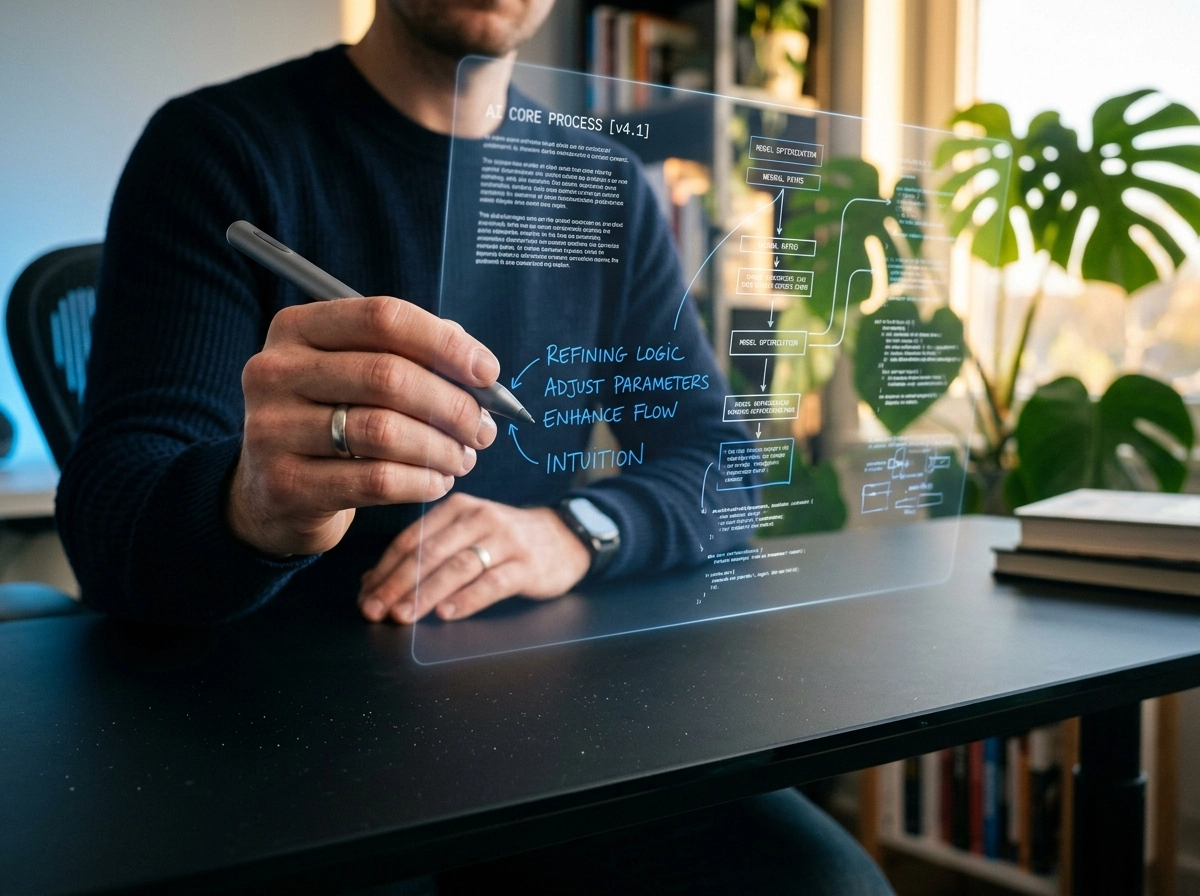

Solving the ‘tells’ of AI isn’t about making the machine smarter; it’s about making the process more human. If the reader detects a lack of personality or a suspiciously perfect rhythm, the solution is a deliberate human-in-the-loop (HITL) architecture. This isn’t a safety net for errors. It’s a strategic framework for injecting human expertise where it creates the most weight. I’ve found that the most effective teams treat AI as a high-speed engine and the human as the navigator.

When you use an AI blog generator to handle the heavy lifting of keyword research and initial drafting, you’re not abdicating responsibility. You’re freeing up mental bandwidth to address the deeper AI content marketing questions that a machine simply can’t answer. Who is the actual audience? What specific pain point are we solving today? Automation handles the ‘what’ and ‘how,’ but the human must always own the ‘why.’

Designing for strategic friction

The mistake most firms make is trying to remove all friction from their publishing process. They want a one-click solution that posts directly to WordPress without a second thought. But friction is where the value lives. A better workflow uses ‘strategic pauses.’ In environments like n8n or Cloudflare, this is often called a ‘wait-for-approval’ pattern. The AI agent gathers the data, structures the argument, and even suggests internal links, but it stops before the publish button.

This pause allows a human editor to look at the draft and ask: ‘Is this actually helpful, or is it just noise?’ This doesn’t always hold for every single social post, but for long-form content, it’s non-negotiable. The goal of human-AI collaboration is to ensure that the final output doesn’t just meet SEO requirements but actually provides original insight. If you aren’t adding a unique perspective or a proprietary data point during this review, you’re likely producing ‘slop’ that readers will eventually ignore.
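The strategic pause can be sketched as a small state machine in which publishing is impossible until a human records a decision. This is a minimal, tool-agnostic sketch: the `Draft`, `Status`, `review`, and `publish` names are hypothetical and do not correspond to any n8n, Cloudflare, WordPress, or GenWrite API.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    DRAFTED = "drafted"      # produced by the AI agent, awaiting review
    APPROVED = "approved"    # a human editor signed off
    REJECTED = "rejected"    # a human editor sent it back
    PUBLISHED = "published"


@dataclass
class Draft:
    title: str
    body: str
    status: Status = Status.DRAFTED
    editor_notes: list[str] = field(default_factory=list)


def review(draft: Draft, approved: bool, note: str = "") -> Draft:
    """Record the human editor's decision and any note they left."""
    if note:
        draft.editor_notes.append(note)
    draft.status = Status.APPROVED if approved else Status.REJECTED
    return draft


def publish(draft: Draft) -> Draft:
    """Refuse to publish anything a human has not explicitly approved."""
    if draft.status is not Status.APPROVED:
        raise PermissionError(f"'{draft.title}' has not been approved by an editor")
    draft.status = Status.PUBLISHED
    return draft


draft = Draft(title="Router reset guide", body="Step 1 ...")
review(draft, approved=True, note="Added our own failed-firmware story.")
publish(draft)
```

The point of the design is that the gate lives in `publish` itself, so skipping the review step fails loudly instead of silently shipping an unreviewed draft.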

The 80/20 rule of production

I recommend a split where AI handles roughly 80% of the production volume. This includes the initial SEO optimization, competitor analysis, and generating the first 1,500 words of prose. The remaining 20% is the human’s job. This 20% isn’t just proofreading for typos; it’s about adding the ‘meat’ that AI lacks. This means inserting real-world friction, like a story about a project that failed or a specific tool configuration that didn’t work as expected.

GenWrite is designed to facilitate this by automating the tedious parts of the cycle, such as image addition and link building, so you can focus on the narrative. The reality is that automated blog content value is determined by the quality of the prompts and the depth of the final edit. If you treat the AI output as a finished product, you’re leaving trust on the table. But if you treat it as a high-quality substrate to build upon, you can scale your traffic without losing your soul.

Validating high-stakes claims

Finally, the human-in-the-loop must act as the ultimate arbiter of truth. AI is excellent at pattern recognition but can be confidently wrong about specific technical details or recent events. In high-stakes niches like finance or healthcare, the human’s role shifts from editor to fact-checker. You should verify every statistic and every technical claim.

So, does this slow you down? A little. But the trade-off is a brand that people actually trust. You can still publish ten times more content than a manual writer could, but each piece will carry the weight of human authority. It’s about finding that sweet spot where efficiency meets empathy. Readers don’t hate AI; they hate being lied to by a machine that doesn’t understand them.

The deception penalty is harder to fix than bad writing

A $193,000 fine isn’t just a line item on a balance sheet; it’s a permanent stain on a brand’s digital record. That was the price one company paid for claiming its automated services were equivalent to a human lawyer’s expertise. When you transition from a human-in-the-loop workflow to total automation without disclosure, you aren’t just saving time. You’re gambling with a deception penalty that often costs more to rectify than the lifetime salary of a full-time writing staff.

Bad writing is a technical problem you can solve with a better editor or a more refined prompt. But deception is a systemic failure of brand integrity. If readers feel they’ve been tricked into consuming machine output under the guise of human thought, the resulting fallout is rarely about the quality of the prose. It’s about the breach of the implicit contract between creator and consumer.

And the stakes are rising beyond just losing a few newsletter subscribers. Regulatory bodies are increasingly treating AI-generated deception as a form of consumer fraud. For instance, the recent crackdown on platforms generating fake consumer reviews highlights a sharp shift in how automation is viewed by the law. If your content strategy relies on pretending an algorithm is a person, you’re building on sand.

The hidden costs of broken trust

Trust in AI writing is fragile because it’s built on the expectation of utility. Readers generally forgive a dry technical manual if it solves their problem, but they rarely forgive being lied to about who (or what) wrote it. This is why transparency is actually a survival mechanism.

When you use an AI blog generator like GenWrite, the goal isn’t to hide the technology but to use it to deliver superior value. The efficiency of content automation allows you to focus on the strategy and data that actually move the needle, rather than spending hours on the basic mechanics of sentence structure.

But there’s a limit to what automation can cover up. This doesn’t hold true for every niche (some low-stakes entertainment blogs might get away with it), but for brands in finance, health, or professional services, the deception penalty is nearly impossible to outrun. Once a search engine or a savvy reader flags your content as disingenuous, your organic reach often plummets regardless of how many keywords you’ve stuffed into the headers.

Why reputation is a non-renewable resource

Fixing a bad blog post takes an hour of editing. Fixing a reputation for being a bot farm takes years of expensive PR and high-touch human content. The reality is that most brands can’t afford the recovery required after a transparency scandal.

So, the smart move isn’t to avoid AI, but to avoid the lie. Use tools to scale, but keep the human touch visible and the disclosure honest. It’s much easier to explain why you used AI to help research a topic than it is to explain why you pretended a machine was a subject matter expert.

Will 2026 readers accept AI-led strategy?

If the deception penalty is the current roadblock, then 2026 is where we finally stop staring at the dashboard and start looking at the road. By then, the “is this AI?” debate will likely feel as dated as asking if a photographer used a digital camera or film. We’re moving toward a reality where the strategy itself is AI-led, and honestly, readers will probably be fine with it, as long as the value is undeniable.

The shift won’t happen because people suddenly love bots. It’ll happen because the sheer volume of data required to stay relevant will exceed human capacity. When you’re trying to figure out the future of blogging, you have to look at how brands are already pivoting. They aren’t just using AI to write sentences; they’re using it to map out entire content ecosystems.

The rise of the co-creative agent

But here’s where it gets interesting. We’re seeing the rise of multimodal systems that don’t just stop at a text block. One strategic input can trigger a coordinated campaign of videos, social posts, and deep-dive articles. For a small business, using an AI blog generator isn’t about being lazy. It’s about surviving. It allows a two-person team to compete with a massive agency by automating the tactical production while they focus on the brand’s unique narrative.

So, does the value of automated blog content hold up under scrutiny? Not always. If you’re just churning out generic advice, you’re still going to fail. The readers of 2026 will be even more sensitive to “slop” than they are now. They’ll accept AI-led strategy only when it results in more precise, helpful, and tailored information. The machine handles the SEO optimization and the competitor analysis, but the human provides the soul and the specific edge cases that a model might miss.

Solving the AI content marketing questions

And that brings us to the real AI content marketing questions we should be asking. It’s not about whether we use these tools, but how we integrate them. Tools like GenWrite are already showing that you can maintain high standards while scaling up. The brands that win won’t be the ones hiding their AI usage. They’ll be the ones showing how AI helped them find the exact answers their readers were looking for.

The reality is that readers want solutions. If an AI-led strategy delivers a better, faster, and more accurate answer than a human-led one, the “human touch” argument starts to lose its weight in a professional context. We’re entering an era of co-creation where the lines are permanently blurred. It might feel a bit uncomfortable now, but in a couple of years, it’ll just be how things are done.

Building a transparency framework

The shift toward AI-led strategy isn’t a distant hypothetical; it’s happening in real time. If you wait for the market to decide the rules of engagement, you’ve already lost the lead. The ‘deception penalty’ is arguably the greatest threat to your domain authority and brand equity. It doesn’t matter how perfect your syntax is if the reader feels tricked. You need a framework that prioritizes AI content transparency without sacrificing the speed that automation provides.

Implementing a tiered disclosure policy

Standardizing how you label content prevents confusion. Not every post needs a flashing neon sign, but honesty builds a buffer against future algorithm shifts or reader backlash. A simple, three-tiered approach works best for most digital properties. It allows you to maintain a high volume of output while keeping the trust you’ve worked hard to build.

| Content Type | Disclosure Level | Description |
|---|---|---|
| Human-Only | None | Zero AI involvement in drafting or ideation. |
| AI-Assisted | Footnote | AI handled research or outlines; human wrote the final copy. |
| AI-Generated | Header | AI drafted the core text; human performed editorial review. |

By being upfront, you transform a potential liability into a mark of sophistication. Readers respect a brand that says, “We used an AI blog generator to pull this data quickly so we could spend more time verifying the results for you.”
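
If your publishing pipeline is scripted, the three tiers in the table above could be encoded so that every post gets its label the same way. This is only a minimal sketch in Python: the `ContentType` enum, the `apply_disclosure` function, and the label wording are all hypothetical names chosen for illustration, not part of GenWrite or any other tool.

```python
from enum import Enum


class ContentType(Enum):
    """The three content tiers from the disclosure table."""
    HUMAN_ONLY = "Human-Only"
    AI_ASSISTED = "AI-Assisted"
    AI_GENERATED = "AI-Generated"


# Where the disclosure goes for each tier, taken from the table.
DISCLOSURE_LEVEL = {
    ContentType.HUMAN_ONLY: None,
    ContentType.AI_ASSISTED: "footnote",
    ContentType.AI_GENERATED: "header",
}

# Hypothetical label wording; adjust it to your own brand voice.
LABELS = {
    ContentType.AI_ASSISTED: (
        "AI assisted with research or outlining; "
        "a human wrote the final copy."
    ),
    ContentType.AI_GENERATED: (
        "AI drafted the core text; "
        "a human performed editorial review."
    ),
}


def apply_disclosure(body: str, content_type: ContentType) -> str:
    """Return the post body with the tier's disclosure label attached."""
    level = DISCLOSURE_LEVEL[content_type]
    if level is None:
        # Human-only posts carry no label.
        return body
    label = LABELS[content_type]
    if level == "header":
        # Header disclosures sit above the post.
        return f"{label}\n\n{body}"
    # Footnote disclosures sit below the post.
    return f"{body}\n\n{label}"
```

Calling `apply_disclosure(draft, ContentType.AI_GENERATED)` would place the label above the draft, while `ContentType.AI_ASSISTED` would place it below. Keeping the mapping in one place means a change in policy is a one-line edit rather than a hunt through old posts.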

The editorial safety net

Speed is useless if it produces a generic void. Every piece of content, regardless of its origin, must pass a human-centric audit. This isn’t about fixing grammar; it’s about injecting the friction and nuance that LLMs naturally smooth over. If the text reads like a dry encyclopedia entry, your audience will bounce regardless of the byline.

Essential checklist items

- Does this include a specific, non-generic anecdote or case study?

- Are the transitions logical or just repetitive?

- Did we remove any hallucinated claims or overly flowery adjectives?

- Is the perspective bold, or is it trying to please everyone?

Using tools like GenWrite allows you to automate the technical side of content creation, such as SEO optimization and keyword research, which frees up your mental energy for this high-level review. This balance is what sustains blog audience engagement over the long haul. You aren’t just dumping text onto a page; you’re using a sophisticated system to handle the heavy lifting while you provide the strategic direction.

Maintaining the edge

The reality is that AI blog ethics will continue to evolve as tools become more integrated into our workflows. But the core principle remains: your audience’s time is valuable. If you use AI to create value, they’ll stay. If you use it to fill space, they’ll leave. The goal isn’t just to publish; it’s to remain a trusted source in an increasingly crowded digital space. So, ask yourself: is your current workflow building a bridge to your readers, or is it digging a moat they’ll never cross?

If you’re tired of churning out generic content that readers ignore, GenWrite helps you automate the research and structure while keeping your unique human voice front and center.

Frequently Asked Questions About AI Content

Why do readers feel cheated when they find out a post is AI-written?

It’s about the unspoken contract between you and your audience. When they spend time reading your work, they expect a human perspective; finding out it’s just a machine output feels like you’ve cut corners, which breaks that bond of trust.

Can I hide the fact that I use AI to write my blogs?

You could, but it’s risky. Most readers have developed a sixth sense for ‘AI slop,’ and if they catch you in a lie, the deception penalty is much harder to recover from than just admitting you use tools to help with the heavy lifting.

How can I make AI content sound less like a robot?

The secret is adding your own ‘messy’ human quirks. Inject personal anecdotes, specific brand examples, and strong opinions that an AI wouldn’t naturally come up with—that’s what keeps readers engaged.

Does using AI for blog posts hurt my SEO?

Not necessarily, as long as you’re providing real value. Google cares about helpful content, but if your AI-generated posts are just repetitive, low-effort fluff, you’ll eventually see your traffic dip because readers aren’t sticking around.

Is it ever okay to use AI for sensitive topics like health or finance?

Honestly, it’s best to avoid it there. Readers expect empathy and accountability in high-stakes areas, and when they sense a machine is giving them advice on their personal life or money, it often triggers a feeling of moral disgust.