Do I really need a dedicated AI SEO blog writer or is a general tool enough?

Introduction

Picture your organic traffic chart taking a sharp, 28% dive just when you thought you’d finally cracked the code. It’s a nightmare, but it’s the reality for B2B SaaS teams who leaned too hard on generic AI to flood the zone. They treated a basic chatbot like a seasoned AI SEO blog writer. Then reality hit. Search engines are getting smarter at spotting low-effort fluff. In this new environment, pumping out more content isn’t the win it used to be.

We aren’t moving away from AI. We’re moving toward integrated content engines. You can’t just toss a vague prompt at a bot and pray. Getting search intent alignment right takes nuance. General models sound like writers, sure, but they don’t do the heavy lifting for competitor analysis or SEO optimization.

If you’re still using a basic chatbot for content creation, you’re probably spending more time editing than you would have spent writing. It’s frustrating. Specialized AI writers change that. They aren’t just text generators; they act as an AI SEO writing assistant that actually gets how ranking works. We’re past the era of generic drafts. It’s all about fine-tuning AI content for your specific niche.

At GenWrite, we see successful teams treat AI as a drafting partner. It’s part of a bigger content automation workflow. They let the tech handle the repetitive stuff: keyword research, link building, and image addition. That leaves the humans to focus on strategy.

But do you really need a dedicated blog analysis tool, or is it just another monthly bill?

The stakes are high. If you pick the wrong tech, you aren’t just wasting money on WordPress auto posting that fails to rank. You’re teaching search engines to ignore your site. The line between general chatter and specialized SEO is the difference between growing and fading away. Generic isn’t enough anymore.

The core differences between general assistants and dedicated writers

General language models are built to predict the next likely word in a sentence. They’re essentially sophisticated calculators for prose, prioritizing fluency over strategic relevance. If you ask a general assistant to write a blog post, it creates a surface-level response based on historical training data that might be years old. This creates a disconnect between what sounds good and what actually ranks on a search results page.

Probability versus precision in SEO

General tools rely on probability, not performance. They don’t understand that a specific keyword needs to appear in an H3 or that a competitor just updated their guide with a new technical insight. They simply guess. In contrast, specialized SEO automation software acts as a research engine first and a writer second. These tools scan the live search environment to see what’s working right now, not what worked when the model was last trained.

But the real danger is the “ghost ranking” phenomenon. This happens when your content looks perfect to a human but remains invisible to search engines because it lacks the necessary semantic architecture. While a general AI might produce a beautiful 2,000-word essay, it often misses the Natural Language Processing (NLP) terms that Google expects for that specific topic. A dedicated AI-driven content platform bridges this gap by mapping out competitor subtopics before a single sentence is written.

Static data versus live SERP analysis

Most general AI tools operate in a vacuum. They don’t know that your top three competitors all include a specific comparison table or a unique data point. Dedicated writers like GenWrite or Surfer SEO pull this data in real-time. They look at word counts, image density, and link structures of the current winners. This isn’t just about writing; it’s about reverse-engineering success. If you’re looking for the best AI tools for writing SEO rich blog content, you’ll find that the top performers always prioritize this competitive context.

Drafting versus scoring

General tools stop once the text is generated. You get a block of content and you’re on your own. Specialized platforms provide a real-time feedback loop. They score your content against hundreds of ranking factors while you work. This might include checking for keyword stuffing or ensuring your meta tag generator output aligns with the body text.
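
One of the checks mentioned above, keyword stuffing, is simple enough to sketch. The Python snippet below is a minimal illustration, not how any particular platform scores content: the function names and the 3% cutoff are assumptions made for the example.

```python
import re

# Hypothetical cutoff for the example; scoring tools do not share one agreed number.
STUFFING_THRESHOLD = 0.03


def keyword_density(text: str, keyword: str) -> float:
    """Return the share of words in `text` taken up by occurrences of `keyword`."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    kw_words = re.findall(r"[a-z0-9']+", keyword.lower())
    if not words or not kw_words:
        return 0.0
    n = len(kw_words)
    # Count every position where the keyword phrase appears word for word.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == kw_words)
    return hits * n / len(words)


def is_stuffed(text: str, keyword: str, threshold: float = STUFFING_THRESHOLD) -> bool:
    """Flag text whose keyword density exceeds the (assumed) threshold."""
    return keyword_density(text, keyword) > threshold


sample = "SEO tools help. SEO tools rank. SEO tools win."
print(round(keyword_density(sample, "seo tools"), 2))
print(is_stuffed(sample, "seo tools"))
```

A real scoring loop would combine many signals like this one; density alone says nothing about intent or depth.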

And let’s be honest about the quality check. General AI often leaves digital fingerprints that search engines are becoming better at identifying. Using a specialized AI content detector is often necessary because general tools tend to follow predictable linguistic patterns. Dedicated platforms are designed to vary these patterns, making the content feel more human and authoritative. So, while a general assistant is a great starting point for a brainstorm, it lacks the technical backbone required to sustain high-traffic rankings over the long term.

Why Project Aura changed the math for content production

Businesses using generic AI-only workflows saw their rankings drop by an average of 23% after the Project Aura update. This wasn’t a minor tweak. It was the end of low-effort scaling. If you’re still using basic prompts to churn out thousands of pages, your visibility is likely vanishing. Standard automated blog software often fails because it lacks the competitive depth required for modern search. It’s a tough lesson: search engines are now great at spotting content that lacks real depth.

When AI Overviews take over, organic click-through rates can tank to 0.61%. That’s a grim reality for brands used to simple blue links. Your content has to be the answer these engines cite. It’s about information density now. If you don’t provide the specific data they want, you’re invisible.

The math changed because search engines now penalize keyword cannibalization aggressively. Creating multiple thin pages for similar queries is a liability. Instead, there’s a push toward enterprise content solutions where every post is a specific tool for growth. I’ve seen sites get buried because they thought more was better. In reality, fewer, more thorough articles would’ve saved their traffic. In high-competition sectors, the penalty for redundancy is faster than it used to be.
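
To make the cannibalization risk concrete, here is a rough Python sketch that flags pages whose target queries overlap heavily. It uses plain Jaccard similarity on query words, which is a stand-in chosen for the example; the URLs, queries, and the 0.6 threshold are all hypothetical.

```python
from itertools import combinations


def jaccard(a: set, b: set) -> float:
    """Overlap between two word sets: shared words divided by all words."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def find_cannibalization(pages: dict, threshold: float = 0.6) -> list:
    """`pages` maps URL -> target query. Return URL pairs with overlap >= threshold."""
    tokens = {url: set(query.lower().split()) for url, query in pages.items()}
    flagged = []
    for url_a, url_b in combinations(sorted(tokens), 2):
        score = jaccard(tokens[url_a], tokens[url_b])
        if score >= threshold:
            flagged.append((url_a, url_b, round(score, 2)))
    return flagged


# Hypothetical site map for illustration only.
pages = {
    "/a": "best ai seo writer",
    "/b": "best ai seo writer tools",
    "/c": "wordpress auto posting guide",
}
print(find_cannibalization(pages))  # [('/a', '/b', 0.8)]
```

Pairs that show up here are candidates for merging into one thorough article rather than competing with each other.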

Specialized platforms like GenWrite do the hard work by looking at what competitors are doing before writing a single word. If you look at the GenWrite about page, you’ll see we focus on the whole process, not just text generation. It’s about finding the gaps that top-tier AI tools for SEO blog writing identify. We make sure your site doesn’t just repeat what’s already out there. The battle is won in the research phase. If the AI doesn’t know what’s already ranking, it can’t build something better.

Ranking in AI answer engines is now as important as traditional search rankings. Without unique data, LLMs won’t cite you. The gap between a general assistant and a dedicated writer is the difference between growth and disappearing. Speed isn’t enough. You have to be right. General tools are fine for emails, but they lack the technical specifics needed for a post-Aura world.

Questions Organized by Category

The 2026 update fallout made one thing clear: search engines want more than just words. They’re hunting for intent and structural depth. You can’t just toss a lazy prompt at a chatbot and hope to survive the next algorithm shakeup. It’s about precision. I’m seeing a huge shift toward specialized tech stacks where every tool has one specific job.

Worried about content costs? Don’t slash quality. Instead, get efficient. Stop treating AI like an all-in-one tool that does everything “okay.” Break your workflow into three layers: research, execution, and oversight. This simple split is what saved the sites that grew while others lost 40% of their traffic overnight.

Strategic research and intent

How do you know what to write before opening a doc? You don’t, unless you’ve done the work. This is about keyword clustering and spotting gaps in what your competitors are doing. Using niche SEO tools gives you a solid base. Guessing is just too expensive in today’s search climate.

Execution and technical deployment

This is where you actually write. It’s also where most people mess up by using tools that ignore internal links or image optimization. Dedicated tools like GenWrite handle the boring stuff automatically. Is a general assistant enough, or do you need a dedicated agent? We’ll get into that.

Oversight and the human element

Even with great automation, you still need editorial control. You have to balance AI speed with human nuance. If you’re scared of sounding like a robot, an AI humanizer helps keep that authentic voice without slowing you down.

I’ve organized the FAQs below around these three pillars to keep things simple. Whether you’re checking if your current tool is a bottleneck or you’re building a new stack from scratch, these categories will help you find the answers you need.

Q: Can a general tool like ChatGPT handle long-tail keyword targeting?

The assumption that a general-purpose LLM can effectively execute long-tail keyword targeting ignores the fundamental gap between linguistic probability and live search data. While ChatGPT is talented at expanding a seed keyword into descriptive phrases, it operates in a vacuum. It doesn’t see the actual search volume, the difficulty scores, or the volatile intent shifts that define modern search results. It understands how words relate to each other, but not how they perform in a competitive market.

This disconnect often leads to what we call “ghost rankings.” You might publish a perfectly written post that ranks at the top for a specific five-word phrase, but if no one’s searching for that exact string, your traffic remains at zero. Without real-time SERP access, general tools can’t tell the difference between a high-opportunity niche and a linguistic dead end. Results can vary based on the niche, but the underlying risk of wasting resources on zero-volume terms remains constant.

Specialized AI writers solve this by tethering their generation process to live SEO metrics. Instead of guessing what a user might type, tools like GenWrite integrate with search databases to validate intent before the first sentence is even written. If you’re trying to extract data from complex sources for your research, using a research tool like ChatPDF AI can help organize your thoughts, but the final output still needs that SEO-specific validation to ensure it reaches an audience.

It isn’t merely about volume; it’s about the underlying intent. A general tool might see “best budget camera for hiking” and “affordable hiking cameras” as identical. A specialized system recognizes that the first is a listicle intent while the second might be a product category. If you misidentify the intent, you won’t rank, no matter how many keywords you pack into the headers. And since the 2026 update, search engines have become even more aggressive in filtering out content that doesn’t provide a direct, intent-matched answer.

We’ve seen that AI writers for SEO content outperform general assistants because they analyze the current search results to see what’s actually working. They look at the average word count of the top three results, the number of images used, and the specific semantic terms that competitors are winning with. A general chatbot simply can’t see the competition or the topical gaps you need to fill.

So, can you use ChatGPT for brainstorming? Absolutely. But relying on it for the final strategy is a gamble. The reality is that search engines reward precision. If your content doesn’t map perfectly to what users are looking for right now, it’s essentially invisible. Using a dedicated platform ensures that your long-tail strategy is built on a foundation of data rather than linguistic guesswork.

Q: How much does it actually cost to run a dedicated AI blog writer?

A typical human-written post commands roughly $611, while an AI-generated equivalent drops that figure to about $131. That 4.7x difference isn’t just a margin; it’s a structural rewrite of how marketing budgets function. This shift allows teams to scale content without the linear increase in overhead that used to define the industry.
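
The arithmetic behind that multiple is easy to check. This is a minimal Python sketch: the two per-post figures come from the paragraph above, while the function names and the ten-posts-a-month volume are illustrative assumptions.

```python
HUMAN_COST_PER_POST = 611  # USD, figure quoted in the text
AI_COST_PER_POST = 131     # USD, figure quoted in the text


def cost_ratio(human: float = HUMAN_COST_PER_POST, ai: float = AI_COST_PER_POST) -> float:
    """How many times more a human-written post costs than an AI-generated one."""
    return human / ai


def monthly_savings(posts_per_month: int,
                    human: float = HUMAN_COST_PER_POST,
                    ai: float = AI_COST_PER_POST) -> float:
    """Gross savings per month, before editing time or subscriptions are counted."""
    return posts_per_month * (human - ai)


print(round(cost_ratio(), 1))  # 4.7
print(monthly_savings(10))     # 4800
```

Note that this is the gross figure. Subscription fees and human verification time have to be subtracted before it means anything for a real budget.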

But the sticker price of a subscription rarely tells the whole story. Platforms like eesel AI offer specialized tasks at roughly $4.00 per post, provided you’re already paying for their $99/month integration with a helpdesk stack. If you’re managing a high-volume site, these micro-costs add up, especially when you factor in the human time needed to verify that the output doesn’t hallucinate technical details.

I’ve found that the real tax on cheap AI isn’t the software fee; it’s the editing. When using generic tools, you spend hours fixing broken links or generic prose. This is why specialized AI writing software is gaining ground. These tools aren’t just generating text; they’re handling the SEO heavy lifting that usually requires a junior strategist.

Let’s talk about GenWrite. Instead of just giving you a text box, it handles the end-to-end process: researching keywords, adding images, and even handling WordPress auto posting. When you calculate the hourly rate of a human doing those manual tasks, the cost of a dedicated writer starts to look more like a massive saving. You aren’t just buying words; you’re buying back the three hours a human would spend formatting and linking.

Many teams start with a basic $20 ChatGPT subscription and think they’ve solved their content production costs. But reality hits when traffic plateaus because the content lacks internal link building or competitor analysis. The evidence is mixed on whether raw AI text survives long-term without these layers, but the costs of fixing bad AI later are always higher than doing it right the first time.

And it’s not just about the monthly bill. If a tool doesn’t analyze what your competitors are doing, you’re essentially shouting into a void. Dedicated platforms often include these audits in their base price. So, while a $39 or $99 monthly fee might seem higher than a free tool, the ROI shifts when you stop paying a human to do the keyword research manually.

The reality is that integration matters more than most admit. A tool that lives in isolation creates a friction cost. If your AI writer can’t talk to your CMS or pull from your existing knowledge base, your team will spend their day copy-pasting. That’s a hidden drain on resources that most pricing pages conveniently ignore. It doesn’t always hold that the cheapest tool is the most cost-effective.

Q: Do these tools replace my need for human freelance writers?

Imagine a content manager at a growing fintech firm who used to juggle six different freelance writers, each with varying levels of subject matter expertise. Every month was a cycle of briefing, waiting, and heavy editing to ensure the technical details were accurate. It was an expensive, slow-moving machine. Today, that same manager uses a single AI SEO blog writer to generate the foundation of their technical library. They didn’t fire their writers; they promoted them.

The math has changed. Instead of paying for the labor of stringing sentences together, you’re now paying for the expertise to judge those sentences. This is the shift from creator to curator. In this new world, the “writer” is the person who understands the customer’s pain points deeply enough to tell the AI exactly what to focus on.

The 10-80-10 workflow in action

Most high-output teams are moving toward a 10-80-10 model. It starts with 10% human strategy: setting the tone, picking the angle, and defining the unique value proposition. Then, the AI takes over for the middle 80%, handling the bulk of the research and drafting. Finally, a human returns for the last 10% to perform quality control.

This last step is where the value lives. It’s about adding that specific anecdote from a sales call or a detailed take on a recent regulatory change that an LLM wouldn’t know. If you are looking for AI writing tools to improve your content creation, you’ll find that the best ones aren’t meant to work in a vacuum. They’re designed to be the engine that a human driver steers.

Why human experience still wins

Search engines have become adept at spotting content that lacks “lived experience.” An AI can describe how a product works, but it can’t describe the relief a customer felt when that product saved their weekend. That’s why human freelancers aren’t going away; they’re becoming more specialized.

The role of the generalist writer who summarizes top-ten lists is mostly over. Those tasks are now handled by platforms like GenWrite, which can manage the technical SEO and structural requirements more efficiently than a human can. But the demand for subject matter experts, people who can provide the “Experience” and “Trust” in the E-E-A-T framework, has never been higher.

Scaling with enterprise content solutions

For larger organizations, these enterprise content solutions allow for a scale that was previously impossible. You can maintain a daily publishing schedule across multiple verticals without a massive increase in headcount. The AI handles the repetitive parts of SEO, like keyword density and internal linking, while your team focuses on high-level brand strategy.

It’s a mistake to think of this as a zero-sum game between humans and software. It’s more of a partnership where the AI handles the “science” of SEO and the human handles the “art” of persuasion. Sometimes the output needs a heavy hand; other times, the AI hits the mark on the first try. But keeping human eyes on every draft ensures you don’t fall into the trap of publishing generic text that search engines might eventually devalue. This doesn’t always hold for every niche, but for technical B2B content, the curator model is the only way to stay competitive.

Q: What features define a specialized SEO content engine?

When you move from being a writer to a content curator, your success hinges on the quality of the data your engine ingests. A general-purpose AI lacks the context of the current search results, treating a prompt like a creative writing exercise rather than a competitive technical challenge. A specialized SEO content engine functions as a research partner, identifying the specific signals that search engines prioritize before it ever types a single word.

Real-time SERP and semantic gap analysis

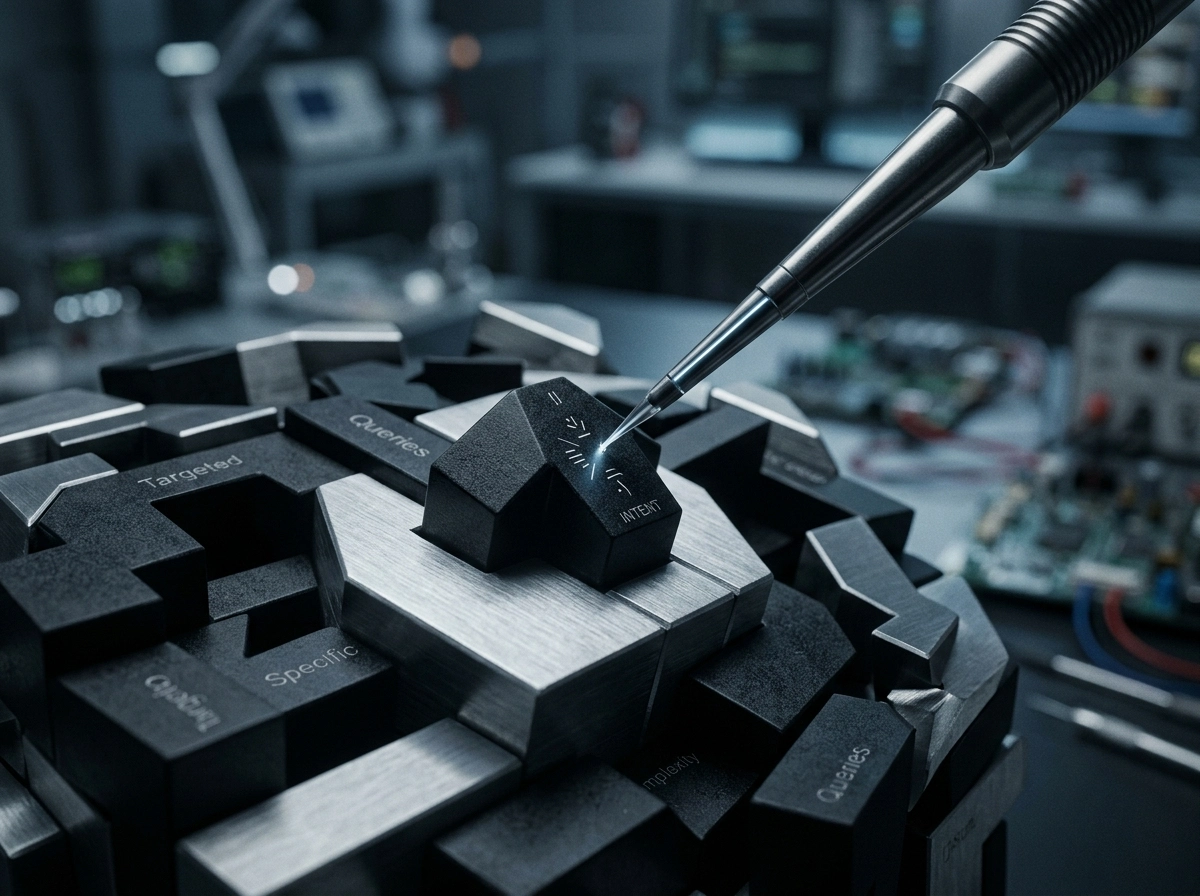

The primary differentiator for niche content tools is their ability to perform live Search Engine Results Page (SERP) scans. While a general model relies on data from months or years ago, specialized automated blog software analyzes the top 10 results in real time. It looks for semantic gaps (topics your competitors are covering that you’ve missed) and ensures your outline addresses those specific queries. It’s about finding the missing pieces of a puzzle rather than just describing the picture.

But scanning isn’t enough; the engine must interpret entity relationships. It identifies the core concepts, people, and locations that search engines associate with your primary keyword. If you’re writing about high-performance laptops but fail to mention thermal throttling or NVMe speeds, the engine flags this as a topical authority deficit. This doesn’t always guarantee a number one ranking, but it ensures you’re at least in the right conversation.
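
At its simplest, a semantic gap check is a set comparison. The sketch below, in Python, assumes topic lists have already been extracted from your draft and from competitor pages, which is the hard part and is not shown. The function name, the 50% coverage rule, and the sample topics are assumptions for illustration.

```python
from collections import Counter


def semantic_gaps(my_topics: list, competitor_topics: list, min_share: float = 0.5) -> list:
    """Return topics covered by at least `min_share` of competitors but absent from yours."""
    if not competitor_topics:
        return []
    mine = {topic.lower() for topic in my_topics}
    # Count each topic once per competitor page, ignoring case.
    counts = Counter(
        topic for page in competitor_topics for topic in {t.lower() for t in page}
    )
    needed = min_share * len(competitor_topics)
    return sorted(t for t, c in counts.items() if c >= needed and t not in mine)


# Hypothetical topic lists for a post about high-performance laptops.
my_draft = ["battery life", "display"]
competitors = [
    ["thermal throttling", "NVMe speeds", "display"],
    ["thermal throttling", "battery life"],
    ["NVMe speeds", "thermal throttling", "ports"],
]
print(semantic_gaps(my_draft, competitors))  # ['nvme speeds', 'thermal throttling']
```

Topics that only one competitor mentions (here, "ports") fall below the coverage rule and are left out, which keeps the outline focused on what the results page consistently rewards.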

Multi-source signals and E-E-A-T integration

Modern search algorithms have moved beyond simple text matching to reward Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T). A specialized engine like GenWrite understands that human-like trust signals often come from outside traditional articles. It looks for Reddit citations to capture genuine user sentiment and pulls in YouTube data to provide a multi-media perspective. Integrating these perspectives helps move a blog post from a generic summary to a resource that feels lived-in and verified.

When I look at the best AI tools for writing SEO-rich blog content, the standouts are those that treat the internet as a dynamic database. They don’t just generate text; they cite real-world discussions. This might include pulling a specific user recommendation from a forum or embedding a video that demonstrates a complex process. These elements aren’t just window dressing; they’re the markers of authority that keep readers on the page longer.

Automated workflow and structural precision

Technical SEO requires more than just good prose; it demands structural discipline. A specialized engine handles the heavy lifting of meta-description generation, internal link placement, and automatic image sourcing without manual intervention. It understands the hierarchy of H3 and H4 tags, ensuring that the architecture of the post is as readable for a crawler as it is for a human reader.

And let’s be honest, the efficiency of automated blog software lies in its ability to handle the boring stuff. This includes WordPress auto-posting and bulk generation that maintains a consistent brand voice across hundreds of entries. By the time you review the draft, the engine has already handled the competitive analysis and keyword density checks that would take a human hours to complete. You aren’t just getting a writer; you’re getting an entire SEO department in a single interface.

Q: Is the SEO output of a general tool really that much worse?

General AI tools are built to be agreeable, not to rank. That’s the fundamental flaw most people ignore when they copy-paste from a standard chat interface into their CMS. It’s a gamble that doesn’t pay off. Pure AI content that skips a specialized SEO layer sees a 23% drop in rankings compared to human-led efforts. It’s not just about the quality of the prose. It’s about the intent.

The hidden cost of generic outputs

General assistants create content that sounds authoritative but lacks the technical backbone needed for search visibility. They don’t perform real-time SERP analysis. They don’t understand the current competitive density for specific terms. So, you end up with generic content that search engines flag as low-value. It’s a waste of digital space.

But businesses that switch to an optimized workflow see an 8-12% gain in rankings. This isn’t a fluke. It’s the result of using a system that understands the nuances of long-tail keyword targeting. If you’re looking for SEO-rich blog content tools, you’ll find that the best ones don’t just generate text. They build a data-backed structure first.

Why the 23% drop happens

Search engines have gotten better at identifying empty content. When a general tool writes, it often follows a predictable pattern. It stays safe. It avoids specific, hard-to-verify facts. And it rarely includes the kind of internal and external linking that builds authority. This doesn’t always hold true for every niche, but the trend is undeniable.

This generic approach is a massive business risk. If you’re losing nearly a quarter of your ranking potential, you’re essentially paying to fail. It’s much more efficient to use a tool like GenWrite that automates the technical research. It handles the image selection, link building, and competitor analysis that a general tool simply ignores.

Moving toward an optimized hybrid workflow

The 8-12% gain comes from the specialized-AI model. This isn’t about making the AI smarter; it’s about giving it better constraints. A specialized SEO engine starts with a data set. It looks at what’s already ranking and asks what’s missing. And that’s the secret.

General tools can’t tell you what’s missing because they only know what’s common. They reproduce the average. If you want to beat the average, you can’t use an average tool. The performance gap between AI-only and optimized is only going to widen as search algorithms prioritize expertise over word count. Stop treating your blog like a side project for a general assistant. The data is clear: the output is worse because the input lacks context.

The search for intent over keyword density

If you’re still obsessing over how many times a keyword appears in your text, you’re playing a game that ended years ago. The real question isn’t whether your primary phrase shows up five or fifteen times. It’s whether you’ve actually solved the user’s problem. General LLMs are programmed to be helpful assistants, which means they’re prone to ‘pleasing’ your prompt by repeating your keywords back to you. This creates a loop where the content looks right to a human but feels hollow to a search engine.

The disconnect between keywords and intent

Why does this happen? General tools treat every prompt as a standalone creative task. They don’t look at the live search results to see what Google is actually rewarding right now. If you ask for a blog about ‘sustainable gardening,’ a general AI might give you a poetic essay on the beauty of nature. But if the top ten results are all ‘how-to’ guides for urban apartments, that poetic essay will never rank.

Specialized AI writers approach this differently. Instead of just generating text based on a language pattern, they start by reverse-engineering the search results. They ask: Is the user looking for a step-by-step guide, a list of product comparisons, or a deep explanation? If you feed a general tool a prompt, it guesses. A platform like GenWrite analyzes the competition to see that users actually want a curated list with pros and cons, not a philosophical lecture.
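
The idea of reading intent off the results page can be illustrated with a toy classifier. This Python sketch labels each ranking title with crude cue words and takes a majority vote. The cue lists, labels, and sample titles are hypothetical, and a production system would rely on far richer signals than titles alone.

```python
import re
from collections import Counter

# Hypothetical cue words; checked in this order, first match wins.
INTENT_CUES = {
    "how-to": {"how", "guide", "step", "steps", "tutorial"},
    "listicle": {"best", "top", "vs", "compared"},
    "transactional": {"buy", "price", "pricing", "deal", "deals"},
}


def classify_title(title: str) -> str:
    """Assign a coarse intent label to one search result title."""
    words = set(re.findall(r"[a-z]+", title.lower()))
    for intent, cues in INTENT_CUES.items():
        if words & cues:
            return intent
    return "informational"


def dominant_intent(serp_titles: list):
    """Return the most common intent among the titles, or None if there are none."""
    if not serp_titles:
        return None
    counts = Counter(classify_title(t) for t in serp_titles)
    return counts.most_common(1)[0][0]


titles = [
    "How to start sustainable gardening in an apartment",
    "Sustainable gardening guide for small balconies",
    "10 best sustainable gardening tools",
    "What is sustainable gardening?",
]
print(dominant_intent(titles))  # how-to
```

If the dominant label is "how-to" and your draft is an essay, that mismatch is the signal to restructure before publishing.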

Why niche tools win on search signals

This is where niche content tools prove their worth. They don’t just fill space; they map the content structure to the user’s hidden intent. When I look at the best AI tools for writing SEO-rich blog content, the standout feature isn’t the prose quality; it’s the ability to interpret search signals. If the top-ranking pages all include a ‘how-to’ section and a specific data table, a specialized engine will ensure those elements are present.

But let’s be honest: intent is hard to pin down. It’s not a static metric. One day a query might be informational; the next, it’s transactional because of a new product launch. General tools can’t keep up with that shift because they’re working off a frozen dataset. They don’t ‘see’ the live web the way a dedicated SEO engine does. You might end up with a beautifully written piece that targets a keyword perfectly but misses the intent entirely, leaving you stuck on page three.

The cost of missing the mark

So, what happens if you ignore this? You waste resources on content that Google views as ‘thin’ or ‘unhelpful,’ even if it’s 2,000 words long. The reality is that recovery from an intent-mismatch penalty is much harder than simply fixing a few typos. You have to rewrite the entire logic of the piece. That’s why I always lean toward systems that prioritize actual search data over simple text generation.

How to build your hybrid content stack

Building a hybrid content stack isn’t about collecting subscriptions. It’s about data flow. Most marketers fail because they expect a single chat window to handle keyword research, competitor analysis, and draft generation. This approach creates prompt fatigue. You end up with siloed data and generic content that search engines ignore. A real stack separates the brains from the brawn. You need a strategy tool to identify topical gaps and an execution tool to build the prose.

Strategy starts with identifying what your audience actually wants. If you rely on a general assistant, you’re guessing. You need a research layer that defines what you should write before you ever open a text editor. MarketMuse is often the standard here because it finds gaps in your content compared to the top search results. It tells you what’s missing, but it isn’t a writer. It’s a researcher. Trying to make it draft a 2,000-word blog is a mistake.

The three-tier architecture

A functional hybrid stack follows a clear structure. The first tier is the intelligence layer. This includes tools like MarketMuse or Ahrefs. They define the ‘what.’ The second tier is the creation layer. This is where AI writing software fits in. Tools like Jasper are good for brand voice, but they still require a pilot to manage the output.

The third tier is the automation layer. If you’re managing dozens of sites, manual drafting is a bottleneck. This is where GenWrite changes the equation. It automates the end-to-end process by researching keywords and publishing directly to your site. It removes the friction between having an idea and having a live post. Without this layer, your stack is just a collection of disconnected tasks.

Choosing between strategy and execution

You have to decide if you’re building for scale or for high-touch manual control. Tools like eesel AI are excellent for internal knowledge management, but they struggle with external SEO. They don’t see that your competitor just updated their schema or that a new Reddit thread is stealing your traffic. For the open web, you need a robust SEO tool comparison to see which platforms actually connect to live search data.

Why integration beats features

The biggest mistake is the silo trap. If your keyword research lives in one app and your drafts live in another, you lose context. Your tools must talk to each other. I’ve seen teams waste months trying to make a general assistant act like a technical SEO specialist. It’s a waste of time. A general tool doesn’t know about search intent or SERP volatility.

The hybrid stack works because it respects the limits of AI. Use the intelligence layer to find the intent discussed previously. Use the creation layer to turn that intent into prose. Use GenWrite to handle the technical distribution and link building. This isn’t just about saving time. It’s about ensuring your content actually has a chance to rank.

Closing

You’ve assembled the stack, but the real work starts when you stop looking for the “perfect” algorithm and start building a better operation. It’s easy to get caught in a loop of testing every new AI SEO blog writer that hits the market. But the hard truth? Even the most sophisticated tool won’t save a broken workflow. If you’re ready to move past the experimentation phase, your next step isn’t just buying another subscription; it’s auditing your existing library for AI visibility and setting up a governance framework that actually sticks.

Think about it this way. Most teams fail because they treat AI like a vending machine: put a prompt in, get a blog out. That’s a recipe for generic noise. Instead, you need to think like a publisher. This means implementing a “Master Prompt” framework. This isn’t just a long instruction block. It’s a structural asset that encodes your brand voice, negative constraints (the things you never say), and specific formatting rules. By the time a draft hits a human editor, it should already be 80% of the way to your brand standards. It’s about pre-alignment.
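
One way to treat a “Master Prompt” as a structural asset rather than a pasted block of text is to store its parts as data. The Python sketch below is an assumption about how that could look, not a description of any tool’s format: the class name, field names, and sample values are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class MasterPrompt:
    """Hypothetical container for brand voice, negative constraints, and format rules."""
    brand_voice: str
    banned_phrases: list = field(default_factory=list)
    formatting_rules: list = field(default_factory=list)

    def render(self, topic: str) -> str:
        """Build the instruction text that would be sent along with a topic."""
        lines = [f"Write about: {topic}", f"Voice: {self.brand_voice}"]
        if self.banned_phrases:
            lines.append("Never use: " + "; ".join(self.banned_phrases))
        lines += [f"Format rule: {rule}" for rule in self.formatting_rules]
        return "\n".join(lines)

    def violations(self, draft: str) -> list:
        """List banned phrases that still appear in a draft (case-insensitive)."""
        lowered = draft.lower()
        return [p for p in self.banned_phrases if p.lower() in lowered]


prompt = MasterPrompt(
    brand_voice="plainspoken, practical",
    banned_phrases=["game-changer", "unlock"],
    formatting_rules=["Use short paragraphs", "One idea per subheading"],
)
print(prompt.render("long-tail keyword targeting"))
print(prompt.violations("This tool is a Game-Changer."))  # ['game-changer']
```

Keeping the negative constraints as a list means the same data can brief the model and audit the draft, so the human editor starts from something already close to brand standards.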

And honestly, if you’re managing multiple domains or high-volume output, you’re likely looking for enterprise content solutions that can handle the heavy lifting without constant babysitting. This is where a dedicated platform like GenWrite comes in. It doesn’t just “write”; it automates the research, link building, and publishing steps that usually eat up your entire week. But even with automation, the human-in-the-loop model is your insurance policy against the search volatility we’ve seen lately. Results vary based on how strictly you enforce these editorial checks, but the data suggests that human-curated AI content consistently outperforms raw output.

If you’re still weighing your options, looking at a curated list of the best AI tools for writing SEO-rich blog content can help you spot the nuances between an auditor and a pure generator. You might find that a combination of a specialized auditor and a generator like GenWrite provides the balance you need. Not every tool is built for the same purpose. Some focus on the “humanizing” aspect while others focus on the raw SEO technicals.

So, where do you go from here? Start by looking at your current output. Is it actually solving a user’s problem, or is it just filling space? The search engines are getting better at identifying “hollow” content every single day. Your goal is to use AI to build depth, not just volume. This shift requires a change in mindset from “how many blogs can we ship?” to “how many of our blogs are authoritative enough to win?”.

What happens if you ignore this operational shift? You’ll likely end up with a mountain of content that looks great on paper but fails to move the needle on the SERPs. The real growth is hiding in the details of your governance. Tomorrow morning, don’t start a new draft. Audit your top ten performing pages. See where the generic phrasing is diluting your authority. That’s the first step toward a strategy that actually survives the next update.

If you’re tired of generic content that doesn’t rank, GenWrite handles the heavy lifting of SEO research and automated publishing so you can focus on strategy.

Frequently Asked Questions

Can a general tool like ChatGPT handle long-tail keyword targeting?

Not really. While it’s great at writing, it doesn’t understand the technical SERP data or search intent required to rank for specific long-tail keywords. You’ll end up with grammatically correct text that misses the mark on what users are actually searching for.

How much does it actually cost to run a dedicated AI blog writer?

It’s significantly cheaper than hiring human writers for every single post. While human-written content averages $611 per piece, specialized AI workflows can cut that to about $131, roughly a 4.7x reduction, letting you reinvest those savings into high-level strategy and editing.

Do these tools replace my need for human freelance writers?

They don’t replace humans, they just change the job description. You’re moving from a creator role to a curator role, where your main task is to add unique insights and brand voice that AI can’t fake.

What features define a specialized SEO content engine?

Look for tools that do more than just write. You need features like SERP intent mapping, automated keyword clustering, and the ability to pull in real-time data from sources like Reddit or YouTube to build genuine topical authority.

Is the SEO output of a general tool really that much worse?

The data is pretty clear on this. Businesses relying solely on raw AI output saw a 23% drop in rankings after the Project Aura update, while teams using human-in-the-loop workflows actually saw an 8-12% gain.