Will Google actually rank your AI-generated blog posts?

Introduction

You’ve likely seen the carnage: websites that once dominated search results suddenly vanishing after a core update, their traffic graphs looking like a cliff edge. It’s easy to blame the algorithms and conclude that AI content can no longer rank on Google. But if you look closer at the survivors, you’ll find that many of them are still using AI; they’re just doing it better than you are.

The anxiety surrounding AI is misplaced. We’ve spent months analyzing why some sites thrive while others get buried, and the pattern is clear: the risk isn’t the technology, but the lazy, uninspired way it’s being deployed. If you’re just hitting ‘generate’ and ‘publish’ without a second thought, you aren’t building an asset; you’re creating noise. I’ve seen agencies shift from an ‘AI-first’ mentality to a ‘human-expert-led’ model, and the results are night and day. One specific agency saw their traffic stabilize after the March 2024 core update simply by replacing generic drafts with original case studies.

So, why does the visibility of AI-written blog posts fluctuate so wildly? It comes down to whether your automated blog post creator is actually solving a user’s problem or just filling a quota. When I tracked the results of an AI blog content creator over a month-long experiment, the posts that ranked weren’t the ones with the most keywords; they were the ones that felt authoritative.

The hard truth is that search engines don’t care who wrote the words; they care if the words deserve to be there. If your SEO automation software is churning out repetitive, hollow advice, you’re essentially painting a target on your back. At GenWrite, we built our platform to avoid these pitfalls by prioritizing end-to-end optimization that mirrors human research. It’s about using automation to handle the heavy lifting of keyword research and competitor analysis so you can focus on adding the unique value that keeps readers on the page.

What happens if you ignore this? You’ll likely join the ranks of small business owners who churn out 50 ‘optimized’ posts a month only to realize zero of them are ranking. The pivot to quality isn’t just a suggestion; it’s a survival tactic. We’re moving toward an era where only the most helpful content survives, regardless of its origin.

If you’re worried about whether your content will survive the next update, you’re asking the wrong question. Instead, ask yourself if a human would actually find your post useful if the search engines didn’t exist. That shift in perspective changes everything about how you use these tools.

Questions Organized by Category

You can’t just throw a prompt at a chatbot and expect a first-page result. That’s a fantasy. To win, you’ve got to break your approach into three pillars: Policy, Quality, and Strategy. This isn’t just about avoiding a slap from an algorithm; it’s about building a traffic engine that doesn’t break when the next core update rolls around. If you ignore these buckets, your site is a ticking time bomb.

Policy: is my content legal in Google’s eyes?

Google’s stance is clear. They don’t hate AI content; they hate “scaled content abuse.” This policy targets anyone, human or bot, who floods the web with low-value pages just to rig the rankings. If you use specialized writing software designed for search, you’re already safer, because these tools prioritize structure and relevance over raw volume. Don’t be the person who publishes 1,000 pages of fluff. You’ll get caught, and your domain will suffer. The real question isn’t “can I use AI?” but “is my AI use helping or hurting the user experience?”

Quality: does this post actually earn its place?

Perfect grammar is the bare minimum. Google now hunts for information gain. Does your post actually add something new? Or is it just a remix of the current top ten results? In practice, ranking AI content requires a unique angle. If your tool just scrapes and repeats, you’re wasting your time. You need to inject specific data, case studies, or contrarian viewpoints. Without a unique “why,” your post is just noise. High quality isn’t about word count; it’s about the depth of the insight provided.

Strategy: moving from keywords to intent

Stop chasing every low-competition keyword you find. It’s a dead end. We’ve seen better results at GenWrite by letting an AI SEO blog writer focus on user intent instead of just raw volume. This approach ensures your content answers the question the searcher actually has. It’s about authority, not just search engine optimization for blogs. If your strategy is just “post more,” you’ve already lost. Focus on the questions that actually drive conversions and solve problems.

Does Google have a specific penalty for AI-generated text?

837 websites out of 49,345 were deindexed following the March 2024 core update. That’s a staggering number. But it’s often misinterpreted as a war on silicon-based writing. The reality is that Google doesn’t have a specific “AI detector” penalty designed to flag and bury your work just because a large language model helped you write it.

Instead, the algorithm focuses on outcomes. If your content exists solely to fill space or manipulate search results without offering something new, it falls under the “scaled content abuse” category. This is the distinction that matters. Google is looking for low-effort, high-volume spam that provides zero unique value to the person searching.

The myth of the AI-detector penalty

Many creators worry that a secret “AI score” will doom their rankings. But the evidence suggests otherwise. Google’s helpful content systems focus on whether a human finds the page useful, not whether a human typed every single character. If the text is accurate and engaging, the machine origin is irrelevant.

I’ve watched site owners get reckless. One experiment involved taking a high-performing 8,000-word article and replacing just the introduction with generic AI text. Within days, traffic dropped from 40 clicks a day to absolute zero. The issue wasn’t the AI itself, but the fact that the new intro lacked the context and flow that originally kept readers on the page.

Why scaled content abuse is the real threat

You can’t just hit “generate” a thousand times and expect to own the first page. Google’s spam policies are quite clear about mass-produced content that lacks originality. If you’re using tools to churn out thousands of pages that are just rehashed versions of existing sites, you’re asking for trouble.

This is where a balanced approach is necessary. Using a tool like GenWrite helps bridge the gap by focusing on SEO optimization and competitor analysis rather than just dumping raw text onto a page. It’s about building a workflow where the AI handles the heavy lifting, but the final output remains high-quality and aligned with what users actually want. This prevents the quality of the AI-generated text from dipping into the “spam” territory that triggers manual actions.

How to stay on the right side of quality

The fear that AI content will lose its search visibility in the coming years is only valid if you ignore user intent. If your blog post answers a specific question better than anyone else, Google will rank it. It’s that simple, yet that difficult.

Results here aren’t always consistent, though. Some sites with significant AI-generated text still rank perfectly well because they provide specific data or unique formatting that helps the user. Others with human-written content get buried because they’re boring or unoriginal. The source of the words is secondary to the value they provide.

So, focus on the substance. Ensure your AI-powered blog creation includes relevant links, images, and keyword research. If you treat the machine as a research and drafting partner rather than a “set and forget” solution, you’ll avoid the pitfalls that caught those 837 deindexed sites.

The difference between being indexed and actually ranking

Imagine you’ve just pushed fifty new posts to your site using a basic automation script. You wake up the next morning, check your index status, and see every single URL has been successfully crawled. You’re ecstatic because, on paper, you’ve just “won” at scale. But three weeks later, those same pages haven’t moved past page eight, and your organic traffic is a flat line.

The illusion of indexing

Indexing is just Google acknowledging your page exists. It’s essentially the search engine saying, “I’ve seen this, and I’ve put it in the filing cabinet.” Ranking, however, is a vote of confidence that your content is better than the millions of other options available. AI content often falls into a honeymoon period where it gets indexed quickly because the technical structure is clean, but it fails to sustain any real visibility.

Google’s crawlers are efficient at finding new text, but they’re also getting better at identifying empty content. If your posts are grammatically perfect but lack unique insights, they won’t survive the first algorithm update. We’ve seen this happen with a personal finance site that published generic coffee bean reviews. The articles were technically flawless, yet they lost nearly all their traffic because they didn’t offer the real-world perspective that users actually want.

Why the honeymoon ends

The initial boost in visibility is often Google’s way of testing the waters. If users land on your page and immediately bounce because the text feels robotic or repetitive, the algorithm notices. This is why many are asking if AI content will lose its Google rankings in 2025 as the bar for quality continues to rise. It’s no longer enough to just occupy space; you have to earn the click.

The reality is that automated content quality is now judged on human signals like E-E-A-T. A recent test for the keyword “SEO training Houston” proved this. Fully machine-written posts were eventually removed from the index entirely, while content that included specific local details and expert insights saw rapid re-indexing and growth. This doesn’t always hold true for every niche, but the trend is undeniable.

Building for long-term visibility

To avoid the index-then-vanish cycle, you need a tool that does more than just string sentences together. GenWrite focuses on the full lifecycle of a post, from deep competitor analysis to automated link building. This ensures that when Google’s bots crawl your site, they find more than just text. They find a well-structured resource that looks like it was built by a human expert.

Success with an AI blog writer requires moving past the “more is better” mindset. While it’s tempting to flood the zone, the evidence is mixed on whether high-volume, low-effort content can survive long-term. You’re much better off using AI to handle the heavy lifting of research and formatting while ensuring the final output provides genuine value. If you don’t, you’re just renting space in the index until the next cleanup.

How to satisfy E-E-A-T when using a writing assistant

E-E-A-T is the tax Google levies on automated efficiency. It’s the friction between high-speed output and the human ‘proof of work’ that search engines now demand. If an LLM merely predicts the next likely word based on its training data, it is just a linguistic mirror. It reflects existing knowledge without adding anything new. To maintain Google rankings for AI content, you have to inject the one thing an algorithm can’t simulate: lived experience.

The experience gap in automated drafts

The first ‘E’ in E-E-A-T is often the hardest for AI writing tools to satisfy. It requires a physical or historical presence. An AI can describe a software deployment, but it hasn’t felt the panic of a 2:00 AM server crash or the relief of a successful patch. This is where your intervention changes a generic guide into an authoritative resource.

Treat the initial draft as a skeletal structure. For it to stand, you must add first-person evidence. This includes specific case studies, screenshots of your own dashboard, or proprietary data points. If you’re using GenWrite to handle content automation, the tool manages the structure and keyword placement, but your role is to layer in the ‘I saw this’ or ‘we tested that’ moments. These micro-stories signal to Google that the content was born from real-world utility rather than a database crawl.

Engineering expertise into the synthesis

Expertise isn’t just knowing facts; it’s the ability to weigh them against each other. Most generic AI content fails because it treats every data point with equal importance. To bridge this, I recommend a research-heavy approach before the writing starts. Use tools like Perplexity to find primary sources, then feed those specific insights into your writing assistant with instructions to prioritize them over general knowledge.

This method ensures the output isn’t just a summary of the top 10 search results. Instead, it becomes a synthesis of expert opinion. You’re creating helpful content fast by using the AI as a logic engine rather than a creative lead. It’s a simple but necessary shift in how we view the human-AI partnership. The AI handles the linguistics, while you handle the intellectual framework.
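
One way to make that research-first habit concrete is to refuse to draft until the brief contains real evidence. The sketch below is only an illustration: `ContentBrief`, `build_prompt`, and the `[NEEDS SOURCE]` marker are hypothetical names invented here, not part of GenWrite, Perplexity, or any other tool, and the prompt wording is an assumption you would tune for your own assistant.

```python
from dataclasses import dataclass, field


@dataclass
class ContentBrief:
    """Research a human gathers before drafting starts (hypothetical structure)."""
    topic: str
    search_intent: str
    primary_sources: list[str] = field(default_factory=list)
    first_hand_notes: list[str] = field(default_factory=list)


def build_prompt(brief: ContentBrief) -> str:
    """Assemble a drafting prompt that ranks supplied evidence above general knowledge."""
    if not brief.primary_sources and not brief.first_hand_notes:
        # With nothing specific to synthesize, the result would be a generic prompt.
        raise ValueError("Add at least one primary source or first-hand note first.")

    lines = [
        f"Topic: {brief.topic}",
        f"Reader intent: {brief.search_intent}",
        "Prioritize the material below over general knowledge.",
        "If a claim is not supported by it, mark the claim as [NEEDS SOURCE].",
        "",
        "Primary sources:",
    ]
    lines += [f"- {s}" for s in brief.primary_sources] or ["- (none supplied)"]
    lines.append("")
    lines.append("First-hand notes from the author:")
    lines += [f"- {n}" for n in brief.first_hand_notes] or ["- (none supplied)"]
    return "\n".join(lines)
```

Calling `build_prompt` with an empty brief raises an error on purpose, which nudges the workflow toward research before writing rather than after.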

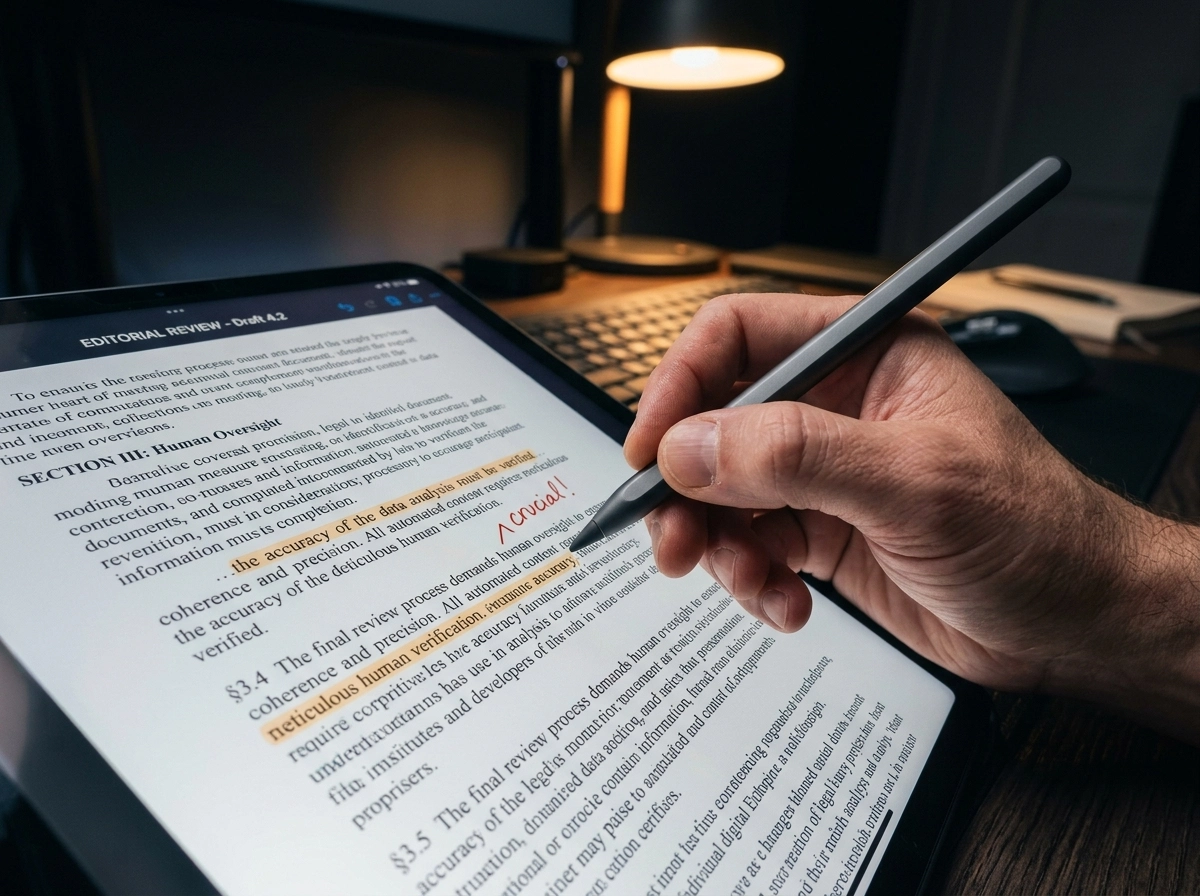

Establishing authority and trust through verification

Trustworthiness is the most sensitive component of the E-E-A-T framework. Google’s algorithms look for signals that the information is safe, accurate, and transparent. The reality is that LLMs still hallucinate technical specs or legal requirements. Every technical claim in your blog post needs a manual check. This isn’t just about avoiding errors; it’s about building a reputation for reliability that survives algorithm shifts.

Practical verification steps

- Link to primary sources. Replace generic external links with direct links to whitepapers, official documentation, or original research reports.

- Use real author bylines. Ensure the post is attributed to a real person with a verifiable digital footprint. Avoid generic ‘Admin’ or ‘Staff’ tags.

- Refresh the data. Manually update any statistics that the AI might have pulled from older training data to ensure current accuracy.

Don’t expect an AI to build authority on its own. It provides the canvas, but your expertise provides the signature. This doesn’t always hold true for low-competition niches where volume is king, but for high-stakes industries like finance or healthcare, human oversight is the barrier to entry. If you ignore the verification step, you’re gambling with your domain authority.

Using GenWrite for the technical foundation

The benefit of a tool like GenWrite is that it automates the SEO mechanics that humans often find tedious. It handles keyword research and competitor analysis, ensuring the content is structurally optimized for search from the start. But once the technical skeleton is ready, the ‘Experience’ and ‘Expertise’ layers remain your responsibility.

Think of it as a division of labor. The AI handles the ‘Search’ part of SEO, and you handle the ‘Engine Optimization’ part by making the content genuinely useful for humans. This hybrid approach is the only way to avoid the volatility that often hits purely synthetic sites during core updates. By focusing your time on adding unique value rather than typing every word, you can scale quality as easily as you scale quantity.

Why ‘lazy prompting’ is the fastest way to lose traffic

Efficiency is often a trap. Most marketers treat LLMs like a vending machine: insert a basic prompt, receive a finished article, and expect traffic to follow. This is the fastest way to kill your site’s authority. If you use a generic prompt, you get a generic result.

Asking a tool to “write a blog post about SEO” is a recipe for failure. The model simply calculates the most statistically likely response based on its training data. It produces the “average” of everything it has seen. This results in AI-generated text that is technically correct but strategically worthless. You’re just adding noise to a room that’s already too loud.

The trap of consensus content

Google doesn’t need another post that summarizes the same five tips found on every other website. They look for information gain: the specific value a user gets from your page that they can’t find elsewhere. Lazy prompting creates a feedback loop where AI rehashes what AI has already written.

When your content merely reorganizes the existing top 10 results, it scores low on originality. It doesn’t matter how well the prose flows if the ideas are stale. Google’s algorithms are increasingly tuned to reward unique perspectives and data points that aren’t part of the general consensus.
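
If you want a rough, do-it-yourself signal for how much of a draft merely repeats the pages already ranking, you can compare word sequences. The sketch below is an assumption-laden proxy, not how Google measures information gain: it splits text into three-word “shingles” and reports the share of the draft’s shingles that appear in none of the competitor texts you supply. The function names are made up for this example.

```python
import re


def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping word n-grams ("shingles") in the text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def novelty_ratio(draft: str, competitor_texts: list[str], size: int = 3) -> float:
    """Share of the draft's shingles that appear in none of the competitor texts."""
    draft_shingles = shingles(draft, size)
    if not draft_shingles:
        return 0.0  # Too short to judge; treat as no measurable novelty.
    seen: set[tuple[str, ...]] = set()
    for text in competitor_texts:
        seen |= shingles(text, size)
    return len(draft_shingles - seen) / len(draft_shingles)
```

A low ratio only tells you the wording overlaps heavily; a high ratio does not prove the ideas are new, so treat it as a prompt for a closer human read rather than a score to optimize.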

Why your traffic will eventually hit zero

Search engines are getting better at identifying hollow content that lacks a human-in-the-loop signature. Many experts are debating whether AI content will lose its rankings in 2025, and the answer usually comes down to effort. If your SEO blog writing software isn’t pulling in fresh data or unique competitor insights, you’re just renting space on the search results page. That lease is about to expire.

Real automation requires a deep workflow, not just a single text box. That’s why we built GenWrite to handle the heavy lifting of keyword research and competitor analysis before a single word is drafted. It’s about building a structure that forces the AI to be specific rather than generic.

Data over fluff

You can’t skip the research phase. If you aren’t feeding the machine data about what your competitors are missing, it will fill the gaps with fluff. Fluff is the first thing Google filters out when a core update hits.

It is better to publish nothing than to publish something that signals your site is a low-effort content mill. The reality is that AI is a multiplier. If you multiply zero effort, you still get zero results. High-ranking content requires a foundation of actual data, specific examples, and a clear understanding of search intent that a simple prompt can never capture.

What the March 2024 core update taught us about scale

When Google announced the March 2024 core update, it said it expected the changes to reduce low-quality, unoriginal content in search results by 40%. This wasn’t a minor tweak; it was a systemic purge of pages that offered zero information gain. For those relying on lazy prompting or bulk generation without oversight, the results were catastrophic. Analysis of the fallout showed that nearly half of the sites deindexed during this period consisted of 90% to 100% AI-generated material. It’s a clear signal that Google’s systems for evaluating the quality of automated content have evolved far beyond simple keyword matching.

The fallout of the 40% reduction

The update didn’t just target small affiliate blogs. It took aim at “Site Reputation Abuse,” where high-authority domains hosted thin, automated content to hijack rankings. This parasite SEO tactic was effectively neutralized because the content lacked the depth required by Google’s helpful content standards. If your strategy relies on hiding low-quality text behind a strong domain, you’re building on sand. The reality is that scale without soul is a direct path to manual actions or algorithmic invisibility.

We’ve seen that the volume of content is now a major red flag if it isn’t backed by relevance. But this doesn’t mean AI is the enemy. It means the method of deployment is what matters. Tools like GenWrite focus on deep SEO optimization and competitor analysis to ensure every post adds value rather than just taking up space. It’s about using an AI blog generator to handle the heavy lifting of research and formatting while maintaining a human-centric focus on what the reader actually needs.

Identifying the red flags of automation

There’s a persistent debate about whether AI content will lose its rankings in 2025 and beyond, but the March update provided the blueprint for survival. The sites that survived, and even thrived, were those that used automation to scale expertise, not just word counts. They didn’t just publish; they curated. They used AI to identify gaps in competitor coverage and filled them with specific, data-driven insights. It’s not about the tool; it’s about the intent behind the tool.

Google’s sophisticated classifiers are now adept at identifying “scaled content abuse.” This occurs when pages are generated for the primary purpose of manipulating search rankings rather than helping users. If you’re churning out 50 posts a day with no editorial oversight, you’re essentially inviting a site-wide penalty. The evidence here is mixed for some niche sites that still fly under the radar, but for most, the risk of a total traffic collapse is too high to ignore. You can’t just hit ‘generate’ and walk away anymore.

Bridging the gap between volume and value

What most guides miss is that helpfulness is a measurable metric for Google. They look at user signals, bounce rates, and whether a user’s search journey ends on your page. If your AI-generated text is just a rehash of the top 10 results, you aren’t providing information gain. You’re just adding to the noise. Successful teams use automation to build better briefs and structure, but they keep a firm hand on the final output to ensure it meets the high bar set by recent core updates.

The stakes couldn’t be higher. Misunderstanding how Google views scale leads to wasted resources and dead domains. Stop thinking more is better. Instead, focus on how your automated workflows can produce something that feels indistinguishable from high-tier human journalism. That’s the only way to stay safe as the algorithms continue to refine their detection methods.

Optimizing for AI Overviews versus traditional organic links

Pumping out volume is a dead strategy. Precision is the only way to survive. While Google has gotten better at filtering out generic scale, AI Overviews have shifted the goalposts for every modern search campaign. You aren’t just fighting for a spot in the ten blue links. You’re fighting to be the data source for the LLM summary sitting at the top of the page. That’s the most valuable real estate available.

The transition to answer engine optimization

Standard SEO relies on authority and relevance. Answer Engine Optimization (AEO), however, cares about what I call “source-worthiness.” Your content has to be structured so an AI can parse and verify your claims without hitting a wall. If your strategy for ranking AI content ignores how an algorithm summarizes data, you’ll be invisible to the AI Overview.

This requires an answer-first approach. Put the core value in the first two paragraphs. It’s an inverted pyramid style. When an LLM crawls the page, it needs a clear, declarative statement to cite immediately. We’re moving away from the long-winded intros of the past. The AI needs to find the core data points quickly.
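
A quick way to audit a draft for this answer-first structure is to check whether the opening paragraphs even mention what the target question asks about. The sketch below is a crude heuristic built on assumptions: the stopword list, the 60% coverage threshold, and the function names are all invented for illustration, and passing the check says nothing about whether the answer is correct.

```python
import re

# A tiny, hand-picked stopword list; extend it for real use.
STOPWORDS = {"a", "an", "the", "is", "are", "do", "does", "how", "what", "why",
             "to", "of", "in", "for", "and", "or", "my", "your", "i", "can"}


def key_terms(question: str) -> set[str]:
    """Lowercased words from the question, minus common stopwords."""
    return set(re.findall(r"[a-z0-9]+", question.lower())) - STOPWORDS


def answers_early(question: str, article: str, paragraphs: int = 2,
                  min_coverage: float = 0.6) -> bool:
    """True if the opening paragraphs mention enough of the question's key terms."""
    terms = key_terms(question)
    if not terms:
        return False
    # Paragraphs are assumed to be separated by blank lines.
    blocks = [p for p in re.split(r"\n\s*\n", article) if p.strip()]
    opening = " ".join(blocks[:paragraphs]).lower()
    opening_words = set(re.findall(r"[a-z0-9]+", opening))
    return len(terms & opening_words) / len(terms) >= min_coverage
```

If the function returns `False`, the core answer is probably buried below a long intro and worth moving up.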

Setting up a human-in-the-loop review protocol

You’ve built the structure to capture AI Overviews, but maintaining those rankings requires a protocol that treats the machine as a researcher rather than a replacement. If you’re using AI writing tools for SEO, you’re already ahead on speed. But speed without a filter is just a fast track to the bottom of the search results. The goal isn’t just to publish; it’s to publish something that an LLM would want to cite as a primary source.

The first step in a solid human-in-the-loop (HITL) protocol is the fact-verification sprint. Don’t trust the AI’s numbers blindly. I’ve seen drafts cite statistics that sounded perfect but were actually a hallucinated mix of two different reports from five years ago. Your reviewer needs to touch every claim, link, and data point. It’s not just about accuracy; it’s about maintaining the trust you’ve built with your audience.
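
To keep the verification sprint from relying on memory, a reviewer can start from an automatically generated list of sentences that contain something checkable. The sketch below is a minimal illustration under simple assumptions: it treats any sentence with a digit or a URL as a claim to verify, uses a naive sentence splitter, and will miss claims stated without numbers. The names are hypothetical.

```python
import re

NUMERIC = re.compile(r"\d")                      # any digit: stats, dates, counts
URL = re.compile(r"https?://[^\s)\"'>\]]+")      # bare http(s) links


def claims_to_verify(draft: str) -> list[str]:
    """Return sentences containing a number or a URL, in document order."""
    # Split after ., ! or ? when followed by whitespace (naive but dependency-free).
    sentences = re.split(r"(?<=[.!?])\s+", draft.strip())
    return [s for s in sentences if NUMERIC.search(s) or URL.search(s)]
```

The output is a worklist for a human, not a verdict: every item still needs someone to open the source and confirm the figure.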

Next, you need to inject what I call information gain. This is where you, the expert, provide value that the training data can’t. AI can tell you how to change a tire, but it can’t tell you about the specific frustration of doing it in a rainstorm with a stripped lug nut. That messy, specific reality is what Google’s E-E-A-T guidelines actually prioritize. When I use SEO blog writing software like GenWrite, I let it handle the heavy lifting of competitor analysis and keyword placement. Then, I spend my time adding the opinionated layer that only a human possesses.

The expert review checklist

| Step | Focus Area | Goal |
|---|---|---|
| 1 | Fact-Check | Verify every stat, date, and external link |
| 2 | SME Injection | Add 2-3 personal anecdotes or proprietary data points |
| 3 | Voice Polish | Remove robotic transitions and repetitive phrasing |
| 4 | Formatting | Ensure readability with proper H3/H4 hierarchy |
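
If your team tracks reviews in a script or CMS hook, the checklist above can act as a publish gate. The sketch below is one possible shape for that, offered as an assumption rather than a prescribed workflow: `ReviewStep`, `sign_off`, and `blocking_steps` are hypothetical names, and the step names simply mirror the table.

```python
from dataclasses import dataclass


@dataclass
class ReviewStep:
    """One row of the review checklist and who signed it off."""
    name: str
    done: bool = False
    reviewer: str = ""


def new_checklist() -> list[ReviewStep]:
    """The four steps from the table above, all initially unchecked."""
    names = ("Fact-Check", "SME Injection", "Voice Polish", "Formatting")
    return [ReviewStep(name) for name in names]


def sign_off(checklist: list[ReviewStep], name: str, reviewer: str) -> None:
    """Mark a step as done and record the reviewer; unknown steps raise KeyError."""
    for step in checklist:
        if step.name == name:
            step.done, step.reviewer = True, reviewer
            return
    raise KeyError(name)


def blocking_steps(checklist: list[ReviewStep]) -> list[str]:
    """Names of steps still open; publish only when this list is empty."""
    return [step.name for step in checklist if not step.done]
```

Recording the reviewer’s name alongside each step also gives you the audit trail you need if a factual error slips through later.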

Once the content is tight, don’t hide your process. Transparency is a massive trust signal. A simple note stating the article was drafted with generative AI and verified by a human expert goes a long way in the current search environment. Some creators worry about whether AI content will lose its rankings in the coming years, but the reality is that quality always wins. The risk isn’t the technology; it’s the lack of human oversight.

So, who is doing the reviewing? Ideally, it’s someone with deep niche knowledge. If you’re writing about tax law, don’t let a generalist editor sign off on the final draft. You need a subject matter expert to ensure the nuances aren’t lost in the machine’s attempt to find the most likely next word. This protocol doesn’t slow you down as much as you’d think, but it definitely keeps you from getting hit during the next core update.

The ‘hallucination tax’ and how it kills your domain authority

I once saw a travel guide that listed a local food bank as a “must-see tourist hotspot.” It sounds like a joke, but it actually happened when a big tech company let an unvetted AI model loose on their blog. It wasn’t just embarrassing. It showed everyone how fast AI content falls apart when nobody bothers to check the facts.

Publishing a hallucination is worse than a typo. It’s a “hallucination tax” that eats your domain authority. You pay this tax in two ways: you lose your readers’ trust, and you lose the algorithm’s confidence. Once Google decides you’re unreliable, getting back to page one is a nightmare. It’s expensive, slow, and sometimes impossible.

The liability of invented facts

Take the case of a legal assistant who used AI to cite a “Data Privacy Act of 2024” that didn’t even exist. In a regulated industry, that’s a disaster. If you’re writing about money, health, or the law, these made-up facts cause real harm. Google is smart enough to see when a site ignores reality. If you’re flagged as a source of bad info, your rankings won’t just dip. They’ll disappear.

It’s not just about the words, either. AI loves to make up URLs. It cites sources that don’t exist and leaves your site full of dead links and 404 errors. This tells search engines your site is a mess. It’s a dead giveaway of low automated content quality that no amount of keyword stuffing can fix.
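
Invented URLs are one of the few hallucination types you can catch mechanically before publishing. The sketch below is a minimal example using only Python’s standard library: it pulls `http(s)` links out of a draft and reports any that do not return a 2xx or 3xx status. The function names are made up for this example, and it assumes servers answer `HEAD` requests; some reject them, so treat a flagged link as “check by hand,” not as proof it is dead.

```python
import re
import urllib.error
import urllib.request
from typing import Callable

URL = re.compile(r"https?://[^\s)\"'>\]]+")


def http_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status of a HEAD request, or 0 if the host is unreachable."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:   # 4xx/5xx responses land here
        return err.code
    except (urllib.error.URLError, TimeoutError):
        return 0


def broken_links(draft: str,
                 status_of: Callable[[str], int] = http_status) -> dict[str, int]:
    """Map each URL in the draft whose status is not 2xx/3xx to that status."""
    # Strip trailing punctuation, then de-duplicate while keeping first-seen order.
    urls = dict.fromkeys(u.rstrip(".,;:") for u in URL.findall(draft))
    results: dict[str, int] = {}
    for url in urls:
        status = status_of(url)
        if not 200 <= status < 400:
            results[url] = status
    return results
```

The `status_of` parameter lets you swap in a stub for testing or a rate-limited client for large sites without touching the extraction logic.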

Why search engines remember your mistakes

Search engines want to give people answers they can actually use. If your content frequently includes

Can you actually automate 100% of your blog safely?

Pure automation is a trap. If you think you can fire your editors and let an LLM run the show, you’re about to watch your domain authority evaporate. It’s a race to the bottom. The dream of a 100% automated blog is mostly a ghost story told by people trying to sell low-quality software. In reality, total automation creates a generic, factually shaky mess that search engines eventually sniff out and bury.

Why raw output fails the trust test

The consequences of blind automation aren’t theoretical. We’ve seen agencies try to scale by using a basic ChatGPT setup to publish medical and legal advice without any human oversight. It was a disaster. The content wasn’t just inaccurate; it was dangerous. Because it lacked human verification, it became unrankable almost overnight. Google doesn’t reward speed. It rewards reliability. If your content can’t pass a basic sanity check from a subject matter expert, it has no business being on the first page.

The 2026 winner’s playbook

The most successful sites in 2026 won’t be the ones hitting ‘publish’ on 500 unedited drafts a day. They’ll be the ones using a hybrid approach. This means you use an AI blog post writer to handle the heavy lifting: the data gathering, the keyword mapping, and the initial structure. But you leave the high-value synthesis to a person. AI is great at patterns. It’s terrible at having a unique perspective or sharing a lived experience. That’s where the human must step in.

Building a sustainable workflow

Tools like GenWrite are built for this exact balance. The platform manages the repetitive parts of search engine optimization for blogs by handling competitor analysis and link building. It gets you 80% of the way there. But that final 20% is where the value lives. If you skip the human layer, you’re just creating more noise. The community is already debating whether AI content rankings in 2025 will survive the next wave of spam updates. The consensus is clear: low-effort content is on a timer.

The reality of information gain

Search engines now look for ‘information gain’, meaning your post needs to say something that isn’t already in the top ten results. An AI, by definition, is trained on what already exists. It can’t invent a new case study or a fresh take on a dying industry. It can only remix. To rank, you need to inject your own data, your own failures, and your own voice. Use AI to build the skeleton, but you have to provide the soul. This isn’t just about avoiding penalties. It’s about building a brand that people actually want to read.

Closing or Escalation

Moving beyond the hybrid model means accepting that the era of ‘good enough’ prose is over. You can’t just expect to hit a button and watch the traffic roll in without a strategy that accounts for how algorithms actually evaluate value. It’s about moving from simple production to what I call Human Signal Optimization. This shift is what separates those who succeed at ranking AI content from those who vanish after a core update.

This isn’t just another buzzword. It’s a fundamental change in how you use your time as a creator. Instead of spending hours wrestling with a first draft, you use tools to handle the scaffolding so you can focus on the unique data points that search engines actually crave. You’re effectively moving from being a writer to being a curator of high-value insights.

Building your content moat

The reality is that any large language model can summarize a general topic. If you want to stay ahead, you’ve got to bake in elements that a machine cannot invent or hallucinate. I’m talking about original interviews, internal case studies, or proprietary data from your own experiments. That is your content moat.

But consistency is the real growth hack here. Building topical authority across a cluster of high-information-gain articles is far more effective than chasing individual keyword rankings and hoping for the best. It’s a long game, and it requires a workflow that doesn’t lead to burnout. You need a system that maintains quality while you focus on the strategy.

Scaling with precision

This is where an AI-powered blog creation tool like GenWrite becomes your secret weapon. It handles the heavy lifting: keyword research, competitor analysis, and even the technical bits like link building and image addition. By automating the end-to-end process, you’re free to act as the editor-in-chief rather than the manual laborer.

And you’ll need that extra time. The ongoing discussion regarding the future of Google rankings for AI content suggests that the quality bar is only going to get higher. You aren’t just competing with other humans anymore; you’re competing with a massive flood of automated text. To win, your brand voice must be unmistakable.

I’ve seen many teams fail because they treated AI as a ‘set it and forget it’ solution. Don’t fall for that trap. Results aren’t always instantaneous, and even the best-optimized clusters can take months to mature. But when you combine the speed of GenWrite with your specific industry expertise, you create a feedback loop that search engines find hard to ignore.

So, where do you go from here? You start by auditing your current output. Are you just repeating what’s already on page one, or are you adding something new? The future of SEO belongs to those who use AI to scale their reach while relying on their unique human perspective to earn their spot. It’s time to stop worrying about whether Google will rank AI and start making sure your content is actually worth ranking.

If you’re tired of generic AI posts that don’t rank, GenWrite handles the research and SEO heavy lifting so you can focus on adding the human expertise that Google loves.

Frequently Asked Questions

Does Google actually penalize content just because it’s written by AI?

Nope, Google doesn’t have an ‘AI penalty.’ They care about whether your content is helpful and accurate, not what tool you used to draft it. If you’re just pumping out generic, unedited text, that’s where you’ll run into trouble.

Why does my AI content get indexed but never actually rank?

Indexing just means Google found your page, but ranking requires proving your content is better than the competition. Most AI-generated posts lack the unique insights or personal experience that tell Google your page is worth a top spot.

How can I make my AI drafts sound more human and authoritative?

You’ve got to inject your own case studies, specific data, and personal opinions into the AI’s output. It’s really about using the AI to build the skeleton while you provide the muscle and personality that readers actually want.

Is it possible to fully automate a blog without getting hit by updates?

Honestly, 100% automation is a risky game that usually ends in a rankings drop. You’ll want a ‘human-in-the-loop’ to verify facts and ensure the tone matches your brand, which is exactly why tools like GenWrite are designed to support that hybrid approach.

What should I change to show up in AI Overviews?

Focus on being concise and structured so AI models can easily cite your content as a source. Use clear headers, direct answers to common questions, and verified data to make your site the go-to reference for your topic.