Is your SEO automation software actually creating toxic keyword patterns?

Introduction

You wake up to a 40% drop in organic traffic, and the manual action notification in Search Console confirms your worst fear: your content is being flagged as “low-effort.” This is the nightmare scenario for anyone who scaled too quickly using legacy SEO automation software. It’s not that automation is inherently bad; it’s that most tools treat your blog like a factory line, churning out repetitive templates that scream “bot” to modern search filters.

The allure of infinite scale is hard to resist. I’ve seen site owners trade their long-term domain authority for a week of high-volume traffic, only to be crushed by over-optimization penalties because their content lacked a human-centric pulse. We’re moving past the era where you could trick a crawler by repeating a phrase six times in the first 200 words. Modern search systems prioritize “helpful content,” which means experiential insights and unique data. When an automated blog post creator ignores these nuances, it creates a toxic pattern of shallow, redundant pages that do more harm than good.

The hidden cost of repetitive templates

When you use a basic tool to spin out 500 “how-to” articles, you’re not just creating content; you’re creating a liability. These tools often rely on the same linguistic structures and keyword densities, making your site look like a mirror of a thousand other low-quality blogs. Rankings drop as search engines identify the work as “low-effort” and redundant. Search engines are now very good at spotting when a site is just a shell for keyword-stuffed templates.

At GenWrite, we approach this differently. It’s about moving away from the “keyword list” mentality. Instead of stuffing phrases, an AI SEO blog writer should map out the user’s actual intent. Why are they searching? What problem are they trying to solve? If your software can’t answer those questions, it’s just generating noise. We focus on SEO content quality by adding relevant links and images and analyzing competitor content to ensure your post actually offers something new.

Balancing speed with substance

It’s tempting to think more is always better. But 50 pieces of high-quality, research-backed content will always outperform 5,000 pages of generic fluff. I recently tracked what actually happened when I used an AI blog writer for 30 days, and the results were clear: the win isn’t in the volume, but in the precision of the automation.

And that’s the real challenge. You want the speed of AI but the depth of a human researcher. If you don’t find that balance, you’re just building a house of cards that a single algorithm update will blow down. So, are you building a library of value, or just a pile of digital waste? The answer depends entirely on how you use your AI blog content creator.

The danger of keyword cannibalization in automated pipelines

About 40% of organic impressions can just disappear when a site starts fighting itself for the same search intent. It’s not a small glitch. It’s a fundamental breakdown in automated pipelines that value page count over actual meaning. If you scale without a plan for differentiation, you’re just building a maze that leaves search algorithms guessing.

The structural trap of intent overlap

Most of the risks of automated SEO tools come down to a lack of what I call “content coordinates.” Humans know when they’re repeating themselves. Software? Not so much. If a system is told to target “best accounting software for small business” and “top accounting tools for entrepreneurs,” it’ll likely spit out two versions of the same thing.

Google sees this and gets confused. Instead of one page hitting the top spot, both might end up stuck on page two, trading places between #12 and #15. You’re basically fighting your own shadow. You end up with a mountain of content that earns zero traffic because the pages are eating their own authority.

Diluting authority through redundancy

This gets ugly in niche industries. Imagine a fishing blog with separate guides for cooking Brook Trout, Lake Trout, and Tiger Trout. The keywords look different, sure. But the intent—how to prep a fish—is identical. By splitting the info, you stop any single page from getting the backlinks and engagement it needs to win.

Keyword cannibalization forces your pages into a bidding war. It’s a waste of crawl budget. If Google spends its time indexing 500 redundant neighborhood pages on a real estate site, it might never find the listings that actually make you money. Redundancy is just index bloat.

Precision over volume in automation

Don’t stop automating. Just do it better. You need an SEO-friendly content generator that understands topical clusters. At GenWrite, we don’t just dump pages based on a list. We look at competitors and intent to make sure every post fills a gap rather than overlapping with what’s already there.

It’s about semantic depth, not just swapping keywords in a template. We built GenWrite to look at the existing ecosystem so you aren’t just adding noise to an already crowded result page.

“More is better” is a lie in modern SEO. A site with 50 distinct, high-quality pages will beat one with 5,000 repetitive ones every time. Real growth happens when every page has a specific, non-competing job to do.

Q: Can an automated SEO tool cause my site to be penalized?

Cannibalization ruins your internal structure, but the fear of a total site wipeout is what keeps most webmasters up at night. Let’s be blunt: an SEO automation suite won’t get you banned just because it is code. Google doesn’t have a bias against silicon. It has a bias against uselessness. If you use automation to flood the index with low-effort, repetitive pages, you aren’t a victim of an “AI ban.” You’re a spammer who got caught.

Google’s public stance is clear. They reward quality content regardless of how it’s produced. But does AI-generated content hurt SEO ranking? Only if it’s indistinguishable from the sea of generic noise already clogging the web. When software is used to scale low-quality output, it triggers the helpful content system. This isn’t a manual penalty in the traditional sense. It’s an algorithmic demotion that says your site provides no unique value.

I’ve seen this play out in real time. One site owner replaced their unique meta descriptions with generic, tool-generated text. They watched their traffic drop from 40 clicks a day to zero within weeks. The software didn’t fail; the strategy did. The new text was hollow and failed to meet the search intent that Google’s ranking factors prioritize. By stripping away the human nuance, they signaled to the algorithm that the site was no longer a premium resource.

Another case involved a website targeting “SEO training Houston.” They used a basic bot to spin thousands of words of content. The result? The entire site was de-indexed. They only recovered after deleting the trash and replacing it with high-quality, authoritative writing. This is the reality of over-optimization penalties. If your content exists solely to rank and not to inform, you’re building on sand.

So, can you use automation safely? Yes, if you use it to handle the heavy lifting of research and structure rather than the thinking. This is why using a smart content generator like GenWrite is a different game. It doesn’t just vomit text onto a page. It analyzes what’s actually working for competitors and builds a foundation that respects search engine guidelines.

Automation is a tool, not a shortcut. If you treat it like a “set and forget” money printer, you’ll eventually lose your rankings. But if you use it to produce better content faster, you’ll win. The risk isn’t the software. The risk is your willingness to publish mediocre work.

When the algorithm detects ‘hollow content’

Google doesn’t hate automation because it’s non-human; it hates it because it’s often hollow. The logic behind rankings has shifted. Simply filling a page with words won’t maintain visibility anymore. When a tool relies on a rigid template, it produces text that passes a grammar check but fails the ‘so what?’ test. This usually shows up as keyword shoehorning: the forced insertion of phrases where they simply don’t belong. It’s a clear sign of poor SEO content quality and one of the main reasons modern search filters get triggered.

The structural tax of keyword shoehorning

Most AI writing assistant errors happen when the software prioritizes a density target over the logical flow of information. If a system is told to hit a specific keyword frequency, it’ll jam that phrase into every other paragraph. This creates a clunky, repetitive rhythm that readers immediately flag as spam.

But the damage goes deeper than user experience. Search algorithms now parse semantic clusters and the relationships between ideas. When a keyword is forced into a sentence without the supporting context it requires, it creates a discordance. It’s a signal that the content wasn’t built to answer a question, but to manipulate a ranking. You’ll see this addressed in any smart content generator FAQ, where users wonder why their high-density pages aren’t ranking. The answer is simple: the keywords lack the conceptual glue needed to make them authoritative.
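To see how mechanical a density target really is, here is a minimal Python sketch that counts how often a phrase appears per 100 words. The function name `keyword_density` and the sample text are illustrative assumptions, not part of any particular tool, and no search engine publishes a “safe” threshold. Treat the number as a prompt for a human read-through rather than a score to optimize.

```python
import re


def _tokens(text: str) -> list[str]:
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def keyword_density(text: str, phrase: str) -> float:
    """Return occurrences of `phrase` per 100 words of `text`."""
    words = _tokens(text)
    target = _tokens(phrase)
    if not words or not target:
        return 0.0
    n = len(target)
    # Slide a window of len(target) words across the text and count exact matches.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == target)
    return 100.0 * hits / len(words)


if __name__ == "__main__":
    sample = "Best accounting software is the best accounting software for you"
    print(keyword_density(sample, "accounting software"))
```

A page where one phrase accounts for a large share of the words is worth rereading aloud; if the phrase sounds forced, it probably is.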

Navigating the fact-checking debt

Hollow content also creates what I call fact-checking debt. Low-tier automation generates statements that sound confident but lack data or unique insight. It relies on the average of whatever information is already on the web. This forces editors to spend more time verifying claims than they would have spent writing from scratch.

If you ignore the specifics of how AI content impacts Google rankings, you end up with a pattern of generic rehashing. If your blog just repeats the top three search results in a different order, you aren’t adding value. You’re adding noise. This lack of original data or a unique perspective is what drives high bounce rates. Users land on the page, realize they’ve read it all before, and leave.

Building for substance over scale

At GenWrite, we solve this by baking competitor analysis directly into the generation phase. Instead of just looking at keywords, the system identifies what’s missing from the current conversation. This avoids the ‘rehash’ trap common in bulk generation tools. Automation scales production, but it doesn’t guarantee authority without these guardrails.

The goal isn’t just to publish more. It’s to ensure every piece pays down its fact-checking debt before it goes live. By focusing on actual insight rather than structural templates, you can use a tool like GenWrite to build a library that earns its place in the SERPs. Traffic grows when you stop chasing volume and start answering the user’s intent.

Q: Why does my traffic drop even when I use a smart content generator?

You’ve likely seen the pattern: you launch a series of posts, the initial indexing looks promising, and then the line on your Search Console starts a slow, agonizing crawl downward. It’s frustrating because you’ve checked all the boxes. You consulted a smart content generator FAQ to find the right tech, you optimized the headers, and the keyword density looks perfect. So why isn’t the traffic sticking? The reality is that search engine optimization has moved past simple pattern matching toward a more sophisticated understanding of user satisfaction.

Google’s algorithms are increasingly focused on how long a person stays on your page and whether they found what they were actually looking for. When you rely on basic automation, you often end up with content that satisfies the “keyword” but fails the “intent.” Think about it: if you search for “best accounting software for small business,” are you looking for a generic 500-word essay on what accounting is? No. You want a side-by-side comparison, pricing tiers, and honest pros and cons. If your generator just churns out surface-level definitions, users will bounce within seconds.

That high bounce rate signals to Google that your page isn’t a quality result, which directly hurts your rankings. It doesn’t matter how “smart” the tool is if the output doesn’t respect the reader’s time. This is exactly why we built GenWrite to handle more than just text generation; it’s about creating a narrative that actually holds attention. Automation doesn’t always lead to a crash, but it creates a structural fragility that generic tools often ignore.

Another common culprit for these sudden dips is when your automated pipeline starts creating internal competition. You might find that your new posts are actually stealing authority from your older, established ones. When this happens, you need to fix keyword cannibalization to ensure your most important pages aren’t fighting each other for the same spot in the SERPs. If Google can’t figure out which of your pages is the definitive answer, it might just stop ranking all of them.

And then there’s the “vibe” of the content. Have you looked at the rhythm of the text? If every sentence follows the same repetitive structure, it feels robotic. Bored users don’t convert, they don’t click internal links, and they certainly don’t come back. You have to treat your tools as high-level research assistants, not a “set it and forget it” button. Use the automation to handle the heavy lifting of competitor analysis, but ensure the final product offers a unique perspective that adds something new to the conversation.

The programmatic SEO trap: crawl budget and spider traps

The mismatch between intent and content isn’t the only reason traffic stalls; often, the technical architecture collapses under the weight of its own automation. When you deploy a system to generate thousands of pages based on search filters or layered navigation, you risk creating a spider trap. This happens when your site’s logic allows for an infinite combination of URLs: think color, size, price, and brand filters all stacking on top of one another. To a search engine crawler, this looks like an endless maze of near-identical content that offers zero incremental value.

The mechanics of index bloat

Index bloat is a silent killer for large-scale sites. If an automated system generates a unique URL for every possible filter combination, Googlebot might find itself crawling “Red Suede Sneakers Size 10 Under $50” one minute and “Suede Sneakers Red Under $50 Size 10” the next. These aren’t just redundant; they’re toxic. They eat into your crawl budget, which is the finite number of pages a search engine will fetch on your site within a given timeframe.

When your budget is spent on these low-value permutations, your high-priority, high-converting pages may go months without being re-indexed. It’s a classic automated SEO tool risk, where quantity directly sabotages quality. While tools like GenWrite’s AI blog generator focus on structured, purposeful content creation, haphazard programmatic setups often fail to implement proper canonicalization or robots.txt directives to prevent this sprawl.
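One way to picture canonicalization for filter permutations is to sort the query parameters so that every ordering of the same filters collapses to a single URL. The sketch below uses only Python’s standard `urllib.parse`. The `shop.example` domain and the parameter names are made up for illustration, and a real site would still need to emit the result in a canonical link tag or use it when building internal links.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def canonical_url(url: str) -> str:
    """Collapse filter permutations by sorting query parameters alphabetically."""
    parts = urlsplit(url)
    # parse_qsl keeps each key/value pair; sorting makes the order deterministic.
    params = sorted(parse_qsl(parts.query, keep_blank_values=True))
    # Drop the fragment: it never reaches the server and should not create a new URL.
    return urlunsplit(
        (parts.scheme, parts.netloc.lower(), parts.path, urlencode(params), "")
    )


if __name__ == "__main__":
    a = "https://shop.example/sneakers?color=red&material=suede&size=10&max_price=50"
    b = "https://shop.example/sneakers?material=suede&color=red&max_price=50&size=10"
    print(canonical_url(a) == canonical_url(b))
```

Both example URLs describe the same product listing, so they normalize to the same string and only one of them needs to be indexed.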

Spider traps and infinite loops

A spider trap occurs when the site structure provides no clear “exit” for the crawler. This often happens with calendar widgets or multi-select faceted search. If the software doesn’t hard-cap the number of parameters allowed in a URL, the crawler keeps digging deeper into a hole of its own making. Sites with massive authority can sometimes absorb the waste, but for most, it’s a fast track to burned crawl budget and important pages dropping out of the index.
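A hard cap on parameters can be as simple as counting them. The following is a minimal sketch, assuming a hypothetical limit of two stacked filters (`MAX_FACETS`) before a URL is treated as too deep to expose to crawlers. The right limit depends on your catalog and is not something search engines prescribe.

```python
from urllib.parse import parse_qsl, urlsplit

MAX_FACETS = 2  # hypothetical cap; tune it to your own catalog


def is_crawl_worthy(url: str, max_facets: int = MAX_FACETS) -> bool:
    """Return True when the URL stacks no more than `max_facets` query parameters."""
    facets = parse_qsl(urlsplit(url).query, keep_blank_values=True)
    return len(facets) <= max_facets


if __name__ == "__main__":
    print(is_crawl_worthy("https://shop.example/sneakers?color=red"))
    print(is_crawl_worthy("https://shop.example/sneakers?color=red&size=10&brand=acme&max_price=50"))
```

A template could use a check like this to decide whether a filter link is rendered as a crawlable anchor or whether the page carries a noindex directive.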

| Issue Type | SEO Impact | Technical Root Cause |
|---|---|---|
| Faceted Navigation | Extreme Crawl Waste | Unfiltered parameter combinations |
| Session IDs | Duplicate Content | Unique URLs for every visit |
| Infinite Pagination | Index Bloat | No ‘rel=next/prev’ or canonical logic |

This technical debt is often compounded by AI writing assistant errors where the generated text for these thousands of pages is practically identical. If the “Red Shoes” page and the “Blue Shoes” page share 95% of the same boilerplate text, search engines view them as thin content. This leads to keyword cannibalization, where your own pages fight each other for the same search term, eventually dragging the entire domain’s authority down.
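A rough way to spot shared boilerplate before publishing is to compare page bodies word by word. The sketch below uses `difflib.SequenceMatcher` from the Python standard library. The template sentence is invented for illustration, and the ratio it returns is only a proxy for what a search engine might consider duplicate content, not a reproduction of any ranking signal.

```python
from difflib import SequenceMatcher


def page_similarity(text_a: str, text_b: str) -> float:
    """Return a 0..1 similarity ratio computed over lowercase word sequences."""
    words_a = text_a.lower().split()
    words_b = text_b.lower().split()
    # autojunk=False disables the popularity heuristic so long pages are compared fully.
    return SequenceMatcher(None, words_a, words_b, autojunk=False).ratio()


if __name__ == "__main__":
    template = "Buy {color} shoes online with free shipping and easy returns today"
    red = template.format(color="red")
    blue = template.format(color="blue")
    print(round(page_similarity(red, blue), 3))
```

Pairs that score very close to 1.0 are candidates for merging or for adding page-specific data before they go live.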

Protecting your crawl budget

Fixing these traps requires more than just deleting pages. You have to change how the bot interacts with your site. Implementing “noindex, follow” tags on deep filter combinations is a start, but it doesn’t stop the bot from crawling them in the first place. Google retired the URL Parameters tool in Search Console in 2022, so a better approach is to block low-value filter paths in robots.txt, point filtered variants at a canonical URL, and strictly manage your internal linking structure so bots only see the paths you want them to follow.
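To stop crawling in the first place, the usual lever is a robots.txt `Disallow` rule. As a minimal sketch, the snippet below checks a rule set with Python’s standard `urllib.robotparser` before it is deployed. It assumes, purely for illustration, that filter pages live under a `/filter/` path on a made-up `shop.example` domain. Note that this stdlib parser does plain prefix matching, so it is only suitable for testing simple path rules like this one.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: keep every crawler out of the /filter/ section.
ROBOTS_TXT = """\
User-agent: *
Disallow: /filter/
""".splitlines()


def crawl_allowed(url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the rules above let `user_agent` fetch `url`."""
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT)
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    print(crawl_allowed("https://shop.example/filter/color-red/size-10"))
    print(crawl_allowed("https://shop.example/sneakers/red-suede"))
```

Running a handful of real URLs through a check like this before shipping a rule change helps avoid accidentally blocking the pages that earn revenue.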

And it’s worth noting that more isn’t always better. Sometimes, a site with 500 high-quality, well-linked pages will significantly outperform a programmatic site with 50,000 auto-generated thin pages. Automation should be a scalpel, not a sledgehammer. By focusing on intent-driven content rather than every possible permutation of a database, you preserve your site’s health and ensure that search engines prioritize your most valuable assets.

Model collapse and the degradation of automated facts

Picture a digital marketing agency that decides to scale its output by generating 1,000 articles a month using a basic scraper. Initially, the articles are decent because they’re based on human-written source material. But as the web fills with this synthetic text, the AI starts learning from other AI-generated blogs. Within months, the technical advice in those articles shifts from nuanced expertise to a series of generic, repetitive, and eventually false claims. This isn’t just a technical glitch; it’s a fundamental decay of information.

The feedback loop of synthetic data

This phenomenon, known as model collapse, occurs when AI models are trained on their own synthetic output. It’s like a photocopy of a photocopy; each iteration loses clarity until the original image is unrecognizable. In the world of search, this means the ‘facts’ your AI writing assistant produces start to drift away from reality. The model begins to forget the nuances of the data (the rare but vital edge cases) and focuses only on the most probable, often bland, middle ground.

The stakes for your site’s authority are high. Google ranking factors are increasingly tuned to reward ‘information gain’: the presence of new, unique, or deeply insightful information that doesn’t exist elsewhere. When a site falls into a model collapse loop, it stops providing value. It becomes a mirror reflecting other mirrors, and search engines quickly identify this lack of original signal. If you’re publishing a copy of a copy, you’re losing the very signal that search engines need to justify your position.

Why E-E-A-T fails in a vacuum

Experience and Expertise are impossible to fake when the data source is degraded. A human expert knows the specific friction points of a product, while a collapsing model might simply hallucinate benefits that don’t exist. This fact-checking debt grows until the content is not only useless but potentially toxic to your brand’s reputation. Trustworthiness erodes when automated facts begin to deviate from reality because the training data was poisoned by previous hallucinations.

And yet, the solution isn’t to abandon automation entirely. The key is to ensure your workflow incorporates primary signals: real-world data, competitor analysis, and human oversight. Using a sophisticated platform like GenWrite helps break the loop by grounding the generation process in actual search intent and live data rather than letting it spin in a vacuum of recycled text. You need to inject primary signal back into the workflow to maintain high SEO content quality.

The erosion of trust

Trustworthiness is a major pillar of E-E-A-T. If a user follows a guide on your site and finds the instructions are factually wrong because the AI misinterpreted a previous AI’s summary, that user is gone forever. More importantly, Google’s algorithms are becoming adept at spotting the linguistic fingerprints of low-quality synthetic loops. This doesn’t mean all AI content is bad, but it does mean that unverified, looped content is a liability.

We have to acknowledge that some level of synthetic data is unavoidable in modern training sets. But the difference between a high-performing site and one that gets buried is the intentionality of the content. If you aren’t adding a layer of unique perspective or verified data, you’re just contributing to the noise. And in the current search environment, noise is the fastest way to lose your rankings.

Q: How do I identify if my content is competing against itself?

The failure of factual integrity in automated content often leads to a secondary, structural rot: keyword cannibalization. This happens when your own pages fight for the same spot in search engine results. It confuses algorithms and splits your ranking power across multiple URLs. If your system generates pages without a centralized understanding of what already exists, you’re essentially bidding against yourself for the same user attention.

Analyzing search intent coordinates

Identifying this overlap requires a move toward “Content Coordinates.” Instead of just tracking keywords, I map every page against three specific axes: the primary keyword, the search intent (informational, navigational, or transactional), and the target audience segment. When you plot your existing library this way, the clusters of redundancy become obvious. If two pieces of content share the same three coordinates, they’re redundant.

For example, a site might have three separate blog posts: “Guide to SEO Audits,” “How to Audit Your Site,” and “SEO Audit Best Practices.” If all three target the same informational intent for the same novice audience, they aren’t supporting each other. They’re competing. Tools like GenWrite solve this by using advanced keyword research to ensure every new piece of content occupies a unique coordinate in your site’s ecosystem, preventing the mess that manual or low-quality automated pipelines often create.
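The coordinate idea translates naturally into a grouping exercise. Here is a minimal sketch, assuming each page has been tagged by hand with the three axes described above. The `Page` type, the field names, and the sample titles are illustrative and do not reflect how any specific tool stores this data.

```python
from collections import defaultdict
from typing import NamedTuple


class Page(NamedTuple):
    title: str
    keyword: str   # primary keyword
    intent: str    # informational, navigational, or transactional
    audience: str  # target audience segment


def find_redundant(pages: list[Page]) -> dict[tuple[str, str, str], list[str]]:
    """Group pages by their three coordinates and keep only the crowded groups."""
    groups: dict[tuple[str, str, str], list[str]] = defaultdict(list)
    for page in pages:
        coordinate = (page.keyword.lower(), page.intent, page.audience)
        groups[coordinate].append(page.title)
    return {coord: titles for coord, titles in groups.items() if len(titles) > 1}


if __name__ == "__main__":
    library = [
        Page("Guide to SEO Audits", "seo audit", "informational", "novice"),
        Page("How to Audit Your Site", "SEO audit", "informational", "novice"),
        Page("SEO Audit Best Practices", "seo audit", "informational", "novice"),
        Page("SEO Audit Pricing", "seo audit", "transactional", "agency"),
    ]
    for coord, titles in find_redundant(library).items():
        print(coord, titles)
```

Any coordinate that comes back with more than one title is a candidate for a merge or for re-targeting one of the pages at a different intent or audience.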

Diagnosing the performance dance in search console

The most reliable diagnostic tool for this is the Google Search Console Performance report. Filter your data by a specific query and then switch to the “Pages” tab. If you see multiple URLs for a single query with similar impression counts but volatile, alternating average positions, you’ve found a conflict. Search engine optimization isn’t just about adding more content; it’s about making sure your site structure is logical.

This “ranking dance” suggests Google doesn’t know which page is the definitive authority. You’ll often see one URL drop from position 4 to 15, while another rises from 20 to 6. This internal friction prevents either page from reaching the top three. It’s a symptom often seen in high-volume blog automation setups where the system creates content based on keyword density rather than topical distinctness. Results vary, but a single, authoritative page will usually outperform three mediocre ones.
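The same check can be run over an exported copy of the Performance data. The sketch below assumes the export has already been loaded into a list of dictionaries with one row per query and page pair. The field names (`query`, `page`, `impressions`, `position`) and the 50-impression floor are assumptions for illustration, not a documented export schema.

```python
from collections import defaultdict


def find_cannibalized_queries(rows, min_impressions=50):
    """Return queries for which two or more pages earn meaningful impressions.

    The result maps each query to a dict of {page: average position}.
    """
    pages_by_query = defaultdict(dict)
    for row in rows:
        # Ignore stray pages that barely show up for the query.
        if row["impressions"] >= min_impressions:
            pages_by_query[row["query"]][row["page"]] = row["position"]
    return {q: pages for q, pages in pages_by_query.items() if len(pages) > 1}


if __name__ == "__main__":
    export = [
        {"query": "seo audit", "page": "/guide-to-seo-audits", "impressions": 900, "position": 12.4},
        {"query": "seo audit", "page": "/how-to-audit-your-site", "impressions": 850, "position": 14.9},
        {"query": "seo audit", "page": "/tag/audit", "impressions": 3, "position": 48.0},
        {"query": "crawl budget", "page": "/crawl-budget", "impressions": 400, "position": 5.1},
    ]
    print(find_cannibalized_queries(export))
```

Queries that surface here still need a human look, since two pages ranking for one term is not always a problem.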

Using third-party overlap reports

Professional tools like Ahrefs or Semrush provide an “Organic Keywords” report that makes this visible at scale. By applying a “Multiple URLs” filter, you can see every instance where your domain has more than one page ranking for the same term. It’s a fast way to find the “bloat” in your content strategy.

But don’t assume every overlap is toxic. Sometimes, having two pages rank (perhaps a product page and a blog post) is a win because it occupies more search real estate. The danger lies in “hollow” overlap, where two nearly identical informational articles dilute your link equity. In those cases, the solution isn’t just deletion. It’s often a strategic merge or a hard pivot in the intent of one page to serve a different stage of the buyer’s journey.

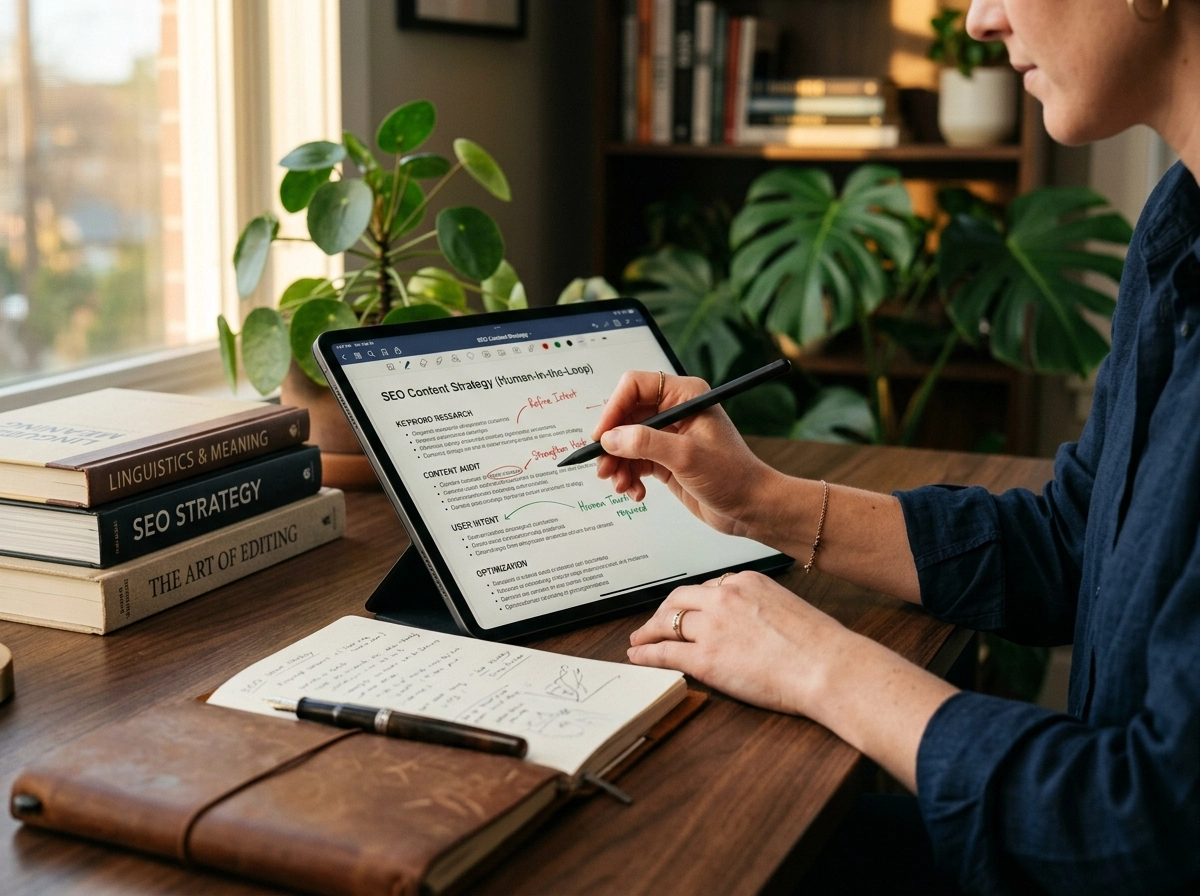

The ‘human-in-the-loop’ strategy for modern AI handlers

Once you’ve mapped out where your content is cannibalizing itself, you’re faced with a choice: do you keep playing whack-a-mole with individual pages, or do you change how you use your tools? The audit you just performed probably revealed a hard truth. When you rely solely on SEO automation software to drive your site’s growth, you aren’t just saving time; you’re delegating your brand’s logic to an algorithm that doesn’t understand your business goals.

I’ve seen plenty of teams fall into the trap of becoming passive observers of their own CMS. They treat their AI blog generator like a microwave: pop in a keyword, wait three minutes, and serve the result. But the reality is that the most successful SEOs I know have shifted their identity. They’ve stopped being content producers and have started acting as content strategists. It’s a move from the factory floor to the architect’s office.

Shifting from production to strategy

Being a ‘human-in-the-loop’ isn’t about doing the manual labor; it’s about providing the high-level intent that the machine lacks. You don’t need to type every word, but you do need to define the boundaries. For example, while a tool like GenWrite can handle the heavy lifting of competitor analysis and bulk generation, I always recommend that the human handler owns the ‘hook’ and the ‘so what.’

Why does this matter? Because search engines are getting better at spotting content that’s technically perfect but intellectually empty. If your software identifies a long-tail keyword but doesn’t have the context of your latest product update, the content will feel dated. You’re the one who bridges that gap. You’re the one who ensures SEO content quality isn’t just about keyword density, but about actual utility.

The expert-led workflow

So, what does this look like in practice? It usually involves a three-step dance. First, you use your automation tools to build the skeleton: the structure, the keyword placement, and the basic research. Second, you step in to inject the two ‘E’s in E-E-A-T (Experience and Expertise). This might mean rewriting an introduction to include a personal anecdote or a specific case study that a crawler could never find. Third, you act as the final editor, verifying the claims before anything goes live.

Tackling the blog automation questions

I often hear common blog automation questions centered around how much human intervention is ‘enough.’ There’s no magic percentage, but a good rule of thumb is that if you can’t summarize the unique value of a post in one sentence, it’s not ready. Use the software to handle the ‘fact-checking debt’ by letting it pull data, but spend your time verifying those claims and adding your perspective. This doesn’t always hold for every single niche, but for high-stakes industries, it’s the only way to survive an algorithm update.

You’re essentially managing a fleet of digital writers. You wouldn’t hire five freelancers and never look at their work, right? The same logic applies here. By positioning yourself as the final editor, you ensure that your site doesn’t just rank for a week, but builds the kind of authority that creates long-term traffic. It’s about leveraging the speed of AI while maintaining the discernment of a human expert.

Q: Is programmatic SEO worth the risk in 2025?

Programmatic SEO in 2025 isn’t a gamble on technology. It’s a gamble on your ability to manage technical debt. If you treat an automated SEO tool as a ‘set and forget’ machine, you’re essentially taking out a high-interest loan against your domain authority. You get the traffic now, but you’ll pay for it in manual cleanup later. The math rarely favors the lazy.

Take the case of a startup that generated 400 pages using a basic formula. Within months, those pages accounted for nearly half their traffic. It looked like a massive win. But as search engines refined their understanding of value, the ‘thin content’ flags started appearing. The team spent the next quarter merging pages, adding custom data, and deleting duplicates. The cost of that manual labor exceeded the initial revenue those pages generated. They didn’t save time; they just deferred the work.

So, is it worth it? Yes, but only if you move past the ‘bulk’ mindset.

The high cost of cheap automation

Most people fail because they use a smart content generator to fill gaps rather than provide value. They create thousands of ‘X for Y’ pages that offer nothing but recycled definitions. That’s a toxic pattern. Google doesn’t hate automation; it hates redundancy. When you scale, you scale your mistakes. If your template has a minor logic error, you don’t have one mistake; you have five thousand.

This is where the ‘content debt’ becomes a literal business liability. You end up hiring expensive consultants to undo the work of a cheap tool. It’s a classic trap of false economy. And the risks of an automated SEO tool aren’t just about traffic drops. They’re about brand reputation. If a potential customer lands on a ‘hollow’ page, they don’t just leave; they lose trust in your entire product.

Building sustainable programmatic pipelines

The shift in 2025 is toward high-fidelity automation. This means using an AI blog generator that doesn’t just spin text but actually handles deep competitor analysis and keyword research. You need a system that understands intent, not just string matching. The goal is to produce pages that feel bespoke even if they were generated at scale.

I’ve seen companies succeed when they treat programmatic pages as ‘vessels’ for unique data. If you have proprietary data or unique insights, automation is the only way to deploy that at scale. But if you’re just scraping and rephrasing, you’re building on sand. Modern search engine optimization requires a level of oversight that most ‘growth hackers’ ignore.

Identifying the tipping point

You should ask yourself if you have the resources to audit what you build. If you can’t review 10% of your generated output for accuracy and tone, don’t generate it. The reality is that programmatic SEO remains the most powerful way to capture long-tail traffic. But it’s no longer a shortcut. It’s an engineering challenge.
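The 10% review rule is easy to operationalize. As a minimal sketch, the helper below draws a random review sample from a batch of generated URLs. The function name `audit_sample`, the `shop.example` URLs, and the optional `seed` argument (useful for reproducible audits) are assumptions made for this example.

```python
import random


def audit_sample(urls, percent=10, seed=None):
    """Pick a random `percent` of `urls` (rounded up, at least one) for human review."""
    urls = list(urls)
    if not urls:
        return []
    # Integer ceiling division avoids floating-point rounding surprises.
    k = max(1, (len(urls) * percent + 99) // 100)
    return random.Random(seed).sample(urls, min(k, len(urls)))


if __name__ == "__main__":
    batch = [f"https://shop.example/guides/page-{i}" for i in range(400)]
    to_review = audit_sample(batch, percent=10, seed=7)
    print(len(to_review))
```

If the team cannot realistically read the sample this produces, that is a sign the batch is too large to publish responsibly.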

Use tools that prioritize quality and structure over raw word count. If you don’t, you aren’t building an asset. You’re just generating noise that you’ll eventually have to pay someone to delete. The risk is high, but the debt of doing nothing is often worse.

Optimizing for the ‘Who, How, and Why’ framework

Recent performance data shows that nearly 70% of top-ranking informational pages now feature explicit author profiles and transparency disclosures. This shift marks a departure from the “anonymous authority” era of the early 2010s. Search engines aren’t just looking for relevant keywords anymore; they’re looking for the fingerprints of accountability. This brings us to the core of modern SEO content quality: the Who, How, and Why framework. If your automation strategy ignores these three pillars, you’re essentially building on sand.

Establishing the ‘who’ through accountability

The “who” is perhaps the most misunderstood element of recent search updates. It’s a common misconception that AI content must be hidden or masked to rank. In reality, transparency is often your best defense against being flagged as spam. When I see a publisher add a clear author byline alongside a disclosure that an AI blog generator was used for data aggregation, I see a site that values its reputation. This human oversight provides the necessary E-E-A-T signals that algorithms crave. But it’s not just about a name at the top of a page. You need to link that name to a real footprint: social profiles, a portfolio, or an “About Us” page that proves the person behind the screen understands the subject matter.

Defining the ‘how’ of automated production

Google’s guidelines aren’t anti-automation; they’re anti-low-effort. The “how” asks if your content was created to be helpful or simply to fill space. If you’re using a tool like GenWrite, the “how” involves sophisticated keyword research and real-time data integration to answer specific blog automation questions. It’s the difference between a bot guessing what people want and a system that maps out the existing information gap. But you should still be honest about the process. Mentioning that your site uses automation for link building or image addition doesn’t hurt your ranking. It actually clarifies your intent. And it’s worth noting that results aren’t always immediate, as search engines often need time to re-evaluate revised bylines and updated transparency statements.

The ‘why’ determines long-term survival

The final question is the most brutal: why does this content exist? If the answer is solely “to rank for these five keywords,” you’re in trouble. High-value sites often find themselves pruning up to 20% of their legacy AI pages because they realize the “why” was purely mechanical and offered no unique value to the user. To fix this, your automated content needs to offer something new: a unique angle, a better comparison table, or a more recent dataset that reflects current Google ranking factors. So, before you hit publish on a bulk run, ask if the page actually solves a problem or if it’s just noise. If it doesn’t add value, even the most advanced tools won’t save it from the next core update.

Transparency is only the first layer

Transparency is just the first layer of defense. If you’ve established who is behind your content, you still have to face the harder reality: what they’re actually saying needs to matter. The industry’s long-standing obsession with content velocity is hitting a brick wall, and it’s happening faster than most marketing teams realize. If you’re still measuring success by the sheer number of URLs you push live each month, you’re playing a game that ended years ago.

Modern search engines don’t just reward volume; they penalize the noise that volume often creates. When you rely heavily on automated SEO software without a rigorous quality-first discipline, you risk falling into the trap of over-optimization. This isn’t just about stuffing keywords into headers. It’s about creating a footprint that looks more like a bot’s frantic attempt to rank than a helpful resource for a human.

Moving from velocity to value

So, how do you fix a site that’s already started to see the tell-tale dip of over-optimization penalties? It starts with a shift in your primary metrics. Stop looking at the publishing calendar and start looking at user engagement signals. If your average time on page is plummeting while your page count is rising, your automation strategy is likely diluting your authority.

| Metric | The Old Way (Quantity) | The New Way (Quality) |
|---|---|---|
| Success Metric | Monthly page output | Average time on page |
| Keyword Goal | Total keywords indexed | Conversion rate per landing page |
| Content Health | Number of backlinks | Depth of original insight |

You need to audit your existing library for toxic patterns. This usually involves content pruning: deleting or merging thin, repetitive pages that compete for the same intent. One marketing team I worked with recently saw a 30% jump in organic traffic just by deleting 40% of their lower-performing, automated pages. By removing the weight of those ‘hollow’ pages, the site’s overall authority was finally able to breathe.
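One way to start that audit is a simple filter over exported page metrics. The sketch below is a minimal illustration, not a definitive method: the field names (`url`, `monthly_clicks`, `avg_seconds_on_page`) and both thresholds are assumptions made up for this example, so adjust them to whatever your analytics export actually contains.

```python
def pruning_candidates(pages, max_clicks=5, max_seconds=20):
    """Return URLs that earn almost no clicks AND hold readers only briefly.

    Both thresholds are illustrative assumptions, not recommended values.
    """
    return [
        page["url"]
        for page in pages
        if page["monthly_clicks"] <= max_clicks
        and page["avg_seconds_on_page"] <= max_seconds
    ]


# Hypothetical sample data standing in for an analytics export.
sample_pages = [
    {"url": "/blog/how-to-a", "monthly_clicks": 2, "avg_seconds_on_page": 8},
    {"url": "/blog/how-to-b", "monthly_clicks": 1, "avg_seconds_on_page": 95},
    {"url": "/blog/original-study", "monthly_clicks": 340, "avg_seconds_on_page": 140},
    {"url": "/blog/how-to-c", "monthly_clicks": 0, "avg_seconds_on_page": 0},
]

candidates = pruning_candidates(sample_pages)
print(candidates)  # ['/blog/how-to-a', '/blog/how-to-c']
```

Treat the output as a review list rather than a delete list. A page like `/blog/how-to-b`, which gets few clicks but holds attention, may be worth merging or improving instead of removing.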

The role of intentional automation

This doesn’t mean you should abandon automation altogether. That’s a common misconception. The trick is using tools that prioritize the human reader over the search crawler. Using a sophisticated AI blog generator shouldn’t be about flooding the zone; it’s about precision-guided relevance. The software should handle the heavy lifting of research and structure, but you (or your editors) must provide the final layer of context and nuance that an algorithm can’t replicate.

And let’s be honest: the stakes are high. If you ignore these patterns now, you aren’t just risking a temporary drop in rankings. You’re building a foundation of technical debt that will take years to pay off. Every low-quality page you add today is a page you’ll have to audit, rewrite, or delete tomorrow.

What happens next? The most successful sites in the next few years won’t be the ones with the most pages. They’ll be the ones that used automation to scale their best ideas, not their worst impulses. The era of the content factory is effectively over. Now, we’re in the era of the authority architect. Are you building something that will stand, or just adding to the clutter?

If you’re tired of cleaning up low-quality content, GenWrite handles the research and optimization so you can focus on the human expertise that actually ranks.

Common Questions About Automated SEO

Can an automated SEO tool cause my site to be penalized?

Google doesn’t penalize AI itself, but it does target low-effort, manipulative, or redundant content. If your tool churns out thousands of thin pages that don’t help the user, you’ll likely see a drop in rankings because that’s exactly what SpamBrain is designed to catch.

Why does my traffic drop even when I use a smart content generator?

It’s usually a mismatch between keywords and search intent. Just because a tool hits your target keyword doesn’t mean it actually answers the user’s question, which leads to high bounce rates and tells Google your page isn’t the right result.

How do I identify if my content is competing against itself?

Check the Performance report in Search Console for pages ranking for the same primary keywords. If you see multiple URLs fighting for the same term, you’ve got a cannibalization issue that’s diluting your authority.
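If you export query and page data, a rough version of this check can be scripted. The following is a hedged sketch that assumes each row is a dictionary with `query`, `page`, and `impressions` keys; those names, the `min_impressions` cutoff, and the sample rows are all hypothetical and will need to match your own export.

```python
from collections import defaultdict


def find_cannibalized_queries(rows, min_impressions=10):
    """Map each query to the URLs competing for it, strongest first.

    A query is flagged only when two or more URLs each reach
    min_impressions (an illustrative cutoff, not a standard).
    """
    totals = defaultdict(lambda: defaultdict(int))
    for row in rows:
        query = row["query"].strip().lower()  # normalize case and spacing
        totals[query][row["page"]] += row["impressions"]

    flagged = {}
    for query, pages in totals.items():
        competing = {url: n for url, n in pages.items() if n >= min_impressions}
        if len(competing) > 1:
            flagged[query] = sorted(competing, key=competing.get, reverse=True)
    return flagged


# Hypothetical rows standing in for a query/page export.
sample_rows = [
    {"query": "seo automation", "page": "/blog/seo-automation-guide", "impressions": 900},
    {"query": "seo automation", "page": "/blog/what-is-seo-automation", "impressions": 400},
    {"query": "SEO automation ", "page": "/blog/what-is-seo-automation", "impressions": 50},
    {"query": "content pruning", "page": "/blog/content-pruning", "impressions": 300},
    {"query": "content pruning", "page": "/blog/old-pruning-post", "impressions": 3},
]

result = find_cannibalized_queries(sample_rows)
print(result)
# {'seo automation': ['/blog/seo-automation-guide', '/blog/what-is-seo-automation']}
```

The cutoff keeps stray impressions from flagging a query, which is why "content pruning" is not reported here. Whether two flagged URLs truly serve the same intent still needs a human read.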

Is programmatic SEO worth the risk in 2025?

Honestly, it’s only worth it if you’re willing to pay the ‘content debt’ later. Most sites end up spending more time fixing penalized, low-quality pages than they saved by automating the initial creation.