One simple shift that made our AI SEO writing assistant 10x more effective

The shift from generator to research assistant

Stop asking AI to write your blog posts. It’s a weird thing for a tool builder to say, but the ‘magic button’ era of content is dead. If you’re still using AI as a ghostwriter for 1,000-word drafts, your rankings are probably flatlining. The real shift, the one that actually works, is moving from generator to research assistant.

Many researchers have already made this pivot. They don’t just dump text onto a page; they use these tools for literature reviews and data synthesis. Think of it like having a high-speed research lab at your disposal. When companies like Rivian use models to get employees up to speed on complex technical topics, they aren’t looking for a final draft. They want the ‘what’ and the ‘why’ so they can build the ‘how’ themselves.

The architect vs. the ghostwriter

When you use an AI SEO writing assistant as a structural architect, the quality of your output changes overnight. Instead of a flat, generic article, you get a piece that addresses actual pain points. This is where most SEO blog writing software fails. It misses the intent because it’s too focused on the word count.

I’ve seen agencies like MERGE ditch raw text generation. They use AI to summarize client meetings or find holes in their own logic. For content writing, this means asking the AI to analyze the top 10 competitors, find what they missed, and suggest a unique angle.

Building a better SEO workflow

To improve content quality, your process needs to involve the AI at the skeletal level. Start with keyword-driven blog writing that identifies the underlying problem the user is trying to solve. At GenWrite, we’ve found that the best results come when the tool handles the heavy lifting of competitor analysis while the human provides the creative spark.

Here are a few SEO workflow tips to make this shift:

- Use the AI to find conflicting viewpoints in a niche to add depth.

- Ask for a content structure and internal linking plan before a single sentence is written.

- Request a summary of “People Also Ask” questions to make sure you’re covering the full scope of a topic.

This is more than just a speed play. It’s about surviving an internet flooded with AI content SaaS garbage. Standing out requires humanizing AI content through better research.

Why the research-first approach wins

The stakes are high. If you ignore this shift, you’re just contributing to the noise. But if you use an AI SEO content generator as your primary researcher, you can scale without sacrificing authority. Our automated on-page SEO writing process is built on this very philosophy. We don’t just generate; we analyze.

Check out our pricing to see how we help teams transition from manual drudgery to high-level strategy. You can also learn more about how we handle SEO for blogs. Let the AI handle the data so you can actually be the expert.

Why the ‘all-at-once’ prompt is killing your rankings

Treat AI like a vending machine and you’ll get garbage. The jump from research to writing breaks when you demand a full draft in one go. This “all-at-once” approach triggers a logical decay that Google sees a mile away.

The mathematical trap of structural hallucination

LLMs prioritize patterns over truth. When you force length, the model enters a state of structural hallucination. It writes beautiful, fluent prose that’s factually empty. It chooses rhythm over reality every time.

Confidence is just a mask. AI is 34% more likely to use words like “definitely” when it’s making things up. This bias tricks you into posting trash that undermines your content’s transparency and authority.

The “lost in the middle” phenomenon

Long prompts have a specific technical flaw. Models track the start and end of your instructions well enough, but they lose track of whatever sits in the middle, and long-form generation makes it worse.

This leads to circular logic and filler. Your middle sections turn into a graveyard of generic advice. You won’t craft content that ranks if 40% of the piece is fluff. It’s not always a total crash, but it makes the draft nearly impossible to save.

The hallucination cascade

Errors don’t stay in one place. They compound. If the AI messes up in paragraph two, it uses that error as the foundation for paragraph three. It treats its own fiction as fact.

This is a ripple effect of nonsense. By the end, the AI isn’t even following your instructions—it’s just following its own mistakes. Long-form generation is basically a hallucination tax where confidence hides a slow rot in logic.

Breaking the cycle with modularity

Stop asking for whole blogs. Break the work into segments. We built GenWrite to handle SEO optimization this way to avoid the usual AI drift.

Modular generation keeps the AI focused on specific data. It stops the model from getting buried under its own previous text. When you control the context window, you control the quality.

High-performance writing needs steering. If you aren’t fixing the path every few hundred words, you aren’t writing. You’re just making noise that’ll never hit page one.

Ranking in answer engines, the goal of Answer Engine Optimization (AEO), requires a level of precision that long prompts can’t hit. Answer engines want direct, accurate data. Drafts full of hallucinations get ignored by modern algorithms.

Efficiency isn’t speed. It’s accuracy. A fast draft that takes hours to fact-check is a failure. Modular creation is the only way to scale without burning your reputation.

Setting up your modular prompt architecture

Logic drift isn’t a model failure. It’s an instruction failure. When you ask an LLM to research, outline, and write 2,000 words in a single shot, you’re asking a high-speed engine to run without a transmission. It’ll move, sure, but it’s going to grind.

We solved this by moving to a state machine approach. This modular architecture treats writing as a sequence of isolated, predictable states. Each step requires specific inputs and produces one high-quality output before the next phase starts.

The intent and research layer

Everything starts with human intent—the “why.” Don’t jump to drafting. Use the first module to analyze search intent and technical requirements. You’re looking for the reader’s structural expectations, not just a list of keywords.

Using AI SEO tools here changes the game. At GenWrite, we start with competitor analysis to see what top-ranking pages actually do. This data acts as a constraint. It’s much harder for an AI to hallucinate when it’s boxed in by real-world data.

Constructing the skeleton

Once research is solid, move to the skeleton. But don’t just ask for headers. I use AI writing prompts that force the model to justify every H3 and H4 based on the research. This is where you catch logic gaps. If the outline is thin, don’t draft. Loop back to research. It’s a binary check: either it meets the threshold or it doesn’t. This looping logic separates professional automation from generic bulk generation.

Section-by-section execution

People fail at drafting because they’re too ambitious. AI performs best in 300- to 500-word bursts. I break the outline into discrete chunks and feed them in one by one. Each prompt includes the section heading, research data, and tone. I also remind the model of the previous section to maintain flow. It’s not perfect, and transitions might need a manual nudge, but it kills the rambling effect.
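The per-section prompt described above can be sketched as a small helper. This is a minimal illustration, not GenWrite’s actual prompt format: the function name `build_section_prompt`, its parameters, and the wording of each instruction are assumptions made for the example.

```python
def build_section_prompt(heading, research_notes, tone,
                         previous_summary="", max_words=500):
    """Assemble one self-contained prompt for a single outline section."""
    lines = [
        f"Write the section titled: {heading}",
        f"Tone: {tone}",
        f"Length: at most {max_words} words.",
        "Use only the research notes below; do not add unverified facts.",
        "Research notes:",
    ]
    lines += [f"- {note}" for note in research_notes]
    # Remind the model what came before so the transition stays coherent.
    if previous_summary:
        lines.append(f"The previous section covered: {previous_summary}")
        lines.append("Open with a transition that connects to it.")
    return "\n".join(lines)


# Hypothetical inputs, for illustration only.
prompt = build_section_prompt(
    heading="Why pricing transparency affects churn",
    research_notes=["Top three pages skip cancellation-flow pricing."],
    tone="technical and authoritative",
    previous_summary="onboarding flows and feedback loops",
)
print(prompt)
```

Keeping the prompt builder this small makes it easy to see exactly what context each section receives, which is the point of controlling the context window.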

The final human-in-the-loop audit

Finally, the draft hits the refinement state. This is the human-in-the-loop (HITL) Canvas model. We layer human expertise over the AI foundation, checking for technical accuracy and brand voice. GenWrite automates the links and images, but the final “vibe check” is ours. By this point, the heavy lifting is done. We aren’t fixing broken logic; we’re just polishing a precision-built asset. It’s more rigorous, but the rankings usually prove it’s worth it.
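The four stages above (research, skeleton, section drafting, human refinement) can be sketched as a tiny state machine, including the loop back to research when the outline is thin. This is an illustrative sketch under stated assumptions: the state names, the `outline_is_solid` gate, and the threshold of three justified headers are hypothetical, not GenWrite internals.

```python
from enum import Enum, auto


class State(Enum):
    RESEARCH = auto()
    OUTLINE = auto()
    DRAFT = auto()
    REFINE = auto()
    DONE = auto()


def outline_is_solid(outline, min_justified=3):
    """Binary gate: enough headers must carry a research-based justification.

    The threshold is a hypothetical value chosen for illustration.
    """
    justified = [h for h in outline if h.get("justification")]
    return len(justified) >= min_justified


def next_state(state, outline):
    """Return the state that follows `state`, looping back if the outline is thin."""
    if state is State.RESEARCH:
        return State.OUTLINE
    if state is State.OUTLINE:
        return State.DRAFT if outline_is_solid(outline) else State.RESEARCH
    if state is State.DRAFT:
        return State.REFINE
    return State.DONE


# Hypothetical outlines, for illustration only.
thin = [{"heading": "What is churn?", "justification": ""}]
solid = [
    {"heading": "What is churn?", "justification": "asked in PAA"},
    {"heading": "Benchmarks by stage", "justification": "follow-up PAA query"},
    {"heading": "Pricing transparency", "justification": "SERP gap"},
]
print(next_state(State.OUTLINE, thin))
print(next_state(State.OUTLINE, solid))
```

Each transition depends only on the current state and one explicit check, so a failed gate sends the work back to research instead of letting a weak outline reach the drafting stage.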

Extracting the expertise before the first word is written

Imagine you are staring at a search engine results page (SERP) for a high-value keyword like “SaaS churn reduction.” The top three articles all cover customer feedback loops and onboarding flows with predictable precision. But as you scroll, you realize none of them mention the specific psychological friction of pricing transparency during the cancellation process. This missing piece is your semantic “white space,” the gap where competitors have failed to satisfy a deep, underlying user intent. Identifying these voids is how you jump from being another voice in the crowd to the definitive source on a topic.

Mining the People Also Ask goldmine

The “People Also Ask” (PAA) section is essentially a real-time map of human curiosity. Instead of treating these as a checklist of subheadings, we use AI to analyze the recursive nature of these queries. If a user asks about “how to reduce churn,” and the PAA follows up with “is high churn normal for early-stage startups,” the intent has shifted from tactical to benchmarking. By feeding these sequences into a research agent, you can elevate your content creation by addressing the secondary anxieties that searchers don’t even realize they have yet.

At GenWrite, we don’t just scrape these questions; we look for the patterns in how search engines cluster them. If the PAA questions for your target keyword consistently lean toward technical implementation rather than strategic overviews, your outline should reflect that weight. It’s about building a blueprint that mirrors the actual path a user takes through a topic, rather than a generic top-down summary that ignores the user’s immediate needs.

Identifying the semantic white space

Content gap analysis shouldn’t be a simple comparison of word counts or keyword density. It’s an exercise in finding what’s been left unsaid. You can use specific AI SEO prompts to compare the top ten ranking pages and ask the model to identify themes that are missing or underdeveloped across the entire set. This process often reveals that while everyone is talking about the “what” and the “how,” almost no one is explaining the “who” or the “when.”
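Once themes have been extracted from each ranking page (by a model or by hand), the comparison itself is simple counting. The sketch below assumes that extraction has already happened; the function name, the coverage threshold, and the sample data are all hypothetical.

```python
from collections import Counter


def find_underdeveloped_themes(pages, candidate_themes, max_coverage=0.2):
    """Return candidate themes that few (or none) of the ranking pages cover.

    `pages` maps a page label to the set of themes found on that page.
    `max_coverage` is an illustrative cutoff, not an established benchmark.
    """
    if not pages:
        return sorted(candidate_themes)
    counts = Counter(theme for themes in pages.values() for theme in themes)
    total = len(pages)
    return sorted(
        theme for theme in candidate_themes
        if counts[theme] / total <= max_coverage
    )


# Hypothetical theme sets for three competitor pages.
pages = {
    "page-a": {"feedback loops", "onboarding"},
    "page-b": {"feedback loops", "onboarding"},
    "page-c": {"onboarding"},
}
candidates = ["feedback loops", "onboarding", "pricing transparency"]
gaps = find_underdeveloped_themes(pages, candidates)
print(gaps)
```

With this sample data, the only theme no page covers is pricing transparency, which matches the white-space example from the SERP walkthrough.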

Building an outline this way ensures your content editing for SEO happens before the draft even exists. You aren’t trying to wedge keywords into a finished piece; you’re designing the architecture to support those keywords naturally. This proactive approach is the reason why ranking faster with AI is possible. It’s not just about speed; it’s about the precision of the initial structure. When the skeleton of your article is built on actual search data and identified gaps, the resulting draft feels like a direct answer to a complex problem, which is exactly what search engines are looking to reward.

Designing the hierarchical outline (the 10x multiplier)

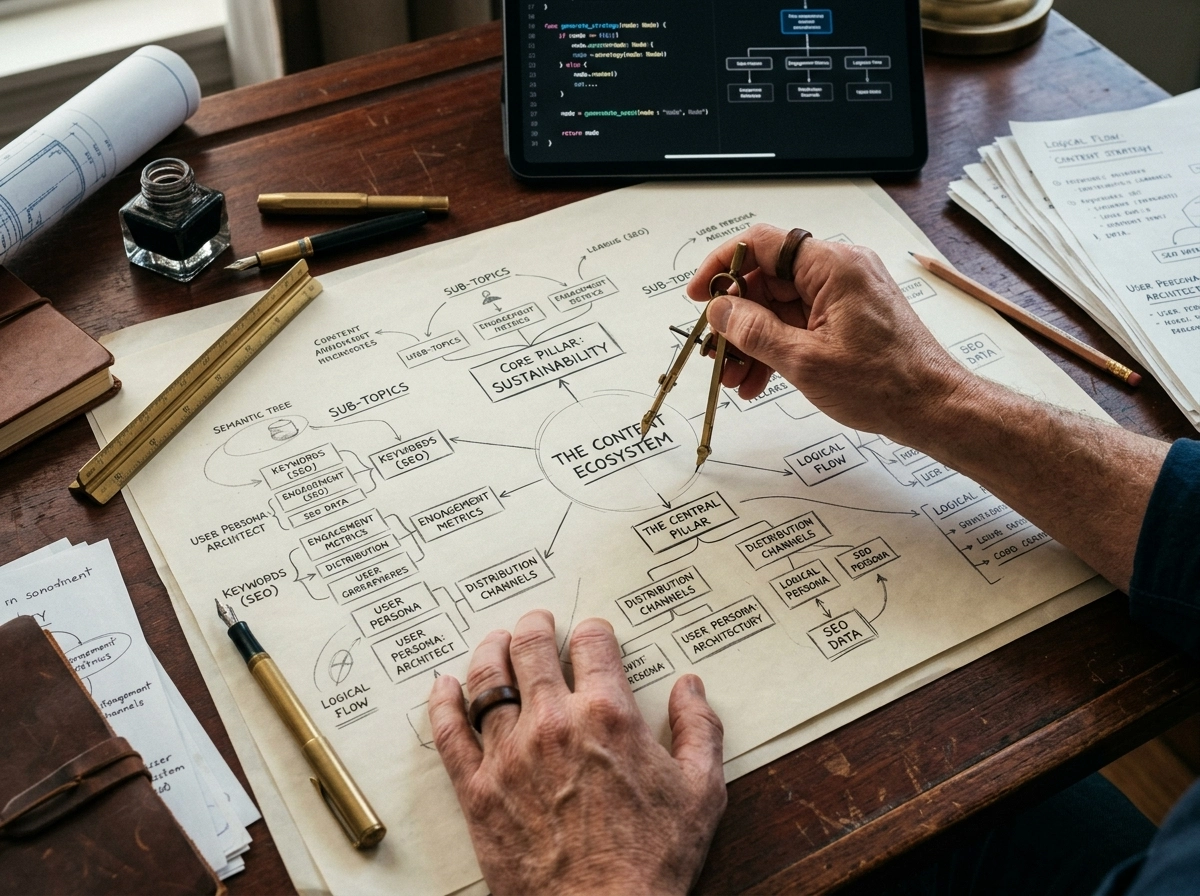

Research indicates that structured data for blogs, when organized through strict semantic hierarchies, improves AI retrieval accuracy by nearly 60%. This isn’t just about making things look tidy for a human reader. It’s about building a machine-readable roadmap that an AI SEO writing assistant can navigate without losing the thread of the argument.

Once you’ve extracted the core expertise from SERP gaps, you can’t just dump it into a list. I’ve seen too many teams treat an outline as a suggestion rather than a logic gate. When we built GenWrite, we realized that the outline is the 10x multiplier because it dictates how the LLM allocates its reasoning tokens. If your structure is flat, the output becomes repetitive and shallow. You’ll end up with three paragraphs that all say the same thing in slightly different ways.

Mapping entities to semantic headers

Each H2 and H3 should map to a specific entity or concept. Avoid vague catch-all phrases that provide no context. Instead of “Benefits,” use “Economic advantages of automated content creation.” This clarity allows the model to anchor its research to a specific knowledge node.

This is where keyword optimization becomes a structural decision rather than a cosmetic one. By embedding semantic variants directly into your headers, you signal to both Google and generative engines exactly what information lives in each block. It’s the difference between a sprawling essay and a precise database.

Aligning with retrieval-augmented generation

Modern search is moving toward direct answers. Question-based headings like “How does a hierarchical outline improve LLM output?” align perfectly with how search engines extract answers for featured snippets. It’s essentially pre-formatting your content for content automation that values speed and accuracy.

Don’t ignore the depth. A good outline should go three levels deep. If you’re only using H2s, you’re leaving too much room for the AI to hallucinate or drift off-topic. We’ve found that forcing a sub-structure (H3s and H4s) keeps the writing assistant on a tight leash, ensuring every paragraph earns its place. It prevents the model from glossing over the technical details that actually matter to your audience.
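A quick way to enforce the three-level rule is to measure the depth of each H2 subtree before drafting. The sketch below assumes the outline is stored as nested dictionaries with `title` and `children` keys; that representation and the function names are illustrative choices, not a required format.

```python
def outline_depth(node):
    """Return how many heading levels exist at or below this node."""
    children = node.get("children", [])
    if not children:
        return 1
    return 1 + max(outline_depth(child) for child in children)


def shallow_sections(h2_sections, required_depth=3):
    """List the H2 titles whose subtree is shallower than `required_depth`."""
    return [s["title"] for s in h2_sections if outline_depth(s) < required_depth]


# Hypothetical outline: the first H2 reaches H4, the second stops at H3.
outline = [
    {
        "title": "Economic advantages of automated content creation",
        "children": [
            {"title": "Cost per article",
             "children": [{"title": "Editing hours saved"}]},
        ],
    },
    {"title": "Benefits", "children": [{"title": "Speed"}]},
]
print(shallow_sections(outline))
```

Any title this returns is a section where the model would have to invent its own sub-structure, which is where drift tends to start.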

The trade-off of rigid structures

There’s a risk here: too much rigidity can make the prose feel robotic. I’ve noticed that when outlines are overly prescriptive, the transitions can suffer. You have to balance strict hierarchy with enough “thematic breathing room” for the AI to connect the dots naturally. It’s a delicate calibration.

If you’re worried about the quality of the resulting text, you can always run a check using an AI content detector to see if the structure is producing overly predictable patterns. The goal is a high-performing architecture that still feels like it was written by someone with a pulse.

Drafting by section to avoid the ‘AI-ism’ trap

Once you’ve got that hierarchical outline, the temptation is to hit ‘generate’ and walk away. Don’t do it. If you feed the whole structure into one prompt, the model loses the plot by the third heading. It starts repeating itself, or worse, it gets lazy and skips the nuance we worked so hard to map out.

I’ve found that drafting in discrete blocks is the only way to keep the logic tight. Think of it like building a house. You don’t pour the foundation, frame the walls, and shingle the roof in one motion. You finish one phase, check the level, then move on. When you use specific AI writing prompts for each H3, you maintain total control over the narrative arc.

This modular approach lets you apply a “brand voice checklist” to every section. Is this part too dry? Does it sound like a textbook? If I’m using an AI blog generator like GenWrite, I’m looking for that sweet spot where automation meets human-level insight. You can prompt the model to be “witty but direct” for the intro, then switch to “technical and authoritative” for the data-heavy middle sections.

But here’s the real secret to improve content quality: the “Un-AI” edit. Models love flowery words that act as tells. They’re obsessed with formal phrasing that no human actually uses in a Slack message or a casual conversation. By drafting section by section, you can catch these patterns before they pile up. It’s much easier to rewrite a single awkward transition than to overhaul a 2,000-word draft that feels like it was written by a robot trying to pass a Turing test.

You’ll also notice that integrating AI into SEO content writing goes faster when you work this way. It sounds counterintuitive, right? More prompts should mean more time. But because each block is shorter, the model is less likely to hallucinate. You spend less time fact-checking garbage and more time refining the expert-level hooks that actually keep readers on the page.

What happens if you ignore this? You end up with “gray” content. It’s technically correct but completely soulless. It won’t rank because Google’s helpful content systems can sniff out low-effort bulk generation from a mile away. If you’re looking for the best AI SEO writer experience, you have to be the editor-in-chief, not just the person who presses the button.

Honestly, the friction of moving block by block is a feature, not a bug. It forces you to actually read what’s being produced. You might find that section four contradicts section two. If you’d generated the whole thing at once, you might miss that logic gap until a reader points it out. While expensive SEO content writing software often promises one-click miracles, the reality is that high-end quality requires a bit more hands-on modularity. Results vary based on the topic, but the control you gain is worth the extra steps.

Integrating semantic keywords without the fluff

Once you’ve broken your draft into discrete blocks, the next hurdle is weaving in the semantic signals that tell search engines you actually know what you’re talking about. It’s no longer enough to sprinkle a primary phrase three times and call it a day. Modern search intent relies on entity relationships: the way specific concepts like ‘latent semantic indexing’ connect to ‘search intent’ or ‘information gain.’ If your AI output misses these connections, it’ll feel hollow to both readers and algorithms alike.

To effectively optimize AI writing, you have to treat your page like a miniature knowledge graph. This means identifying the secondary and tertiary entities that naturally cluster around your main topic. If you’re writing about ‘renewable energy,’ your content shouldn’t just repeat that phrase; it needs to mention ‘photovoltaic cells,’ ‘grid parity,’ and ‘intermittency.’ These aren’t just keywords. They’re the proof of expertise that search engines use to categorize your depth.
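A rough coverage check for the renewable energy example might look like the sketch below. It uses plain case-insensitive substring matching, which is an assumption made for simplicity; it will miss plurals, synonyms, and paraphrases, so treat the result as a prompt for review rather than a score.

```python
def missing_entities(draft, entities):
    """Return the entities that never appear in the draft (case-insensitive)."""
    lowered = draft.lower()
    return [entity for entity in entities if entity.lower() not in lowered]


# Entities taken from the renewable energy example; the draft is hypothetical.
entities = ["photovoltaic cells", "grid parity", "intermittency"]
draft = "Solar adoption depends on grid parity and better photovoltaic cells."
print(missing_entities(draft, entities))
```

Running this per section, rather than on the whole article, shows which block should carry each missing concept.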

Shifting from keyword strings to entity maps

Most legacy SEO strategies focus on exact-match strings, but that’s a losing game with modern LLMs. Instead, look at how you can group related ideas to build a clear hierarchy. This is where a content automation platform like GenWrite becomes an asset, as it maps these relationships before the first paragraph is even generated. By identifying these clusters early, you avoid the robotic repetition that usually triggers spam filters.

It’s a mistake to think that more keywords equal better performance. The goal is relevance density. When you’re performing content editing for SEO, look for gaps where a specific technical term could replace a vague descriptor. Instead of saying ‘the tool makes things faster,’ say ‘the algorithm reduces latency.’ This precision helps search engines understand the exact niche you’re targeting, which is the fastest path to building topical authority.

Implementing the pillar-cluster model on-page

Structure your sections so they mirror a pillar-cluster model internally. Your H3 headings should act as the ‘sub-pillars,’ with the supporting text acting as the cluster content that feeds back into the main theme. This creates a logical flow that search bots can crawl with ease. It also ensures that your AI doesn’t drift into unrelated tangents, a common issue when generating long-form text without a strict semantic map.

And don’t ignore the power of FAQ blocks. Research indicates that pages featuring integrated FAQ sections often see higher AI citation rates, averaging about 4.9 citations compared to 4.4 for pages without them. This happens because structured questions and answers provide a clean data format for both search engines and LLMs to digest. It’s a simple layout change that yields significant dividends in how your content is surfaced in AI-driven search results.

The technical edge of structured data

Ranking faster with AI requires more than just good prose; it requires data that machines can read. Integrating these semantic keywords into your schema markup and meta descriptions ensures that the ‘fluff-free’ approach carries over into the backend. When you use GenWrite to handle these technical layers, you’re ensuring that the semantic depth you’ve built into the article is actually recognized by the crawlers.

Ultimately, the shift toward semantic SEO is about proving value through specificity. If your content provides a dense network of related, accurate information, it’ll naturally outperform generic competitors. It’s about making sure every sentence serves a dual purpose: answering the reader’s question and signaling your authority to the machine. This balance is what separates basic AI drafts from high-performing organic assets.

The 40-60 word rule for featured snippets

Keywords are only half the battle. If those semantic terms sit buried in a three-hundred-word block, they’ll never see the light of a featured snippet. You need to treat your content structure like a physical object that fits into a specific box. That box is usually about forty to sixty words long.

Search engines don’t want to hunt for answers. They want a “one-two punch” where the question is the heading and the answer is the very first paragraph. Data shows that snippets hovering around forty-five words appear roughly 21% more often than any other length. It’s the “inverted pyramid” of the modern web: you provide the conclusion first, then the context.

The mechanics of the 45-word answer

Writing this way feels unnatural at first. We’re taught to build up to a point, but Answer Engine Optimization (AEO) demands you start at the finish line. When I use GenWrite to handle writing assistant optimization, I make sure the prompt forces this specific brevity. But don’t just cut words. Every syllable must pull its weight.

A perfect snippet answer usually follows a three-part structure. Start with a direct “Yes/No” or “X is Y” statement. Follow that with a brief “because” or “how” clause. Finally, add a secondary sentence that provides one layer of depth.

And that’s it. If you go over sixty words, you risk getting truncated by the algorithm. If you stay under forty, you might lack the semantic density to prove you’re an authority. It’s a narrow window that requires ruthless editing.
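The 40-60 word window is easy to check mechanically before an answer goes into the draft. The sketch below counts whitespace-separated tokens, which is an assumption; search engines may count words differently, so treat the bounds as a working guideline. The function name and sample answer are illustrative.

```python
def snippet_length_status(answer, low=40, high=60):
    """Return (word_count, status) for a candidate snippet answer."""
    count = len(answer.split())
    if count < low:
        return count, "too short"
    if count > high:
        return count, "too long"
    return count, "ok"


# Hypothetical candidate answer following the three-part structure.
answer = (
    "A hierarchical outline is a heading structure that goes three levels deep. "
    "It improves output because each heading anchors the model to one concept. "
    "Flat outlines leave the model room to repeat itself or drift off-topic."
)
print(snippet_length_status(answer))
```

A draft that comes back "too short" usually needs its one layer of depth added; one that comes back "too long" usually has a second "because" clause that can go.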

Integrating the rule into your SEO workflow

You shouldn’t write the whole blog this way. That would be unreadable for humans and likely trigger spam filters. Reserve this surgical precision for your H3 sections that target “What is” or “How to” queries. It’s part of a broader SEO workflow where you balance deep-dive analysis with snackable data points.

But this rule doesn’t always hold for every niche. Some highly technical medical or legal queries might require eighty words to be accurate. Still, for 90% of informational blogs, the 45-word target is your north star. It’s about being the most helpful result in the shortest amount of time.

Refining the prompt architecture

To get this right, your instructions should include a command to “answer the heading in exactly two sentences.” This prevents the AI from rambling into generic filler. It also makes implementing structured data for blogs much easier. When the text is already concise, wrapping it in schema like FAQPage or HowTo feels seamless.
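Once the answers are concise, wrapping them in FAQPage markup is mostly mechanical. The sketch below builds the schema.org FAQPage structure (`Question`, `acceptedAnswer`, `Answer`) as a Python dictionary and serializes it to JSON-LD; the helper name and the sample question are illustrative.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Hypothetical question and answer, for illustration only.
markup = faq_jsonld([
    (
        "How does a hierarchical outline improve LLM output?",
        "It anchors each section to one concept, which reduces repetition.",
    ),
])
print(json.dumps(markup, indent=2))
```

The printed JSON is what would go inside a `<script type="application/ld+json">` tag on the page.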

The reality is that “position zero” is no longer about who wrote the most. It’s about who answered the fastest. If a reader has to scroll to find the “point,” you’ve already lost the snippet. Focus on that 40-60 word sweet spot to turn your blog into an answer engine.

Human-in-the-loop: the non-negotiable final mile

You’ve just hit the “generate” button on a 2,000-word guide that technically hits every target. The headers are logical. The snippet answers are exactly 55 words long. It looks ready to ship, but something feels off. It’s too smooth, too predictable. It lacks the “scar tissue” of actual experience. This is the moment where most writers fail by hitting publish too soon.

The veracity audit as a safety net

AI isn’t a truth engine; it’s a probability engine. When it cites a statistic about click-through rates or mentions a specific software update, it’s calculating what word should come next, not verifying reality. I’ve seen drafts where an LLM confidently invented a feature for a popular CRM that simply doesn’t exist. This doesn’t always happen, but it happens enough to be a risk.

If you want to improve content quality, you must perform a manual veracity audit. This means checking every single date, percentage, and proper noun. It takes ten minutes, but it prevents the factual errors that destroy your site’s authority. But don’t just check the facts; check the logic. If a paragraph contradicts a point made earlier, that’s a red flag only a human will catch.

Mapping the cognitive intent

Before you refine the prose, you have to find the “human hook.” AI can summarize what people are searching for, but it struggles to understand why they are searching for it at 2:00 AM. Are they afraid of losing their job? Are they frustrated by a tool that won’t work? Identifying that specific pain point and weaving it into the introduction and transitions is what I call cognitive intent mapping.

By addressing the reader’s emotional state, not just their search query, you transform a generic article into a piece of content that actually converts. And you don’t need a thousand words to do this. Sometimes a single, well-placed sentence that acknowledges the reader’s specific struggle is enough to keep them on the page.

Refining for content creation efficiency

We use GenWrite to handle the heavy lifting of research and initial drafting because it maximizes content creation efficiency. But the final mile is where you strip away the mechanical phrases that signal a lack of effort. I’m talking about those robotic transitions that make every AI article look the same.

Look for words like “intricate” or “holistic.” These are linguistic fillers that add no value. Replace them with specific observations. So, instead of saying a process is “streamlined,” explain that it reduced a three-day workflow to four hours. That specificity is what builds trust with a skeptical audience.
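A small script can flag these words so the editor knows where to look. The word list below contains only the three examples named above and is meant to be extended; the function name is hypothetical, and the script only locates the words, since the replacement still has to come from a human who knows the specifics.

```python
import re

# Starter list taken from the examples above; extend it with your own tells.
FILLER_WORDS = {"intricate", "holistic", "streamlined"}


def flag_filler(text, words=FILLER_WORDS):
    """Return (word, character_offset) for each filler word found in the text."""
    found = []
    for match in re.finditer(r"[A-Za-z']+", text):
        if match.group().lower() in words:
            found.append((match.group(), match.start()))
    return found


sample = "Our holistic, streamlined process handles intricate workflows."
for word, offset in flag_filler(sample):
    print(f"{word!r} at character {offset}")
```

Each hit is a cue to swap in a concrete observation, such as the three-day workflow that now takes four hours.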

Injecting proprietary data

The ultimate way to future-proof your rankings is to add what the AI cannot scrape: your own data. Did you run an experiment last month? Did you have a conversation with a client that changed your perspective? This proprietary insight is the only thing that separates your site from the millions of others using the same LLMs.

But don’t just add data for the sake of it. Use it to challenge a common industry assumption. If the AI says “long-form content always wins,” and your data shows that 800-word punchy pieces are driving your best leads, put that contradiction front and center. That tension is what makes a piece of content feel human.

The final polish: content editing for SEO

Effective content editing for SEO isn’t just about keyword density. It’s about ensuring the internal link structure feels natural rather than forced. It’s about checking that your images have alt-text that actually describes the visual, rather than just repeating the H1. This manual layer is non-negotiable.

Take that extra thirty minutes. Read the draft aloud. If a sentence makes you lose your breath, it’s too long. If a paragraph feels like a wall of text, break it up. This final mile is what separates a disposable draft from a permanent ranking asset.

Solving the ‘logic drift’ in long-form guides

Recent testing indicates that LLM reasoning performance can improve by up to 20% when a prompt includes a simple directive to “take a deep breath” or “work through this step-by-step.” This isn’t just a psychological trick for algorithms; it’s a necessary intervention for the “logic drift” that plagues long-form content. When an AI processes 3,000 words, its internal attention mechanism often prioritizes the immediate context of the last 200 words over the primary objective defined at the start.

The entropy of context in large articles

Logic drift is rarely a failure of the model’s intelligence. It’s a systems problem where vague context forces the machine to fill in the blanks with statistical guesses. If I don’t provide a rigid structural anchor, the AI defaults to the most probable next word based on its general training data, rather than your specific intent. This is why a technical guide on API integration can suddenly start sounding like a beginner’s blog post halfway through.

To prevent this, you have to treat context as a decaying asset. My team uses writing assistant optimization techniques that refresh the core goal every 500 words. We’ve found that inserting a “checkpoint” prompt, where the AI must summarize what it has already covered and how the next section connects to the main thesis, virtually eliminates the drift. It ensures the model hasn’t forgotten that it’s writing for a CTO, not a college student.
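One way to schedule these checkpoints is to track the running word count across sections and trigger a refresh whenever it crosses the interval. The sketch below is an assumption about how that bookkeeping could work; the function names and the prompt wording are illustrative.

```python
def plan_checkpoints(section_word_counts, interval=500):
    """Return the indexes of sections after which a checkpoint should run."""
    checkpoints = []
    running = 0
    for index, count in enumerate(section_word_counts):
        running += count
        if running >= interval:
            checkpoints.append(index)
            running = 0  # start counting again after each checkpoint
    return checkpoints


def checkpoint_prompt(thesis, audience):
    """Build the text of a checkpoint prompt (wording is a hypothetical example)."""
    return (
        "Before continuing, summarize what the article has covered so far, "
        f"then explain how the next section supports this thesis: {thesis}. "
        f"Keep writing for this audience: {audience}."
    )


# Hypothetical word counts for five drafted sections.
print(plan_checkpoints([300, 250, 400, 200, 100]))
print(checkpoint_prompt("modular prompts reduce logic drift", "a CTO"))
```

Resetting the counter after each checkpoint keeps the refreshes roughly 500 words apart, even when section lengths vary.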

Building a resilient SEO workflow

Refining your AI writing prompts to include these guardrails changes the output from a disjointed list of facts into a cohesive narrative. It’s about moving away from the “all-at-once” generation style and toward a modular approach. When we built GenWrite, we focused on this exact problem: ensuring the AI doesn’t lose the thread while managing complex competitor data and keyword requirements.

Implementing the ‘state-check’ technique

One of the most effective SEO workflow tips I can offer is the use of state-checks. Before you let the AI draft a new H3, ask it: “What is the primary user intent we are solving, and how does this upcoming section serve that intent?” This forces a re-evaluation of the entire document’s logic. It’s a small friction point that saves hours of manual editing later. But don’t just ask for a summary; ask for a critique of the previous section’s relevance to the main keyword.

Results vary based on the complexity of the topic, but the evidence shows that structured, multi-step prompting prevents the “hallucination tax” that usually comes with bulk generation. And if you don’t anchor the AI in the specific problem it’s solving, it’ll wander into generic territory every time.

Scaling from one pillar to a full platform

Once you’ve tightened the logic and fixed the structural drift in your long-form guide, you’ve essentially built a proprietary knowledge base. Why let it sit on a single URL? Most creators treat a blog post as a destination, but the real pros treat it as the raw material for an entire ecosystem. It’s the difference between building a single house and designing a modular blueprint you can use for a whole neighborhood.

Moving beyond the single-post mindset

Think about your content creation efficiency for a second. If you spend hours researching and refining a 2,000-word pillar, you’ve already done the hard work of thinking. You’ve identified the pain points, solved the problems, and structured the logic. You’ve essentially done the heavy lifting for your future self, yet most people ignore this goldmine. Starting a LinkedIn post or a newsletter from a blank page after that is just a waste of energy. Instead, you can feed that specific “source code” back into your workflow.

But how do you keep the quality from degrading as you scale? I’ve found that using “Projects” or specialized workspace features in your LLM of choice is the best way to maintain consistency. You upload your brand style guide and the final pillar post as reference files. Now, when you ask for a five-day email sequence based on that post, the AI isn’t hallucinating or pulling from generic training data. It’s pulling from your specific insights. This is how you start ranking faster with AI, not just on Google, but in the minds of your audience across every platform they inhabit.

The project-based repurposing hack

Using an AI SEO writing assistant like GenWrite helps bridge this gap by ensuring the foundation, the blog itself, is structurally sound enough to be sliced into smaller pieces. You can take a single tip from your guide and turn it into a punchy social thread or a script for a 60-second video. Does every piece of content need to be a masterpiece? Probably not. But every piece should stem from that core pillar to ensure you aren’t contradicting yourself across different channels.

The reality is that most people fail with AI because they use it to generate new ideas constantly. The real power lies in using it to re-express your best ideas. When you stop looking at your blog as a standalone task and start seeing it as the anchor for a multi-platform strategy, the ROI on your time shifts dramatically. Think of it as a force multiplier for your brand’s voice. It’s not about doing more work; it’s about making the work you’ve already done work harder for you.

Final verdict: why structure beats speed every time

Speed is a vanity metric in content production. If you generate 100 articles in an hour but spend 100 hours fixing the logic, you haven’t saved time; you’ve just shifted the labor to a more expensive stage of the process. The modular shift isn’t just a technical preference. It’s the only way to reach a state where your drafts are 90% ready upon delivery. Teams that adopt this scaffold-first approach see their editing time drop from 30 minutes to just 10 within months. That’s how you optimize AI writing without sacrificing your brand’s integrity.
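
To make that arithmetic concrete, here is a small sketch. The function names are made up for illustration, and the 100-article batch in the second call is an assumed example size, not a figure from our data.

```python
def hours_per_article(generation_hours: float, fixing_hours: float, articles: int) -> float:
    """Average total hours spent per article: generation plus fixing."""
    return (generation_hours + fixing_hours) / articles


def editing_hours_saved(articles: int, minutes_before: float, minutes_after: float) -> float:
    """Hours saved across a batch when per-article editing time drops."""
    return articles * (minutes_before - minutes_after) / 60


# 100 articles generated in 1 hour, followed by 100 hours of fixes.
fast_but_broken = hours_per_article(generation_hours=1, fixing_hours=100, articles=100)

# Editing time dropping from 30 to 10 minutes, over an assumed batch of 100 articles.
saved = editing_hours_saved(articles=100, minutes_before=30, minutes_after=10)

print(f"{fast_but_broken:.2f} hours per article")
print(f"{saved:.1f} editing hours saved per 100 articles")
```

In other words, the “one hour” batch still costs about an hour per article once the fixes are counted, while cutting 20 minutes of editing per piece frees up roughly 33 hours per 100 articles.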

Using AI as a blunt instrument leads to inconsistency. When logic drifts or the voice wavers, you risk a revenue loss of up to 33% because of damaged brand equity. Readers sense when a machine is rambling. But when you build on a detailed, hierarchical outline, the AI acts as a precise executor rather than a confused author. We’ve seen this methodology result in a 93% reduction in total production time. It turns the content cycle from a frantic sprint into a repeatable system.

The math of modularity

Think about the friction you face now. Most editors spend their day stripping out fluff or fact-checking hallucinations. A modular architecture prevents many of these errors before they happen. You aren’t just telling the machine to write; you’re giving it a map it can’t ignore. This is the core philosophy behind GenWrite. It isn’t about pushing a button and hoping for the best. It’s about optimizing how you use the writing assistant so that every paragraph serves a specific SEO and reader goal.

Execution over excitement

Stop chasing the ‘one-click’ dream. It doesn’t exist for high-quality content. The real gain comes from the 10x multiplier of a well-designed prompt sequence. If you want to improve content quality, start by slowing down your planning phase. The 10 minutes you spend on a deep research scaffold will save you two hours of revision later. It’s time to treat your AI workflow like an assembly line, not a magic trick. The next step is simple: take your best-performing manual outline and break it into five discrete modules. See what happens when the AI only has to focus on one narrow objective at a time.
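
Here is one way that last step might look in practice. This is a minimal sketch under stated assumptions: the outline format (plain headings with “- ” bullets underneath), the `Module` class, and the prompt wording are all hypothetical, so adapt them to however you actually write outlines.

```python
from dataclasses import dataclass


@dataclass
class Module:
    heading: str
    points: list[str]


def split_outline(outline: str) -> list[Module]:
    """Lines starting with '- ' are points; any other non-empty line starts a new module."""
    modules: list[Module] = []
    for raw in outline.splitlines():
        line = raw.strip()
        if not line:
            continue
        if line.startswith("- "):
            if not modules:
                raise ValueError("Bullet found before any heading.")
            modules[-1].points.append(line[2:].strip())
        else:
            modules.append(Module(heading=line, points=[]))
    return modules


def module_prompt(module: Module) -> str:
    """Build a prompt that limits the model to one narrow objective."""
    points = "\n".join(f"- {p}" for p in module.points)
    return (
        f"Write ONLY the section '{module.heading}'. "
        f"Cover these points and nothing else:\n{points}"
    )


# Assumed sample outline with five modules.
outline = """
The problem
- Why one-shot drafts drift
The shift
- Generator vs. research assistant
The scaffold
- Hierarchical outline
- One objective per section
The workflow
- Prompt sequence
The payoff
- Less editing time
"""

modules = split_outline(outline)
prompts = [module_prompt(m) for m in modules]
print(f"{len(prompts)} prompts, one per module")
print(prompts[2])
```

Each prompt goes to the AI on its own, so the model never has to hold the whole article in view while writing a single section.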

Stop wasting time on generic AI drafts that don’t rank. GenWrite automates the research and structural heavy lifting so you can focus on adding the human expertise that Google actually values.

People also ask

Why does my AI-generated content sound so repetitive?

It’s usually because you’re asking the AI to write too much at once. When you force it to generate a full article in one go, it runs out of unique things to say and starts looping. Breaking your prompts into smaller, section-specific tasks keeps the AI focused and fresh.

How do I stop the AI from hallucinating facts?

Don’t ask the AI to invent information. Instead, feed it your own data, research notes, or specific SERP snippets as the source material for each section. If you don’t give it guardrails, it’ll just guess based on probability.

Does Google penalize AI-written content?

Google doesn’t care if a human or a machine writes the content, as long as it’s helpful and accurate. The problem is that raw, unedited AI output is often generic and lacks the unique perspective that builds trust. You’ll always need a human to add the final polish.

What is the best way to win a featured snippet?

Keep your answer concise—ideally between 40 and 60 words. Structure your content so the direct answer appears right after the heading, as this makes it easy for search engines to grab and display your text in those top spots.
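
If you want a quick check before publishing, a word count is easy to automate. The sketch below is an assumption-heavy illustration: the function names are invented, and it counts words by splitting on whitespace, which is a simplification.

```python
def snippet_word_count(answer: str) -> int:
    """Count words by splitting on whitespace."""
    return len(answer.split())


def fits_snippet_range(answer: str, low: int = 40, high: int = 60) -> bool:
    """True when the answer falls inside the 40 to 60 word guideline."""
    return low <= snippet_word_count(answer) <= high


draft = (
    "Keep the direct answer right under the heading and hold it to a few "
    "tight sentences so search engines can lift it cleanly."
)
print(snippet_word_count(draft), fits_snippet_range(draft))
```

Run it against the first paragraph under each question-style heading and tighten or expand any answer that falls outside the range.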