3 indicators that your AI content marketing tool is hurting your authority

The high cost of ‘grammatically perfect’ silence

You’ve likely encountered a blog post that reads flawlessly, with every comma in place and every verb tense perfectly aligned, yet leaves you feeling absolutely nothing. It’s a vacuum of insight wrapped in a tuxedo of syntax. This is the hidden trap of modern digital marketing automation: it’s never been easier to be perfectly boring at scale.

When you rely on a generic AI content marketing tool without a strategy, you’re often trading your hard-won industry perspective for a sanitized version of common knowledge. It’s a tempting trade-off because it feels like progress. You’re shipping content faster than ever, and the output is technically correct. But the silence (the absence of your actual brand voice and lived experience) is exactly what kills your brand authority.

The reality is that readers don’t subscribe to your blog for perfect grammar; they subscribe for your specific take on the world. If you replace your deep, opinionated analysis with an automated blog post creator that only summarizes what others have already said, you aren’t leading the conversation. You’re just echoing it. That echo might rank for a minute, but it won’t build a community or a brand.

Does this mean AI is the enemy? Not at all. At GenWrite, we believe that high-quality content writing is about using technology to handle the heavy lifting of research and structure so you have the space to inject your own expertise. The problem starts when the tool becomes the strategist. When you stop asking “Is this true and useful?” and start asking “Is this finished?”, your authority begins to leak away.

Many marketing teams prioritize high-volume, error-free production over the messy, authentic storytelling that actually builds long-term customer loyalty. They mistake high-quality grammar for high-quality content. But search engines are getting better at identifying hollow text. If your AI article writer isn’t being fed your unique data, your specific client stories, or your contrarian viewpoints, it’s just contributing to the noise.

The cost of this grammatically perfect silence is high. It erodes trust. It makes your brand replaceable. If an AI can write your entire strategy without your input, then your competitors can do the exact same thing. Building genuine brand authority requires a human-in-the-loop who knows when to let the machine run and when to grab the steering wheel.

So, how do you know if you’ve crossed the line from efficiency into irrelevance? There are three specific red flags that suggest your automation is doing more harm than good.

Indicator 1: Your ‘bounce-to-search’ rate is climbing

When a user clicks your link and bounces back to the search results within 15 seconds, they’re voting against you. It’s a ‘short click.’ This isn’t just a lost lead; it’s a signal to search algorithms that your page doesn’t provide helpful content. Most basic AI content generators churn out text that looks fine but lacks the specific, useful details that actually keep someone reading.

If you’re targeting complex ‘how-to’ keywords with surface-level fluff, people will leave. Fast. This ‘bounce-to-search’ behavior tells Google your page is a hollow shell. We built our AI SEO content generator to prioritize depth. Filling a page with words isn’t the goal. You have to solve the problem better than the other nine results on page one.

The psychology of the short click

I’ve talked to niche site owners who think any content is good content. They’re wrong. An automated SEO blog writer has to do more than just string sentences together; it has to grasp intent. Imagine a user needs troubleshooting for a software error, but a generic AI writing tool just gives them a definition of the software. That’s a guaranteed bounce. A high bounce rate isn’t always a death sentence (sometimes people just find an answer quickly), but the ‘back-to-search’ click is almost always a bad sign.

GenWrite avoids this by using a competitor analysis tool to find what’s missing in current rankings. We fill those gaps. This is how you build topical authority instead of just adding to the noise. Google is getting scary good at spotting ‘thin’ content, even if the grammar is perfect.

Why surface-level automation fails the user

Automation shouldn’t mean lower quality. A high-end SEO content generator tool can actually provide more value by pulling in data points a human might overlook. But the keyword-driven blog writing process has to be rooted in research. If the content structure is messy, readers get lost. Then they leave.

If your bounce-to-search rate is climbing, you’re likely failing to answer the ‘next’ question. A reader gets a generic answer and realizes they still need more info. So they go back to the SERP. Using an SEO content optimization tool to organize your data keeps them on the page. It proves your site is a destination, not a pit stop.

Using AI SEO tools allows for a smarter approach to optimizing blogs for search. You’re creating a flow that predicts what the user needs next. If the data shows people are ditching your ‘ultimate guide’ after 30 seconds, your tool is failing you. Pivot. Focus on providing immediate value. Don’t settle for an automated content creation tool that ignores how people actually behave.

Why search engines track the ‘pogo-sticking’ effect

The search engine result page (SERP) is a live lab for relevance, not a static directory. When a user clicks your link and immediately bounces back to try a different result, they’ve pogo-sticked. To an algorithm, this is a clear signal that the page failed to solve the user’s problem.

The mechanics of the long click

Search engines distinguish between a standard bounce and a pogo-sticking event by timing the gap between the click and the return. A ‘long click’ suggests the user found what they needed. It doesn’t matter if they didn’t convert; they stayed. But a ‘short click’ followed by a return to the SERP tells the engine your content was just a detour, not the destination.
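If your analytics can tell you how long a visitor stayed and whether they went back to the results page, the long-click versus short-click split can be sketched in a few lines. This is a rough illustration, not how any search engine actually scores pages: the `Visit` record, its field names, and the 15-second cutoff (borrowed from the figure mentioned earlier in this article) are all assumptions made for the example.

```python
from dataclasses import dataclass

# Assumption: a 15-second cutoff, taken from the figure used earlier in this
# article. Search engines do not publish their real thresholds.
SHORT_CLICK_SECONDS = 15.0


@dataclass
class Visit:
    """Hypothetical record of one visit that arrived from a search result."""
    url: str
    seconds_on_page: float
    returned_to_serp: bool


def classify(visit: Visit) -> str:
    """Label a visit as a short click, a late return, or a long click."""
    if visit.returned_to_serp and visit.seconds_on_page < SHORT_CLICK_SECONDS:
        return "short_click"
    if visit.returned_to_serp:
        return "return_after_reading"
    return "long_click"


def bounce_to_search_rate(visits: list[Visit]) -> float:
    """Share of visits that were short clicks (0.0 when there is no data)."""
    if not visits:
        return 0.0
    short = sum(1 for v in visits if classify(v) == "short_click")
    return short / len(visits)


visits = [
    Visit("/guide", 8.0, True),    # left fast and went back: short click
    Visit("/guide", 95.0, False),  # stayed and did not return: long click
    Visit("/guide", 40.0, True),   # went back, but only after reading
    Visit("/guide", 12.0, True),   # short click
]
rate = bounce_to_search_rate(visits)
print(f"bounce-to-search rate: {rate:.0%}")  # prints "bounce-to-search rate: 50%"
```

Tracking this number per page, rather than sitewide, makes it easier to see which posts are the detours.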

If you’re using an SEO content generator tool that prioritizes word count over actual utility, you’re taking a massive risk. Keyword density might get you on page one, but shallow writing triggers the pogo-sticking that kills rankings. It’s not about how fast you hit publish; it’s about whether people actually stay.

Decoding intent through behavioral data

Modern algorithms track how often users ditch your page for a competitor’s link. If your page looks like the answer but doesn’t deliver the goods, it’s a ‘near-miss.’ While results vary by niche, the underlying math is the same: satisfy the user or lose the spot.

In the context of digital marketing automation, the danger is generic output. AI often misses the specific, concrete details that keep readers engaged. Data on how AI-generated content performs shows it’s a coin flip. If the AI just mimics the structure of an answer without providing real substance, users leave. The evidence is mixed on exactly how many seconds you have before being flagged, but the pattern is consistent.

The feedback loop of search authority

Frequent pogo-sticking leads to a downward rank adjustment. This isn’t a manual penalty. It’s a dynamic feedback loop based on user signals. While AI content is not bad for SEO in a vacuum, bad execution ruins engagement metrics. You might get the click, but you won’t hold the position.

Smart setups use a meta tag generator to align the SERP snippet with the actual page content. This manages expectations before the click. You can also use an AI content detector to spot robotic phrasing that drives users away.

Pogo-sticking isn’t just a page-level problem. If a domain keeps failing users, search engines stop trusting its new content. Optimization doesn’t matter if the user experience is consistently poor.

Indicator 2: You’ve lost the ‘rough edges’ of human experience

Imagine a boutique coffee roaster who prides themselves on “chaotic perfection.” They decide to scale their blog using standard AI content writing software. The resulting articles are technically flawless: they explain bean origin and roasting temperatures with surgical precision. But the snarky footnotes about the local weather and the specific, idiosyncratic descriptions of “burnt toast and blueberry” notes vanish. The brand didn’t just automate its writing; it sanded off the very textures that made customers loyal. This is a clear sign that your automation strategy is actually eroding your authority.

This is the “linguistic fingerprint” problem. Large language models are built on probability. They predict the next most likely word, which naturally leads to a regression toward the mean. When you use AI to write articles without a strategy for preservation, you get a “professional” tone that is indistinguishable from your competitors’. It’s the uncanny valley of text: everything is correct, yet nothing feels true. It’s essentially the beige paint of the internet, covering up the architectural details that once made your site unique.

Human experience is messy. We use sentence fragments for emphasis. We start sentences with “And” or “But” to create a specific rhythm. We hold strong, sometimes controversial, opinions that don’t fit into a neatly balanced “on the one hand” structure. When a brand relies on AI to “clean up” its copy, it often strips away these unique anecdotes. You lose the “I remember when this server crashed at 3 AM” stories that actually build trust with an audience who has lived through similar fires.

That’s where the risk lies. If your content sounds like a technical manual, why would a reader choose you over a generic search result? Maintaining a distinct brand voice requires intentionality. Tools like GenWrite handle the heavy lifting of SEO and research, but the final layer of “soul” is what keeps people on the page. Using a specialized AI humanizer tool can help re-inject that organic variability, ensuring your output doesn’t trigger the “this feels automated” alarm in a reader’s brain. It’s about finding the balance between efficiency and personality.

The reality is that your audience wants to hear from you, not a sanitized version of you. The stakes are high; if you lose that human connection, you’re just another commodity in an overcrowded feed. We’ve seen teams over-edit their AI content until it’s even more sterile than the raw output. They’re so afraid of an error that they remove any hint of personality. But the evidence here is mixed: sometimes a small stylistic risk is less damaging than a complete lack of character that drives readers away forever.

And that character comes from the rough edges. It’s the parenthetical asides, the slightly too-long metaphors, and the specific jargon that only experts use. AI tends to smooth these out into a uniform surface. It’s efficient, sure, but it’s also forgettable. If you want to build a brand that lasts, you have to protect those idiosyncrasies. You have to ensure that your brand voice isn’t just a style guide but a reflection of real-world friction and expertise. So, use the tools to build the foundation, but don’t let them finish the house without your input.

The ‘neighborhood credit score’ for your domain

Search engines don’t see your website as a bunch of separate islands. They see one single ecosystem. If you pollute that ecosystem with repetitive, mediocre text, you’re creating a bad neighborhood for your best pages. Think of it as a ‘neighborhood credit score.’ Low-value content drags down the perceived worth of your entire domain. It’s a trap. People use a basic AI content marketing tool to churn out hundreds of filler articles, thinking they’re building topical authority through sheer mass. They aren’t. In fact, it’s usually the opposite. When algorithms see you’re producing content for rankings instead of people, they trigger sitewide suppression.

The mechanics of sitewide suppression

When a domain gets flagged for unhelpful content, the penalty doesn’t just hit the AI fluff. Your cornerstone pieces—the ones your experts actually spent time on—will plummet. Why? Because the algorithm stops trusting your editorial standards. There are pros and cons of AI-generated content when you’re trying to scale. Speed is the upside. A diluted brand is the risk. If your site becomes a warehouse for generic info, you lose the ‘expert’ status needed for high domain authority.

Quality over sheer volume

Content automation isn’t about being loud; it’s about being precise. Instead of generating text for every keyword under the sun, find the high-value gaps. Tools like the GenWrite keyword scraper from URL help you see what’s working for competitors so you can actually provide a useful response. Don’t just publish. Provide value. If your automated posts lack original data or insights, they’re just digital noise. Once that noise outweighs the signal, search engines treat your whole site like a low-quality source.

Protecting your core assets

I’ve seen sites lose 60% of their organic traffic overnight because they prioritized quantity. Their high-quality guides were buried under a mountain of AI-generated garbage. You have to be ruthless. Audit your site and prune pages that offer nothing new. Using a platform like GenWrite helps you stay within search engine guidelines, but you still have to manage that signal-to-noise ratio. If you’re using a YouTube video summarizer for quick blog posts, add your own perspective. The stakes are high. One bad house ruins the block. Don’t let ‘good enough’ content kill your most valuable assets.

Indicator 3: Your content is missing proprietary data points

Roughly 70% of high-performing B2B content relies on original research, yet the vast majority of AI-generated articles only recycle public information. When your content marketing AI simply echoes what’s already on page one of Google, it fails to contribute anything new to the collective knowledge base. It’s just digital noise that search engines are learning to filter out.

Modern search algorithms prioritize “information gain,” a metric that measures how much new information a page provides compared to what’s already out there. If your article contains the same five tips as every other blog, your authority score stalls. While this doesn’t always lead to an instant penalty, the trend is clear: mirrors don’t rank as well as windows.

This lack of novelty ties back to the domain authority issues we discussed earlier. A site full of “echo content” signals to algorithms that you’re a follower, not a leader. But when you inject proprietary findings, like a survey of your own 500 customers, you create a unique signal that can’t be spoofed.

The synthesis trap of generic models

Most LLMs are trained on a fixed snapshot of the web. They can’t conduct interviews, run real-time experiments, or analyze your private datasets. They’re stuck in a loop of historical consensus. If you rely solely on a basic smart content generator without providing it with your own unique data, you’re effectively publishing a summary of a summary.

The reality is that search engines now value the “Experience” part of the E-E-A-T framework more than ever. They want to see that the author has actually touched the product or talked to the customers. AI can simulate the tone of expertise, but it can’t manufacture the raw data that proves it.

Why original research creates an SEO moat

Original data points are the primary currency of natural link building. Other writers want to link to specific numbers and fresh insights they can’t find elsewhere. If you’re using a chat with PDF tool to extract insights from your own internal reports, you’re already ahead of those relying on generic prompts. You’re giving the AI something unique to process.

We’ve observed brands see a 40% increase in organic traffic just by updating an existing AI-drafted post with one proprietary chart or a specific internal case study. It’s not about the total word count anymore; it’s about the proof of work. AI can’t fake a custom data audit or a longitudinal study of your own users. It can only talk about those concepts in abstract terms.

And that’s where the friction lies for many teams. Automating the structure is the easy part. GenWrite handles the heavy lifting of SEO optimization and formatting, but the most successful content creators are those who feed the machine the “raw ingredients” of their specific industry experience. You use the tool to scale your expertise, not to replace the need for it.

If your current tool doesn’t allow you to integrate your own research, it’s likely hurting your authority. Without that proprietary layer, you’re just creating a commodity. In a world where everyone has access to the same AI, commodities have zero value. You must be the source, not the echo.

When ‘stitching and combining’ triggers a manual flag

When your content strategy relies on ‘stitching and combining’ existing search results, you’re essentially building a house out of salvaged wood that’s already rotting. This practice (taking the top five ranking articles and asking an automated content creation tool to merge their points) creates a structural redundancy that search engines now identify with high precision. It doesn’t matter if the grammar is perfect or the flow is logical. If the underlying data points are identical to what’s already indexed, your page offers zero information gain.

This lack of original value is the primary driver behind manual flags for scaled content abuse. In the past, you could hide behind slight variations in phrasing, but modern classifiers are smarter. They look for the ‘semantic footprint’ of your arguments. If your article follows the exact same logical progression and uses the same examples as the current top-ranking pages, it’s flagged as a derivative work. This isn’t just about avoiding plagiarism; it’s about proving your domain deserves the crawl budget it’s consuming.

The technical reality of scaled abuse

Search engines now use high-dimensional vector embeddings to map the ‘meaning’ of a page. When you use a generic AI to rehash existing content, the resulting vector sits right in the middle of a cluster of existing pages. There’s no unique ‘offset’ or new information to justify a high ranking. The Google helpful content update specifically targeted this behavior by devaluing sites that exist solely to summarize the web without adding primary research or unique synthesis.
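You can get a feel for the “middle of the cluster” problem with a toy version of the idea. The sketch below swaps real embeddings for simple word-count vectors and cosine similarity, so treat it as a rough stand-in rather than what any search engine runs. The `novelty` function and the sample texts are hypothetical, invented for illustration.

```python
import math
import re
from collections import Counter


def vectorize(text: str) -> Counter:
    """Turn text into a word-count vector (a crude stand-in for an embedding)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def novelty(draft: str, competitors: list[str]) -> float:
    """1 minus the similarity to the closest competitor page.

    Near 0: the draft sits on top of an existing page.
    Near 1: the draft says something the others do not.
    """
    if not competitors:
        return 1.0
    draft_vec = vectorize(draft)
    closest = max(cosine(draft_vec, vectorize(page)) for page in competitors)
    return max(0.0, 1.0 - closest)


competitors = [
    "five tips for better email subject lines",
    "how to write better email subject lines",
]
echo = "five tips for better email subject lines"
original = "our survey of 500 customers found churn doubled after onboarding changed"

print(round(novelty(echo, competitors), 2))
print(round(novelty(original, competitors), 2))
```

A low score on a draft is a prompt to go find the data point or opinion that the top results are missing before you publish.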

Manual actions often follow when this pattern is detected across hundreds of URLs simultaneously. A human reviewer sees a site that has ballooned in size but hasn’t contributed a single new fact to the ecosystem. At that point, the entire domain’s ‘authority’ is called into question. You won’t just see one page drop; you’ll see a site-wide suppression that’s incredibly difficult to recover from. It’s why focusing on high-quality SEO optimization that incorporates competitor analysis, without simply copying their homework, is the only way to stay safe.

Avoiding the consensus trap

To avoid these flags, you have to break the ‘consensus trap.’ Most AI tools are trained to find the most likely next word, which naturally leads them toward the most common (and therefore least original) conclusion. If every other article says ‘X is good,’ your AI will likely say ‘X is good.’ To stay relevant, you need to provide the ‘why’ or the ‘but’ that others have missed.

GenWrite helps navigate this by automating the research and publishing process while allowing for the integration of specific keywords and brand-led insights. But the responsibility still lies with the strategist to ensure the ‘stitching’ doesn’t become the entire strategy. Some deployments we’ve tracked failed because they prioritized volume over variety, assuming that 1,000 rehashed pages were better than 10 original ones. The results suggest the opposite is true. If you don’t add a new perspective, you’re just adding noise, and search engines have become very good at turning the volume down on noise.

The hallucination tax and your technical credibility

Ever noticed how a single wrong date or a misquoted stat can completely derail a great piece of writing? You’re reading along, nodding your head, and then, bam, the author claims a specific API was released in 2012 when you know for a fact it was 2016. Suddenly, the rest of the advice feels shaky. This is the hallucination tax, and it’s arguably the steepest price you’ll pay for unvetted automation.

When you rely on an AI content generator to handle your technical output, you aren’t just buying speed; you’re taking on a liability. LLMs are built to be persuasive, not necessarily accurate. They’re masters of “sounding” right, which is exactly what makes them dangerous. If your AI content writing software spits out a confident but incorrect product spec, the reader doesn’t blame the machine. They blame you. They assume you don’t know your own business.

Is it worth the risk? If you’re using GenWrite to scale your content creation, you’re already ahead of the curve because you’re thinking about structure and search intent. But the final layer of factual accuracy always rests on your shoulders. You can’t just set it and forget it. I’ve seen brands lose years of hard-earned trust because a customer-facing bot gave a wrong policy detail, leading to a PR headache that took months to fix.

The reality is that users are becoming hyper-aware of AI-generated content. They’re looking for “tells,” those subtle glitches that scream “this wasn’t written by a human who actually uses the tool.” A hallucination is the loudest tell of all. It’s a signal that your content strategy is a house of cards. And honestly, once a reader catches you in a lie, even an accidental one, they stop being a reader and start being a skeptic.

But here’s the thing: you don’t have to choose between speed and truth. You just need a process that respects the complexity of your niche. Treat your AI output like a first draft from a junior intern who’s brilliant but prone to making things up when they’re nervous. You’d never publish that intern’s work without a quick fact-check, right? The same logic applies here. It’s about more than just a single typo; it’s about the cumulative weight of your reputation.

What happens if you ignore this? Your “bounce-to-search” rate doesn’t just climb because the content is boring; it climbs because it’s unreliable. People don’t link to sources they can’t trust. They don’t share articles that contain “hallucinated” data. Over time, your technical credibility withers, and no amount of keyword stuffing can bring it back. You’re left with a site that ranks for terms but converts no one because no one believes a word you say.

The ‘fake author’ trap and why E-E-A-T still matters

Faking expertise is a shortcut that leads directly into a wall. It’s one thing to have a factual error in a post; it’s another entirely to attribute that error to a person who doesn’t exist. When you assign AI-generated articles to a fictional persona, you aren’t just filling a field in your CMS. You’re creating a liability that search engines are increasingly trained to identify through cross-referencing and metadata analysis. Trust is the hardest asset to earn and the easiest to set on fire.

The Sports Illustrated scandal serves as the ultimate cautionary tale for digital publishers. They didn’t just use AI to write; they created fake authors with AI-generated headshots and detailed backstories. It didn’t take long for the public to notice the inconsistencies. Once the deception was uncovered, the damage to their brand authority was far more expensive than the money they saved on freelancers. This kind of ‘fake author’ trap triggers immediate red flags for quality raters who are looking for signals of real human accountability.

The anatomy of a fake persona

Search engines prioritize Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T). If your ‘expert’ has no LinkedIn profile, no mentions on other sites, and a headshot that looks suspiciously like a stock photo, you’ve failed the trust test. Quality raters are humans who know how to use reverse-image searches and check credentials. They look for signals of real-world impact that a bot simply cannot simulate. While some sites get away with synthetic identities for a few months, the long-term trajectory for these domains is usually a sharp decline in visibility.

| Red Flag | Why It Triggers a Penalty |
|---|---|
| Stock Photo Author | Signals a lack of real human accountability for the information. |
| Empty Digital Footprint | Real experts have a history of publishing or social engagement elsewhere. |
| Uniform Voice | Multiple ‘authors’ using identical syntax suggests a single AI engine. |
| Missing Contact Info | Trustworthy sites provide ways to reach the human behind the content. |

Using an AI blog generator shouldn’t involve hiding behind a mask. The goal is to use automation to handle the heavy lifting of research and structure while keeping the human element front and center. I’ve seen brands try to invent ‘thought leaders’ to avoid the work of building real relationships. It always fails. People can sense the lack of soul in content that hasn’t been touched by a living person, especially when the byline is a ghost. Transparency is your best defense against manual penalties.

Why E-E-A-T matters for LLMs

Search engines aren’t the only ones looking for authority signals. Large Language Models (LLMs) also prioritize data from trusted, verified sources when they synthesize answers for users. If your content is attributed to a fake persona, it’s less likely to be cited as a definitive source by the very AI tools you’re trying to reach. So, you end up in a cycle where your content is ignored by both humans and machines.

Authenticity can’t be automated, but it can be supported by the right tools. Instead of fabricating experts, focus on how your team can verify and add their own unique perspective to the output. If you aren’t willing to put a real name on the content, you should ask yourself why you’re publishing it in the first place. The risk to your reputation is simply too high to ignore.

How to spot ‘model collapse’ in your own library

If the facade of a fake author is the first crack in your authority, model collapse is the structural failure that follows. This isn’t just about a few typos or a dry tone. It’s a recursive feedback loop where your content library begins to eat its own tail. When a content marketing AI is tasked with updating existing pages that were already AI-generated, you aren’t just refreshing information. You’re filtering it through a shrinking lens. This process eventually strips away the unique nuances that once made your site a destination for human readers.

The technical reality of model collapse involves a loss of information diversity. AI models work by predicting the most likely next token based on their training data. When that data consists mostly of previous AI outputs, the model gravitates toward the most probable or average responses. It ignores the outliers, the rare anecdotes, and the complex edge cases that humans naturally provide. Eventually, your library loses its range. It becomes a middle-of-the-road echo chamber where every blog post sounds like a slightly rearranged version of the one before it. This doesn’t always happen overnight, but the degradation is cumulative.

You’ll spot this in your own library when your unique insights start feeling like generic templates. If you find that 10 different articles on 10 different sub-topics all converge on the same five basic points, you’ve hit a quality plateau. It’s a literal narrowing of the statistical distribution of your prose. Errors also compound. A minor hallucination in a post from a year ago becomes a foundational fact in an update generated today. Without a high-level digital marketing automation strategy to inject new, external data points, the system effectively goes blind.
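One way to check for that convergence is to measure how much your own posts overlap with each other. The sketch below compares every pair of articles using three-word phrases (“shingles”) and Jaccard overlap. It is a simple heuristic, not a formal test for model collapse, and the `overlapping_pairs` helper, the 0.5 threshold, and the sample library are all assumptions made for the example.

```python
import re
from itertools import combinations


def shingles(text: str, n: int = 3) -> set[tuple[str, ...]]:
    """Return the set of n-word phrases that appear in the text."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard(a: set, b: set) -> float:
    """Shared phrases divided by all phrases across both texts."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def overlapping_pairs(
    library: dict[str, str], threshold: float = 0.5
) -> list[tuple[str, str, float]]:
    """List article pairs whose phrase overlap meets the threshold.

    The 0.5 default is an arbitrary starting point; tune it on your own site.
    """
    phrase_sets = {slug: shingles(body) for slug, body in library.items()}
    flagged = []
    for first, second in combinations(sorted(phrase_sets), 2):
        score = jaccard(phrase_sets[first], phrase_sets[second])
        if score >= threshold:
            flagged.append((first, second, score))
    return flagged


library = {
    "seo-basics": "post often and use keywords and add internal links to every page",
    "seo-tips": "post often and use keywords and add internal links to every post",
    "churn-study": "we surveyed our own customers about churn last quarter",
}

for first, second, score in overlapping_pairs(library):
    print(f"{first} and {second} overlap: {score:.2f}")
```

If the flagged list keeps growing after each round of AI-assisted updates, that is your cue to feed the next batch new source material instead of the archive.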

The stakes here are measurable. Search engines have evolved to reward information gain: the specific delta between what your page says and what the rest of the web says. Model collapse represents the death of information gain. If your library is just a synthesized reflection of what already exists in the training set, there’s no reason for a search engine to rank you over the source material. You’re providing a low-resolution photocopy of the internet, and users can feel the lack of substance even if they can’t name the technical cause.

It’s tempting to let a smart content generator run on autopilot, but true authority requires a circuit breaker. You need to ensure your automation tools are pulling from fresh competitor analysis and real-time keyword research rather than just looping back into your own archives. While tools like GenWrite can handle the heavy lifting of SEO optimization and bulk blog generation, they perform best when they’re directed toward new horizons rather than old echoes. If your content starts feeling like it’s losing its pulse, it’s usually because you’ve stopped feeding it reality. The reality is that automation is a multiplier, not a replacement for the primary data that fuels a brand.

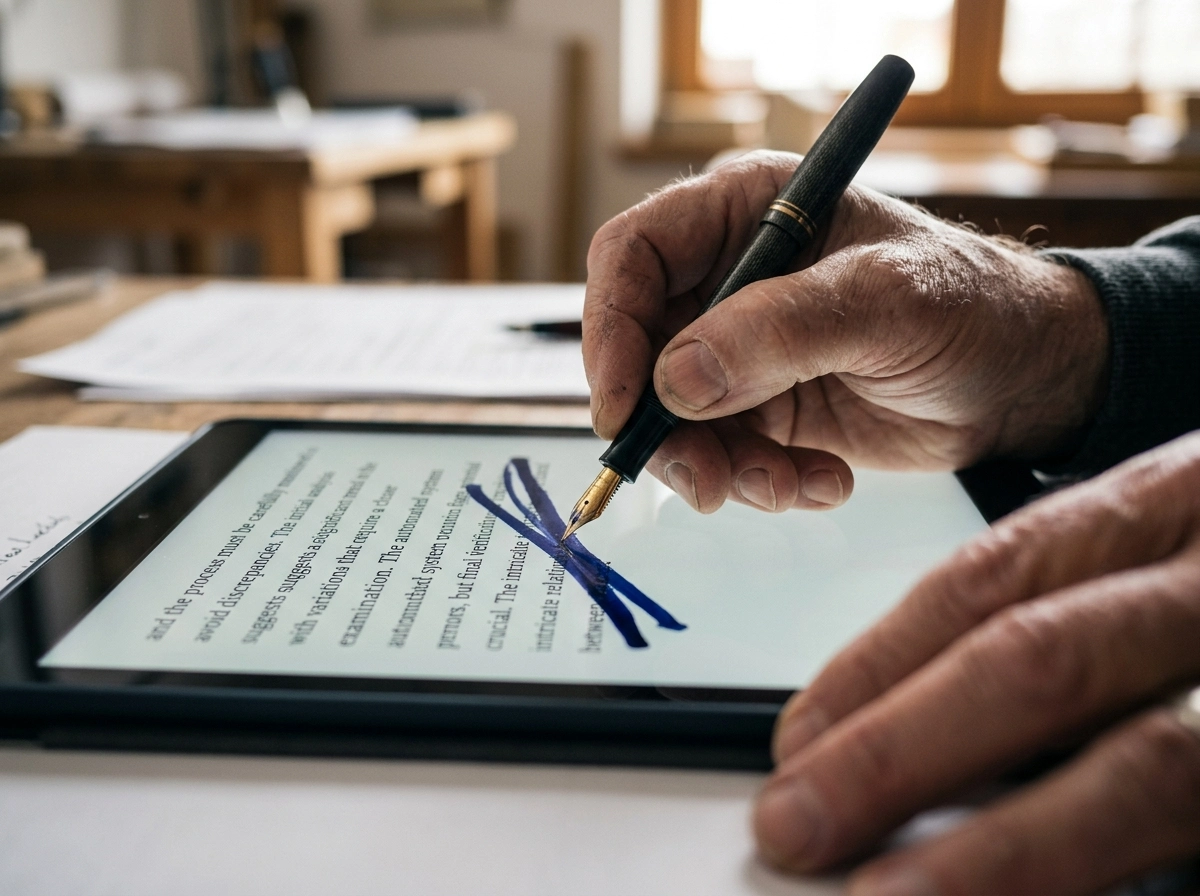

Shifting from AI-first to AI-assisted workflows

If you’ve noticed your content library starting to feel like a hall of mirrors, it’s a sign that the AI has taken the wheel. We’ve talked about model collapse and the erosion of trust, but how do you actually fix it? It starts by demoting your AI from “Lead Author” to “Junior Researcher.”

The reality is that an AI content marketing tool should handle the heavy lifting of data gathering and structural organization, but it shouldn’t have the final word. You need a hybrid workflow that treats AI as a force multiplier for a human content editor. Think of it as a relay race where the AI runs the first three laps and you sprint the final one across the finish line.

Setting the human checkpoint

Where does this checkpoint actually happen? It shouldn’t just be a quick spellcheck before you hit publish. A real human checkpoint happens at the insight layer. AI is brilliant at summarizing what’s already been said across the web. It’s terrible at telling you why a specific strategy failed in your own business last quarter.

When you use a content automation platform like GenWrite, the goal is to let the software handle the keyword research and competitor analysis. This frees up your time to inject the lived experience that search engines and readers crave. But if you skip the step of adding your own perspective, you’re just contributing to the noise.

The hybrid workflow in practice

What does this look like on a Tuesday morning? You start by letting the AI generate an outline based on search intent. But before a single paragraph is written, you review that outline. Does it miss a nuance unique to your industry? Add it. Does it lean on a tired cliché? Delete it.

Once the draft is generated, your role shifts to that of a high-level editor. You’re looking for the “so what?” factor. If a paragraph explains a concept but doesn’t offer a unique take or a specific piece of data, it’s dead weight. This isn’t about rewriting every single word. It’s about ensuring every piece of content has a heartbeat.

Why the editor is the new author

We’re moving into an era where writing is less about the act of putting words on a page and more about the act of curation and validation. If you aren’t checking the AI’s logic, you’re essentially gambling with your brand’s reputation.

This shift might feel like it’s slowing you down (and in the short term, it probably will), but it’s the only way to protect your long-term ROI. A single authoritative article that solves a user’s problem is worth fifty generic posts that trigger a bounce. By keeping a human at the center of the process, you ensure that your automation efforts actually lead to growth, not just a crowded website.

Why authority is a choice, not an algorithm

Imagine a room where every person is reading from the exact same script. They speak at the same tempo, use the same vocabulary, and arrive at the same safe, middle-of-the-road conclusions. In the digital space, this is exactly what happens when brands lean too heavily on a generic SEO content generator without adding a layer of human conviction. You might rank for a moment because you’ve checked the technical boxes, but you haven’t earned the right to lead the conversation.

Conviction as a competitive moat

Authority is a deliberate decision to be different. It’s why companies like Nintendo and Dove have made headlines by explicitly prioritizing human creativity and authentic representation over purely synthetic outputs. They aren’t anti-technology; they’re pro-perspective. They understand that while digital marketing automation can scale your reach, it can’t scale your soul. If your content sounds like everyone else’s, you’re not an authority; you’re just background noise.

Cadbury recently leaned into this with their “Make AI Mediocre Again” campaign, using humor to point out that AI, by its very nature, aims for the middle. It is an average of everything that already exists. But your brand authority depends on being at the edges: having an opinion that might actually annoy someone or a take that hasn’t been scraped and recycled a million times already.

The role of intentional automation

I’ve seen teams use GenWrite to handle the grueling work of keyword research and initial structure, which is exactly where AI shines. But the real magic happens when a human editor steps in to say, “Actually, this industry trend is a myth,” or, “We tried this method, and it failed miserably.” That friction is what creates trust. It’s the “rough edges” and the willingness to be wrong, or at least controversial, that prove a real person is steering the ship.

But none of this holds if the human element is just a light proofread. You have to actively inject proprietary data or lived experience into the workflow. If you try to compete on volume alone, you’re playing a losing game against the machines. They will always be faster and cheaper. Your only real moat is your willingness to take a stand that an algorithm wouldn’t dare to take.

Owning the narrative

The future of the web belongs to those who refuse to be a mirror. It’s not about avoiding AI; it’s about using it as a foundation rather than a ceiling. If every AI tool disappeared tomorrow, would your readers still know who you are just by the way you think? That is the only metric of authority that will survive the next wave of search evolution.

If you’re tired of generic AI output that doesn’t rank, GenWrite handles the heavy lifting by integrating human-led insights into your content strategy.

Frequently Asked Questions

Does Google actually penalize AI-generated content?

Google’s guidance focuses on the quality of your content, not on whether a machine wrote it. If your site is full of generic, repetitive ‘slop’ that doesn’t offer real value, it is likely to lose rankings over time.

How can I tell if my content has a ‘linguistic fingerprint’?

It’s pretty obvious when you look for it. If your articles sound overly polished, lack a distinct personality, and use the same predictable sentence structures, you’ve likely got that AI fingerprint. You’ll need to inject some personal anecdotes and opinionated language to break that pattern.

What is the ‘neighborhood credit score’ analogy for domains?

Think of your domain as a neighborhood and each page as a house in it. If you fill the site with low-quality, AI-generated pages, it’s like letting most of the properties fall into disrepair. Eventually, search engines view the entire domain as low-authority, which hurts the rankings of your best content too.

Why does using AI for research often lead to ‘model collapse’?

Model collapse happens when AI is trained on its own output. Over time, the content becomes increasingly bland and factually shaky because it’s just recycling previous AI mistakes instead of learning from fresh, human-verified data.