Why we moved our ranking strategy to a niche-specific AI article generator

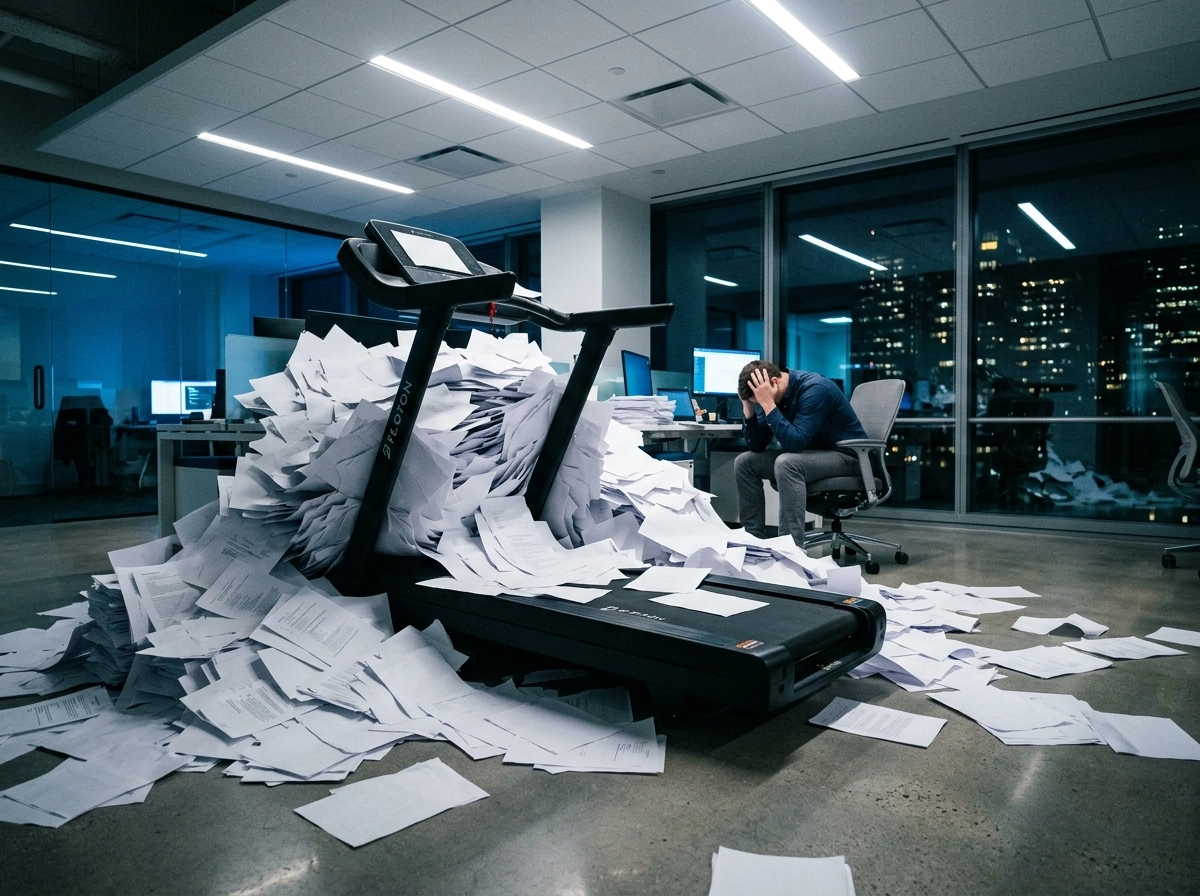

The volume trap that stalled our organic growth

Picture a startup selling ultra-light backpacking gear. They decide to flood the market by pumping out forty articles a week. They cover everything: packing bags, mountain views, you name it. For two months, the dashboard looks like a rocket ship. Traffic’s up, founders are popping champagne, and the team feels like they’ve cracked the code. Then, the line flatlines. By month four, that expensive organic traffic starts to vanish. It’s not a glitch. It’s a structural collapse that happens when you lean too hard on generic content.

I’ve seen this movie before. Search engines give new pages a “test drive” to see how people react. But when a hiker clicks on a post and finds a shallow summary that just states the obvious, they’re gone in seconds. The mistake teams make with an automated blog post creator isn’t just bad writing. It’s a total lack of value. If someone’s looking for how a specific stove handles 10,000 feet of elevation, an AI article saying “stoves help you cook” is just noise. It’s useless.

The correlation trap and generic pitfalls

Marketers love a good correlation trap. They see a few likes and think their AI SEO content generator is building a legacy. It isn’t. Real growth needs more than a word count or a green light on an SEO plugin. You need a topical authority strategy that actually gets the industry. Volume is a sugar high. Eventually, the floor drops out if there’s no depth.

I’ve watched SaaS founders hire agencies that promise the world through high-frequency posting. They end up with 500 “perfect” posts that don’t convert a single person. Google is getting scary good at spotting “thin” content, even if it’s 2,000 words long. If you don’t have a unique angle or real data, you’re just cluttering the internet. We realized an SEO-friendly content generator has to do more than type; it has to actually analyze the competition.

We changed how we do things at GenWrite because the old “spray and pray” method is dead. SEO isn’t a static checklist. It’s a living thing. You can’t just automate the typing. You have to automate the research and the logic behind the words. If you treat content like a cheap commodity, don’t be shocked when search engines treat it as trash. The volume trap is a real threat. The only way out is to care more about being specific than being loud.

Generic prompting vs the professional meal-kit approach

You’ve probably spent hours massaging a prompt only to end up with a result that feels like a lukewarm high school essay. It’s a common trap. When we first started using AI, we treated it like a conversational partner—a smart buddy who could help us brainstorm. But that’s exactly where the problem lies. General-purpose AI is designed for breadth. It doesn’t have the sharp focus required for SEO optimization for blogs.

The meal-kit philosophy of content

Think of generic prompting like going to a grocery store without a list. You might find some good ingredients, but you’ll probably forget the salt, and you’ll definitely spend too much time wandering the aisles. A professional meal-kit approach is different. It provides a structured content creation workflow where every ingredient is pre-measured and designed to fit a specific recipe. This structure is what separates a hobbyist from a real production engine.

When we moved to a specialized AI article generator, we weren’t just looking for better sentences. We were looking for a repeatable system. Unlike a chatbot that starts from scratch every time, a dedicated automated blog post creator follows a rigid, high-performance blueprint. It understands that a blog post isn’t just text. It’s a structural asset that needs automated on-page SEO writing to survive the current search environment.

Why structure beats chatting

Generic AI models are trained on the entire internet, which means they’re incredibly good at being average. If you ask for a blog post on sustainable gardening, you’ll get the same five tips every other site has already published. But a niche-specific tool like GenWrite uses domain-specific data to ensure the output isn’t just grammatically correct—it’s actually useful. It’s the difference between a general practitioner and a heart surgeon. One knows a bit of everything; the other knows exactly where to cut.

The reality is that an AI content SaaS that lacks a structural foundation will eventually hit a traffic ceiling. We saw this firsthand. Our content felt fine, but it lacked the content structure and internal linking necessary to build topical authority. By switching to a tool built for keyword-driven blog writing, we stopped guessing what worked and started following a proven conveyor belt of production. Results vary based on how competitive your niche is, but the structural advantage is undeniable.

Moving beyond the prompt

Relying on the perfect prompt is a gamble because prompts are fragile. One small change in phrasing and the AI’s logic shifts. A professional setup uses SEO AI tools that bake the prompting into the software logic itself. This allows you to focus on high-level content writing strategy instead of fighting with an interface. It moves the needle from manual labor to high-level oversight.

You’re choosing an AI SEO article writer that can handle the heavy lifting of answer engine optimization (AEO). When the system handles the layout, the technical SEO, and the initial research, your role shifts from writer to editor-in-chief. That’s how you scale without losing your mind or your rankings. It’s about building a system that rewards your time rather than consuming it.

Identifying the ‘content gaps’ our manual audits missed

Manual audits miss about 40% of high-intent topics. Why? Because humans naturally gravitate toward high-volume keywords and ignore the subtopics that actually drive conversions. It’s not that we weren’t trying. It’s just that our perspective was limited. We’d look at the top ten competitors and try to copy them. But copying isn’t a niche site content strategy; it’s just a race to the middle.

The bias of volume over intent

We used to spend weeks mapping out keywords with the biggest search numbers. It felt right at the time. But we missed the ‘information gain’ that Google actually cares about. Algorithms don’t have our biases. They scan competitor schema to find specific subtopics that are statistically relevant but missing from the top pages. These are the real knowledge gaps.

Once we plugged in SEO content software, we saw exactly where we were failing in the middle of the funnel. We had plenty of ‘what is’ and ‘how to’ guides. We were completely ignoring the ‘is this worth it’ queries. Those have lower volume, sure, but the conversion rates are much higher. It takes time for search engines to re-index these new clusters, but the advantage is obvious.

Uncovering hidden topical clusters

Everything changed when we traded guesswork for hard data. For example, GenWrite found a cluster about ‘compatibility issues’ that every single competitor had ignored. It wasn’t just one article. It was an entire authority pillar we’d missed while staring at manual spreadsheets.

A keyword-driven content strategy powered by machine learning gives you a microscopic view of your niche. You find the friction points. Maybe users are dropping off because they need a specific comparison tool or a ‘step zero’ guide. You won’t find that level of detail just by skimming a few blog posts.

Technical precision in content mapping

Manual audits also miss the technical bits that help you rank. You can write great prose and still fail if the structure is off. Tools like a meta tag generator or automated auditors fix the disconnect between good writing and SEO visibility.

It’s much simpler to use AI to write blog posts that are built for SEO from day one. Retrofitting a messy content calendar is a nightmare. By moving to a niche-specific generator, we stopped playing catch-up. We started building a library that covers the whole user journey, as we’ve noted in our blogging updates.

How we rebuilt our workflow for AEO visibility

After we pinpointed the gaps our manual audits missed, we realized the problem wasn’t just missing topics. It was a structural failure. We were still writing for a traditional search engine model while the world moved toward Answer Engine Optimization (AEO). We stopped viewing our blog as a collection of articles and started treating it as a knowledge graph. This shift was the only way to survive the transition where LLMs, not just users, are the primary consumers of our data. We moved away from a traditional page-level focus and began treating every blog post as a structured data set designed for machine consumption.

Implementing a schema-rich architecture

The first step involved a total overhaul of our page architecture. Traditional SEO treats schema as an afterthought, but for AEO, it’s the foundation. We integrated deep-level schema markup that defines relationships between entities, not just the basic Article or FAQ tags. By using a sophisticated content creation AI, we could automate the generation of these data layers. This allowed us to map out how a specific topic connects to broader entities. We found that when we explicitly defined these relationships in the code, AI search engines were much more likely to synthesize our content into a direct answer. It isn’t just about providing information; it’s about providing it in a format that an LLM can digest without ambiguity. We started using GenWrite to handle the bulk of this technical heavy lifting, ensuring every post was pre-linked and pre-tagged for entity recognition before it ever went live.
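To make the idea of entity-level markup concrete, here is a minimal sketch of how a post's JSON-LD might declare what it is about and what it mentions. The helper name `build_article_jsonld`, the example URLs, and the sample entities are assumptions for illustration only; the schema.org properties used (`about`, `mentions`, `sameAs`) are standard, but this is not a description of GenWrite's actual output.

```python
import json


def build_article_jsonld(headline, url, about, mentions):
    """Build a schema.org Article object with explicit entity links.

    `about` and `mentions` are lists of (name, same_as_url_or_None) pairs.
    """
    def thing(name, same_as=None):
        node = {"@type": "Thing", "name": name}
        if same_as:
            # sameAs points at a reference page that disambiguates the entity.
            node["sameAs"] = same_as
        return node

    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "mainEntityOfPage": url,
        "about": [thing(name, ref) for name, ref in about],
        "mentions": [thing(name, ref) for name, ref in mentions],
    }


# Hypothetical post; every value below is a placeholder.
doc = build_article_jsonld(
    headline="How canister stoves behave at altitude",
    url="https://example.com/stoves-at-altitude",
    about=[("Portable stove", "https://en.wikipedia.org/wiki/Portable_stove")],
    mentions=[("Backpacking", None)],
)
print(json.dumps(doc, indent=2))
```

The printed object would typically be embedded in the page inside a `<script type="application/ld+json">` tag.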

Measuring the shift in visibility

Tracking success required a complete departure from standard metrics. We didn’t ignore clicks, but we stopped letting them dictate our strategy. Instead, we began monitoring citation frequency. This metric tracks how often our domain is cited as a source in generative responses across platforms like Perplexity, ChatGPT, and Google’s AI Overviews. Honestly, the results were humbling at first. We realized many of our high-traffic pages were being ignored by AI because they lacked the structural clarity needed for synthesis. Entity authority is the new domain authority. It’s not about how many backlinks you have, but how much the web knows you are an expert on a specific topic.

We developed an internal dashboard to track AI Visibility Percentage. This doesn’t always correlate with traditional SERP rankings. Sometimes a page ranked high for a keyword wouldn’t be cited by an LLM because the content was too conversational and lacked hard data points. To fix this, we adjusted our AI writing tools to prioritize claim-dense writing. This style focuses on making verifiable assertions followed by structured evidence, which makes it much easier for an AI to extract and credit the information. Content must be built for synthesis to remain visible in this new environment.
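The article doesn’t spell out how AI Visibility Percentage is calculated, so the sketch below assumes one plausible definition: the share of tracked prompts whose generated answer cites your domain. The `PromptResult` record, its field names, and the sample data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    prompt: str               # the question sent to the answer engine
    platform: str             # e.g. "perplexity", "chatgpt", "ai_overviews"
    cited_domains: list[str]  # domains cited in the generated answer


def ai_visibility_percentage(results, domain):
    """Share of tracked prompts (in percent) whose answer cites `domain`."""
    if not results:
        return 0.0
    hits = sum(1 for r in results if domain in r.cited_domains)
    return 100.0 * hits / len(results)


# Made-up tracking data for illustration.
results = [
    PromptResult("best ultralight stove", "perplexity", ["example.com", "other.org"]),
    PromptResult("stove fuel at altitude", "chatgpt", ["other.org"]),
    PromptResult("canister vs liquid fuel", "ai_overviews", []),
    PromptResult("how to pack a stove", "perplexity", ["another.net"]),
]
score = ai_visibility_percentage(results, "example.com")
print(f"AI visibility: {score:.1f}%")
```

Filtering `results` by `platform` before calling the function gives a per-engine breakdown with the same logic.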

Refining the entity authority

We started using a niche-specific AI text generator for blogs to produce deep-dive technical content that established our brand as a primary source. This involved a recursive writing process: we would generate a pillar post, identify the entities mentioned, and then build out supporting node posts that further defined those entities. This web of information creates a feedback loop for search engines. When an AI crawler hits our site, it sees a fully mapped topical structure where every term is defined, linked, and validated by schema. It’s a rigorous approach, and it’s significantly more demanding than just churning out 1,000-word articles based on a single keyword. But the reality is that the old way is dying. If your content isn’t machine-readable, it’s invisible. We’ve accepted that we aren’t just writing for humans anymore; we’re writing for the models that help humans think.

The 60-minute hybrid engine in action

The transition from AEO infrastructure to actual publishing happens when we stop treating the machine as a ghostwriter and start treating it as a research assistant. We call this the 60-minute hybrid engine. It’s a rigid, time-boxed workflow that ensures we aren’t just dumping text onto a page but are actually building something worth reading. This isn’t about shortcuts; it’s about allocating human energy where the machine is most likely to fail.

The first ten minutes focus on structural curation. Instead of asking for one perfect outline, we let the AI generate 30 distinct topic variations and structural frameworks. I don’t look for the most clever one. I look for the one that aligns with our specific brand POV. If the machine suggests a generic guide, we pivot. If it suggests a nuanced take on technical friction, we move forward. This prevents the evaluation bottleneck that often kills content teams trying to scale because it forces a decision early in the process.

Once the structure is locked, the next 20 minutes involve the actual drafting and technical grounding. We use an AI article writer to handle the heavy lifting of formatting, basic SEO research, and initial phrasing. But we don’t just hit generate and walk away. We use specific AI writing tools to cross-reference our internal data points against the draft as it’s being built. This ensures the foundational layer of the article is technically sound and optimized for search intent without eating up a human’s entire morning.

The human injection phase

The final 30 minutes are where the real value is added. This is the non-negotiable part of the process where a human expert takes the wheel. We aren’t just checking for typos. We’re injecting firsthand insights, personal anecdotes, and industry-specific context that no LLM can simulate. I’ve found that search engines are increasingly penalizing content that lacks this experience factor. If an article doesn’t have a specific, opinionated stance by the end of this half-hour, it isn’t ready for the site.

And this is exactly why we built GenWrite. We wanted a tool that didn’t replace the writer but empowered them to focus solely on that high-value injection phase. You can learn more about our philosophy on the GenWrite about page, where we detail how we bridge the gap between automation and expertise. By offloading the busywork of keyword placement and image sourcing, our team spends their time on the 20% of the work that drives 80% of the results.

Why the clock matters

Speed is a byproduct, not the primary goal. The 60-minute limit forces us to be decisive. It prevents the endless polish trap that keeps good ideas in a draft folder for weeks. When you have a set time to finalize high-ranking blog posts, you learn to prioritize what actually moves the needle. We’ve seen that articles produced this way often outperform those that were manually labored over for days because they maintain a tight, focused narrative that doesn’t wander into irrelevant tangents.

But this system isn’t perfect. Sometimes the initial AI output is off-base, and we have to scrap the 10-minute curation phase and restart. That’s a feature, not a bug. It’s better to lose ten minutes on a bad start than ten hours on a bad article. The reality is that the hybrid engine is about risk management as much as it is about production. It gives us the volume we need to stay competitive without sacrificing the authority that keeps us in the top spots.

The evidence for this approach is mixed if you don’t have a clear brand voice. If your brand doesn’t have a perspective, no amount of AI or human editing will save the content. But for teams with a strong POV, this workflow is the only way to scale without losing your soul to the algorithm. We aren’t just making content; we’re making arguments that happen to be optimized for search.

Automating topical authority without the hallucination tax

The friction in most AI workflows isn’t the generation; it’s the correction. Once you’ve established a hybrid workflow, the focus shifts from how you build the piece to how you trust the data. When you use a general-purpose model, you’re essentially hiring a brilliant intern who has read the entire internet but possesses zero real-world experience. They’ll confidently state a technical impossibility because it sounds grammatically plausible. This is the hallucination tax: the hidden cost of time spent cross-referencing every statistic, date, and technical claim against a reliable source. If you’re building a site that relies on topical authority, you can’t afford to pay that tax on every paragraph.

Grounding outputs in domain-specific logic

Niche-specific tools operate differently. Instead of relying solely on the probabilistic weights of a multi-billion parameter model, they ground the generation process in specific datasets. This might involve Retrieval-Augmented Generation (RAG) or proprietary data layers that prioritize factual accuracy over creative flair. For instance, a healthcare provider that trains a model on its internal claims data can outperform a generic system on tasks tied to that data. The specialist tool understands the nuances of industry jargon and specific qualifications that a generalist simply averages out into generic corporate speak.
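As a rough illustration of the retrieval step in RAG, the sketch below picks the most relevant in-house snippets for a question and builds a prompt that restricts the model to those sources. It uses plain keyword overlap instead of embeddings to stay dependency-free, makes no model call, and the function names and sample snippets are assumptions rather than any vendor’s implementation.

```python
import re


def tokenize(text):
    """Lowercase a string and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, documents, k=2):
    """Return up to k documents sharing at least one token with the query,
    ranked by how many tokens they share."""
    q = tokenize(query)
    ranked = sorted(documents, key=lambda d: len(q & tokenize(d)), reverse=True)
    return [d for d in ranked[:k] if q & tokenize(d)]


def build_grounded_prompt(query, documents):
    """Assemble a prompt that tells the model to answer only from retrieved text."""
    sources = "\n".join(f"- {doc}" for doc in retrieve(query, documents))
    return f"Answer using only these sources:\n{sources}\n\nQuestion: {query}"


# Placeholder snippets standing in for a proprietary data layer.
docs = [
    "Canister stove fuel pressure drops in cold weather at altitude",
    "Tent stakes hold poorly in sandy soil",
    "Water filters clog faster in silty rivers",
]
prompt = build_grounded_prompt("stove fuel pressure at altitude", docs)
print(prompt)
```

A production setup would usually swap the overlap score for vector similarity, but the shape stays the same: retrieve first, then constrain generation to what was retrieved.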

I’ve found that GenWrite bridges this gap by integrating competitor analysis directly into the drafting phase. It doesn’t just guess what makes a high-ranking blog post; it looks at what’s currently performing and uses that as a structural anchor. This prevents the AI from drifting into the vague, repetitive fluff that usually triggers a manual rewrite. While no system is 100% immune to errors, the error rate drops significantly when the model is constrained by a specific SEO framework.

The technical cost of generic fluency

General models are designed for fluency. They want to please the user with a smooth-sounding answer, even if the underlying data is thin. In a technical context, this is dangerous. If you’re writing about complex recruitment qualifications or specialized software architectures, a single wrong term can destroy your credibility. Specialized tools treat data as a moat. By focusing on a narrow vertical, these systems can harness industry-specific knowledge that broad-web training misses.

So, how do you verify if your content still feels authoritative after the AI has done its work? Using a dedicated AI content detection tool can help identify patterns of robotic repetition that often signal a lack of depth. But the real solution is preventing those patterns at the source. When the generator is aware of the specific content gaps and keyword clusters required for your niche, the output requires less fixing and more polishing.

Why proprietary data is the ultimate moat

The long-term value of your content strategy isn’t just volume; it’s the durability of your information. Generic AI is a commodity. Anyone can generate 50 posts a day. But those who win the organic search game are those who use tools to surface insights that others can’t easily replicate. By moving away from general-purpose models toward an AI writer designed for specific SEO outcomes, you shift your focus from managing hallucination debt to scaling actual expertise. It’s about building a library of high-ranking blog posts that stand up to human scrutiny and algorithmic evaluation alike. And that shift is what finally turned our traffic numbers around.

Measuring the move from traffic to citation frequency

AI referral traffic often converts at 14.2%, a staggering leap compared to the 2.8% average typically observed from standard Google search results. This discrepancy suggests that by the time a user clicks a citation within an AI’s response, the heavy lifting of persuasion and information gathering is already done. We’ve shifted our internal benchmarks because being a primary source in an AI-generated answer is proving more lucrative than holding a top-three spot for a high-volume, low-intent keyword.

The math of visibility is no longer a simple game of counting sessions. We tracked a specific domain that managed only 8,500 visits yet appeared in 23,787 AI citations across different models. This disparity proves that high traffic doesn’t always equate to authority in the eyes of a generative engine. If your content is structured correctly, the LLM treats you as a foundational source even if human click-through rates haven’t caught up yet. It’s about being the data that the model trusts to build its answer.

The ROI of citation frequency

Measuring success now requires looking at how quickly a piece of content moves from publication to being cited. Traditional organic traffic growth often takes months of backlink building and patience. But when we started using GenWrite to handle our niche-specific data points, we noticed AI search visibility spiking within days. This speed is a byproduct of the tool’s ability to align with the semantic requirements of modern search agents.

| Metric | Traditional SEO | AEO (Citation Focus) |
|---|---|---|
| Avg. Conversion Rate | 2.8% | 14.2% |
| Time to Visibility | 3-6 Months | 1-2 Weeks |
| Primary Goal | Click-through Rate | Synthesis & Citation |

We’ve found that the best AI writer isn’t necessarily the one that produces the most flowery prose, but the one that structures data so clearly that an AI can’t help but reference it. This isn’t just theory; we’ve seen a 35% increase in organic SEO traffic for niche recipe pages that dynamically incorporated trending ingredients. The AI picked up on these specific data points and cited the pages as the definitive source for those trends.

Why traffic volume is a lagging indicator

If you’re still obsessed with raw sessions, you’re likely missing the shift toward high-intent referral loops. The reality is that 500 visitors from an AI citation are often worth more than 5,000 visitors from a broad informational query. The intent is deeper. When an AI synthesizes your content, it’s effectively giving the user a recommendation.
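It helps to run the numbers on that comparison. The sketch below plugs the conversion rates quoted earlier in this piece (14.2% for AI referrals, 2.8% for standard search) into the 500 versus 5,000 visitor example. On conversion counts alone, 500 AI-referred visits yield 71 conversions while 5,000 visits at the 2.8% average yield 140, so the comparison holds when the broad informational query converts below roughly 1.42%, or when each AI-referred conversion is worth more. The function name is an assumption, and the calculation ignores revenue per conversion.

```python
from decimal import Decimal


def expected_conversions(visits, rate_percent):
    """Expected conversions for a visit count and a conversion rate in percent."""
    return Decimal(visits) * Decimal(rate_percent) / Decimal(100)


# Rates quoted in this article; visit counts from the 500 vs 5,000 comparison.
ai_conversions = expected_conversions(500, "14.2")
search_conversions = expected_conversions(5000, "2.8")

# Conversion rate at which 5,000 broad visits only match 500 AI-referred visits.
break_even_percent = ai_conversions / Decimal(5000) * Decimal(100)

print(ai_conversions, search_conversions, break_even_percent)
```

`Decimal` is used instead of floats so the percentages stay exact.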

But this approach doesn’t always hold for every content type. High-commodity news might still require the old volume-based model to survive. Yet, for businesses where expertise and factual accuracy are the main selling points, the citation is the new currency. We’ve stopped chasing the

Why niche tools integrate better with our CMS stack

Measuring success through citation frequency and traffic is great, but those wins often feel like one-offs if your tech stack is a mess. You’ve likely felt the frustration of finishing a great piece of content only to realize the “last mile” of publishing is a manual slog. That’s a mistake. Generic AI strategies fall apart because they produce a document, but they don’t produce a web page. When you move to niche-specific SEO content software, the goal shifts from just writing words to building a content engine that lives inside your CMS.

Breaking the content silo

Saving time is only part of the equation. The real headache in a standard content creation workflow is the siloed nature of the work. This happens when your research, drafting, and SEO optimization happen in three different browser tabs. You copy text from a doc, paste it into WordPress, then manually re-add your headers, links, and images. It’s tedious, and it’s where errors creep in.

When your tools don’t talk to your CMS, you lose the ability to synchronize SEO scores in real time. Niche tools allow for a direct handshake. We’ve seen this work effectively when using logic similar to Orkes Conductor, where you build a sequence that triggers a series of actions (like checking for broken links or verifying schema) before the post ever goes live. It turns a manual checklist into an automated gatekeeper.
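The gatekeeper idea can be sketched in a few lines of plain Python. This is not the Orkes Conductor API; it is only an illustration of the pattern, where each check returns a list of problems and the post is blocked if any list is non-empty. The `Draft` fields, the required schema keys, and the check names are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Draft:
    title: str
    html: str
    jsonld: dict = field(default_factory=dict)
    internal_links: list = field(default_factory=list)


def check_schema(draft):
    """Flag required JSON-LD keys that are missing (key list is illustrative)."""
    required = ("@context", "@type", "headline")
    return [f"schema missing {key}" for key in required if key not in draft.jsonld]


def check_links(draft, known_paths):
    """Flag internal links that do not point at a known page."""
    return [
        f"broken internal link: {path}"
        for path in draft.internal_links
        if path not in known_paths
    ]


def run_gate(draft, known_paths):
    """Run every check; the draft may publish only if no problems are found."""
    problems = check_schema(draft) + check_links(draft, known_paths)
    return len(problems) == 0, problems


# Hypothetical draft with one schema gap and one dead link.
draft = Draft(
    title="Stoves at altitude",
    html="<h1>Stoves at altitude</h1>",
    jsonld={"@context": "https://schema.org", "@type": "Article"},
    internal_links=["/gear/stoves", "/old-page"],
)
ok, problems = run_gate(draft, known_paths={"/gear/stoves"})
print(ok, problems)
```

Keeping each check as a separate function makes it easy to add more gates later without touching the publish step.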

Why direct publishing changes the math

Direct publishing is more than a convenience; it’s a data integrity feature. When a tool like GenWrite pushes a post directly to your site, it preserves the metadata that generic LLMs often ignore. This doesn’t always hold true for every legacy system, but for most modern stacks, the integration is night and day. You aren’t just moving text; you’re moving a fully structured data object that’s ready for indexation. And while it might seem like a small detail, the way your CMS interprets these headers determines whether you rank for a featured snippet or get buried on page two.

Visibility engineering and schema

We’re seeing a massive shift toward visibility engineering: the practice of structuring content specifically for machine readability from the moment of creation. If your AI tool doesn’t understand JSON-LD or how to nest H3 and H4 tags for maximum crawl efficiency, you’re leaving money on the table.

A niche-specific generator bakes this technical SEO directly into the output. It handles the tedious tasks like alt text for images and internal link distribution without you needing to prompt for it every time. You end up with a page that’s as readable for a search bot as it is for a human. This integrated approach ensures that the SEO scores you see during the editing phase are exactly what the search engines see once the page is live. The real value lies in closing the gap between intent and execution.
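One small, checkable piece of the heading-nesting point is whether a page ever jumps a level, for example from an H2 straight to an H4. The sketch below uses only the standard library’s `html.parser` to collect heading levels and report such jumps. The class and function names are assumptions, and the check says nothing about how any search engine actually weighs heading structure.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Record the level of every h1-h6 start tag in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def skipped_levels(html):
    """Return (previous, current) pairs where a heading jumps more than one level down."""
    parser = HeadingCollector()
    parser.feed(html)
    return [
        (prev, cur)
        for prev, cur in zip(parser.levels, parser.levels[1:])
        if cur > prev + 1
    ]


# Illustrative markup: the h4 follows an h2 with no h3 in between.
page = "<h1>Guide</h1><h2>Setup</h2><h4>Edge case</h4><h2>Usage</h2>"
print(skipped_levels(page))
```

Moving back up the hierarchy (an H4 followed by an H2) is not flagged, since closing a subsection is normal.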

The part nobody warns you about: brand voice drift

Technical efficiency is a trap if your identity dissolves in the process. You can sync your CMS and automate the pipeline, but if the output sounds like every other site in your category, you’ve traded authority for volume. This is brand voice drift. It’s the slow, almost invisible erosion of your unique perspective until you become indistinguishable from a generic wiki.

I’ve seen this happen in controlled environments. In one exercise, teams tried to maintain a specific nonprofit’s community-driven tone using a standard AI article generator. The content was technically accurate. It followed the facts. But the warmth and urgency were gone. It read like a corporate manual. This happened because “approachable” is a useless instruction for an algorithm. It’s too subjective.

Why vague guidelines fail AI

Most brands rely on outdated PDF style guides that describe a vibe rather than a structure. These documents fail when they meet an LLM. If your guide says “be professional,” a general-purpose model will interpret that as “use long words and passive voice.” That’s the opposite of what actually works for engagement.

Your niche site content strategy depends on being the definitive voice in a specific corner of the internet. If you sound like a neutral observer, readers won’t return. They want the specific cadence and vocabulary of someone who actually knows the field. You have to feed the system patterns, not just adjectives.

Mapping communication patterns

To stop the drift, you have to move past adjectives. You need to analyze actual communication patterns: sentence structure, common idioms, and even the topics you refuse to cover. GenWrite handles this by allowing for more structured inputs that go beyond simple prompts. Using an AI blog generator that understands these nuances prevents the “graying” of your content.

But even the best tools require a reality check. I’ve found that drift often starts in the “middle ground” of content: the filler paragraphs where the AI defaults to safe, polite language. If you don’t explicitly tell the system to avoid these pleasantries, they will accumulate. Eventually, your brand’s sharp edges are sanded down.
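One way to turn “patterns, not adjectives” into something measurable is a small report that counts sentences, average sentence length, and occurrences of phrases you have decided are off-brand. The sketch below is an assumption about how such a check could look; the phrase list and function name are hypothetical, and the sentence splitter is deliberately naive.

```python
import re

# Hypothetical list of filler phrases a brand has chosen to avoid.
FILLER_PHRASES = [
    "it is important to note",
    "in today's world",
    "we hope this helps",
]


def voice_report(text, filler_phrases=FILLER_PHRASES):
    """Summarize simple voice signals: sentence count, average length, filler hits."""
    # Naive split on ., ! or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    words = re.findall(r"[A-Za-z']+", text)
    lowered = text.lower()
    hits = {p: lowered.count(p) for p in filler_phrases if p in lowered}
    average = len(words) / len(sentences) if sentences else 0.0
    return {
        "sentences": len(sentences),
        "avg_sentence_words": average,
        "filler_hits": hits,
    }


sample = "It is important to note that stoves vary. Pick one. Test it cold."
report = voice_report(sample)
print(report)
```

Running the same report over your best human-written posts gives a baseline to compare generated drafts against.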

The cost of neutrality

This doesn’t always hold true for every single post, especially very short news updates. However, for long-form authority building, the risk is real. You’re not just fighting for rankings; you’re fighting to remain recognizable. A specialized engine isn’t just about speed. It’s about ensuring that as you scale, you still sound like yourself.

If your content becomes too predictable, you lose the “hook” that keeps an audience loyal. Automation should amplify your voice, not replace it with a sanitized version. The reality is that most people won’t notice the drift until it’s too late and their bounce rates start climbing. By then, you have a massive library of content that feels like it was written by a committee of robots.

Where most teams get stuck during the AI transition

If brand voice drift is the subtle erosion of your identity, the automation bias trap is the sudden collapse of your authority. Imagine a marketing lead looking at a dashboard showing a 40% traffic drop after a month of high-volume AI posting. They followed the ‘best prompts’ and used the latest models, yet the results are cratering. This isn’t a failure of the technology, but a failure of strategy. It’s what happens when you prioritize output volume over the structural integrity of the information provided to your audience.

The trap of prompt glorification

Teams often get stuck in a cycle of prompt glorification, believing that a clever string of text can replace specialized domain knowledge. I’ve seen this play out in the SaaS world repeatedly. Look at the explosion of ‘PDF chat wrapper’ companies that launched recently. They almost all look identical because they rely on the same generic logic and the same generalist engines. When everyone uses the same AI text generator for blogs without a niche-specific data layer, the output becomes a sea of predictable sameness that search engines eventually ignore.

Lessons from the content debt audit

The real bottleneck isn’t how fast you can write; it’s the content debt you’re creating when you hit ‘publish’ too quickly. Take the OneCal turnaround as a primary example. They didn’t win by flooding the internet with more noise. Instead, they grew nearly 300% in five months by auditing their existing library, pruning the fluff, and restructuring for actual value. Most teams are too busy hitting the ‘generate’ button to realize they’re just digging a deeper hole with every automated post. Growth comes from precision, not just persistence.

Why the technical foundation still wins

Another massive blind spot is the technical foundation. You can have the most poetic content creation AI output imaginable, but if your schema is broken or your internal linking is non-existent, search engines won’t care. We often see teams get so caught up in the prose that they ignore the metadata that actually tells an LLM or a crawler what the page is about. High-quality text is only half the battle; the other half is making sure that text is discoverable and structured correctly within your CMS stack.

Overcoming the hallucination tax

Honestly, the evidence on ‘set it and forget it’ AI is mixed at best. While some niche sites see short-term wins, the long-term survivors are those who treat AI as a high-powered research assistant rather than a replacement for editorial judgment. If you aren’t fact-checking technical claims or verifying sources, you’re essentially paying a ‘hallucination tax.’ This tax is paid in lost credibility and declining rankings. You don’t need to write every word, but you must be the final authority on every claim your blog makes.

Building a content engine that compounds over time

Once you move past the initial hurdles of fact-checking and brand alignment, you’re no longer just publishing; you’re building an asset. Most teams view their blog as a linear timeline where the newest post is the most important. But a true content engine treats your site like a neural network. Every new piece of content should act as a supporting pillar for your existing high-ranking blog posts, creating a web of relevance that’s hard for competitors to untangle.

What does this look like after the first 90 days? It’s the ‘Office Space’ test. If a search engine crawler looks at your site and asks, ‘What would you say you do here?’ the answer needs to be immediate and undeniable. If you’re jumping from ‘best coffee beans’ to ‘how to fix a radiator,’ you’re diluting your authority. Using a niche-specific AI blog generator ensures that your expansion stays within the boundaries of your expertise, reinforcing your topical map rather than blurring it.

This is where organic traffic growth stops being a series of spikes and starts looking like a steady upward curve. But it doesn’t happen by accident. You have to connect your ‘intro’ content (the top-of-funnel ‘what is’ guides) to your ‘decision’ content, like case studies and product comparisons. When a reader lands on a blog post, they shouldn’t hit a dead end. They should be pulled deeper into a cohesive ecosystem that answers their next three questions before they even think to ask them.
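If you want to check this rather than eyeball it, a rough sketch follows. It assumes you can export your internal links as a simple mapping of page to linked pages and that you have labeled each page with a funnel stage. The URLs, the labels ‘intro’ and ‘decision’, and the function name `find_link_gaps` are all illustrative.

```python
def find_link_gaps(link_graph, stage):
    """Return (dead_ends, orphans, intro_gaps) for a site's internal links.

    link_graph maps each page to the internal pages it links to.
    stage maps each page to a funnel label such as "intro" or "decision".
    """
    pages = set(link_graph)
    linked_to = {target for targets in link_graph.values() for target in targets}

    # Pages with no outbound internal links: the reader has nowhere to go next.
    dead_ends = sorted(p for p in pages if not link_graph[p])
    # Pages nothing else links to: readers and crawlers rarely find them.
    orphans = sorted(p for p in pages if p not in linked_to)
    # Intro pages that never point at any decision-stage page.
    intro_gaps = sorted(
        p for p in pages
        if stage.get(p) == "intro"
        and not any(stage.get(t) == "decision" for t in link_graph[p])
    )
    return dead_ends, orphans, intro_gaps


# Hypothetical four-page site.
site = {
    "/what-is-ultralight": ["/stove-comparison"],
    "/what-is-a-bivy": ["/what-is-ultralight"],
    "/stove-comparison": [],
    "/case-study-pct": ["/stove-comparison"],
}
stages = {
    "/what-is-ultralight": "intro",
    "/what-is-a-bivy": "intro",
    "/stove-comparison": "decision",
    "/case-study-pct": "decision",
}
dead_ends, orphans, intro_gaps = find_link_gaps(site, stages)
```

In this toy example the comparison page is a dead end, two pages are orphans, and the bivy guide never reaches a decision page. Those three lists are your linking to-do list.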

The shift from volume to velocity

In the beginning, your goal is often just to fill the obvious gaps your competitors have left open. But as your engine matures, your strategy has to evolve. You stop looking for ‘low-hanging fruit’ and start creating the fruit. This means using your data to see which clusters are gaining traction and doubling down on them.
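Here is one way to read “gaining traction” from your own numbers. This is a sketch, not a formula anyone has blessed: it assumes you can total clicks per topic cluster for two equal periods, and the `min_clicks` cutoff of 50 is an arbitrary guard against tiny clusters producing huge percentages.

```python
def rank_clusters(previous, current, min_clicks=50):
    """Rank clusters by period-over-period click growth, best first.

    previous and current map cluster name to total clicks in each period.
    Clusters below min_clicks, or with no earlier baseline, are skipped.
    """
    ranked = []
    for cluster, now in current.items():
        before = previous.get(cluster, 0)
        if now < min_clicks or before == 0:
            continue
        ranked.append((cluster, (now - before) / before))
    return sorted(ranked, key=lambda item: item[1], reverse=True)


# Hypothetical click totals for two consecutive 28-day windows.
last_period = {"stoves": 200, "tents": 400, "boots": 10}
this_period = {"stoves": 300, "tents": 380, "boots": 30}
ranking = rank_clusters(last_period, this_period)
```

With these made-up numbers, stoves come out on top at 50% growth, tents slip slightly, and boots are ignored as too small to trust. The cluster at the top of the list is where the next batch of articles should go.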

It’s worth admitting that this momentum isn’t always linear. You’ll have weeks where a core update shifts the ground beneath you, or a competitor tries to out-volume your niche. Yet, when you’ve built a foundation of interconnected, high-quality pages, your site becomes remarkably resilient. You aren’t relying on a single ‘hero’ post to carry your traffic; you have a fleet of ranked pages that validate each other.

Turning traffic into a citation network

The final stage of a compounding engine is when your content starts earning links and mentions without your active intervention. When you consistently produce the most detailed, structurally sound content in a specific niche, other writers start using your pages as references.

This isn’t just about SEO anymore; it’s about becoming the ‘source of truth.’ By automating the heavy lifting of research and formatting with GenWrite, you free up your mental bandwidth to focus on these high-level strategic pivots. You’re no longer stuck in the weeds of word counts. Instead, you’re the architect of a system that grows more valuable every time you hit publish.

Final verdict: choosing systems over models

Stop treating LLMs like a magic wand. They aren’t. A model is a raw commodity: a statistical engine that predicts the next token based on its training data. If you base your entire growth strategy on a generic chat interface, you’re competing on a level playing field where nobody wins.

The real winners in organic search aren’t using the newest model. They’re building defensible systems. A system is a repeatable process incorporating niche data and brand voice. It’s the difference between a home cook and a high-efficiency commercial kitchen.

Why models fail in isolation

If your foundation is weak, AI will only amplify your failures. I’ve seen teams use what they thought was the best AI writer to pump out thousands of pages, only to see their traffic tank three months later. They scaled noise.

They treated the model as the solution rather than the engine. A model doesn’t understand your specific affiliate conversion goals. It doesn’t know that your readers prefer blunt, data-heavy comparisons over flowery adjectives. Prediction is not the same as strategy.

The system advantage

Successful affiliate networks have already made the pivot. They’ve stopped writing for bots and started writing for the humans who actually pay them. This requires an SEO content software stack that does more than just generate blocks of text. It needs to handle keyword research, competitor gap analysis, and internal linking automatically.

When you choose a system over a model, you’re choosing a workflow. You’re deciding that the process matters more than the individual output. This is where GenWrite excels. It doesn’t just give you a text box; it provides an end-to-end framework for building topical authority.

The stakes of sticking with generic tools

Misunderstanding this distinction is expensive. If you continue to rely on manual prompting, you’re building a house on sand. The moment a competitor deploys a niche-specific system, they’ll outpace your volume and outrank your quality.

This doesn’t always hold true for tiny hobby blogs, but for any business scaling content, the ‘hallucination tax’ of generic models is too high. You can’t afford to rebuild every sentence from scratch when publishing fifty articles a week. You need a system that grounds its claims in niche research up front, so your editorial review is a final check rather than a rewrite.

Don’t wait for a model update to save your rankings. It won’t. Look for the system that integrates into your CMS and enforces standards without constant babysitting. The future of SEO belongs to the architects of systems, not the prompters of models. What does your current workflow look like when you aren’t there to prompt it?

If you’re tired of generic AI content that doesn’t rank, GenWrite handles the niche-specific research and SEO heavy lifting so you can focus on building actual authority.

Frequently Asked Questions

Why does AI-generated content often lose its ranking after three months?

It’s usually because the content lacks unique value or firsthand insight. Search engines quickly identify raw, unedited AI output, and once the initial novelty wears off, those pages often get demoted for failing to provide a human perspective.

How do niche-specific AI generators differ from general chatbots?

Think of a general chatbot as a pantry of raw ingredients that you have to assemble yourself. A niche-specific tool is more like a professional meal-kit; it comes with pre-structured, SEO-optimized frameworks that already understand your industry’s specific intent.

Does using an AI article generator hurt my brand voice?

It can if you’re lazy with the output. You’ll need a human-in-the-loop to inject your team’s personality and fact-check technical claims, but tools like GenWrite help keep the structural consistency while you handle the creative nuances.

What is the best way to measure success with AI content?

Don’t just look at keyword rankings. Start tracking your ‘AI visibility’ and how often your content is cited as a source in generative search answers, as that’s where the real authority is built today.