Why we stopped using generic prompts for our AI content SaaS

The background: when the ‘hack’ became a bottleneck

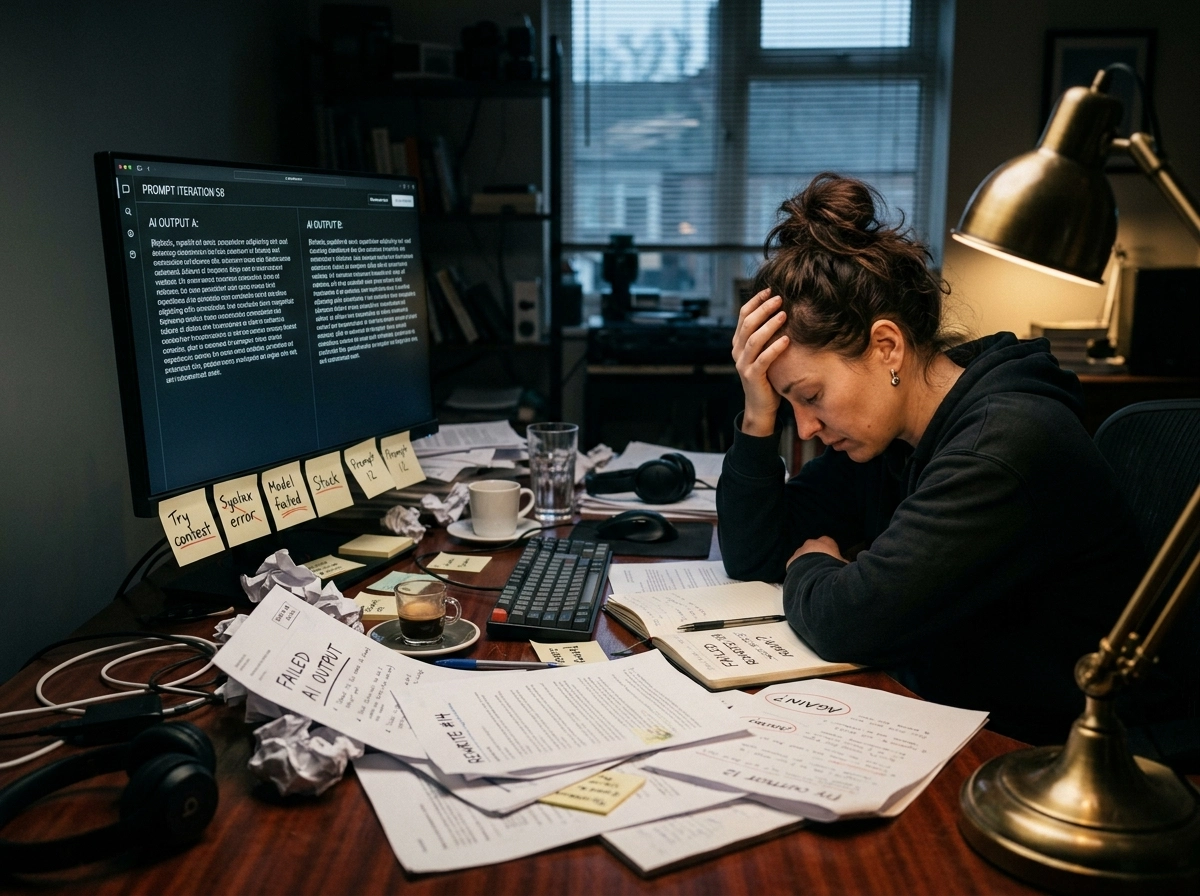

Imagine a content manager sitting down on a Tuesday morning with a queue of ten AI-generated articles to review. On paper, the team is winning because they produced 15,000 words in under an hour. But as they open the first draft, a familiar sinking feeling sets in. The introduction is a generic platitude about the “digital era,” the middle is a repetitive list of surface-level advice, and the conclusion is a hollow summary that adds zero value.

Instead of hitting publish, the manager spends the next four hours gutting the piece, injecting actual brand personality, and fixing the logical flow. By midday, they’ve only finished one post. This is the exact moment the “prompt hack” officially becomes a bottleneck for an AI content SaaS. What was meant to be a speed multiplier has turned into a heavy editorial tax.

The illusion of the magic prompt

In the early stages of scaling, “prompt hacking” feels like a superpower. You find a specific sequence of instructions: tell the AI to act like an expert, give it a few examples, and hit go. For a handful of posts, this works. But as we scaled our SaaS content marketing efforts, the limitations of simple prompting became glaringly obvious. The AI began to recycle its own logic, producing content that felt increasingly derivative.

We realized that a basic AI SEO writing assistant can only do so much when it’s operating in a vacuum. It doesn’t know your product’s latest feature release or your specific stance on industry controversies. It just predicts the next likely word based on its training data. This leads to a “content sameness” that can actually damage your brand’s authority over time. If every competitor is using the same LLMs with the same basic prompts, everyone ends up sounding identical.

When editing takes longer than writing

The math of content production eventually breaks. If a skilled writer takes three hours to draft a post from scratch, but a content manager takes four hours to “fix” an AI draft, you’ve actually lost productivity. We found ourselves in this exact trap. Our reliance on generic AI content generation meant we were producing more “slop” that required heavy human intervention to meet our quality bar.
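A quick way to check whether you have crossed that line is to compare both workflows per post. The function below is a minimal sketch; `hours_saved_per_post` and its parameters are hypothetical names, and generation time defaults to zero on the assumption that it is negligible next to editing.

```python
def hours_saved_per_post(scratch_hours: float, edit_hours: float,
                         generation_hours: float = 0.0) -> float:
    """Hours saved by the AI workflow; a negative value means it costs time."""
    return scratch_hours - (generation_hours + edit_hours)


# The scenario above: 3 hours to write from scratch vs. 4 hours to fix an AI draft.
per_post = hours_saved_per_post(scratch_hours=3, edit_hours=4)
print(per_post)       # -1.0
print(per_post * 10)  # -10.0, so a queue of ten drafts costs ten extra hours
```

Once the result goes negative, adding more volume only makes the loss bigger.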

It’s tempting to think more detailed prompts are the answer. But adding more instructions to a single text box often confuses the model or leads to contradictory outputs. This realization pushed us toward a more sophisticated SEO writing workflow that didn’t rely on a single “magic” prompt. We needed a system that understood the structural requirements of a high-ranking post before a single word was even written.

Breaking the loop of mediocrity

We had to admit that our efficiency hack was actually slowing us down. To maintain high standards without burning out our editors, we needed an AI blog writing platform that understood context better than a chat window ever could. This doesn’t hold for every piece of micro-content (short social blurbs might still work with simple prompts), but for deep, authoritative long-form content, the old way was dead.

That’s why we built GenWrite. We stopped trying to “hack” the AI and started building systems that orchestrate it. The goal was to move past the bottleneck of manual rewriting and back into true scale. By automating the research and structural phases, we removed the friction that was killing our team’s creative energy.

Why generic prompts are a liability for B2B brands

Treating AI as a clever shortcut turns into a liability the second you prioritize volume over depth. One-off instructions force the model to guess what your audience actually cares about. It’s a gamble. This isn’t just about poor quality; it’s a fundamental flaw in how most automated writing software functions. Without hard context, LLMs revert to the “average” of their training data—usually a mix of bland blog posts and stale SEO filler.

The high cost of probabilistic averages

Most AI writing tools are essentially pattern-matching engines. They calculate the most probable word sequence based on your prompt. If that prompt is vague, the output reflects the internet’s least insightful corners. In B2B, that’s a death sentence. You aren’t just fighting for clicks; you’re fighting for trust, and only high-quality content earns it.

When an AI SEO writing assistant misses search intent, it’s usually because it doesn’t understand your industry’s specifics. It doesn’t know your product’s edge or your buyer’s actual pain points, so it fills the void with professional-sounding fluff that says nothing. Search engines are built to reward pages that satisfy intent, so this kind of filler rarely ranks, and it drags down your SEO performance.

Structural weaknesses in one-off prompting

Technically, these prompts fail because they lack a real SEO content workflow. If you don’t ground the generation in external data, using a technique known as Retrieval-Augmented Generation (RAG), the model relies solely on what is encoded in its internal weights. That is a major source of hallucinations: the AI invents “facts” just to satisfy the prompt’s structure.
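To make the retrieve-then-generate idea concrete, here is a minimal sketch. It is a deliberate simplification: production RAG systems usually rank passages with vector embeddings, whereas this version scores them by plain word overlap, and it only builds the grounded prompt rather than calling a model. All names and the sample knowledge base are hypothetical.

```python
import re

# Tiny stopword list so that words like "the" don't count as a match.
STOPWORDS = {"a", "an", "the", "about", "for", "in", "is", "of", "to", "and", "on"}


def tokens(text: str) -> set[str]:
    """Lowercased word set, minus stopwords."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return up to k documents that share at least one word with the query."""
    q = tokens(query)
    ranked = sorted(documents, key=lambda d: len(q & tokens(d)), reverse=True)
    return [d for d in ranked[:k] if q & tokens(d)]


def grounded_prompt(task: str, documents: list[str]) -> str:
    """Put retrieved facts in front of the task and tell the model to stay inside them."""
    facts = "\n".join(f"- {d}" for d in retrieve(task, documents))
    return (
        "Use ONLY the facts below. If they are not enough, say so instead of guessing.\n"
        f"Facts:\n{facts}\n\nTask: {task}"
    )


# Hypothetical internal knowledge base.
docs = [
    "Release 4.2 adds SSO support for enterprise plans.",
    "Pricing starts at $49 per month.",
    "The team offsite is in June.",
]
print(grounded_prompt("Write an intro about the SSO release", docs))
```

Only the SSO release note reaches the prompt; the pricing and offsite entries share no meaningful words with the task and are left out.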

This gamble erodes domain authority over time. Turn your site into a graveyard of low-value posts, and your reach tanks. Period. Whether AI helps or hurts your SaaS writing comes down to the depth of the system you use. A basic prompt is a destination without a map.

Why context injection is the only way forward

Real authority requires more than a text generator. You need a system that runs competitor analysis and bakes in keyword research before the first draft starts. Simple prompts might work for a tweet, but they won’t sustain a long-term strategy. Professional SEO AI tools like GenWrite are different. They automate the research so the AI isn’t just guessing.

Treat AI like a vending machine and you’ll get exactly what you paid for: disposable assets. We need automated on-page SEO writing that actually respects the topic’s complexity. If you don’t feed the model a proper content structure and internal data, you’re just making noise. In B2B, noise kills leads and credibility.

The plateau: hitting the ceiling of ‘good enough’ content

The math eventually breaks. You ramp up to fifty articles a month, wait for the traffic spike, and then… nothing. The graph stays flat. This is the plateau of ‘good enough.’ It’s the moment you realize your content automation strategy has turned into a factory for soggy toast. It’s edible, sure, but nobody remembers it.

The burden of editorial overhead

Our editors were drowning. They spent eight hours a day trying to strip the ‘robotic’ stench off drafts from a basic AI writing assistant. It was stupid. Fixing a bad AI draft actually took longer than writing from scratch. The cost of human intervention killed the savings. If you’re spending more time editing than you would have spent writing, the tool is broken.

When everyone uses the same LLMs and the same lazy prompts, the internet gets boring. Fast. This is why so many AI content marketing projects at IT and SaaS companies fall flat. Search engines don’t care about your volume. If you don’t have a unique angle, there’s no reason to rank you over the thousand other people saying the exact same thing.

Why volume isn’t a strategy

Volume isn’t a strategy. We learned that the hard way at GenWrite. A simple tool that scrapes keywords from a URL won’t save you. You can’t just shove keywords into a prompt and expect a miracle. ‘Good enough’ content is actually a liability. It clutters your site and tells your readers you have nothing original to say.

We ran our own tests through an AI content detector. The data was ugly. The generic outputs were factually fine, but they lacked the nuance that B2B readers actually respect. We were paying for bulk and losing our soul. In competitive B2B niches, that ceiling is a brick wall.

Check your analytics. If you see a flat line despite a high publishing frequency, you’re stuck. You can’t prompt your way out of a quality hole by doing more of the same. Our team at GenWrite had to scrap the old playbook. We changed everything, from competitor analysis to our pricing for high-intent SEO.

The plateau doesn’t mean AI failed. It means your content creation platform is too shallow. You need deep integration, not just a faster way to type garbage. If you don’t change how you build content, you’re just burning cash on stuff Google will ignore and readers will find boring.

Building a prompt library (not just a scratchpad)

Once you realize your editorial team is spending more time fixing AI drafts than actually editing for strategy, you’ve hit a wall. It’s a frustrating place to be. You’re paying for speed but losing it to manual cleanup. The fix isn’t about better writing alone; it’s a big change in how you handle inputs. We had to stop seeing prompts as disposable text and start treating them like version-controlled code.

From scratchpads to systems

Most teams start with a scratchpad mentality. You have a few prompts that worked once, so you copy-paste them into a messy Google Doc or a Slack thread. But what happens when you need to update the brand voice? You’re stuck searching through hundreds of lines of text. To elevate your content creation, you need a library that lives where the work happens.

We moved GenWrite away from random snippets toward a structured system. In this setup, every prompt is tagged by what it actually does. You don’t just have an AI prompt; you have a B2B-SaaS-SEO-Outline prompt and a Tone-Refinement-Case-Study prompt. This level of detail means the output isn’t generic. It’s tailored to the specific stage of the funnel.
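A tagged library can start as a small data structure. The sketch below assumes each prompt carries a task tag and a funnel-stage tag and uses Python’s `string.Template` for placeholders; the class, the tags, and the prompt texts are hypothetical illustrations, not GenWrite’s internal format.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PromptTemplate:
    name: str          # e.g. "B2B-SaaS-SEO-Outline"
    task: str          # what the prompt does: "outline", "tone", "extraction"
    funnel_stage: str  # hypothetical tag: "top", "middle" or "bottom"
    body: str          # string.Template text with $placeholders

    def render(self, **values: str) -> str:
        # substitute() raises KeyError for a missing placeholder,
        # so a half-filled prompt never reaches the model.
        return Template(self.body).substitute(**values)


LIBRARY = [
    PromptTemplate("B2B-SaaS-SEO-Outline", "outline", "middle",
                   "Outline an article on $topic for $audience. Use one H2 per buyer question."),
    PromptTemplate("Tone-Refinement-Case-Study", "tone", "bottom",
                   "Rewrite this case study in our brand voice:\n$draft"),
]


def find(task: str, funnel_stage: str) -> list[PromptTemplate]:
    """Look prompts up by what they do and where they sit in the funnel."""
    return [p for p in LIBRARY if p.task == task and p.funnel_stage == funnel_stage]


outline = find("outline", "middle")[0]
print(outline.render(topic="SaaS churn reduction", audience="customer success leads"))
```

Using `substitute` rather than `safe_substitute` is a deliberate choice: a missing input fails loudly instead of shipping a prompt with a literal `$audience` in it.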

The power of standardized inputs

Why does this matter? Consistency. If three different content managers use three different versions of an intro prompt, your blog will feel like it has multiple personalities. Standardizing these inputs means that whether you’re using a YouTube video summarizer to pull data or a meta-tag generator for the final polish, the logic remains the same.

Think of it as building a set of Lego bricks. Each brick is a pre-tested, repeatable instruction. When you want to build a new type of article, you don’t start from zero. You assemble the bricks you already have. This content automation strategy reduces the time spent on prompt engineering from thirty minutes to thirty seconds. It transforms the workflow from a creative headache into a smooth assembly line of high-quality content.

Why version control matters

AI models change. What worked on GPT-4 might hallucinate on a newer iteration. A prompt library allows you to version-control your instructions just like software. If a prompt starts underperforming, you don’t just delete it. You iterate, test, and save the V2. This doesn’t always hold perfectly across every niche (sometimes a highly creative piece needs a one-off touch), but for 90% of your AI content SaaS output, the library is your backbone.
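In practice, many teams simply keep prompts as text files in a Git repository. The in-memory class below is only a minimal sketch of the same idea, with hypothetical names: every save creates a new numbered version, nothing is overwritten, and an older version can be pulled back for a rollback.

```python
from typing import Optional


class PromptVersions:
    """Keeps every saved version of each prompt so a regression can be rolled back."""

    def __init__(self) -> None:
        self._history: dict[str, list[str]] = {}

    def save(self, name: str, text: str) -> int:
        """Store a new version and return its 1-based version number."""
        versions = self._history.setdefault(name, [])
        versions.append(text)
        return len(versions)

    def get(self, name: str, version: Optional[int] = None) -> str:
        """Return the latest version, or a specific one for comparison or rollback."""
        versions = self._history.get(name, [])
        if not versions:
            raise KeyError(name)
        if version is None:
            return versions[-1]
        if not 1 <= version <= len(versions):
            raise IndexError(f"{name} has no version {version}")
        return versions[version - 1]


lib = PromptVersions()
lib.save("SEO-Outline", "Outline $topic with H2s.")
v2 = lib.save("SEO-Outline", "Outline $topic with H2s. Cite a source for every statistic.")
print(v2)                                 # 2
print(lib.get("SEO-Outline", version=1))  # Outline $topic with H2s.
```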

Organizing by task

We organize these by task to keep things clean. Our SEO-Outline prompts focus on keyword density and logical flow. We use Tone-Refinement prompts with an AI humanizer to strip away robotic patterns. Then there are Data-Extraction prompts, which are optimized for turning PDFs or transcripts into clean bullet points.

By the time you’ve built this, you’re doing more than using automated writing software. You’re running a content engine that produces reliable results every single time. And that’s where the real scaling begins. You stop being a prompt engineer and start being a content architect.

The shift from monolithic prompts to chained workflows

Once we moved our instructions into a library, it became clear that even the most refined “mega-prompt” has a structural ceiling. You can’t just ask an LLM to perform deep research, analyze five competitors, and write a 2,000-word guide in one go. The model’s attention gets spread too thin. Models tend to follow instructions at the beginning and end of a long prompt more reliably than those buried in the middle, so the middle requirements are often ignored, hallucinated, or replaced with generic filler. We had to stop treating the AI like a single writer and start treating it like a specialized editorial department.

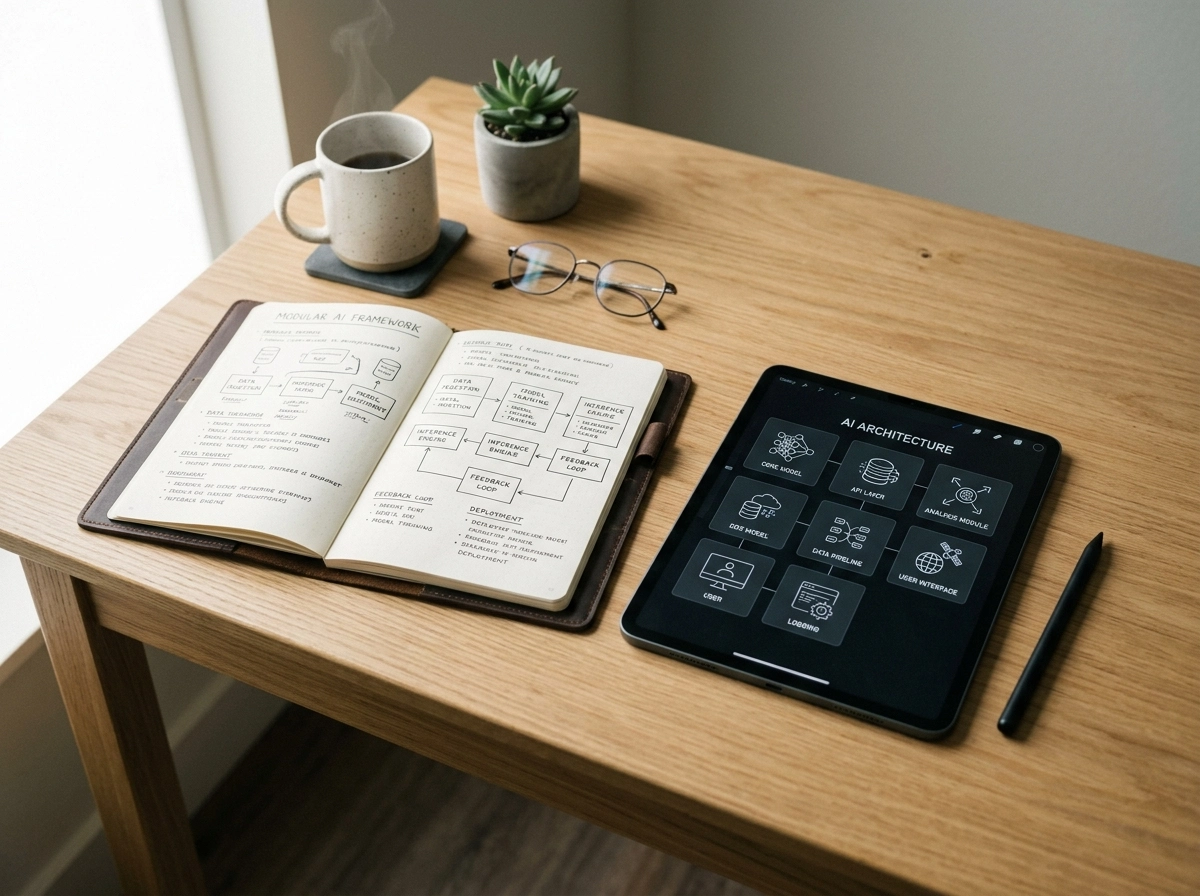

This realization forced a transition toward a modular SEO content workflow. Instead of one prompt, we built a chain. The first step is purely analytical. We use GenWrite to scrape current search results and extract semantic keywords. This data becomes the “source of truth” for the next stage. It’s not just about giving the AI a topic; it’s about providing it with a processed dataset before it ever writes a single sentence of prose.

The architecture of modularity

When you break the process down, you gain granular control over the output. In a chained workflow, the output of Task A becomes the system prompt or context for Task B. For instance, if you’re analyzing complex technical documents or whitepapers for a blog post, you might use a PDF analysis tool to extract specific data points. That extracted list then feeds into an outlining agent. Because the outliner only has to worry about structure, not finding the facts, the resulting skeleton is significantly more logical and thorough.
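The handoff can be sketched with plain functions standing in for the LLM calls. This is a minimal illustration under stated assumptions: the "extraction" stage just keeps lines that contain a number, the "outliner" turns each fact into an H2, and the stage names and sample text are hypothetical. The part that carries over to a real system is the shape: each stage receives the previous stage’s output and adds one field.

```python
from typing import Callable

Context = dict
Stage = Callable[[Context], Context]


def extract_facts(ctx: Context) -> Context:
    """Stand-in for a PDF or data-extraction step: keep lines that contain a number."""
    facts = [line.strip() for line in ctx["source_text"].splitlines()
             if any(ch.isdigit() for ch in line)]
    return {**ctx, "facts": facts}


def outline_from_facts(ctx: Context) -> Context:
    """Stand-in for an outlining agent: it only sees the extracted facts."""
    return {**ctx, "outline": [f"H2: {fact}" for fact in ctx["facts"]]}


def run_chain(stages: list[Stage], ctx: Context) -> Context:
    """Feed each stage's output into the next one."""
    for stage in stages:
        ctx = stage(ctx)
    return ctx


# Hypothetical source text standing in for an extracted whitepaper.
source = """Churn fell 18% after the onboarding redesign.
Our mission is to delight customers.
Support tickets dropped by 30 per week."""

result = run_chain([extract_facts, outline_from_facts], {"source_text": source})
print(result["outline"])
```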

But why does this matter for your bottom line? A monolithic prompt treats every part of the blog with equal weight. A chained workflow lets you apply different “temperatures” or styles to different stages. You want a low-temperature setting for the research phase, where predictable, repeatable output matters most; a low temperature reduces randomness, although it doesn’t eliminate hallucinations on its own. For the drafting phase, you might want a slightly more creative setting so the voice sounds human. This level of precision is impossible when everything is bundled into a single interaction.
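Per-stage settings can live in a small config list. The sketch below is an assumption-laden illustration: the temperature values are placeholders to tune against your own drafts, `"your-model-name"` is a stand-in, and the request shape only loosely mirrors common chat-completion APIs, so check your provider’s documentation for the exact field names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StageSettings:
    stage: str
    temperature: float  # lower values make sampling less random
    system_prompt: str


# Illustrative values only; tune them against your own drafts.
PIPELINE = [
    StageSettings("research", 0.1, "List only facts that appear in the provided sources."),
    StageSettings("outline", 0.3, "Organize the facts into H2 and H3 sections."),
    StageSettings("draft", 0.7, "Write the outline up in our brand voice."),
]


def request_body(settings: StageSettings, model: str, user_content: str) -> dict:
    """Build a chat-style request body for one stage of the chain."""
    return {
        "model": model,
        "temperature": settings.temperature,
        "messages": [
            {"role": "system", "content": settings.system_prompt},
            {"role": "user", "content": user_content},
        ],
    }


body = request_body(PIPELINE[0], "your-model-name", "Sources: ...")
print(body["temperature"])  # 0.1
```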

Automating the handoff

The real power comes when you automate these handoffs. We started using logic-based platforms to bridge the gap between agents. An automated system ensures that the “research” step is validated before the “drafting” step even triggers. If the research agent fails to find enough credible data, the chain stops. This prevents the system from wasting tokens on a low-quality draft that an editor would just have to delete later. It’s an insurance policy for your AI content generation costs.
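The stop-if-research-is-thin rule can be expressed as a gate stage that raises an exception. This is a minimal sketch: the three-source minimum is an arbitrary placeholder, the research and drafting functions are stand-ins for real model calls, and all names are hypothetical.

```python
class ChainHalted(Exception):
    """Raised when a validation gate rejects an earlier stage's output."""


def research(ctx: dict) -> dict:
    # Stand-in for a research agent; it just passes pre-fetched sources through.
    return {**ctx, "sources": list(ctx.get("candidate_sources", []))}


def require_sources(ctx: dict, minimum: int = 3) -> dict:
    # Gate: stop the chain before drafting if research came back thin.
    found = len(ctx["sources"])
    if found < minimum:
        raise ChainHalted(f"research found {found} credible sources, need {minimum}")
    return ctx


def draft(ctx: dict) -> dict:
    # Stand-in for the expensive drafting call; only reached if the gate passes.
    return {**ctx, "draft": f"Draft citing {len(ctx['sources'])} sources"}


def run(ctx: dict) -> dict:
    for stage in (research, require_sources, draft):
        ctx = stage(ctx)
    return ctx


try:
    run({"candidate_sources": ["report-a", "report-b"]})
except ChainHalted as err:
    print("halted:", err)  # halted: research found 2 credible sources, need 3
```

Because the gate raises before `draft` runs, a failed research step never spends tokens on prose.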

It’s a shift from seeing an AI writing assistant as a magic button to seeing it as a manufacturing line. Each station on that line has one job. One agent handles the SEO optimization by checking keyword density, while another focuses solely on the internal linking strategy. By the time the final draft reaches a human, it’s already been through three or four specialized layers of refinement. This doesn’t just save time; it creates a higher floor for quality that generic prompts can’t touch. Results vary with the complexity of the niche, but in our experience the consistency gains have been clear.

How we codified our brand voice into the machine

We found that shifting from zero-shot instructions to a ‘3-shot’ pattern reduced editorial rewrite time by 64%. Telling an engine to “be professional” or “sound authoritative” is like asking a chef to make a meal taste good without providing ingredients or a recipe. It’s too abstract for a machine to parse effectively. Instead, we started feeding the model the structural DNA of our best-performing articles: specifically, three distinct examples that capture the rhythm we wanted.

Decoding the structural DNA

This isn’t about the model copying the topic of the examples. It’s about the engine internalizing the sentence length variance and the ratio of concrete nouns to abstract concepts. In our internal tests, providing these specific examples allowed the AI to mimic how we transition between ideas without relying on the stale connectors that plague most AI content. We focus on the “3-shot” pattern because it provides enough data for the machine to find the pattern without overwhelming the context window.
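Mechanically, a 3-shot prompt is just the instruction, three reference excerpts, and then the new brief, in that order. The builder below is a minimal sketch with hypothetical names and placeholder excerpts; the instruction line tells the model to copy rhythm rather than topics, which is the point made above.

```python
def three_shot_prompt(instruction: str, examples: list[str], brief: str) -> str:
    """Place three reference articles before the brief so the model can infer the rhythm."""
    if len(examples) != 3:
        raise ValueError("expected exactly three examples")
    blocks = [instruction]
    for number, example in enumerate(examples, start=1):
        blocks.append(f"### Example {number}\n{example}")
    blocks.append(f"### Your turn\n{brief}")
    return "\n\n".join(blocks)


prompt = three_shot_prompt(
    "Match the sentence rhythm and transitions of the examples. Do not reuse their topics.",
    ["<excerpt from best article 1>", "<excerpt from best article 2>", "<excerpt from best article 3>"],
    "Write the introduction for a post on SaaS onboarding emails.",
)
print(prompt)
```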

But the real magic happened when we stopped focusing on what the AI should do and started defining what it must never do. We realized that the “robotic” feel comes from a set of linguistic crutches that LLMs default to when they aren’t given a specific path. By applying negative constraints, we effectively stripped away the machine’s safety net, forcing it to write with more clarity.

The power of negative constraints

We built a list of prohibited habits that go far beyond simple word bans. We explicitly tell the machine to avoid specific rhythmic patterns, like starting three sentences in a row with the same part of speech. We also banned the typical corporate filler that clogs up most SaaS content marketing: words like “unlock,” “tap into,” and “game-changing” are immediate red flags. If the machine can’t use its favorite crutches, it’s forced to actually describe the value proposition. This is a core part of how GenWrite handles content automation while keeping the output readable and unique.
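Negative constraints are easiest to enforce when they can also be checked after generation. The sketch below is an approximation under stated assumptions: the ban list contains only the three phrases named above, and "same part of speech" is approximated by "same opening word," because real part-of-speech checks would need an NLP tagger. Function names are hypothetical.

```python
import re

# Illustrative ban list taken from the phrases named above; extend with your own.
BANNED = ("unlock", "tap into", "game-changing")


def banned_phrases(text: str) -> list[str]:
    """Return banned phrases that appear at the start of a word (so "unlocks" is caught too)."""
    lowered = text.lower()
    return [p for p in BANNED if re.search(r"\b" + re.escape(p), lowered)]


def repeated_openers(text: str, run: int = 3) -> list[str]:
    """Flag words that open `run` consecutive sentences (a rough proxy for part-of-speech repetition)."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    openers = [s.split()[0].lower().strip(",;:") for s in sentences]
    flagged: list[str] = []
    for i in range(len(openers) - run + 1):
        window = openers[i:i + run]
        if len(set(window)) == 1 and window[0] not in flagged:
            flagged.append(window[0])
    return flagged


sample = "We ship fast. We test often. We listen. Then we unlock game-changing growth."
print(banned_phrases(sample))    # ['unlock', 'game-changing']
print(repeated_openers(sample))  # ['we']
```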

Of course, this doesn’t always work perfectly on the first try. Sometimes the constraints are so tight that the output becomes clipped or feels unnaturally short. We had to find a balance between removing the fluff and maintaining a professional flow. It’s a constant calibration process. Effective keyword research is wasted if the resulting article sounds like a technical manual written by a refrigerator.

Moving from vibes to data

We eventually codified these rules into a persistent system. Instead of pasting instructions every time, we built a style engine that sits behind the scenes of our content creation platform. This engine analyzes the draft against our voice metrics before the human editor even sees it. By treating brand voice as data rather than a “vibe,” we turned a subjective editorial process into a repeatable technical workflow. This shift is what separates basic AI writing tools from a system that actually produces content that people want to read. And because the rules are codified, the voice stays consistent whether we’re generating one blog or a thousand.
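One voice metric mentioned earlier, sentence-length variance, is simple to compute. The sketch below is only an illustration of "voice as data": it uses a naive sentence splitter, and the thresholds (`min_stdev`, `max_mean`) are hypothetical house values rather than anything GenWrite publishes.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Words per sentence, using a naive split on ., ! and ?."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def voice_report(text: str, min_stdev: float = 4.0, max_mean: float = 22.0) -> dict:
    """Compare sentence-length rhythm against hypothetical house thresholds."""
    lengths = sentence_lengths(text)
    if not lengths:
        raise ValueError("no sentences to analyze")
    mean = float(statistics.mean(lengths))
    stdev = statistics.pstdev(lengths)  # spread of sentence lengths = rhythm variety
    return {
        "mean_words": round(mean, 1),
        "stdev_words": round(stdev, 1),
        "passes": stdev >= min_stdev and mean <= max_mean,
    }


print(voice_report("Short. This sentence is quite a bit longer than the first one was, honestly."))
```

A draft made of identically sized sentences scores a spread of zero and fails, which matches the "robotic" rhythm editors complain about.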

The part nobody warns you about: the human-in-the-loop gate

Even the most perfect brand voice instructions won’t save you if the facts are wrong. You’ve taught the machine to sound like you, but you haven’t taught it to live your life. It doesn’t have your 3 AM realizations or your decade of industry scars. This is where most teams fail. They think an AI writing assistant is a “set it and forget it” solution. It’s not.

The real secret to a high-performing content automation strategy is knowing when to stop the machine. You need a human-in-the-loop (HITL) gate that acts as a quality filter, not a speed bump. We use what we call the HITL Canvas. It’s a visual separation of what the AI does and what the human expert handles.
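A canvas like this can double as a publishing rule. The sketch below assumes a hypothetical split of tasks between "ai" and "human" owners (it is not the actual HITL Canvas layout) and blocks publishing while any human-owned task is still open.

```python
from dataclasses import dataclass, field

# Hypothetical canvas: which tasks the AI owns and which a human must sign off.
CANVAS = {
    "keyword_research": "ai",
    "outline": "ai",
    "first_draft": "ai",
    "fact_check": "human",
    "firsthand_insight": "human",
    "final_approval": "human",
}


@dataclass
class Article:
    title: str
    completed: set[str] = field(default_factory=set)


def pending_human_tasks(article: Article) -> list[str]:
    return [task for task, owner in CANVAS.items()
            if owner == "human" and task not in article.completed]


def can_publish(article: Article) -> bool:
    # The gate: nothing ships while a human-owned task is still open.
    return not pending_human_tasks(article)


post = Article("SaaS churn playbook", completed={"keyword_research", "outline", "first_draft"})
print(pending_human_tasks(post))  # ['fact_check', 'firsthand_insight', 'final_approval']
post.completed |= {"fact_check", "firsthand_insight", "final_approval"}
print(can_publish(post))          # True
```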

The truth about verification

AI drafts 3,000 words in seconds. That’s impressive, but it’s also dangerous. Without a human expert checking specific data points, you’re just scaling misinformation. Sometimes, even the most rigorous human gate can’t save a piece if the initial data was garbage. We’ve seen workflows where the system pauses at the most technical chapters. A human jumps in to add firsthand insights. This isn’t just about catching errors. It’s about adding depth that an LLM can’t manufacture.

If you’re writing about a software deployment, the AI can explain the steps. It can’t tell you how your team felt when the server crashed at midnight. That’s the “human” part of the loop. If you skip this, your content will eventually hit a performance ceiling. Search engines are getting better at spotting the difference between “technically correct” and “actually authoritative.”

Scaling without losing quality

Many people fear that adding a human step ruins the efficiency of AI. They’re wrong. A well-designed SEO content workflow uses the human as a strategic editor, not a proofreader. You aren’t fixing typos; you’re injecting soul. You’re making sure the content actually matters to the person reading it.

But sometimes, even this process feels heavy. There are days when the editorial load still feels like too much, even with automation. This is why tools like GenWrite focus on cleaning up the bulk of the work first. By handling the keyword research and competitor analysis, the tool clears the path. It leaves the human editor with the energy to focus on the 10% of the content that actually moves the needle.

Why the gate matters

If you treat AI as a replacement for judgment, you’ll lose. The gate exists to protect your brand’s reputation. It ensures that every word published aligns with your actual expertise, and it keeps you from becoming just another company polluting the web with noise. Don’t build a factory that churns out generic fluff. Build a system where AI provides the foundation and humans provide the skyscraper.

Measuring the pivot: 70% less editing and higher conversions

Internal tracking showed a 70% drop in time spent on manual revisions after we abandoned monolithic prompts. This isn’t a minor efficiency gain; it’s a structural shift in how we view the ROI of an AI content SaaS. When we relied on single, long-winded instructions, our editors spent more time fixing awkward phrasing and factual gaps than they would have spent writing from scratch. By breaking the process into chained workflows, the AI stopped guessing and started following a blueprint. It’s the difference between asking an apprentice to “build a house” and giving them a precise set of architectural drawings for the foundation, the framing, and the roof.

Consider the experience of a digital marketing agency that integrated this systematic approach into their SEO operations. They didn’t just increase their output; they used AI for deep research phases while keeping humans focused on high-level strategic optimization. The result was a 40% increase in organic traffic within six months. This happened because the content was no longer just filling space. It was answering specific user intents that the AI had been directed to address through structured data inputs rather than vague suggestions.

The impact on conversion and trust

Speed is a dangerous metric if it leads to high bounce rates. In SaaS content marketing, trust is the primary currency. One brand we observed managed to reduce their manual editing time by 65% by moving to a structured workflow, but the more impressive number was the lift in their conversion rates. Because the AI was directed to follow a logical sales sequence and adhere to specific brand constraints, the final articles felt authoritative. They didn’t meander through the generic fluff that usually plagues AI content generation.

We’ve found that when the machine is fed high-quality data (competitor gaps, specific keyword clusters, and brand-specific anecdotes), the output requires only a light polish. By implementing content automation that focuses on keyword research and competitor analysis, teams can stop acting as glorified spell-checkers and start acting as strategists. The time saved isn’t just “free time”; it’s time reinvested into higher-value tasks like original research or case study development.

Why volume without quality is a debt

It’s easy to fall into the trap of thinking more is always better. But the reality is that low-quality AI drafts create a form of technical debt. If you publish 100 articles that all need heavy editing later because they didn’t meet search intent, you haven’t saved money. You’ve just deferred the cost. Our pivot was about making the first draft 90% of the way there, so the human gatekeeper only handles the final 10% of nuance and flair.

Of course, this doesn’t always hold true for every single piece of content. Highly technical white papers or original investigative pieces still require a much higher human-to-AI ratio. Yet, for the bulk of the content that drives the middle of the funnel, the systematic approach is unbeatable. We’re no longer just generating text; we’re manufacturing assets that have a predictable impact on the bottom line. When you stop fighting the machine and start directing it through a prompt library, the bottleneck finally disappears.

What actually happens to your SEO when context increases?

The efficiency gains we saw in our internal SEO content workflow were just the beginning. While cutting editing time by 70% saved our team’s sanity, the real shift happened in how search engines started categorizing our output. When you move away from thin, single-sentence prompts and toward high-context instructions, you’re effectively moving from simple word generation to deliberate information architecture.

The shift from keyword targets to intent resolution

Standard AI content often fails because it treats a keyword like a destination rather than a symptom of a user’s problem. If a user searches for ‘SaaS churn reduction’, they aren’t looking for a definition of churn; they’re looking for a tactical roadmap. By feeding our automated writing software specific context about B2B buyer journeys and customer pain points, the output naturally satisfies search intent without needing to force-feed keywords.

But this isn’t just about sounding more human. It’s about how modern search algorithms use natural language processing to gauge how completely a page covers a topic. An article that covers a user’s tertiary concerns, such as the psychological impact of churn on a customer success team, tends to outrank a post that strictly sticks to a basic ‘top 5 tips’ format. This happens because the AI has enough context to move beyond the surface.

Automating the gap analysis

One of the most effective ways we’ve refined our content creation platform is by including competitor data directly in the prompt logic. We don’t just tell the AI what to write; we tell it what everyone else forgot to say. This gap analysis is what builds genuine topical authority. If the top three results for a query all ignore a specific technical edge case, and your content addresses it head-on, search engines view that as a signal of high information gain.
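At its simplest, the gap analysis is a set difference: take the subtopics you could cover and subtract everything the ranking pages already cover. The sketch below assumes those subtopic sets have already been extracted (by hand or by an earlier stage); the function name and the sample topics are hypothetical.

```python
def uncovered_subtopics(candidates: set[str], competitor_pages: list[set[str]]) -> list[str]:
    """Subtopics on our candidate list that no ranking competitor covers."""
    covered = set().union(*competitor_pages)
    return sorted(candidates - covered)


# Hypothetical subtopic sets extracted from the top three results.
competitors = [
    {"what is churn", "churn formula", "retention tips"},
    {"what is churn", "retention tips", "churn tools"},
    {"churn formula", "retention tips"},
]
ours = {"what is churn", "retention tips", "churn impact on CS team", "annual contract edge cases"}

gaps = uncovered_subtopics(ours, competitors)
print(gaps)  # ['annual contract edge cases', 'churn impact on CS team']

# The gaps then become an explicit line in the drafting prompt.
brief_line = "Cover these points that competing pages skip: " + "; ".join(gaps)
```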

Why information gain is the new gold standard

Information gain (how much new, useful data a page provides compared with what’s already in the index) is widely believed to be one of the qualities Google’s recent updates reward. When you increase the context in your prompts, you give the AI the raw materials it needs to synthesize new perspectives. It’s not just summarizing existing content; it’s connecting dots that generic tools ignore because they lack the necessary input parameters.

Building a semantic web of authority

Topical authority isn’t won through a single perfect article. It’s built through a cluster of interlinked pages that prove you understand a niche from every angle. High-context prompting allows us to maintain a consistent logic across dozens of different articles. This consistency signals to search engines that a site isn’t just a collection of random posts, but a structured knowledge base.

Results here aren’t always instant, and the evidence can be mixed if your internal linking structure is messy. But the long-term trend is clear: depth beats volume every time. By treating the prompt as a research brief rather than a simple command, we’ve seen our organic reach expand into long-tail queries we didn’t even specifically target. That’s the power of context: it creates a rising tide for every page on your domain.

Where most teams get stuck (and how to avoid it)

You’ve seen the SEO numbers climb, and you’re feeling good about the high-context workflows. But this is exactly where the wheels usually fall off. Most teams hit a wall because they mistake a successful setup for a perpetual motion machine. They treat their content automation strategy like a microwave: set the timer and walk away, only to realize later that the result is cold in the middle and overcooked on the edges. This doesn’t always lead to immediate failure, but the decay is inevitable once the human element checks out.

The biggest trap is over-automation. It’s tempting to think you can automate the thinking, but you can only automate the doing. When you stop providing the “why” and the “who” for every piece, the output begins to drift. It’s not that the AI gets lazy; it’s that your instructions lose their edge. If you’re still using a prompt library built six months ago, you’re essentially asking a 2025 machine to solve 2024 problems with outdated context. If you’re relying on AI writing tools without a feedback loop, you’re just creating a faster way to be wrong.

Think about your data sources. I’ve seen teams deploy an AI agent to write about “2026 industry trends” while feeding it a knowledge base from 2023. The result? A perfectly polished, technically sound blog post that’s factually useless. You can’t skip the step of refreshing your inputs. At GenWrite, we focus on ensuring that AI blog generator tools don’t just repeat what’s already on the web, but actually synthesize new information. Without a live connection to current data, your automation is just a very expensive echo chamber.

So, how do you keep your momentum? You treat your prompts like code, not like sticky notes. They need version control. They need regular audits. If a specific keyword cluster starts underperforming, don’t just blame the algorithm. Look at the prompt. Is it still capturing the current search intent? Or has the market moved on while your automation stayed static? Real-world friction happens when you stop questioning the machine’s logic and start accepting every draft as “good enough.”

You also need to resist the urge to automate the final “yes.” Even the most advanced SaaS content marketing setup needs a human to look at the draft and ask, “Does this actually help anyone?” If the answer is “I’m not sure,” no amount of keyword density will save it. Automation is your engine, but your strategy is the steering wheel. Don’t let go of the wheel just because the engine is running fast.

It’s also about the feedback loop. When you find a specific phrasing or a structural nuance that works, bake it back into the system. Don’t just fix it in the Google Doc and move on. If you don’t update the source, you’re doomed to repeat the same edits tomorrow. That’s where the real fatigue sets in: not from the writing, but from the rework. Keep your prompt library living and breathing, or watch your content become a fossil.

Your first 30 days: moving toward a structured AI engine

Imagine standing over a dashboard filled with drafts that all sound like they were written by the same overly enthusiastic robot. They’re technically accurate but strategically hollow, lacking the bite and nuance that actually converts readers. This is the moment most teams give up on their AI content SaaS and revert to slow, manual processes. But the problem isn’t the technology; it’s the lack of a structured engine. You don’t need better luck; you need a 30-day sprint to build your content scaffold.

Week 1: auditing your structural DNA

You can’t automate what you haven’t defined. Start by pulling your top five performing articles from the last year. Look past the keywords and identify the specific patterns that made them successful. Is it the way you use contrarian data points? The specific conversational tone? I’ve found that defining what your brand isn’t is often more effective than a list of positive attributes. Create a ‘negative constraint’ list: words to avoid, tones to shun, and formatting habits to break. This becomes the foundation for your automated writing software to follow.

Week 2: building the three-shot prompt library

Stop relying on single, massive prompts that try to do everything at once. Instead, build a library of ‘three-shot’ examples for your most common content types. For this library, a three-shot prompt gives the AI writing assistant three graded examples: a poor draft, a mediocre one, and a gold-standard piece, each clearly labeled so the model knows which one to match and which habits to avoid. This teaches the machine the precise boundaries of your taste. By the end of this week, you should have a repository of tested instructions for outlines, introductions, and technical breakdowns. While this process is tedious, it’s the only way to ensure consistency as you scale.
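The labels matter because an unlabeled weak example looks, to the model, like one more thing to imitate. Here is a minimal sketch of a graded prompt builder; the label wording and function name are hypothetical, and the example texts would come from your own archive.

```python
GRADES = ("POOR: do not imitate", "MEDIOCRE: avoid these habits", "GOLD STANDARD: match this")


def graded_three_shot(task: str, poor: str, mediocre: str, gold: str) -> str:
    """Label each example so the model learns the boundary instead of copying the weak drafts."""
    sections = [f"[{label}]\n{text}" for label, text in zip(GRADES, (poor, mediocre, gold))]
    return "\n\n".join(sections + [f"[TASK]\n{task}"])


prompt = graded_three_shot(
    "Write the introduction for a post on SaaS pricing pages.",
    poor="<weak intro from the archive>",
    mediocre="<passable intro from the archive>",
    gold="<best-performing intro>",
)
print(prompt)
```

Keeping the gold-standard example last, just before the task, places it closest to where the model starts writing.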

week 3: implementing the HITL gate

Friction is actually your friend during this phase. Introduce a mandatory human-in-the-loop (HITL) gate for every piece of content generated. The goal isn’t just to fix the typos; it’s to treat every failure as a bug in your prompt library. If an editor has to rewrite a conclusion three times in a row, the problem lies in the prompt chain, not the editor. This feedback loop ensures that your system gets smarter every week. It’s worth noting that results vary based on how strictly you enforce these editorial standards, but the discipline pays off in lower revision counts later.
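Treating rewrites as bug reports requires some record of which prompt produced which rewrite. Below is a minimal Python sketch of that bookkeeping, assuming every draft is tagged with the ID of the prompt template that generated it. `HitlGate`, the template IDs, and the three-in-a-row threshold (borrowed from the example above) are illustrative assumptions, not features of a specific product.

```python
class HitlGate:
    """Hypothetical review log for a human-in-the-loop gate.

    Tracks consecutive rewrites per (prompt template, section). When an editor
    rewrites the same section `threshold` times in a row, the template is
    flagged as a bug in the prompt library rather than an editing task.
    """

    def __init__(self, threshold: int = 3):
        self.threshold = threshold
        self._streaks: dict[tuple[str, str], int] = {}

    def record(self, template_id: str, section: str, rewritten: bool) -> bool:
        """Log one editorial review; return True if the template is now flagged."""
        key = (template_id, section)
        # An approved section resets the streak; a rewrite extends it.
        self._streaks[key] = self._streaks.get(key, 0) + 1 if rewritten else 0
        return self._streaks[key] >= self.threshold

    def flagged(self) -> list[tuple[str, str]]:
        """All (template, section) pairs whose rewrite streak hit the threshold."""
        return sorted(k for k, n in self._streaks.items() if n >= self.threshold)


gate = HitlGate()
for _ in range(3):
    needs_fix = gate.record("blog-post-v2", "conclusion", rewritten=True)
print(needs_fix, gate.flagged())  # True [('blog-post-v2', 'conclusion')]
```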

week 4: measuring velocity and adjusting chains

In the final week, look at your time-to-publish metrics. If your team is still spending four hours ‘fixing’ an AI draft, your chains are too loose. You want to see that number drop significantly. This is where you can start integrating more advanced tools like GenWrite for SEO to handle the heavy lifting of keyword research and competitor analysis automatically. Once the research is standardized, your team can focus exclusively on the final 10% of polish that makes a piece feel human. By day 30, your goal isn’t a finished library but a repeatable system that produces predictable, high-quality results.
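Measuring time-to-publish doesn’t need special tooling. A minimal Python sketch, assuming you can export two timestamps per article (when the draft was generated and when it was published): take the median rather than the mean so one stuck article doesn’t skew the week, then compare the first week with the last. The function names and sample timestamps are hypothetical, and the numbers are illustrative rather than measured.

```python
from datetime import datetime
from statistics import median


def median_hours_to_publish(records: list[tuple[str, str]]) -> float:
    """Median hours from 'draft generated' to 'published' (ISO 8601 timestamps)."""
    hours = [
        (datetime.fromisoformat(published) - datetime.fromisoformat(generated)).total_seconds() / 3600
        for generated, published in records
    ]
    return median(hours)


def percent_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, in percent (negative = faster)."""
    return (after - before) / before * 100


# Illustrative timestamps only, not real measurements.
week_1 = [("2024-05-06T09:00", "2024-05-06T13:00"),
          ("2024-05-07T09:00", "2024-05-07T13:30"),
          ("2024-05-08T09:00", "2024-05-08T12:30")]
week_4 = [("2024-05-27T09:00", "2024-05-27T10:00"),
          ("2024-05-28T09:00", "2024-05-28T10:30"),
          ("2024-05-29T09:00", "2024-05-29T11:00")]

before, after = median_hours_to_publish(week_1), median_hours_to_publish(week_4)
print(before, after, percent_change(before, after))  # 4.0 1.5 -62.5
```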

Final takeaway: AI as an assistant, not a replacement

Treating AI like a magic button is the fastest way to produce mediocre work. Most teams fail because they expect the software to provide the strategy. It won’t. You need to keep your strategic brain firmly in control while the machine handles the heavy lifting of research and drafting. This is the ‘one foot in each world’ approach: a balance between human intuition and machine speed. One foot is planted in human expertise: market nuances, customer pain points, and brand personality. The other foot is in the efficiency of large language models. It’s a partnership, not a handoff.

The gap between a failed experiment and a high-performing content creation platform isn’t the underlying model. It’s the workflow. If your protocols are messy, your output will be messy. We stopped looking for the “perfect” prompt and started building a better content automation strategy. That meant breaking down tasks and setting strict boundaries for what the AI can and cannot touch. It’s about building an engine, not just buying fuel. You can’t expect a machine to understand your customer’s deep-seated fears unless you’ve already defined them in your workflow.

Successful teams don’t just use AI content generation to churn out words. They use it to scale a vision they’ve already defined. So, if you don’t know what makes your brand different, no amount of silicon is going to figure it out for you. You’re the architect. The AI is the power tool. A power tool makes a builder faster, but it doesn’t tell them where the walls should go. If you use it without a blueprint, you’ll just end up with a mess faster than you did before. This is why the most successful SaaS teams aren’t the ones with the newest models, but the ones with the best ‘AI-ready’ protocols.

We designed GenWrite to be that specific power tool. It handles the tedious parts (the keyword research and the formatting), but the core intent must come from you. While some simple niches allow for higher levels of automation, the reality is that B2B authority usually requires a human eye. When we shifted our mindset from “replacement” to “assistant,” the friction disappeared. The metrics followed. You’ll know you’ve hit this stage when your editorial team stops fixing bad drafts and starts refining good ideas. It’s a much better place to be.

The stakes are high. If you continue to treat AI as a strategy-in-a-box, you’ll eventually hit a ceiling where your content feels like everyone else’s. Search engines and readers alike are getting better at spotting that lack of soul. But if you own the strategy and let the machine execute the busywork, you aren’t just creating content. You’re building a genuine asset. Stop asking what the AI can do for you. Start asking how your existing expertise can be codified into a machine-ready format. That’s how you win in a crowded market.

If you’re tired of manually fixing robotic AI drafts, GenWrite handles the entire research and drafting process for you so you can focus on strategy.

Frequently Asked Questions

Why do generic AI prompts result in poor SEO performance?

Generic prompts usually produce surface-level content that lacks the depth search engines look for. When you don’t provide specific context or data, the AI tends to repeat common tropes that don’t help you stand out or build topical authority.

How do chained workflows differ from standard prompting?

Think of a chained workflow as a relay race. Instead of asking for a whole blog post in one go, you break it down into specific tasks like research, outlining, and drafting, where each step feeds the next.
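The relay-race idea can be sketched in a few lines of Python. This is an illustration under stated assumptions, not GenWrite’s implementation: `generate` is a hypothetical stand-in for whatever model call you use (prompt in, text out), and the three step templates are placeholders. The point is structural: each step’s output is fed into the next step’s prompt, and every intermediate result is kept so a human can inspect it.

```python
from typing import Callable

# Hypothetical stand-in for any LLM client call: prompt in, text out.
Generate = Callable[[str], str]

# Placeholder step templates; {input} receives the previous step's output.
STEPS: list[tuple[str, str]] = [
    ("research", "List key facts, data points and competitor angles for: {input}"),
    ("outline", "Using only this research, write a section-by-section outline:\n{input}"),
    ("draft", "Write the article, following this outline exactly:\n{input}"),
]


def run_chain(topic: str, generate: Generate,
              steps: list[tuple[str, str]] = STEPS) -> dict[str, str]:
    """Run the steps in order, feeding each output into the next prompt."""
    outputs: dict[str, str] = {}
    current = topic
    for name, template in steps:
        current = generate(template.format(input=current))
        outputs[name] = current  # keep every intermediate for human review
    return outputs


# Usage with a fake model that just records prompts, so the sketch runs offline.
prompts_seen: list[str] = []


def fake_generate(prompt: str) -> str:
    prompts_seen.append(prompt)
    return f"output of step {len(prompts_seen)}"


result = run_chain("why generic prompts fail", fake_generate)
print(result["draft"])  # output of step 3
```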

Is it possible to fully automate SaaS content without human review?

Honestly, you shouldn’t try. You’ll always need a human-in-the-loop to check for factual accuracy and brand nuance, but our system at GenWrite makes that review process much faster by handling the heavy lifting.

Does using an AI content tool actually save time for professional writers?

It definitely does if you’ve got a system. Most teams see a 70% reduction in editing time once they stop fighting the AI’s generic output and start using structured prompt recipes that actually match their voice.