Why does my AI SEO article writer keep repeating the same phrases?

Introduction

We’ve all been there. You open a new AI draft, hoping for something great, only to realize the machine is stuck on repeat. It’s frustrating. One minute the text looks fine, and the next, you see the same tired phrases popping up every other paragraph. It makes your content feel less like a guide and more like a broken script.

Why the mathematical lottery leads to loops

This isn’t a random bug. It’s how large language models (LLMs) actually work. They don’t ‘think’ in the way we do; they play a statistical game. The goal is to find the most likely next word in a chain. When an AI blog writer doesn’t have enough direction, it defaults to the safest, most generic patterns it can find.

Things get messy when the prompt is too thin. If the AI hits a dead end, it starts circling back to familiar territory. That’s how you get those common AI writing issues. It’s a problem because repetition tells your readers—and the search engines—that there isn’t much depth here.

The high price of predictable prose

The stakes are high. Google is getting much better at spotting content that doesn’t offer anything new. While AI writing risks aren’t usually about a total ban, they are about performance. If your automated on-page SEO writing feels like a template, people will leave your site.

Readers can smell a bot from a mile away. Using an automated content creation tool requires a real balance. You want the speed, but you need the editorial touch too. At GenWrite, we break these loops by leaning on keyword research and solid content structure. It gives the AI a clear path so it doesn’t wander.

Troubleshooting your AI writer output quality

So, how do you fix it? Start by accepting that SEO automation tools aren’t all the same. Some just spin text. Others, like our AI SEO tools, use smarter logic to avoid sounding like a robot. If you’re troubleshooting, look at your prompt complexity first.

You might need to adjust your SEO optimization or use an AI content detector to find the loops. For those doing bulk blog generation, catching these patterns early is the difference between a growing site and one that just sits there. It isn’t about more words. It’s about better ones.

The mechanics of the ‘greedy’ next-token predictor

The core of why an LLM repeats itself lies in its fundamental architecture as a statistical engine. It isn’t thinking about your topic in a human sense; it’s calculating the probability of the next piece of text, known as a token. When a model operates under a greedy decoding strategy, it selects the single highest-probability token at every step. While this sounds efficient, it frequently traps the AI in a loop of least resistance. It chooses the path it has seen most often in its training data, which is usually the most generic and repetitive phrasing possible.
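
To make the loop concrete, here is a minimal sketch of greedy decoding over a toy next-token table. The `BIGRAMS` table and its probabilities are invented for illustration and don't come from any real model. A real LLM also conditions on the whole context rather than only the previous token, but the "always take the top choice" step is the same idea.

```python
# Toy next-token table: each token maps to candidate next tokens
# with made-up probabilities (hypothetical values, for illustration only).
BIGRAMS = {
    "seo": {"is": 0.6, "helps": 0.4},
    "is": {"a": 0.7, "vital": 0.3},
    "a": {"vital": 0.55, "useful": 0.45},
    "vital": {"tool": 0.8, "step": 0.2},
    "tool": {"and": 0.6, "for": 0.4},
    "and": {"seo": 0.9, "content": 0.1},
}


def greedy_decode(start, steps):
    """Always pick the single most probable next token."""
    tokens = [start]
    for _ in range(steps):
        options = BIGRAMS.get(tokens[-1])
        if not options:
            break  # no known continuation for this token
        tokens.append(max(options, key=options.get))
    return tokens


print(" ".join(greedy_decode("seo", 11)))
# prints: seo is a vital tool and seo is a vital tool and
```

Because the top choice never changes, the output cycles through the same six words for as long as you let it run.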

The trap of high-probability tokens

When I look at why SEO content writing software sometimes produces stale drafts, it’s often due to this low-entropy selection. High-probability tokens are predictable. If the model starts a sentence with a common phrase, the statistical likelihood of specific following words increases. This creates a feedback loop. The model isn’t just picking words; it’s falling into a groove that it can’t easily exit without external noise or better sampling parameters like Top-P or Temperature.
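
For readers who want to see what Temperature and Top-P actually do, the sketch below applies both to a small set of scores. The `LOGITS` values and function names are assumptions made up for this example, and production inference libraries implement these steps with their own details.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities; a lower temperature sharpens the peak."""
    scaled = {tok: score / temperature for tok, score in logits.items()}
    peak = max(scaled.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - peak) for tok, s in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def top_p_filter(probs, top_p):
    """Keep the most likely tokens until their cumulative probability reaches top_p."""
    kept, cumulative = {}, 0.0
    for tok, p in sorted(probs.items(), key=lambda kv: kv[1], reverse=True):
        kept[tok] = p
        cumulative += p
        if cumulative >= top_p:
            break
    total = sum(kept.values())
    return {tok: p / total for tok, p in kept.items()}  # renormalize


def sample_next(logits, temperature=1.0, top_p=1.0, rng=random):
    """Sample one token after applying temperature and nucleus (top-p) filtering."""
    probs = top_p_filter(softmax_with_temperature(logits, temperature), top_p)
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]


# Hypothetical scores for four candidate next words.
LOGITS = {"maximize": 3.0, "increase": 2.0, "boost": 1.5, "expand": 1.0}
cold = softmax_with_temperature(LOGITS, 0.2)
warm = softmax_with_temperature(LOGITS, 1.5)
print(round(cold["maximize"], 3), round(warm["maximize"], 3))
```

At a low temperature nearly all of the probability sits on "maximize", which is close to greedy behavior. Raising the temperature spreads probability across the alternatives so the sampler can leave the groove.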

If you’re using a standard AI writing tool without fine-tuned controls, the model effectively becomes an echo chamber. It sees its own previous output in the context window and assumes that specific style is the target. If the previous paragraph ended with a summary, the greedy predictor is likely to start the next one with a nearly identical summary. It’s following the patterns it just created, and it doesn’t always know how to stop.

Perplexity and the loss of surprise

In the world of Natural Language Processing (NLP), we use a metric called perplexity to measure how well a probability model predicts a sample. Low perplexity means the text is very predictable. While that’s great for basic grammar, it’s often a death sentence for AI content quality. When an AI chooses only low-entropy words, the text loses the natural surprise that human writers provide through varied vocabulary and unexpected sentence structures.
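
Perplexity is easy to compute once you have the probability a model assigned to each token: it is the exponential of the average negative log-probability. The sketch below uses two invented probability lists (not real model output) to show how predictable text scores lower.

```python
import math


def perplexity(token_probs):
    """exp of the mean negative log-probability assigned to each token."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)


# Hypothetical per-token probabilities for two short passages.
predictable = [0.9, 0.85, 0.9, 0.95]  # the model saw every word coming
varied = [0.3, 0.1, 0.5, 0.2]         # the model was surprised more often

print(round(perplexity(predictable), 2), round(perplexity(varied), 2))
```

A passage where every token had probability 0.5 would score exactly 2, which is one way to read the number: the model was, on average, choosing between two equally likely options.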

This predictability makes it easy for search engines to flag content as non-human. If you want to fix AI articles that hurt SEO, you have to break the greedy cycle. At GenWrite, we focus on building workflows that introduce diversity into the prompt chain. By providing an SEO content optimization tool that analyzes competitor data first, we force the model out of its generic defaults and toward more unique outputs.

The feedback loop in the context window

The context window is the model’s short-term memory. If the AI generates a repetitive phrase once, that phrase is now part of the input for the next token. The model looks back, sees the repetition, and interprets it as the established pattern for the document. It’s not a bug; it’s the model trying to be consistent based on the data it just generated. This is where the echo chamber effect truly takes hold, as the model reinforces its own stylistic quirks.

Breaking this requires more than just a simple instruction. It requires a sophisticated content creation strategy that provides enough unique data points to steer the predictor away from the most obvious paths. Our content automation case study demonstrates that when you supply high-quality research as part of the prompt, the model has more high-probability options that aren’t just repetitive filler.

Why generic prompts fail

If you give a model a vague instruction, it defaults to the center of its training data. This center is made of millions of generic blog posts. The greedy predictor sees “blog post” and “SEO” and immediately reaches for the most overused transitions and structures. It’s the path of least resistance. To get better results, you need a deep understanding of SEO and how to structure prompts that demand specific, high-perplexity responses. The evidence here is mixed: some models handle greedy decoding better than others, but the core mechanic remains a primary hurdle for high-quality output.

Why does my AI writer repeat specific words in every paragraph?

The “greedy” prediction model I mentioned earlier doesn’t just favor common grammar; it develops an obsession with specific “crutch” words. You’ve seen it. An article about software suddenly uses “maximize” five times in two paragraphs. It’s not a lack of data. It’s a mathematical bias toward words that have high probability scores in a given context.

This repetition happens because the model calculates that “maximize” is the safest, most likely token to follow a business noun. Without a counterweight, it chooses that same safe path every time. This is where the frequency penalty comes in. It’s a parameter, usually ranging from -2.0 to 2.0, designed to discourage the model from reusing the same tokens.

Tuning the frequency penalty

Think of the frequency penalty as an “innovation tax.” When you set this value (ideally around 0.5 to 0.8), the model applies a penalty to the probability score of any word it has already used in the current generation. The more a word appears, the harder it becomes for the AI to choose it again. It forces the engine to look at the next best synonyms.

If you’re using content automation workflows, ignoring this setting is a recipe for robotic text. A low or zero penalty means the AI stays in its comfort zone. But push it to 0.7, and the AI starts swapping “maximize” for “increase,” “boost,” or “expand.” It makes the prose breathe. Adjusting these numbers isn’t a magic fix for every model, as results vary based on the underlying architecture.
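
Here is a rough sketch of that effect. It uses the subtractive form described in OpenAI's API documentation, where each prior use of a token lowers its score by the penalty value; other providers may apply the penalty differently. The `LOGITS` scores are invented for the example.

```python
from collections import Counter


def apply_frequency_penalty(logits, generated_tokens, frequency_penalty):
    """Subtract penalty * (times already used) from each candidate's score."""
    counts = Counter(generated_tokens)
    return {
        tok: score - frequency_penalty * counts[tok]
        for tok, score in logits.items()
    }


# Hypothetical scores: "maximize" starts out as the favorite.
LOGITS = {"maximize": 3.0, "increase": 2.4, "boost": 2.2}

history = ["maximize", "maximize"]  # the draft has already used it twice
adjusted = apply_frequency_penalty(LOGITS, history, 0.7)
best = max(adjusted, key=adjusted.get)
print(best)  # prints: increase
```

With an empty history, "maximize" still wins. After two uses at a penalty of 0.7 its score drops from 3.0 to 1.6, and "increase" becomes the top candidate.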

Why word-level variety matters for SEO

Google’s systems aren’t necessarily looking for a specific “AI score,” but they are looking for helpful information. Repetitive jargon is a hallmark of low-effort content. When your writing is bloated with the same three adjectives, it signals to the reader (and the algorithm) that the content lacks depth.

Effective AI SEO writing strategies prioritize linguistic diversity. It’s about moving beyond the first-layer associations. If your AI writer is stuck in a loop, it’s failing to map the broader semantic territory required to rank. You need to edit AI content to make it memorable, but you can save hours of manual work by getting the frequency penalty right at the start.

Finding the sweet spot

But don’t go too far. If you crank the penalty to 2.0, the AI will struggle to use necessary words like “the” or “and,” or it will hallucinate bizarre synonyms just to avoid repetition. It’s a balance. At GenWrite, we focus on picking the best SEO writing tools that allow for this level of granular control.

The reality is that AI content sounds the same because most people use default settings. They accept the first draft without realizing the engine is just playing it safe. If you want better results, you have to break the model’s habit of taking the path of least resistance. The goal is forcing the AI to work harder for its word choices.

And this isn’t just about avoiding a few words. It’s about the entire structure of the piece. When you vary the vocabulary, the sentence structure often follows suit. But if you let the AI repeat “maximize” every other line, the rhythm stays flat. You end up with a wall of text that is technically correct but entirely unreadable. So, take the time to tweak those parameters before hitting generate.

Is your prompt forcing the AI into a loop?

Imagine you’ve hired a writer and given them a single instruction: “Write about marketing.” Without any further guidance, that writer will likely produce a generic, high-level summary that says nothing new. They’ll fall back on cliches because you haven’t given them a specific direction to explore. AI models behave the same way. When a prompt is too broad, the model defaults to the most statistically probable path: the “average” of all the training data it has consumed. This results in a feedback loop where the AI repeats the same safe, empty phrases because it lacks the constraints to do anything else.

While frequency penalties help at the word level, they don’t solve the problem of conceptual loops. If your prompt asks for a “professional blog post about SEO,” the AI sees a massive, undefined territory. It starts every paragraph with a variation of “In the digital age” or “SEO is a vital tool” because those are the most common ways people introduce the topic. To break this, you need to provide a specific persona or a unique data set. I’ve found that providing a “Gold Corpus,” a collection of your best-performing, most distinctive articles, acts as a guardrail that prevents the model from sliding into generic territory.

The trap of vague adjectives

Many users try to fix repetitive outputs by piling on adjectives like “professional,” “engaging,” or “comprehensive.” The problem is that these words are subjective and carry no technical weight for an LLM. Instead of telling the AI to be “engaging,” give it a structural constraint. Tell it to “start every paragraph with a provocative question” or “use a case study in the second section.” By tightening the parameters, you make it mathematically difficult for the AI to fall back on its default patterns. This is a core part of improving AI content quality and ensuring your output doesn’t just sound like a rewritten Wikipedia entry.

How to fix AI loops with better data

If you find your AI blog generator is stuck in a loop, the issue is often a lack of “new” information in the context window. When the AI has nothing specific to chew on, it starts eating its own tail, repeating its previous logic to fill space. At GenWrite, we address this by feeding the system real-time keyword research and competitor data. This forces the model to synthesize new information rather than just predicting the next most likely word in a vacuum.

But even with good data, you have to watch out for structural repetition. If the AI sees a list in the first half of the article, it might try to force every subsequent section into a list format. This isn’t a glitch; it’s the model trying to maintain consistency based on the patterns it established early in the document. Break these patterns by explicitly calling for variety in your prompt. Ask for a mix of short, punchy observations and longer, analytical paragraphs to keep the rhythm from becoming a monotonous drone.

Frequency Penalty vs. Presence Penalty

A shift of just 0.5 in the presence penalty parameter can reduce the re-occurrence of specific concepts by nearly 40% in long-form generation. While prompt engineering handles the intent of the piece, these penalties act as the mathematical friction needed to keep a model from sliding into a loop. Most users treat these two settings as interchangeable, but they solve fundamentally different problems in the text generation pipeline. If you don’t understand the distinction, you’ll likely fix word-level repetition only to find your articles still feel conceptually stagnant.

The cumulative cost of frequency

Frequency penalty functions like a progressive tax on specific tokens. Every time a word appears, the model applies a penalty that grows with each subsequent use. If you’re optimizing AI article writers to avoid sounding like a broken record, this is your primary tool. It doesn’t just look at whether a word exists in the previous text; it counts the tally.

If the word “optimization” appears three times in a single paragraph, the frequency penalty makes it significantly less likely to appear a fourth time. This is the most direct AI writing repetition fix for small-scale patterns. But it’s not a silver bullet. Sometimes, a high frequency penalty forces the model to choose awkward synonyms just to avoid a common word, leading to prose that feels strangely forced or overly academic.

Presence penalty as a binary gate

Presence penalty works differently. It’s a flat tax. Once a token appears even once, the penalty is applied in full. It doesn’t matter if the word “automation” appears once or ten times; the penalty remains the same. The penalty still acts on individual tokens, but because it nudges the model toward words it hasn’t used at all, the practical effect is to discourage recycling the same topics or conceptual frameworks over and over. It’s less about how often a specific word appears and more about its “presence” in the context window.

Think of it as a topic gatekeeper. If you’re generating a long-form post and the model spends three paragraphs talking about the same case study, the presence penalty is likely too low. By increasing it (typically between 0.1 and 1.0), you’re telling the AI to move on to fresh territory once it’s checked a concept off the list. It pushes the model to explore the full breadth of your prompt rather than digging a hole in one specific spot.
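
The difference between the two taxes is easiest to see side by side. This sketch again assumes the subtractive formula from OpenAI's API documentation (a per-use frequency term plus a one-time presence term); the function name is my own.

```python
def penalty_amount(count, frequency_penalty, presence_penalty):
    """Total amount subtracted from a token's score after `count` prior uses."""
    flat = presence_penalty if count > 0 else 0.0  # one-time charge
    return frequency_penalty * count + flat        # plus a per-use charge


print("uses  frequency-only  presence-only")
for count in range(5):
    freq_only = penalty_amount(count, 0.25, 0.0)
    pres_only = penalty_amount(count, 0.0, 0.5)
    print(f"{count:>4}  {freq_only:>14.2f}  {pres_only:>13.2f}")
```

The frequency column keeps climbing with every use, while the presence column jumps once and then stays flat.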

Why the distinction matters for SEO

When you’re using a blogging agent to scale content, you need a balance. A high frequency penalty keeps the prose clean, but a high presence penalty ensures the article actually covers multiple points. If your writing sounds AI-generated because every paragraph starts with the same transition or returns to the same core argument, you’re likely facing a presence penalty issue. This is particularly common in listicles where the AI might try to use the same reasoning for every item on the list.

Finding the equilibrium

There isn’t a single “perfect” setting because the ideal values change based on the model’s temperature and the complexity of the topic. However, a common mistake is cranking both values to the maximum (2.0). When you do this, the model often becomes incoherent. It struggles to find any words that haven’t been “taxed,” resulting in gibberish or strangely creative but irrelevant sentences.

A frequency penalty of 0.1 to 0.3 is often enough to kill the “broken record” effect without damaging the flow; move toward the 0.5 to 0.8 range only if crutch words keep coming back. For the presence penalty, 0.5 is often a sweet spot for ensuring the AI moves through an outline rather than lingering on the first point. Comparing individual writers to an AI SEO writing assistant stops being the useful question once you treat these parameters as the editorial controls they actually are.

It’s all about managing the “greedy” nature of the token predictor. If the model thinks “efficient” is the best next word, it will pick it every time unless the penalty makes it too expensive. By layering these two penalties, you create a dynamic environment where the AI is forced to reach for a broader vocabulary and a wider range of ideas. It’s not just about stopping words; it’s about forcing the engine to keep moving forward.
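
If you set these values in code, a small guard can catch out-of-range settings before a request goes out. This is a sketch only: the parameter names follow the OpenAI-style request fields, the -2.0 to 2.0 range is the one mentioned earlier, and the default `temperature` of 0.7 is an assumption rather than a recommendation from any vendor.

```python
def build_sampling_params(frequency_penalty=0.2, presence_penalty=0.5, temperature=0.7):
    """Return validated sampling settings for a chat-completion-style request."""
    penalties = {
        "frequency_penalty": frequency_penalty,
        "presence_penalty": presence_penalty,
    }
    for name, value in penalties.items():
        if not -2.0 <= value <= 2.0:
            raise ValueError(f"{name} must be between -2.0 and 2.0, got {value}")
    return {"temperature": temperature, **penalties}


params = build_sampling_params()
print(params)
```

You would pass the resulting dictionary along with your prompt to whatever client your tool uses. Check that provider's documentation, since not every API exposes both penalties.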

Spotting the ‘AI thumbprint’ in your drafts

Adjusting your frequency and presence penalties acts as a solid first line of defense, but it isn’t a total cure for the machine’s stylistic habits. Even when the model isn’t repeating the exact same word three times in a single paragraph, it can still fall into a predictable rhythmic rut. This is what many of us call the ‘AI thumbprint’: a specific set of linguistic tics that signal to a reader that no human was behind the keyboard. You’ve likely felt that nagging sense of boredom while reading a draft, even if the facts are technically correct. That’s usually because the AI is defaulting to its ‘safest’ and most common patterns.

The dramatic opening trap

You’ll often see AI start a blog post with a sweeping, overly dramatic statement. It loves to tell you that something is ‘changing the way we think’ or that we’re ‘standing at the edge of a new era.’ Honestly, most human writers don’t talk like that unless they’re writing a movie trailer. If your intro starts with a phrase like ‘In the world of’ or ‘Imagine a place where,’ you’re likely looking at a standard model template. These openings are designed to be broadly applicable, which makes them incredibly bland.

One of the most effective AI content editing tips is to simply delete the first two sentences of any AI-generated draft. Usually, the ‘real’ article starts on the third sentence where the actual information lives. By cutting the fluff, you immediately make the piece feel more grounded and less like a generic output. It’s about getting to the point before the reader has a chance to check out.

Identifying robotic connectors and flowery filler

AI has a strange obsession with certain ‘high-value’ words that it thinks make the writing sound more professional. In reality, they just make it sound like a manual. You’ll see words like ‘uncover,’ ‘transform,’ and ‘comprehensive’ scattered everywhere. While these words aren’t wrong in isolation, their over-reliance is one of the most common AI writing issues we see today. The model uses them as crutches to bridge ideas when it doesn’t have a specific, punchy transition to use instead.

| Avoid these AI-isms | Try these human alternatives |
|---|---|
| Unlocking the potential of | Using |
| A testament to | Shows that |
| Deep dive into | Explain or look at |
| At the end of the day | Ultimately or skip it |
| Navigating the complexities | Dealing with |
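
The phrase list above can be turned into a quick scanner. The sketch below only flags matches and suggests the alternative, because a blind find-and-replace would usually break the grammar of the sentence. The dictionary mirrors the table, and the function name is my own.

```python
import re

# Phrases from the table above, mapped to a suggested plainer alternative.
AI_ISMS = {
    "unlocking the potential of": "using",
    "a testament to": "shows that",
    "deep dive into": "explain or look at",
    "at the end of the day": "ultimately (or skip it)",
    "navigating the complexities": "dealing with",
}


def flag_ai_isms(text):
    """Return (position, matched phrase, suggestion) tuples, sorted by position."""
    findings = []
    for phrase, alternative in AI_ISMS.items():
        for match in re.finditer(re.escape(phrase), text, flags=re.IGNORECASE):
            findings.append((match.start(), match.group(0), alternative))
    return sorted(findings)


draft = "This guide is a testament to planning. At the end of the day, results matter."
for position, phrase, suggestion in flag_ai_isms(draft):
    print(f"char {position}: '{phrase}' -> try '{suggestion}'")
```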

And it isn’t just the words; it’s the structure. AI loves to balance its sentences perfectly, often using ‘not only’ and ‘but also’ structures or groups of three adjectives. While this sounds balanced, it also feels predictable. Real human speech is messy. We use fragments. We start sentences with ‘And’ or ‘But’ to create emphasis. We don’t always provide a balanced view in every single sentence. To improve your AI writer output quality, try breaking those long, balanced sentences into two short, punchy ones.

The problem with empty adjectives

Machines love to tell, not show. You’ll see adjectives like ‘vibrant,’ ‘dynamic,’ and ‘essential’ used to describe things that don’t actually need them. If you’re using GenWrite to handle your bulk content needs, you’ll find that the tool does a better job at sticking to the SEO facts, but you should still watch out for these ‘fluff’ words. They add to your word count without adding any actual value to the reader.

So, what’s the fix? When you spot these thumbprints, don’t just delete them; replace them with something specific. Instead of saying a process is ‘efficient,’ describe how many minutes it saves. Instead of calling a tool ‘powerful,’ list the three things it does that other tools don’t. This doesn’t always hold true for every single sentence, but the more you can swap abstract AI-speak for concrete human facts, the more your audience will trust what they’re reading. Most readers won’t know why a piece feels ‘off,’ but they’ll definitely notice when it feels right.

The danger of the ‘echo chamber’ effect

Once those overused markers start appearing, they don’t just sit there as isolated errors. They become part of the prompt for everything that follows. Large Language Models (LLMs) operate on a context window, which is essentially a short-term memory of every word already generated. If the first half of your draft is saturated with generic filler, the model perceives that filler as the established style it must emulate. It’s a technical gravity that pulls the content toward the average.

This creates a feedback loop where the AI’s previous output dictates the trajectory of its next sentence. It’s a literal echo chamber. If you’re struggling with how to fix AI loops, understanding this recursive relationship is the first step. The model isn’t just repeating words; it’s amplifying its own biases. If it started with a slightly formal tone, it might descend into a dry monologue because it interprets its own previous output as the definitive tone guide.

Logic drift and context poisoning

This isn’t just a stylistic quirk; it’s a structural failure often called logic drift. In a long-form SEO article, a model might make a minor, nuanced error in the second paragraph. Because that error is now part of its context, it treats that mistake as a factual baseline. By paragraph ten, that small deviation has been compounded into a glaring logical fallacy. The model is essentially poisoning its own well with its own mistakes.

We often see this in multi-turn generation. When you ask an AI to expand on a previous point, you’re effectively asking it to look at its own work and double down. If that work was mediocre, the expansion will be a concentrated version of that mediocrity. This is a common hurdle in SEO article writing troubleshooting because search engines reward unique insights, not recycled logic. The AI is trying to be consistent, but it ends up being consistently boring.

Managing the feedback loop

But how do we break the cycle? It requires a tool that understands how to manage context windows effectively. At GenWrite, we focus on ensuring the AI blog generator maintains a tight grip on the original intent rather than drifting into these self-referential loops. You can’t just let the model run wild; you have to provide strong, external anchors that pull the model back to the primary data points. This prevents the recursive decay that happens when a model only looks backward at its own text.

The stakes are higher than just repetitive prose. An article caught in an echo chamber loses its persuasive power and its utility for the reader. If the model is just echoing its own generic assertions, it isn’t adding value to the web. It’s just taking up space. Breaking the loop means intervening before the model’s own voice becomes its only reference point. This is why AI writer output quality depends so heavily on the initial constraints and the way the system handles the memory of what was already written.

How to use ‘Brand Bibles’ to stop the sameness

You’ve just finished reviewing ten new articles, but there’s a problem. They all sound like the same generic thought leadership piece you’ve read a thousand times. The facts are right, but the personality is missing. It’s soul-crushing. This happens because we often treat AI like a mind reader instead of a machine that needs a strict manual. When you tell a tool to ‘be professional,’ it just gives you the statistical average of its training data. That is the literal definition of generic.

AI isn’t actually uncreative. It’s just obsessed with boundaries. Without specific rules, it picks the safest, most predictable words to stay within the lines of what it thinks is ‘correct.’ To break this cycle, you have to turn your brand guidelines into a machine-readable Brand Bible. It won’t make every draft a masterpiece immediately, but it’ll save you from hours of AI content editing tips during your final review.

Translating narrative into machine logic

Most brand guides are written for people. They use phrases like ‘we are innovative but grounded,’ which mean absolutely nothing to a computer. You have to turn those feelings into binary rules. If ‘grounded’ means you hate corporate fluff, give the AI a list of banned words. I always tell people to block terms like ‘leverage’ or ‘robust.’ They’re the first things an AI grabs when it’s trying to sound authoritative, and they always sound fake.

It’s also about the physical structure of the text. I’ve found that specifying sentence length—asking the model to swing between 8 and 25 words—instantly kills that robotic drone. When you use a tool like GenWrite for optimizing AI article writers, these constraints act as guardrails. They stop the AI from falling into an echo chamber of its own bad habits.
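
One way to make those rules machine-readable is to store them as data and check drafts against them. This is a minimal sketch, not how GenWrite or any other tool works internally. The `BrandBible` class and `check_draft` function are hypothetical names, and treating the 8-to-25-word range as a hard per-sentence limit is my simplification.

```python
import re
from dataclasses import dataclass, field


@dataclass
class BrandBible:
    """Hypothetical container for voice rules a draft can be checked against."""
    banned_words: set = field(default_factory=lambda: {"leverage", "robust"})
    min_sentence_words: int = 8
    max_sentence_words: int = 25


def check_draft(text, bible):
    """Return a list of human-readable rule violations found in the draft."""
    issues = []
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    for sentence in sentences:
        words = re.findall(r"[A-Za-z'’]+", sentence)
        if not bible.min_sentence_words <= len(words) <= bible.max_sentence_words:
            issues.append(f"length {len(words)}: {sentence}")
        for word in sorted(bible.banned_words & {w.lower() for w in words}):
            issues.append(f"banned '{word}': {sentence}")
    return issues


for issue in check_draft("We leverage robust tools.", BrandBible()):
    print(issue)
```

The same rules can also be pasted into the prompt as plain instructions. The check simply tells you whether the model obeyed them.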

Building a persistent brand core

Better AI content needs a persistent knowledge base. This ‘Brand Core’ should hold your company story, your audience profile, and your specific voice. If you’re writing for senior developers, your Brand Bible should demand technical precision and ban all introductory filler. If you’re writing for a lifestyle blog, you might want a first-person perspective and a total ban on passive voice. It’s about being specific.

Why does this matter? The stakes are high for your SEO. Search engines want ‘information gain.’ They’re looking for new perspectives that aren’t already in the top ten results. If your AI is just rephrasing the internet using the same vocabulary as everyone else, you aren’t adding value. A solid Brand Bible forces the AI to look at information through your lens, making the output unique enough to satisfy both readers and the algorithm.

Practical constraints for better drafts

One of the best ways to stop the sameness is to use ‘negative constraints.’ Don’t just tell the AI what to do. Tell it what is forbidden. I keep a running list of ‘AI-isms’ that my models seem to love. If I see the words ‘tapestry’ or ‘testament’ in three articles in a row, I add them to the exclusion list immediately. It’s a simple fix that works.
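
That "three articles in a row" rule is simple enough to automate. The sketch below is an illustration under my own assumptions: articles are plain strings in publication order, and the function name and `streak` parameter are made up for the example.

```python
import re


def update_exclusion_list(articles, watchlist, exclusions, streak=3):
    """Add a watched word to the exclusions if it appears in each of the last `streak` articles."""
    updated = set(exclusions)
    recent = articles[-streak:]
    if len(recent) < streak:
        return updated  # not enough articles yet to establish a streak
    for word in watchlist:
        pattern = re.compile(rf"\b{re.escape(word)}\b", re.IGNORECASE)
        if all(pattern.search(article) for article in recent):
            updated.add(word)
    return updated


articles = [
    "A rich tapestry of ideas.",
    "The tapestry of modern search.",
    "Another tapestry here, and a testament to effort.",
]
print(update_exclusion_list(articles, {"tapestry", "testament"}, set()))
# prints: {'tapestry'}
```

Here "tapestry" shows up in all three drafts and gets banned, while "testament" appears only once and stays on the watchlist.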

Keep in mind that these rules have to change as the models evolve. What worked six months ago might not work today. Your Brand Bible should be a living document that acts as a filter. It’s the only way to make sure your content sounds like it came from a human expert rather than a generic prediction engine.

Why search engines see repetitive AI text as a red flag

Building a brand bible stops the repetition in your drafts, but the stakes are higher than just aesthetics. If you ignore these loops, your visibility dies. Search engines prioritize information gain. When an AI repeats the same phrase in three different ways, it provides zero new value.

Algorithms today are sophisticated enough to measure semantic similarity. They don’t just look for exact keyword matches; they map the meaning of your sentences. If your entire second half is just a rephrased version of your first half, the page is flagged as thin content. This is a common hurdle in SEO article writing troubleshooting. You think you’re hitting the word count, but you’re actually just inflating a balloon with no air. It doesn’t always result in an immediate ban, but it’s a slow death for your rankings.
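
You can run a crude version of this check on your own drafts. To be clear about the limits: the sketch below measures word overlap between the two halves of an article using bag-of-words cosine similarity. It does not measure meaning, and it is not how any search engine scores pages (those methods aren't public). It is only a quick proxy for spotting a second half that reuses the vocabulary of the first.

```python
import math
import re
from collections import Counter


def word_counts(text):
    """Lowercased word frequencies for a piece of text."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine_similarity(text_a, text_b):
    """Cosine similarity of two texts' word-count vectors (0.0 to 1.0)."""
    a, b = word_counts(text_a), word_counts(text_b)
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def half_overlap(paragraphs):
    """Compare the first half of an article's paragraphs with the second half."""
    mid = len(paragraphs) // 2
    return cosine_similarity(" ".join(paragraphs[:mid]), " ".join(paragraphs[mid:]))


paragraphs = [
    "SEO tools help you rank.",
    "Ranking takes time and data.",
    "SEO tools help you rank faster.",
    "Data and time drive ranking.",
]
print(round(half_overlap(paragraphs), 2))
```

A score near 1.0 suggests the second half is mostly restating the first. Where to draw the line is a judgment call for your own content.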

I’ve seen this play out in real-time. In one instance involving a local search term like ‘SEO training Houston,’ a site flooded with 100% machine-written, repetitive content was wiped from the index. It wasn’t because it was AI. It was because it was redundant. Once that content was replaced with high-quality, edited versions that actually answered questions, it popped back into the rankings within days.

But look at how the big players do it. Sites like Bankrate use AI at scale, yet they stay at the top of the search results. Why? Because they don’t let the ‘echo chamber’ effect reach the final live page. They treat the AI as a first draft, then apply human-level scrutiny to ensure it meets high E-E-A-T standards. They know that a robot repeating itself is a sign of a logic loop, not a sign of expertise.

And that’s where the danger of low-tier tools becomes obvious. Generic writers often get stuck in a loop of ‘unlocking potential’ or ‘navigating the digital world.’ These are linguistic dead ends. Search engines see these patterns as a lack of original thought. If your content looks exactly like the five thousand other AI-generated blogs on the same topic, you have no competitive advantage. You’re just part of the noise.

So, what makes a page rank? It’s the ability to provide a unique perspective or data that doesn’t exist elsewhere. Tools like GenWrite solve this by performing deep competitor analysis before a single word is typed. By understanding what’s already out there, the system avoids repeating the same tired points and focuses on what’s missing.

Repetition is a signal of low quality. It tells the crawler that the author, whether human or silicon, didn’t have enough to say to fill the space. You can’t hide behind a high word count if those words are just echoes of each other. The reality is that search engines are getting better at identifying ‘fluff’ than most human editors.

If you’re seeing phrases repeat, don’t just delete them. Ask why the AI felt the need to repeat them. Usually, it’s because the prompt or the tool lacks the data to move the conversation forward. But you can’t afford to be lazy here. A single repetitive article can tarnish the perceived quality of your entire domain. The goal is density, not just length. Every paragraph should earn its place by introducing a new concept or a fresh angle. If it doesn’t, it’s just noise. And in the current search environment, noise gets filtered out fast.

Varying cadence: breaking the robotic rhythm

Search engines don’t just look at what you say; they look at how you say it. If your text has the rhythmic profile of a machine, it’s going to struggle to rank, no matter how many keywords you’ve packed in. You’ve likely noticed that reading raw AI text feels like listening to a metronome. It isn’t just the repetitive words that grate on the nerves; it’s the timing. When every sentence is roughly fifteen words long, your brain checks out. It’s too predictable. This lack of ‘burstiness’ is a dead giveaway for machine-generated content, and honestly, it’s a primary reason why readers bounce before finishing the first paragraph.

I’ve seen this pattern across thousands of drafts. Most NLP writing assistants are designed to predict the most likely next word, which naturally leads to a very safe, very average sentence length. Humans don’t write that way. We get excited and write long, cascading sentences that overflow with detail, only to follow them up with a short, sharp punch. That variation is what creates engagement. Without it, your content is just a flat line of information that fails to hold attention or build authority.
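
If you want a number to go with that gut feeling, you can measure how much sentence length varies in a draft. "Burstiness" has no single agreed definition, so the sketch below makes an assumption: it uses the coefficient of variation (standard deviation divided by mean) of words per sentence. The sentence splitter is deliberately naive.

```python
import re
import statistics


def sentence_lengths(text):
    """Word counts per sentence, splitting naively on ., ! and ?"""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def burstiness(text):
    """Std dev of sentence length divided by the mean; 0.0 means a perfectly flat rhythm."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


flat = "One two three four. Five six seven eight. Nine ten eleven twelve."
varied = "Stop. This sentence runs on for quite a few more words than the first one did."
print(round(burstiness(flat), 2), round(burstiness(varied), 2))
```

The flat sample scores 0.0 because every sentence has four words. Treat the score as a prompt to reread a paragraph, not as a pass or fail grade.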

The anatomy of a flatline

Think about the last time you read a truly great essay. You probably didn’t notice the sentence lengths, but they were working behind the scenes to keep you moving. A robotic draft, by contrast, often presents five sentences in a row that all start with a noun and end with a prepositional phrase. This creates a monotonous drone. It’s the linguistic equivalent of beige wallpaper. You can’t expect a reader to stay interested when the cadence never shifts.

Improving AI content quality requires you to step in and mess things up. I often tell people to look for ‘the block.’ If a paragraph looks like a perfect rectangle of text, it’s probably too rhythmic. You can break this up by turning a middle sentence into a question or by chopping a long explanation into two distinct thoughts. But don’t just make everything short. If everything is punchy, nothing is. You need the contrast between the winding path and the sudden stop to make the writing feel alive.

Practical ways to inject life

So, how do you actually fix this without spending hours on every draft? If you’re using a tool like GenWrite to handle your bulk content creation, you already have a head start on the research and structure. But the final polish should focus on that human touch. I find it helpful to read the text out loud. If you run out of breath, the sentence is too long. If you feel like a robot reading a script, the sentences are too uniform.

Try starting a sentence with a conjunction like ‘But’ or ‘And’ to change the pace. It’s a simple trick that most AI writer output quality checks miss, yet it makes the text feel significantly more conversational. You should also look for opportunities to use fragments for emphasis. They work. They break the rhythm. And they make your point stick. By intentionally varying your cadence, you aren’t just making the text more readable; you’re making it harder for search algorithms to dismiss your work as low-effort automation. The goal isn’t just to fill a page; it’s to lead a reader through an idea, and that requires a beat they actually want to follow.

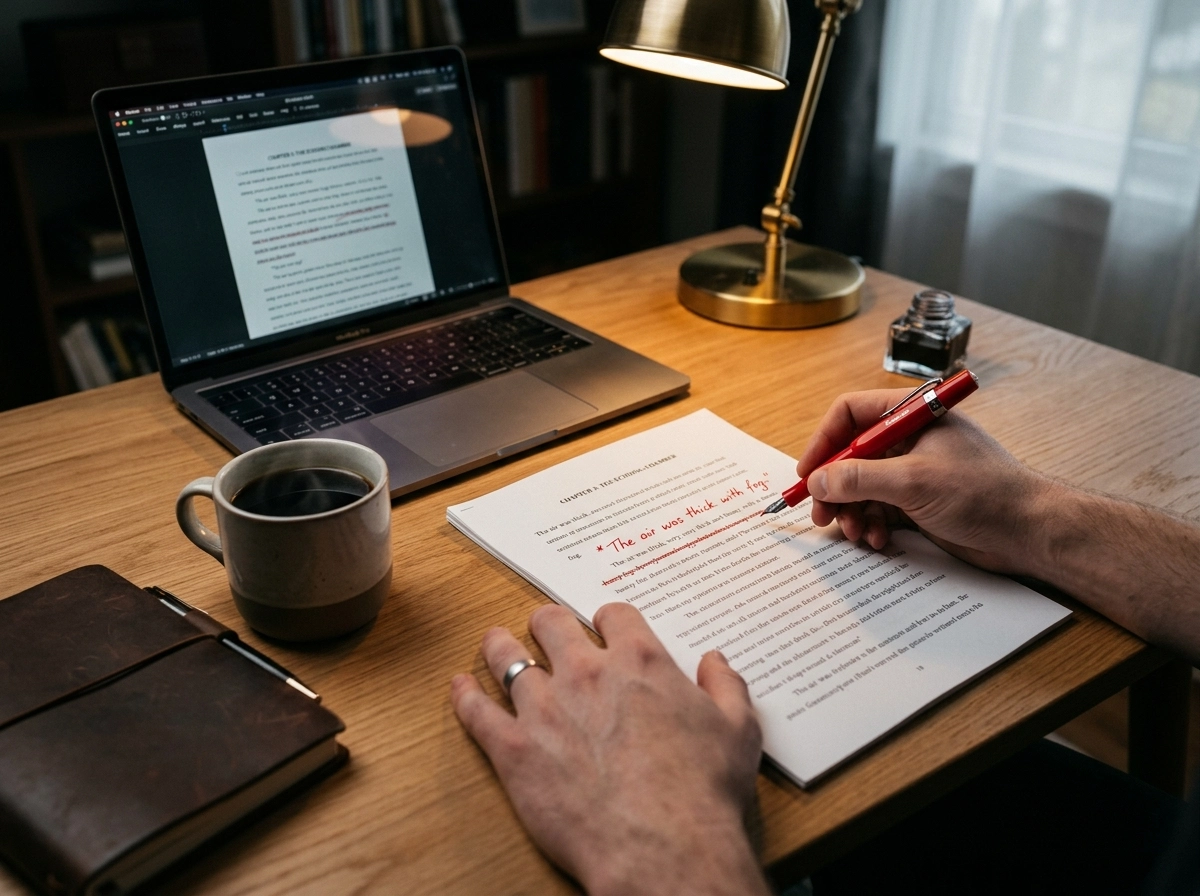

The human-in-the-loop editing workflow

Data shows human intervention cuts factual errors by 80% and boosts time-on-page. It’s not a safety net; it’s just smart. If you treat an AI writer as a replacement for human judgment, you get that bland repetition we’ve been talking about. But a human-in-the-loop (HITL) model doesn’t actually slow you down. It’s what makes an AI blog generator output something worth reading. Not every sentence needs a rewrite, but you have to keep your eyes on the screen.

The surgical workflow

A good workflow doesn’t just hand the keys to the machine. You own the strategy at the start and sign off on the nuance at the end. The machine handles the heavy lifting: drafting and research. This is how you optimize an AI article writer without falling into the trap of generic fluff. You’re the creative director, not just a proofreader.

1. Strategic intent and framing

Define the angle before the machine starts. Most AI content editing tips focus on the final draft, but the best work happens early. If you don’t pick a specific ‘enemy’ or a unique perspective, the AI defaults to a neutral, repetitive middle ground. Tell the system why this piece matters right now and exactly who it’s for.

2. The veracity audit

This is the big one. You can’t trust an LLM with a statistic or a case study. Verify every claim. Hallucinations kill brand authority. If the AI claims a 30% traffic spike, find the source or delete the line. It’s better to have fewer facts than wrong ones. Lies are the fastest way to lose the trust you’ve built.

3. Breaking the structural monotony

AI loves a predictable rhythm. It builds sections that look identical. Break that. Merge two short sections or expand a complex thought into three paragraphs. This is how you improve AI content quality. Make it feel like a conversation, not a template. Layout variety matters as much as the words.

Optimizing for voice and rhythm

Don’t get lost in technical SEO. Your readers are people. They want a voice. Tools like GenWrite are great for SEO optimization and competitor analysis, but you do the vibe check. If it sounds like it was written by a committee of tokens, it probably was. Strip the robotic veneer and replace it with something that feels earned.

Look for the ‘AI thumbprint’—those transition words and the urge to summarize every section with a tidy bow. Cut the summaries. Start paragraphs in the middle of a thought. Use a fragment for emphasis. These small edits tell readers (and Google) that a person is behind the screen. If you’re rewriting more than 20%, your prompt or your tool is the problem. You want the 5% of changes that provide 95% of the personality. That’s how you scale without losing the soul.
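If you want to check yourself against that 20% line, you can estimate how much of a draft changed during editing. This is a minimal sketch using Python's standard `difflib` module. The function name `rewrite_share` and the sample sentences are hypothetical, and word-level comparison is only one of several reasonable ways to measure an edit.

```python
import difflib


def rewrite_share(draft: str, edited: str) -> float:
    """Approximate share of words that changed between two versions (0.0 to 1.0).

    Compares the texts word by word. SequenceMatcher.ratio() is a similarity
    score, so 1 minus that score is used as the rewrite share.
    """
    matcher = difflib.SequenceMatcher(
        None, draft.split(), edited.split(), autojunk=False
    )
    return 1.0 - matcher.ratio()


# Hypothetical before and after sentences: one word out of ten was replaced.
draft = "In conclusion this tool helps you unlock better content today"
edited = "In conclusion this tool helps you write better content today"

share = rewrite_share(draft, edited)
print(f"Rewrite share: {share:.0%}")

# The 20% threshold comes from the guideline above.
if share > 0.20:
    print("Heavy rewrite: revisit the prompt or the tool.")
```

Run it on the raw draft and the published version of a few posts. If the number stays high across articles, the problem is upstream of the editor.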

Closing and escalation

Manual editing is a temporary fix for a structural problem. If you spend your day deleting the same three phrases, your workflow is broken. Effective SEO article writing troubleshooting starts with recognizing that repetition is a symptom of weak constraints. It’s not a quirk of the technology; it’s a failure of the configuration. You can’t edit your way out of a bad model setup indefinitely without burning through your time and budget.

When basic prompt tweaks fail, you must look at the parameters. Adjust the frequency penalties or the temperature settings. Most users ignore these technical levers and wonder why the output remains stale. If you’re tired of fighting the machine, use a professional AI blog generator that manages these technical nuances for you. GenWrite is designed to balance creative variance with SEO requirements, which reduces the need for constant manual cleanup. This is how to fix AI loops at the architectural level rather than just the surface level.
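To see what those levers do, it helps to look at the arithmetic. The sketch below is a toy model, not any vendor's implementation: it assumes the commonly documented formula in which a token's score drops by its count times the frequency penalty, plus a flat presence penalty once the token has appeared at all, with temperature applied afterward. The function name, token scores, and history are all made up for illustration.

```python
import math
from collections import Counter


def adjusted_probs(logits, generated, temperature=1.0,
                   frequency_penalty=0.0, presence_penalty=0.0):
    """Return next-token probabilities after penalties and temperature scaling.

    logits: dict mapping candidate token to raw score.
    generated: list of tokens already written.
    temperature must be greater than zero.
    """
    counts = Counter(generated)
    penalized = {
        tok: score
        - counts[tok] * frequency_penalty                    # grows with each repeat
        - (presence_penalty if counts[tok] > 0 else 0.0)     # flat, applied once
        for tok, score in logits.items()
    }
    scaled = {tok: score / temperature for tok, score in penalized.items()}
    peak = max(scaled.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in scaled.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}


# Hypothetical scores: the model favors 'unlock' and has already used it three times.
logits = {"unlock": 2.0, "improve": 1.5, "sharpen": 1.0}
history = ["unlock", "unlock", "unlock"]

baseline = adjusted_probs(logits, history)
tuned = adjusted_probs(logits, history,
                       frequency_penalty=0.5, presence_penalty=0.5)

for tok in logits:
    print(f"{tok}: {baseline[tok]:.2f} -> {tuned[tok]:.2f}")
```

With no penalties, the overused word stays the favorite. With them, its score falls each time it appears, so alternatives get a real chance. Raising the temperature flattens the whole distribution instead of targeting repeats, which is why the two settings are usually tuned together.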

Persistent repetition usually signals that your content is drifting toward generic patterns. This happens when your style guidelines are too vague. Tighten the rules. If the AI keeps using words like ‘unlock’ or ‘synergy,’ explicitly forbid them in your instructions. An effective AI writing repetition fix isn’t a one-time change. It’s a continuous cycle of reviewing drafts, identifying the machine’s favorite crutches, and updating your instruction set to block them. This isn’t about being picky; it’s about maintaining a unique brand voice.
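That review cycle can be partly automated. As a rough sketch, the helpers below (hypothetical names, simple ASCII word matching, not a GenWrite feature) scan a batch of drafts for three-word phrases that keep coming back and turn a ban list into an instruction block you can paste into your style guide.

```python
import re
from collections import Counter


def repeated_phrases(drafts, n=3, min_count=2):
    """Return n-word phrases that occur at least min_count times across all drafts."""
    counts = Counter()
    for draft in drafts:
        # Lowercase and keep only ASCII letters and apostrophes (a simplification).
        words = re.findall(r"[a-z']+", draft.lower())
        counts.update(
            " ".join(words[i:i + n]) for i in range(len(words) - n + 1)
        )
    return [phrase for phrase, count in counts.most_common() if count >= min_count]


def style_rules(banned):
    """Format a ban list as plain-text instructions for the prompt."""
    lines = ["Do not use any of these words or phrases:"]
    lines += [f"- {phrase}" for phrase in banned]
    return "\n".join(lines)


# Hypothetical drafts for illustration.
drafts = [
    "Unlock the power of content today.",
    "You can unlock the power of SEO.",
]

crutches = repeated_phrases(drafts)
print(crutches)
print(style_rules(["unlock", "synergy"] + crutches))
```

Rerun it on each new batch and append whatever surfaces. The list will grow for a while and then level off as the model runs out of favorite crutches.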

Move away from ‘tool’ thinking. Stop looking for a magic prompt that solves everything. Build a durable system instead. A system includes deep keyword research, competitor analysis, and specific style guides that evolve with every published post. If you treat the AI as a standalone writer, it will always default to the path of least resistance: the common phrase. High-quality content requires you to push the model into uncomfortable, specific territory.

The stakes for your organic reach are high. Search engines prioritize original, high-value text. If your blog is a mirror of every other AI-generated page on the internet, you won’t rank. The future of content belongs to those who master the system, not those who just hit ‘generate’ and hope for the best. What will your next iteration look like?

Stop settling for robotic, repetitive drafts. GenWrite handles the technical tuning and prompt engineering for you, so your content stays fresh and engaging.

People also ask

Why does my AI writer keep using the same transition phrases?

It’s because the model is a next-token predictor that defaults to statistically common language. When you don’t give it specific stylistic constraints, it picks the safest, most probable words, which often results in those tired, generic transitions.

How do I stop my AI from repeating the same topic in every paragraph?

You should try increasing the presence penalty in your settings. It penalizes any token that has already appeared in the text, which nudges the model toward new topics rather than circling back to what it just wrote. That makes it a lifesaver for long-form articles.

Does repetitive AI text hurt my SEO rankings?

Yes, it can. Search engines look for unique value and semantic variety, so if your content is filled with repetitive loops, it often gets flagged as low-quality or thin content, which doesn’t help your site’s authority.

Can better prompts actually fix AI output loops?

Absolutely. If your prompt is too vague, the AI has to guess the structure, which usually leads to repetitive patterns. Giving it a specific ‘brand bible’ or a clear list of forbidden phrases forces it to break out of those robotic habits.