When seo friendly content generators miss the mark — and how to pivot

The gap between ‘optimized’ and ‘authoritative’

We’ve all seen that flatline on the analytics dashboard. You put out a 2,000-word guide that checks every technical box and scores a 95 on your seo content optimization tool. But three weeks later? Nothing. Traffic is stagnant and the bounce rate is stuck at 88%. It’s a tough pill to swallow, but just hitting keywords isn’t the same as proving you’re an authority.

When teams lean too hard on basic automation, they often get pages that are technically perfect but totally hollow. A standard seo friendly content generator can hit your TF-IDF targets with ease. But if the result reads like a dry encyclopedia entry, readers won’t stick around. We saw this firsthand while tracking an SEO content generator tool over a 30-day cycle. Automation alone usually leads to a quick drop-off because it ignores the actual human problem behind the search.

That’s the gap between being “optimized” and actually being authoritative.

Moving past surface-level metrics

We recently worked with a B2B SaaS client who spent six months churning out “what is” articles using various seo ai tools. Their vanity metrics looked okay at first, but discovery calls didn’t move. They were winning the click but losing the audience. The fix wasn’t just writing more words. It was a complete strategy overhaul. They traded high-volume fluff for guides that solved specific pain points, focusing entirely on search intent alignment.

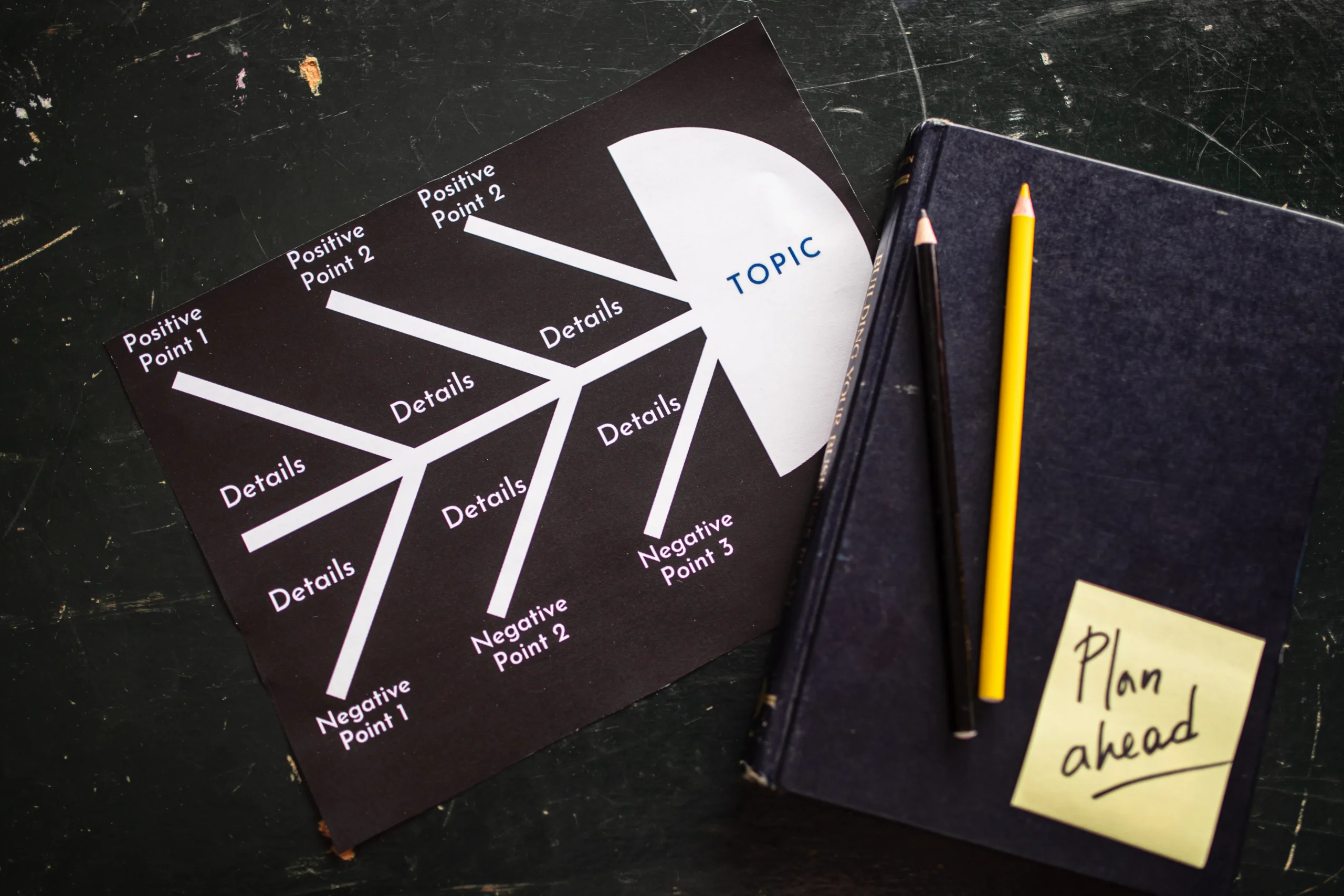

If you want to make that shift, your workflow has to change. Modern content writing isn’t about one-off posts anymore. It’s about topical clusters with hub pages. You need deep ai keyword research to see how topics actually connect, followed by smart content structure and internal linking to prove your expertise to both users and Google.

Rethinking the production line

This doesn’t mean you should ditch automation. At GenWrite, we see teams scale their work every day. The trick is using AI to handle the heavy lifting, not the thinking. When you use an automated seo blog writer, let it manage the automated on-page seo writing and the formatting. Your job is to add the perspective a machine can’t fake.

You can still use keyword-driven blog writing to grab attention. But that first draft is just a starting point. Real seo optimization for blogs means answering the unspoken questions your reader brings to the page. Look at the health niche. If a site targets big keywords but skips the expert-backed details, the algorithm will eventually dump them for a site that actually helps people.

Why the ‘one-click’ blog post is a ranking liability

Hitting “generate” and publishing the raw output feels efficient. But it creates a specific type of technical debt: content bloat. Search algorithms actively identify and demote unoriginal, low-effort text. We see this constantly when teams rely on a basic ai seo article writer without injecting human judgment or structural editing. Volume simply does not equal visibility anymore.

Consider a user searching for “best SEO tools 2025.” They want a sharp, comparative analysis to make a buying decision. A one-click draft usually ignores this entirely. It spits out a dictionary definition of search engine optimization, follows up with a generic list, and completely misses the searcher’s actual intent. You can’t fix this foundational error with a quick meta tag generator after the fact. The core architecture of the post is fundamentally flawed.

Then there is the structural fingerprint. Raw AI output follows a rigid, exhausted pattern: an introduction, five perfectly symmetrical subheadings, a bulleted list, and a tidy conclusion. Human readers recognize this cadence instantly. So do spam filters. When you check AI content across thousands of raw outputs, the structural redundancy becomes glaringly obvious. It signals a lack of original perspective, practically begging search engines to ignore the page.

Thin content isn’t just about a low word count. It is about a severe lack of original data. Large language models predict the next likely word based on existing information. They do not conduct original research. If you just copy and paste, you are publishing average consensus. To break out of this echo chamber, you have to humanize AI text by injecting proprietary data, actual case studies, or contrarian viewpoints. Otherwise, you are just adding to the noise.

The fix isn’t to abandon automation. Manual drafting is often too slow for modern marketing demands. Instead, the strategy must shift from raw generation to guided orchestration. This is where an AI writing assistant for marketers proves its worth. By using a specialized AI writing tool, you can automate the heavy lifting, like scraping keywords from competitor URLs, while reserving human energy for strategic framing and tone adjustments.

We built GenWrite specifically to address this execution gap. A high-performing agency marketing workflow requires more than just a text spinner. It needs a system that handles end-to-end blog creation, from analyzing search intent to structuring the final piece, without sacrificing depth. You can even pull insights from complex documents using a PDF AI analyzer to feed the generator original, dense material rather than relying on its base training data.

The evidence here is straightforward, though algorithmic penalties do vary depending on the specific niche. Sites publishing unedited, one-click articles often see temporary traffic spikes followed by sharp algorithmic demotions. The path forward requires using AI as a research and structuring engine, not a final-draft vending machine. It is about building intent-focused SEO strategies where automation serves the content, rather than the content serving the automation.

Paying the hallucination tax (and how to avoid it)

Imagine you’ve just hit ‘publish’ on a massive Ottawa travel guide. Traffic starts climbing. Then, the local news calls because your ‘must-see’ landmark is actually a community food bank. It sounds like a joke, but a tech giant actually lived this nightmare. They didn’t just post thin content; they posted a confident lie that wrecked their credibility.

We’ve already talked about how unoriginal data kills your search performance. But publishing outright falsehoods? That’s a whole different level of risk. Large language models don’t actually ‘know’ facts. They just guess the next logical word in a sequence. Sometimes they guess wrong and invent a court case or a company policy out of thin air.

This is the hallucination tax. A New York lawyer paid it when he cited fake legal precedents generated by a chatbot. If you don’t have a human checking the work, you’ll eventually pay it too.

I see teams treat AI like a magic oracle rather than a probabilistic drafting tool. Relying blindly on raw output is a massive gamble. We built GenWrite to handle the heavy lifting of bulk blog generation and competitor research, giving you the structure you need to scale. But even with the best models, you still need a workflow to fix ai content before it goes live.

The stakes are real. An airline recently had to pay damages after its chatbot invented a bereavement fare policy on the fly. Cross-checking facts against real sources isn’t just a good idea; it’s a non-negotiable part of your pipeline. You can’t afford to publish unchecked claims.

Building a verification workflow

So, how do you stay fast without being reckless? You bake a checklist into your editorial process. Any time the AI spits out a statistic, a quote, or a date, flag it. Rigorous ai writing correction takes a few extra minutes, but it saves you from months of reputation management.

You should also verify the structure. Even if you use an AI SEO content generator to build FAQ sections, the answers have to be factually sound. One trick is to ground the AI in reality. For example, using a YouTube video summarizer to turn a webinar into an article is much safer because the model is forced to stick to your specific transcript.

Finding the balance between speed and oversight isn’t always easy. We don’t know if these models will ever be 100% accurate. But if you treat factual accuracy as one of your core seo ranking tips, you’ll build authority that actually survives a manual review.

From creator to strategist: the workflow pivot

Retroactively fixing hallucinations is a defensive move. If you’re spending hours line-editing LLM output just to ensure the software didn’t invent its own statistics, you’re still treating the tool like a primary author. That workflow is broken. The real pivot requires stepping away from the cursor and repositioning yourself as a strategist. You use AI as a high-speed data processor, not a ghostwriter.

Creators stare at blank pages; strategists manage systems. Instead of throwing lazy prompts at a model—like asking it to “act as an SEO expert”—a strategist sets hard constraints. You feed the system raw transcripts from internal experts and tell it to extract the core arguments. You map the semantic entities your competitors ignore to find topical gaps. By employing AI for keyword research and intent analysis, you build a technical framework before a single paragraph is drafted. This shift from writing to directing changes the unit economics of the entire operation.

This is where purpose-built automation matters. When you integrate an AI generator like GenWrite into your pipeline, the system handles the mechanical execution. It structures headers, embeds internal links, and processes competitor data at scale. But the human operator still dictates the ranking strategy. You aren’t just clicking a button and hoping for a reward. You review the architecture and inject the proprietary insights that algorithms can’t simulate. If you look at GenWrite pricing and its feature tiers, the value isn’t just bulk generation. It’s the workflow compression that lets your team focus on high-level strategy.

This transition isn’t always seamless. Optimizing AI drafts requires a cynical editorial hand. Left alone, LLMs default to safe, mathematically average syntax. They smooth out the friction and contrarian takes that make technical writing interesting to human practitioners.

Your daily job shifts. You no longer draft from scratch. Instead, you put the tension back into the machine-generated text. You replace generic scenarios with messy, real-world client outcomes. You strip out the introductory fluff. We aren’t trying to bypass human involvement, because fully autonomous publishing rarely wins in competitive SERPs. The goal is to move the human intervention point further downstream. Let the machine compile the research and format the HTML. You step in to ensure the final piece actually says something worth reading.

Engineering for citability in the age of AI search

Shifting from a high-volume creator to a strategic editor means rethinking who your reader actually is. Increasingly, your first reader is a large language model. Content structured with original data tables and clear, modular summaries gets cited 4.1x more often in AI-driven search results. That number forces a complete rethink of how we format information online. Generative Engine Optimization requires making your arguments so cleanly structured that an algorithm can extract and credit them without hallucinating the surrounding context.

If an AI engine can’t easily parse your thesis, it bypasses your page for a competitor who organized their insights better. The models hunt for definitive, easily categorizable answers. When you publish a dense wall of text, you force the system to guess your main point. Breaking concepts down into distinct listicles, direct comparisons, and clearly labeled documentation feeds directly into what these systems prefer to cite. You can rely on standard on-page seo tools to audit basic headings, or add Schema.org markup to feed the machine structured data. But the core logic relies entirely on human clarity.

Proprietary data is the ultimate moat in this environment. An AI model can summarize general knowledge instantly. It can’t invent your internal metrics or customer outcomes. One SaaS deployment generated 20 new trial signups a month purely from ChatGPT referencing their original research tables. They didn’t win by writing longer posts. They won by providing structured, unique numbers that the model needed to answer user prompts.

At GenWrite, we designed our platform around this specific structural reality. Generating bulk text is easy enough, but formatting it so that search algorithms actually recognize its authority takes deliberate engineering. We handle the background keyword research and competitor analysis automatically. That frees you up to inject the proprietary data and unique viewpoints that LLMs actively seek out. You stop fighting the algorithm and start supplying it with exactly what it needs to build a response.

Of course, this approach doesn’t guarantee immediate visibility across every single niche. Highly saturated markets still require significant domain authority to break through the noise. And accuracy remains completely non-negotiable. Algorithms are getting highly aggressive about penalizing unsubstantiated claims. Understanding how unverified AI content hurts SEO forces publishing teams to prioritize rigorous fact-checking over raw publishing volume. A model will drop your site from its citation pool the moment it detects conflicting or hallucinated data within your structural blocks.

You have to build for citability from the ground up. This means abandoning the bloated introductions and repetitive conclusions that used to dominate legacy seo ranking tips. Get straight to the point. Frame your insights in a way that allows an AI to lift a complete, accurate thought in a single pass. Instead of writing paragraphs of prose, consider whether a simple data table serves the user better. Any modern ranking strategy treats the AI search engine as an overworked researcher looking for the fastest, most reliable citation available. Your job is simply to be the easiest source to quote.

Does your content answer the query in 10 seconds?

We just covered why modular clarity helps you get cited in this new era of generative search. But honestly? None of that structure matters if you bury the lede. Think about your own search habits. When you click a link, how long do you stick around if the first paragraph is a rambling history lesson? You bounce. You have about 10 seconds to prove you actually have the answer.

This is exactly where raw AI outputs usually fall flat on their face. They love to start with broad, sweeping generalizations. You know the ones. They start with something vague about how fast the digital world is moving. No one cares. If a user is searching for how to fix a leaky pipe, they want the wrench size and the first step. They definitely do not want a thesis on the evolution of modern plumbing. When you are optimizing ai drafts for publication, your first job is to mercilessly chop that fluff. Seriously, just highlight the first hundred words and hit backspace.

Instead, you need absolute, ruthless search intent alignment. Give them the direct answer right out of the gate. Opening paragraphs that directly address the core query get picked up for AI-driven citations about 67% more often than standard intros. It just makes sense. Try dropping a concise, two-sentence summary or a bolded TL;DR block at the very top of the page. It feeds the exact ‘what’ or ‘how’ to both the impatient human reader and the crawling bot before anyone even touches the scroll wheel.

This is exactly why I lean heavily on GenWrite for my production workflow. It automates the heavy lifting of keyword research, competitor analysis, and drafting the core structure. But even with a highly capable AI blog generator handling the bulk of the work, I always spot-check that very first block of text. Automation gets the page built and formatted quickly, but you still need to ensure that opening hook hits the searcher’s immediate need. Granted, this direct-answer strategy doesn’t always hold users on the page for five minutes if the query is super simple, but it helps secure the initial ranking.

You really do not need a massive stack of expensive on-page seo tools to tell you if your intro actually works. Just read it out loud to yourself. Does it resolve the user’s core question before you have to take your second breath? If it doesn’t, rewrite it entirely. Stop teasing the answer and just give it away. The readers who want the deep dive will keep scrolling anyway.

Topical clusters vs. keyword stuffing 2.0

Nailing the 10-second snippet gets you the initial click. But retaining search engine trust across an entire domain requires architectural integrity, not just localized optimization. The industry moved away from cramming exact-match phrases into paragraphs a decade ago. Now we face a mutation: keyword stuffing 2.0.

This modern iteration occurs when marketing teams use an AI blog generator to spin up forty isolated pages targeting semantic variations of the exact same intent. It looks like high-volume production. It functions like spam. Search algorithms parse entity relationships, not just raw text strings. Pumping out disjointed articles on “best running shoes,” “top shoes for running,” and “running footwear guide” triggers aggressive keyword cannibalization. Multiple URLs on your domain end up competing in the index for the identical query. Your crawl budget burns out on redundant nodes, diluting the overall authority of the site.
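To make the cannibalization check concrete, here is a minimal Python sketch that compares the target queries of existing pages and flags pairs that reduce to the same core terms. The `MODIFIERS` list, the 0.8 threshold, and the URLs are illustrative assumptions, not a description of how any search engine or tool scores overlap.

```python
from itertools import combinations

# Hypothetical list of modifier words that rarely change the search intent.
MODIFIERS = {"best", "top", "guide", "for", "the", "a", "to", "of"}


def intent_tokens(query: str) -> frozenset[str]:
    """Lowercase a target query and drop modifier words."""
    return frozenset(w for w in query.lower().split() if w not in MODIFIERS)


def find_overlaps(pages: dict[str, str], threshold: float = 0.8):
    """Return (url_a, url_b, score) for page pairs whose targets overlap.

    `pages` maps a URL to its target query. The score is the Jaccard
    similarity of the two token sets; the threshold is an assumption.
    """
    flagged = []
    for (url_a, q_a), (url_b, q_b) in combinations(pages.items(), 2):
        a, b = intent_tokens(q_a), intent_tokens(q_b)
        if not a or not b:
            continue
        score = len(a & b) / len(a | b)
        if score >= threshold:
            flagged.append((url_a, url_b, round(score, 2)))
    return flagged


pages = {
    "/best-running-shoes": "best running shoes",
    "/top-shoes-for-running": "top shoes for running",
    "/running-footwear-guide": "running footwear guide",
}
overlaps = find_overlaps(pages)
```

With plain token matching, only the first two pages are flagged. Catching "footwear" as a variant of "shoes" would need a synonym map or embeddings, which this sketch deliberately leaves out.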

Architecting the pillar-cluster model

Topical authority requires mapping content to how search engines actually parse entities. The pillar-cluster model aligns perfectly with this structural reality. You establish one comprehensive pillar page covering the core subject entity. Then you deploy specific cluster pages to target distinct, non-overlapping subtopics.

A health site shifting from keyword-centric production to a strict topical authority model often sees massive organic traffic gains. But this doesn’t always hold true if the internal linking architecture breaks down. Links are the literal connective tissue of your topical cluster. A cluster page without a bidirectional link to its pillar is an algorithmic dead end. It isolates PageRank. It fails to signal categorical expertise to the crawler.
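The bidirectional-link rule is easy to audit once you have a crawl export. The sketch below assumes a hypothetical `links` mapping of each URL to the set of internal URLs it links out to; how you produce that mapping (crawler, CMS export) is up to you.

```python
def missing_links(pillar: str, clusters: list[str], links: dict[str, set[str]]):
    """Return cluster URLs that lack a link to or from the pillar.

    `links` maps a page URL to the set of internal URLs it links to.
    The result maps each problem cluster URL to a list of issues.
    """
    problems = {}
    for url in clusters:
        issues = []
        if pillar not in links.get(url, set()):
            issues.append("cluster does not link to pillar")
        if url not in links.get(pillar, set()):
            issues.append("pillar does not link to cluster")
        if issues:
            problems[url] = issues
    return problems


# Illustrative data only; the URLs are made up.
links = {
    "/running-shoes": {"/running-shoes/trail", "/running-shoes/sizing"},
    "/running-shoes/trail": {"/running-shoes"},
    "/running-shoes/sizing": set(),
    "/running-shoes/care": {"/running-shoes"},
}
report = missing_links(
    "/running-shoes",
    ["/running-shoes/trail", "/running-shoes/sizing", "/running-shoes/care"],
    links,
)
```

An empty result means every cluster page is linked in both directions; anything else is a page to fix before publishing more.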

One of the most overlooked seo ranking tips involves the distribution of anchor text within these clusters. If every cluster page links back to the pillar using the exact same anchor phrase, you risk triggering an over-optimization filter. You need semantic variance. Link back using secondary keywords, long-tail variations, and natural phrasing. Any effective ranking strategy demands this level of tight, varied interlinking.
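A quick way to spot anchor-text monotony is to measure how much of the inbound anchor text is one phrase. This is a rough sketch; the 50% ceiling is an assumption chosen for illustration, not a documented search engine threshold.

```python
from collections import Counter


def anchor_report(anchors: list[str], max_share: float = 0.5) -> dict:
    """Summarize how concentrated the anchor text pointing at one page is.

    `anchors` is the list of anchor phrases used by cluster pages when
    linking to the pillar. `max_share` is an assumed ceiling.
    """
    if not anchors:
        raise ValueError("no anchors to analyze")
    counts = Counter(a.strip().lower() for a in anchors)
    top_anchor, top_count = counts.most_common(1)[0]
    share = top_count / len(anchors)
    return {
        "top_anchor": top_anchor,
        "share": share,
        "over_concentrated": share > max_share,
    }


result = anchor_report([
    "running shoes guide",
    "Running shoes guide",
    "running shoes guide",
    "how to pick trail shoes",
])
```

Here three of four links use the same phrase, so the report flags the cluster as over-concentrated.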

Automation with structural intent

Executing this at scale requires shifting your operational focus. When we developed GenWrite, the goal was to automate the blog creation process while respecting these architectural rules. You can run competitor analysis to identify missing cluster topics, then deploy bulk blog generation to fill those specific gaps. The AI handles the drafting, keyword research, and internal link mapping, keeping the cluster tightly bound.

But sheer velocity introduces new failure points. Generating cluster pages blindly without verifying the underlying data creates compounding errors across your site architecture. If you skip manual verification, poorly managed AI outputs can trigger algorithmic penalties that tank the entire cluster’s visibility. Fact-checking remains a mandatory human layer.

Real content strategy improvement means treating your site as a relational database. Every new post must answer a specific sub-query while linking back to the broader entity. Stop trying to force-rank individual URLs through localized keyword density. Instead, map out the entire user journey. When a user searches for a broad term, they need the pillar. When they search for a highly specific technical application, they need the cluster. Start building a dense, interconnected web of topical coverage that serves both the crawler and the human. That is how you dominate modern search.

The ‘human-in-the-loop’ editing checklist

Building topical authority through intelligent clustering sets the architectural foundation. But a flawless site structure won’t save a page that reads like a predictive text algorithm on autopilot. The workflow shift from primary author to strategist demands a rigorous final filter. We rely on GenWrite to handle the heavy lifting of bulk blog generation, deep competitor analysis, and initial drafting. It establishes a highly optimized baseline. Yet, publishing raw, unedited output remains a massive ranking liability. You need a structured protocol to fix AI content before it hits your production server.

The empirical verification pass

The primary risk in any automated workflow is the hallucination tax. LLMs predict the next statistically plausible token. They do not natively verify truth. Start your audit by isolating every empirical claim, specific metric, and historical reference. Cross-check these data points against primary sources. If an AI draft cites a 47% conversion increase or names a specific technical whitepaper, trace it back to the origin URL. This step is strictly non-negotiable for YMYL (Your Money or Your Life) topics. An unverified claim in finance or health triggers severe algorithmic quality flags.
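Isolating the empirical claims can be partly mechanized. The following sketch uses regular expressions to list percentages, multipliers, years, and quoted passages so an editor can trace each one to a source. The patterns are assumptions and will miss claims phrased in words; the script only flags, it does not verify anything.

```python
import re

# Assumed patterns for claim types worth tracing back to a primary source.
CLAIM_PATTERNS = {
    "percentage": re.compile(r"\b\d+(?:\.\d+)?%"),
    "multiplier": re.compile(r"\b\d+(?:\.\d+)?x\b"),
    "year": re.compile(r"\b(?:19|20)\d{2}\b"),
    "quote": re.compile(r'"[^"]{10,}"'),
}


def flag_claims(text: str) -> list[tuple[int, str, str]]:
    """Return (line_number, claim_type, matched_text) for every match."""
    flags = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for kind, pattern in CLAIM_PATTERNS.items():
            for match in pattern.finditer(line):
                flags.append((lineno, kind, match.group()))
    return flags


draft = "Conversions rose 47% in 2023.\nTables were cited 4.1x more often."
flags = flag_claims(draft)
```

Each flagged item becomes a row in the editor's checklist: find the origin URL or cut the claim.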

Stripping algorithmic syntax

Algorithmic text relies heavily on specific lexical patterns. Words like “unleash,” “discover,” “navigate,” or “realm” frequently signal a machine-generated draft. Strip these out immediately. Effective AI writing correction goes beyond swapping vocabulary. It requires breaking the monotonous syntactic rhythm. LLMs default to uniform sentence lengths and predictable transitional clauses. Fragment sentences deliberately. Remove formulaic connectors entirely. If a paragraph starts with a sweeping generalization about the digital age, delete it. Force the opening sentence to deliver the core thesis immediately.
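Both checks in this pass, the vocabulary scan and the rhythm check, can be roughed out in a few lines. The word stems come from the examples above; the sentence splitter is naive and the spread metric is only an assumed proxy for "monotonous rhythm".

```python
import re
import statistics

# Stems of the words named above; extend the list for your own style guide.
FLAGGED = re.compile(r"\b(unleash|discover|navigat|realm)\w*", re.IGNORECASE)


def flagged_words(text: str) -> list[str]:
    """Return every word in the text that starts with a flagged stem."""
    return [m.group().lower() for m in FLAGGED.finditer(text)]


def sentence_length_spread(text: str) -> float:
    """Population standard deviation of sentence lengths, in words.

    A value near zero suggests uniform sentence lengths. Splitting on
    terminal punctuation is a simplification.
    """
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    lengths = [len(s.split()) for s in sentences]
    return statistics.pstdev(lengths) if len(lengths) > 1 else 0.0


hits = flagged_words("Unleash your potential while navigating the realm of SEO.")
```

Stem matching produces false positives (a "discovery call" is a legitimate phrase), so treat the output as a review list rather than an automatic delete list.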

Intent-mapping the header hierarchy

Search engines map heading tags directly to user query intent. Vague or overly clever subheadings break this mapping and dilute topical relevance. Scan your H3s and H4s in the draft. They must explicitly describe the underlying paragraph’s tactical value. “Navigating the future” tells the crawler absolutely nothing. “Configuring your server crawl rate limits” provides exact contextual relevance. Optimizing AI drafts means forcing every structural element, right down to the DOM level, to work for the specific search intent.

Validating internal link architecture

Automated drafts often force internal links into awkward phrasing. During your review, check the anchor text distribution. Are the links descriptive, or do they rely on generic prompts? An effective editor ensures that every injected link naturally supports the surrounding context. If a tool suggests a link to a pricing page, verify that the user’s journey at that exact moment actually warrants a commercial transition.

Injecting proprietary data density

An LLM aggregates existing knowledge from its training data. It cannot generate lived experience. To elevate the content above the baseline, you must inject proprietary data, internal case studies, or specific troubleshooting anecdotes. If the draft explains how a software deployment works, insert a brief account of a specific edge case your team encountered during a recent cloud migration. Name the actual tools you used. This unique information density separates a generic overview from an authoritative, citable resource.

This protocol admittedly introduces friction. Applying human oversight takes time, and honestly, the impact varies heavily depending on the editor’s actual domain expertise. A junior editor might miss nuanced technical errors that a senior engineer would catch instantly. But this friction is entirely intentional. While AI content automation accelerates the research, assembly, and formatting phases, the human-in-the-loop checklist provides the final layer of editorial governance. It converts an optimized string of text into a trusted asset.

Where most teams get stuck: the over-optimization trap

That editing checklist gets the draft clean. But many teams take it too far. They fixate on green lights in their SEO plugins. They cram exact-match phrases into every single paragraph. The result is the uncanny valley of search copy. It looks technically perfect to a machine, but actual humans hate reading it.

An seo friendly content generator gives you a solid foundation. It shouldn’t be an excuse to abandon readability. When marketers run aggressive ai writing correction passes just to hit an arbitrary keyword density, the copy breaks. Sentences become bloated. The rhythm dies completely. You end up with shallow doorway pages disguised as thought leadership. Search engines penalize this behavior aggressively. They spot robotic phrasing instantly. We know that relying on repetitive AI fluff hurts your SEO far more than having slightly lower keyword repetition.

Look at the history of search penalties. Brands have vanished from page one overnight for unnatural optimization schemes. The tactics change over time, but the penalty remains brutal. Today’s version is keyword stuffing 2.0. Writers force semantic variants into sentences where they simply do not belong.

Stop optimizing for algorithms from a decade ago. Focus entirely on search intent alignment. If a user searches for a quick definition, give them the definition immediately. Do not bury it under three paragraphs of historical context they didn’t ask for. If the intent is transactional, get straight to the product details and pricing. Over-optimization usually happens when teams try to force massive, informational content structures into a purely transactional query. It never works.

This is exactly why we built GenWrite. We automate the heavy lifting (keyword research, competitor analysis, and bulk blog generation) so you can focus on actual strategy. GenWrite handles the structural SEO baseline natively. You don’t need to manually inject a target phrase 15 times to rank. Our platform aligns with modern LLM and search guidelines from the start, preventing the urge to over-engineer the text.

Good SEO feels invisible. When readers notice your optimization, you’ve failed completely. Cut the robotic phrasing. Drop the forced synonyms. Write directly to the user’s actual problem. The reality is, not every page needs 2,000 words to rank. If the content feels stiff, it is stiff. Delete the filler and just start over.

Building a site architecture that AI models can parse

Fixing robotic phrasing stops you from triggering spam filters, but it doesn’t guarantee visibility. Surface-level readability means nothing if the underlying site architecture remains an opaque mess to a crawler. AI models don’t read pages like humans do. They parse node relationships, extract entities, and map semantic distances. So, shifting your ranking strategy requires building a machine-readable map that tells an LLM exactly what you know and how your data connects.

Large language models and AI-driven search engines rely heavily on explicit data structuring. JSON-LD schema markup acts as the literal translation layer. Instead of hoping a model infers that a specific URL is a software review rather than an informational guide, schema explicitly defines the entity. You declare a Schema.org type such as TechArticle, SoftwareApplication, or FAQPage in a JSON-LD script block in the page’s HTML. This removes the inference tax. When crawlers hit the page, they can immediately categorize the content type, the author entity, and the primary subject matter.
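As a concrete illustration, here is a small Python sketch that builds FAQPage markup using the Schema.org `Question` and `Answer` types and wraps it in a JSON-LD script tag. The helper names and the sample question are hypothetical; this is not how GenWrite or any particular CMS generates its markup.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


def as_script_tag(jsonld: str) -> str:
    """Wrap JSON-LD for embedding in HTML.

    Escaping "</" keeps a stray closing tag in the content from ending
    the script block early; "\\/" is still valid JSON.
    """
    safe = jsonld.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{safe}\n</script>'


markup = faq_jsonld([
    ("What is a pillar page?", "A page that covers a core topic broadly."),
])
tag = as_script_tag(markup)
```

Run the output through a structured data validator before shipping, since a syntactically valid block can still fail a search engine's eligibility rules.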

Doing this manually across hundreds of URLs scales poorly. That’s exactly where an AI blog generator like GenWrite proves its utility. GenWrite automates the backend formatting, ensuring the content it produces automatically aligns with search engine guidelines by structuring the necessary markup during generation. But even automated systems need a logical, site-wide hierarchy to hook into.

Look at how data-heavy enterprise platforms segment their information. Scale AI isolates industry-specific pathways (automotive, defense, healthcare) into distinct structural silos. Labelbox organizes its architecture by educational depth, cleanly separating use cases from technical documentation. This isn’t just for user navigation. It creates strict semantic boundaries for AI crawlers. When an LLM parses these sites, the URL structure itself provides immediate context.

Breadcrumbs act as the connective tissue in this environment. They map the exact parent-child relationship of every page, feeding the crawler a clear taxonomic tree. Keep the hierarchy flat. If a crawler has to dig five levels deep to find core feature pages, the semantic weight of that content drops sharply.
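The sketch below shows both halves of that advice: generating `BreadcrumbList` markup from a page's trail, and flagging URLs that sit too deep in the hierarchy. The depth limit of four is an assumption picked so that the five-level case above is flagged; the example domain is a placeholder.

```python
import json
from urllib.parse import urlparse

MAX_DEPTH = 4  # assumed limit; tune it to your own site


def breadcrumb_jsonld(trail: list[tuple[str, str]]) -> str:
    """Build BreadcrumbList JSON-LD from (name, url) pairs, root first."""
    data = {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": i, "name": name, "item": url}
            for i, (name, url) in enumerate(trail, start=1)
        ],
    }
    return json.dumps(data, indent=2)


def path_depth(url: str) -> int:
    """Count the non-empty path segments in a URL."""
    return len([seg for seg in urlparse(url).path.split("/") if seg])


def too_deep(urls: list[str], max_depth: int = MAX_DEPTH) -> list[str]:
    """Return the URLs whose path depth exceeds the limit."""
    return [u for u in urls if path_depth(u) > max_depth]


crumbs = breadcrumb_jsonld([
    ("Home", "https://example.com/"),
    ("Features", "https://example.com/features/"),
])
deep = too_deep([
    "https://example.com/a/b/c/d/e",
    "https://example.com/features",
])
```

URL depth is only a proxy for click depth, so pair this with a crawl that measures how many links a page is from the homepage.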

Will perfect schema guarantee a top spot in generative search summaries? Honestly, the evidence here is mixed. Sometimes models still hallucinate connections despite flawless JSON-LD. Yet ignoring these technical baselines is a fast track to obscurity. If you want reliable seo ranking tips, you have to treat inaccurate AI content as a structural failure, not just an editorial one. Bad architecture feeds bad model outputs.

Many modern on-page seo tools have shifted their focus from keyword frequency toward entity validation. They audit your DOM to check that the semantic HTML tags, like <article>, <aside>, and <nav>, align with the injected schema. A messy DOM tree confuses parsers. Semantic HTML combined with clean JSON-LD makes it more likely that when an AI model chunks your site for a Retrieval-Augmented Generation (RAG) database, it extracts the context you intended.
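You can run a basic version of this audit yourself with Python's standard-library HTML parser. The sketch counts semantic tags and reads the `@type` of each JSON-LD block; the tag list and the `StructureAudit` class are illustrative assumptions, and commercial tools check far more than this.

```python
import json
from html.parser import HTMLParser


class StructureAudit(HTMLParser):
    """Count semantic tags and collect the @type of each JSON-LD block."""

    SEMANTIC = {"article", "aside", "nav", "main", "header", "footer"}

    def __init__(self):
        super().__init__()
        self.semantic_counts: dict[str, int] = {}
        self.jsonld_types: list = []
        self._in_jsonld = False
        self._buffer: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SEMANTIC:
            self.semantic_counts[tag] = self.semantic_counts.get(tag, 0) + 1
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                block = json.loads("".join(self._buffer))
            except json.JSONDecodeError:
                self.jsonld_types.append("INVALID")
                return
            if isinstance(block, dict):
                self.jsonld_types.append(block.get("@type", "UNKNOWN"))


html = (
    "<nav></nav><article><p>Hi</p></article>"
    '<script type="application/ld+json">{"@type": "TechArticle"}</script>'
)
audit = StructureAudit()
audit.feed(html)
```

A page that declares `TechArticle` in its schema but has no `<article>` element in `semantic_counts` is the kind of mismatch worth reviewing by hand.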

Should you delete your low-performing AI backlog?

We just spent time making sure LLMs can parse your site architecture. But what happens when the bots crawl through those perfectly structured schemas only to find a graveyard of mediocre, thin articles?

You know the ones I mean. The massive batch of generic posts you spun up six months ago when everyone thought raw volume was the only game in town. Are you really going to let those zombie pages drag down your entire domain?

Keeping them around isn’t a neutral decision. It actively hurts you. Every time search crawlers hit your site, they operate on a strict budget. If they waste that crawl budget parsing hundreds of low-performing pages that offer zero unique value, they aren’t indexing your best work. This is exactly where a serious content strategy improvement becomes non-negotiable. You have to stop the bleeding before it tanks your overall quality score.

So, do you hit the delete button? Honestly, the answer is usually yes.

I know it stings to delete things you spent time or money generating. But pruning thin pages tells search algorithms you actually care about quality control. Of course, this doesn’t always hold true for every single page. Sometimes, you can salvage a cluster of weak posts by consolidating them into one massive, authoritative pillar guide. You take five 300-word articles that get zero traffic, combine the core concepts, add real insights, and redirect the old URLs to the new master guide. You kill the dead weight, and your site authority starts to recover.

If you want to fix ai content that missed the mark, you need a proactive ranking strategy, not a reactive one. This is why I rely on GenWrite to handle content automation properly from day one. Instead of just blasting out raw text that you’ll have to delete later, GenWrite integrates competitor analysis and keyword research before a single sentence is drafted. It handles the heavy lifting, like smart link building and automatic image addition, so the output actually aligns with search engine guidelines. You get the scale of an AI blog generator without the hangover of a toxic backlog.

If you leave that legacy backlog untouched, you are essentially telling Google that your baseline for quality is the floor. Clean house. Audit your analytics right now. If a post hasn’t seen a human visitor in 90 days, provides no original data, and is structurally thin, kill it. Merge it if there is a tiny spark of value. But whatever you do, don’t just leave it sitting there rotting your domain authority from the inside out.
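Here is a rough sketch of that audit rule in Python, in case you want to run it across an analytics export. Everything in it is an assumption for illustration: the `Page` fields, the `has_salvageable_idea` flag (a judgment call a human editor sets), and the 300-word cutoff for "structurally thin" are hypothetical stand-ins, not values from any particular tool.

```python
from dataclasses import dataclass

# Assumed cutoff for "structurally thin"; tune it to your own content.
THIN_WORD_COUNT = 300


@dataclass
class Page:
    url: str
    visits_last_90_days: int
    has_original_data: bool
    word_count: int
    has_salvageable_idea: bool  # set by an editor, not by a metric


def triage(page: Page) -> str:
    """Return 'keep', 'merge', or 'delete' for a single page."""
    is_dead = page.visits_last_90_days == 0
    is_thin = page.word_count <= THIN_WORD_COUNT
    if is_dead and is_thin and not page.has_original_data:
        # A spark of value earns a merge; otherwise the page goes.
        return "merge" if page.has_salvageable_idea else "delete"
    return "keep"
```

A page only gets flagged when all three conditions hold at once, which keeps the rule conservative: a thin post that still draws visitors, or a quiet post with original data, stays put.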

The final verdict: win by becoming a cited source

Deleting your dead backlog clears the board, but now you have to play offense. Out-writing AI is a losing game because the models synthesize text faster than you ever will. Your new ranking strategy relies entirely on citation authority. AI models do not invent data. They aggregate it. You win by becoming the exact source they are forced to reference.

Generative search engines look for proprietary insights. They scrape the web for original case studies, raw survey data, and first-hand experience. If your page just remixes the top ten search results, it offers zero net-new information to the ecosystem. Relying on raw, unedited generation without adding your own expertise is exactly how AI content hurts SEO in 2025. The models ignore derivative pages and link directly to the primary source.

Recency drives relevance in this environment. Content updated within the last 30 days earns up to 3.2x more citations in AI-driven search results. Stale pages die quickly. You cannot afford a slow, manual production pipeline when the algorithms demand constant freshness. This reality requires an aggressive cycle of research, optimization, and iteration.

This is where a proper AI blog generator belongs in your daily workflow. GenWrite automates the baseline mechanics by handling structural SEO optimization, competitor analysis, and the initial drafting process. It builds the framework so you do not have to start from a blank page. That automation buys you valuable time to inject the specific failure states and unique data that algorithms crave.

A genuine content strategy improvement separates the mechanical tasks from the creative ones. Let the machine do the heavy lifting for structure while you focus on insight. Forget chasing outdated SEO ranking tips like keyword density or arbitrary word count targets. They simply do not matter anymore. Build modular, highly structured arguments that provide definitive answers to complex queries.

The search environment will keep shifting. The major platforms will constantly change their interfaces and delivery mechanisms. But the foundational rule of information retrieval remains static. Systems need original inputs to function properly. Stop acting like a basic synthesizer and start acting like the definitive origin point for your industry’s data. If you want to survive the next major algorithm update, you have to feed the machine something it cannot generate on its own.

If you’re tired of manually fixing robotic AI drafts, GenWrite handles the research and SEO optimization for you so you can focus on adding the human expertise that actually ranks.

Frequently Asked Questions

Does Google actually penalize AI-generated content?

Google doesn’t penalize content just because it’s AI-made. They care about whether the content is helpful, original, and demonstrates real-world experience. If you’re just scraping the web for generic info, that’s where you’ll run into trouble.

How can I make my AI drafts sound less robotic?

You’ve got to inject your own perspective. Add specific case studies, unique data points, or personal anecdotes that aren’t in the training data. If it reads like a textbook, it’s probably too stiff.

What is Generative Engine Optimization (GEO)?

GEO is all about making your content easy for AI models to parse and cite. It involves using clear headers, structured data, and direct answers to common questions. It’s the modern way to ensure your site shows up in AI-driven search results.

Is it worth deleting my old, low-performing AI blog posts?

Honestly, if they aren’t bringing in traffic and they’re just thin, low-effort pages, you’re better off pruning them. Cleaning up your site helps search engines focus on your high-quality, authoritative content.